New approach leverages AI to automate deployment of secure multi-layer data architectures, separating infrastructure from workloads while maintaining security boundaries.

Implementing secure data architectures in cloud environments has traditionally required extensive manual configuration and specialized expertise. A new approach published on the Microsoft Community Hub demonstrates how GitHub Copilot can automate the deployment of Medallion Architecture patterns on Azure Databricks, reducing implementation time while keeping the security controls of a manual setup.

What Changed: AI-Powered Architecture Implementation

The traditional approach to implementing a Medallion Architecture on Azure Databricks involved separate, manual processes for infrastructure provisioning (typically through Terraform) and workload configuration (through Databricks Declarative Automation Bundles). This created several challenges:

- Boundary Management: Keeping infrastructure and workloads properly separated required careful coordination

- Specification Translation: Converting architectural documentation into implementation code was error-prone

- Context Preservation: Maintaining security context across different layers (bronze, silver, gold) demanded consistent configuration

The new approach introduces a GitHub Copilot skill that transforms technical specifications into production-ready code while maintaining clear separation of concerns. The skill follows a strict contract:

- Extract architecture details from technical documentation

- Generate Terraform code exclusively for infrastructure concerns

- Create Databricks bundle files only for workload definitions

- Leave placeholders for environment-specific values

This contract is designed to avoid a common pitfall of AI-generated code: blurring the boundary between infrastructure and application layers.
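
The last item of the contract, leaving placeholders instead of guessing, can be illustrated with a minimal Python sketch. It is not the skill's actual generator: the `{{name}}` template syntax, the `TODO_` marker format, the `render` function, and the `westeurope` value are all assumptions made for illustration, and the Terraform fragment is deliberately incomplete.

```python
import re

# Hypothetical convention: templates mark environment-specific values as {{name}}.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template_text: str, known_values: dict[str, str]) -> str:
    """Substitute known values and leave an explicit TODO marker for the rest."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name in known_values:
            return known_values[name]
        # Never guess: emit a marker a human must resolve before deployment.
        return f"TODO_{name.upper()}"

    return PLACEHOLDER.sub(substitute, template_text)


# Illustrative fragment only; a real storage account needs more arguments.
TEMPLATE = '''resource "azurerm_storage_account" "bronze" {
  name     = "{{storage_account_name}}"
  location = "{{location}}"
}
'''

if __name__ == "__main__":
    # Only the location is known here; the account name stays a TODO marker.
    print(render(TEMPLATE, {"location": "westeurope"}))
```

Because unknown values become visible markers rather than plausible-looking defaults, a later review step can find every one of them mechanically.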

Provider Comparison: Azure Databricks Implementation Advantages

When compared to alternative approaches on other cloud platforms, this implementation offers several distinct advantages:

AWS Alternative: While AWS offers similar capabilities with Glue and Lake Formation, the tight integration between Azure Databricks and Microsoft's identity management (Entra ID) provides more granular access control without additional configuration. The implementation leverages Azure's native RBAC model for storage access, reducing the attack surface compared to AWS's cross-account access patterns.

GCP Alternative: Google Cloud's Dataproc and BigQuery integration requires more manual configuration for equivalent security boundaries. The Azure implementation's use of managed identities and access connectors provides a more streamlined approach to implementing least-privilege access patterns across data layers.

Key Differentiators:

- Native Azure integration reduces configuration overhead

- Unified identity management across compute and storage

- Simplified external location configuration for Unity Catalog

- More granular RBAC support for service principals

The implementation specifically addresses the complexity of maintaining separate identities and access controls for each data layer while ensuring proper isolation between bronze (raw data), silver (processed data), and gold (curated data) layers.

Business Impact: Accelerated Deployment with Reduced Risk

Organizations adopting this approach can expect significant business benefits:

Reduced Implementation Time: The automation process can generate a complete implementation in hours rather than weeks, accelerating time-to-value for data initiatives.

Improved Consistency: By enforcing strict boundaries between infrastructure and workloads, the approach reduces configuration drift and ensures security policies are consistently applied.

Lower Expertise Requirement: Teams with moderate cloud experience can implement complex architectures without requiring specialists in both Terraform and Databricks configuration.

Enhanced Maintainability: The structured approach with clear separation makes it easier to update and modify architectures as requirements evolve.

The implementation includes a comprehensive TODO tracking system that captures all environment-specific values that require manual configuration, ensuring no critical security parameters are overlooked. This addresses a common risk in automated deployments where AI might generate plausible but incorrect values.
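
A TODO tracker of this kind could be approximated by scanning the generated files for marker comments. The sketch below is an assumption about how such a check might look, not the article's implementation: the `# TODO: ...` comment convention, the file suffixes, and the function names `find_todos` and `collect_todos` are hypothetical.

```python
import re
from pathlib import Path

# Hypothetical convention: open items are written as "TODO: note" in comments.
TODO_PATTERN = re.compile(r"\bTODO\b:?\s*(.*)")


def find_todos(text: str) -> list[tuple[int, str]]:
    """Return (line number, note) for every TODO marker in a block of text."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        match = TODO_PATTERN.search(line)
        if match:
            findings.append((lineno, match.group(1).strip()))
    return findings


def collect_todos(root: Path, suffixes=(".tf", ".yml", ".yaml")) -> dict[str, list[tuple[int, str]]]:
    """Scan generated Terraform and bundle files under root for open TODOs."""
    report = {}
    for path in sorted(root.rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            todos = find_todos(path.read_text(encoding="utf-8"))
            if todos:
                report[str(path.relative_to(root))] = todos
    return report


if __name__ == "__main__":
    # Print one line per unresolved item in the current directory tree.
    for filename, todos in collect_todos(Path(".")).items():
        for lineno, note in todos:
            print(f"{filename}:{lineno}: {note}")
```

Running a check like this in CI and failing the build while the report is non-empty is one way to make sure no placeholder reaches a production deployment.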

Implementation Process

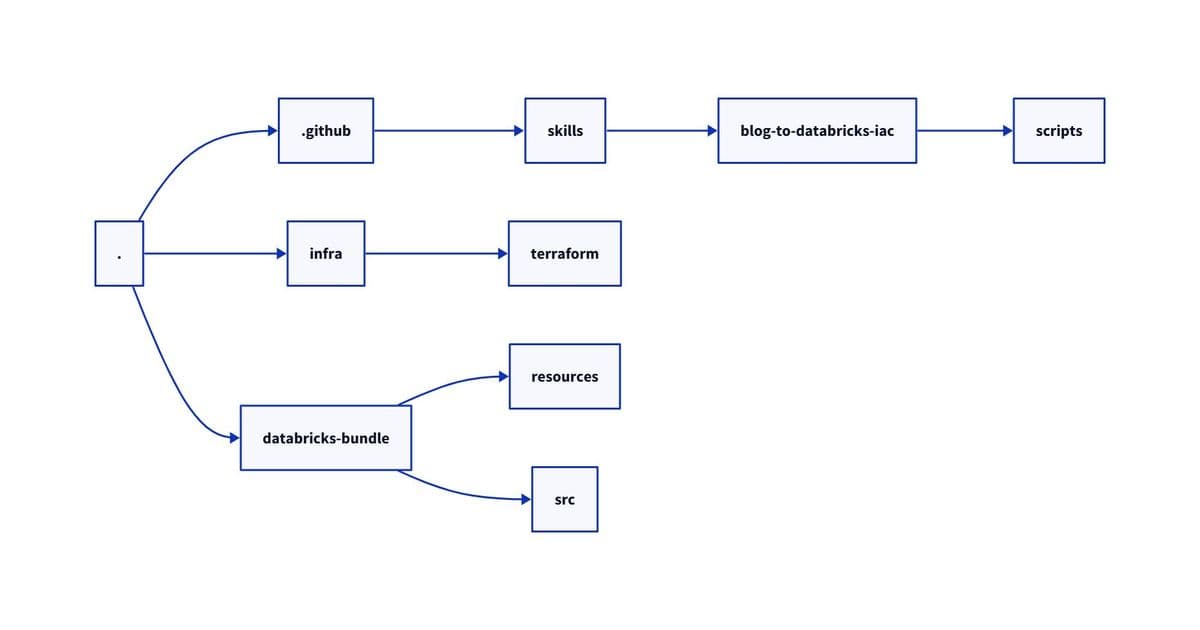

The process begins with creating a structured repository that separates the Copilot skill from generated artifacts. The skill includes several key components:

- Article Fetcher: A Python script that extracts content from technical documentation

- Analysis Engine: Maps extracted content to implementation requirements

- Generator Modules: Create Terraform and Databricks bundle files

- Output Templates: Ensure consistent formatting and structure
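
As a rough idea of what the Article Fetcher component does, the following sketch downloads a page and reduces it to visible text using only the Python standard library. The article does not show the actual script, so the class and function names, the set of skipped tags, and the timeout are assumptions.

```python
from html.parser import HTMLParser
from urllib.request import urlopen


class TextExtractor(HTMLParser):
    """Collect visible text, ignoring script, style, and page chrome."""

    SKIPPED = {"script", "style", "nav", "footer"}  # assumed list of noise tags

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def extract_text(html: str) -> str:
    """Return the visible text of an HTML document, one chunk per line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


def fetch_article(url: str, timeout: float = 10.0) -> str:
    """Download a page and return its visible text."""
    with urlopen(url, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return extract_text(response.read().decode(charset, errors="replace"))
```

The extracted text would then be handed to the analysis step, which maps it to implementation requirements.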

The workflow enforces strict boundaries where Terraform handles only infrastructure concerns (resource groups, storage accounts, managed identities, access connectors), while Databricks bundles manage workloads (job definitions, cluster configurations, notebook code).
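
One way to check this boundary mechanically is to scan the generated Terraform for resource types that belong to the workload side. The sketch below is an illustration rather than part of the published skill: the `boundary_violations` function and the choice of which Databricks provider resource types count as workloads are assumptions.

```python
import re

# Matches declarations such as: resource "azurerm_storage_account" "bronze" {
RESOURCE_DECL = re.compile(r'^\s*resource\s+"([^"]+)"\s+"([^"]+)"', re.MULTILINE)

# Assumed policy: these Databricks provider resources are workloads and
# should be defined in bundle files, not in Terraform.
WORKLOAD_TYPES = {
    "databricks_job",
    "databricks_cluster",
    "databricks_notebook",
    "databricks_pipeline",
}


def boundary_violations(terraform_source: str) -> list[str]:
    """List workload resources that were declared in infrastructure code."""
    return [
        f"{resource_type}.{name}"
        for resource_type, name in RESOURCE_DECL.findall(terraform_source)
        if resource_type in WORKLOAD_TYPES
    ]


SAMPLE = '''
resource "azurerm_resource_group" "data" {}
resource "azurerm_databricks_access_connector" "bronze" {}
resource "databricks_job" "nightly" {}
'''

if __name__ == "__main__":
    print(boundary_violations(SAMPLE))
```

A regular expression is enough for a first-pass lint; a stricter check would parse the HCL properly instead of matching lines.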

Security Considerations

The implementation addresses several critical security concerns:

- Least Privilege Access: Each data layer uses its own identity (a service principal or managed identity) with restricted permissions

- Secret Management: Integration with Azure Key Vault for credential storage

- Network Isolation: Proper configuration of VNet peering and private endpoints

- Audit Trail: Comprehensive logging through Azure Monitor and Databricks audit logs
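
The least-privilege item can be made concrete with a small policy check. The rule used here (each layer's identity may write only to its own layer and read only from the layer directly upstream) is an assumed policy for illustration, not one stated in the source; the `Grant` type and `excessive_grants` function are hypothetical, while the two role names are Azure built-in roles.

```python
from dataclasses import dataclass

LAYERS = ("bronze", "silver", "gold")
WRITE_ROLE = "Storage Blob Data Contributor"
READ_ROLE = "Storage Blob Data Reader"


@dataclass(frozen=True)
class Grant:
    identity_layer: str  # layer the identity belongs to
    target_layer: str    # layer whose storage the role is assigned on
    role: str


def excessive_grants(grants: list[Grant]) -> list[Grant]:
    """Return grants that exceed the assumed least-privilege policy."""
    violations = []
    for grant in grants:
        own = LAYERS.index(grant.identity_layer)
        target = LAYERS.index(grant.target_layer)
        writes_own_layer = grant.role == WRITE_ROLE and target == own
        # Bronze has no upstream layer, so this is never true for it.
        reads_upstream = grant.role == READ_ROLE and target == own - 1
        if not (writes_own_layer or reads_upstream):
            violations.append(grant)
    return violations


if __name__ == "__main__":
    grants = [
        Grant("silver", "silver", WRITE_ROLE),
        Grant("silver", "bronze", READ_ROLE),
        Grant("gold", "bronze", WRITE_ROLE),  # gold must not write to bronze
    ]
    for grant in excessive_grants(grants):
        print("excessive:", grant)
```

Expressing the policy as data makes it possible to review role assignments per layer before they are applied.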

Future Implications

This approach represents a significant step toward AI-assisted cloud architecture implementation. As GitHub Copilot and similar tools evolve, we can expect:

- More sophisticated analysis of architectural documentation

- Better understanding of business context in technical specifications

- Enhanced validation of generated configurations against security best practices

- Integration with additional cloud providers and services

Organizations evaluating data architecture implementations should consider this approach as a way to accelerate deployment while maintaining security and compliance requirements. The separation of infrastructure and workloads provides the flexibility to modify either component without affecting the other, a critical factor for evolving data platforms.

For organizations implementing similar architectures, the key takeaway is the importance of establishing clear boundaries and contracts between different components, even when leveraging AI for code generation. This ensures that the resulting implementation maintains the security and operational characteristics required for production environments.

The complete implementation guide and code samples are available through the Microsoft Community Hub, with additional resources for GitHub Copilot skills and Databricks configuration patterns.
