groundcover describes the messy reality behind vendors' OpenTelemetry claims for GenAI, and the normalizer it built to reconcile inconsistent telemetry from multiple SDKs, frameworks, and providers.

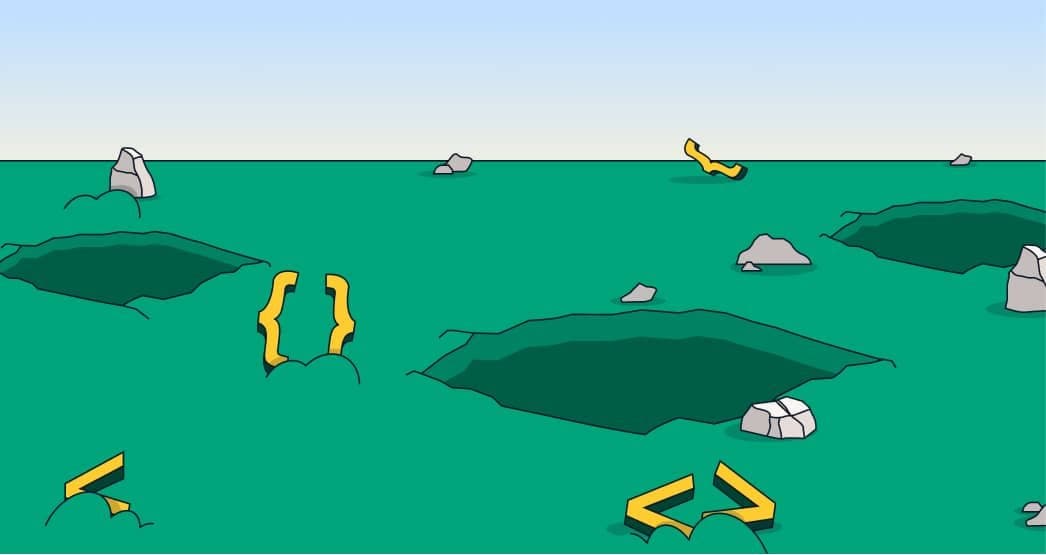

Despite the comforting narrative that OpenTelemetry has standardized GenAI telemetry, the reality is far more complex. groundcover, an observability startup, has spent weeks building a normalizer to handle the inconsistent data emitted by various GenAI SDKs, frameworks, and providers.

"Everyone says they support OpenTelemetry. However, nobody actually emits the same attributes," writes Anais Dotis from groundcover in a recent blog post. "We discovered that there are many hurdles associated with traditional instrumentation."

groundcover offers two paths for capturing GenAI telemetry: SDK instrumentation and eBPF-based interception. Both need to produce the same canonical output, regardless of whether the data comes from Traceloop, LangSmith, or raw API requests intercepted at the kernel level.

The complexity stems from three independent vectors that combine to produce GenAI telemetry:

- Instrumentation SDKs: Traceloop, LangSmith, official OTel instrumentations, or manual attributes, each emitting different attribute names and formats

- Orchestration frameworks: LangGraph, CrewAI, Pydantic AI, AutoGen, Semantic Kernel, each producing different span tree structures and metadata

- LLM providers: OpenAI, Anthropic, Bedrock, Gemini, Groq, Mistral, each with unique API formats, token semantics, and message structures

"The full matrix is SDK × Framework × Provider, and each cell can have unique parsing quirks," Dotis explains.

groundcover engineers identified four wire formats for GenAI spans and developed detection logic for each. The normalizer resolves inconsistencies through priority chains and provider-specific adjustments.
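The article does not name the four wire formats or describe the detection logic. As a minimal sketch, assuming the formats line up with the sources mentioned earlier (official OTel instrumentations, Traceloop, LangSmith, and raw payloads captured by eBPF), a detector can classify each span by marker attributes. The `WireFormat` labels, the `detect_wire_format` function, and the `traceloop.`/`langsmith.` marker prefixes are assumptions for illustration, not groundcover's code; verify the prefixes against spans your SDKs actually emit.

```python
from enum import Enum
from typing import Any, Mapping


class WireFormat(Enum):
    # Hypothetical labels: the article does not name groundcover's four formats.
    OTEL_GENAI = "otel_genai"    # official OTel instrumentations (gen_ai.* keys)
    TRACELOOP = "traceloop"      # Traceloop/OpenLLMetry spans
    LANGSMITH = "langsmith"      # LangSmith spans exported over OTLP
    RAW_PAYLOAD = "raw_payload"  # eBPF-captured request/response, no GenAI keys


# Assumed vendor marker prefixes, checked in order.
VENDOR_PREFIXES = (
    ("traceloop.", WireFormat.TRACELOOP),
    ("langsmith.", WireFormat.LANGSMITH),
)


def detect_wire_format(attributes: Mapping[str, Any]) -> WireFormat:
    """Classify a span by the attribute keys it carries (illustrative heuristic)."""
    keys = list(attributes)
    # Vendor markers come first because vendor SDKs may also set gen_ai.* keys.
    for prefix, fmt in VENDOR_PREFIXES:
        if any(key.startswith(prefix) for key in keys):
            return fmt
    if any(key.startswith("gen_ai.") for key in keys):
        return WireFormat.OTEL_GENAI
    # Nothing recognizable: treat it as a raw payload that needs body parsing.
    return WireFormat.RAW_PAYLOAD
```

The ordering is the design choice that matters here: a span carrying both a `traceloop.` marker and `gen_ai.*` keys is classified as Traceloop, whereas checking `gen_ai.` first would lump it in with the official instrumentations.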

Examples of the challenges they've addressed:

- Model identification: six different ways to report which model handled a request

- Token counting: five naming conventions for the same metric

- Token semantics: providers disagree on what "input tokens" means, so cache tokens need provider-specific handling

- Provider naming: 26 different spellings for 15 provider names
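The four challenges listed above map onto a small normalizer built from priority chains, an alias table, and one provider-specific adjustment. This is a minimal sketch, not groundcover's implementation: the attribute keys in the chains, the alias table, the canonical provider names, and the two cache-token keys are hypothetical. The one provider fact it relies on is that OpenAI's prompt-token count already includes cached tokens, while Anthropic's API reports cache reads (`cache_read_input_tokens`) and cache writes (`cache_creation_input_tokens`) separately from `input_tokens`. The sketch adds those back for Anthropic so that "input tokens" means total prompt tokens for every provider.

```python
from dataclasses import dataclass
from typing import Any, Mapping, Optional, Sequence

# Hypothetical priority chains: the first key present on the span wins.
PROVIDER_KEYS = ("gen_ai.provider.name", "gen_ai.system")
MODEL_KEYS = (
    "gen_ai.response.model",  # model that actually served the request
    "gen_ai.request.model",   # model the caller asked for
    "llm.response.model",
    "llm.request.model",
)
INPUT_TOKEN_KEYS = (
    "gen_ai.usage.input_tokens",
    "gen_ai.usage.prompt_tokens",
    "llm.usage.prompt_tokens",
)
OUTPUT_TOKEN_KEYS = (
    "gen_ai.usage.output_tokens",
    "gen_ai.usage.completion_tokens",
    "llm.usage.completion_tokens",
)
# Hypothetical keys carrying Anthropic-style cache counts.
CACHE_READ_KEY = "gen_ai.usage.cache_read_input_tokens"
CACHE_WRITE_KEY = "gen_ai.usage.cache_creation_input_tokens"

# Hypothetical alias table, keyed by the cleaned-up spelling.
PROVIDER_ALIASES = {
    "openai": "openai",
    "open_ai": "openai",
    "anthropic": "anthropic",
    "bedrock": "aws_bedrock",
    "aws_bedrock": "aws_bedrock",
    "amazon_bedrock": "aws_bedrock",
    "gemini": "gemini",
    "google_gemini": "gemini",
}

# Providers whose input-token count excludes cached prompt tokens.
CACHE_EXCLUDED_FROM_INPUT = {"anthropic"}


@dataclass
class CanonicalUsage:
    provider: Optional[str]
    model: Optional[str]
    input_tokens: int  # total prompt tokens, cached ones included
    output_tokens: int


def first_present(attrs: Mapping[str, Any], keys: Sequence[str]) -> Any:
    """Walk a priority chain and return the first non-None value."""
    for key in keys:
        value = attrs.get(key)
        if value is not None:
            return value
    return None


def normalize_provider(raw: Optional[str]) -> Optional[str]:
    """Fold spelling variants such as "OpenAI", "aws.bedrock", "Amazon Bedrock"."""
    if raw is None:
        return None
    key = raw.strip().lower()
    for sep in ("-", " ", "."):
        key = key.replace(sep, "_")
    return PROVIDER_ALIASES.get(key, key)  # unknown names pass through cleaned


def normalize(attrs: Mapping[str, Any]) -> CanonicalUsage:
    provider = normalize_provider(first_present(attrs, PROVIDER_KEYS))
    model = first_present(attrs, MODEL_KEYS)
    input_tokens = int(first_present(attrs, INPUT_TOKEN_KEYS) or 0)
    output_tokens = int(first_present(attrs, OUTPUT_TOKEN_KEYS) or 0)
    if provider in CACHE_EXCLUDED_FROM_INPUT:
        # Add cache reads and writes back so "input tokens" is comparable across providers.
        input_tokens += int(attrs.get(CACHE_READ_KEY, 0)) + int(attrs.get(CACHE_WRITE_KEY, 0))
    return CanonicalUsage(provider, model, input_tokens, output_tokens)
```

For example, an Anthropic span reporting 20 input tokens and 1,000 cache-read tokens normalizes to 1,020 input tokens, while an OpenAI span's prompt count is taken as-is. In practice, whether an SDK has already folded cache tokens into its input count varies, so a real normalizer would likely key this adjustment on the detected wire format as well as the provider.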

"There is a big difference between 'we support OTel' and 'we produce correct, unified telemetry'," Dotis concludes. "The groundcover OTel normalizer for GenAI absorbs the complexity of differing naming conventions, token counts, message payloads, provider names, and much more so DevOps teams can focus on root cause analysis."

groundcover's approach aims to make AI observability as simple as installing an eBPF sensor, regardless of the providers, frameworks, or SDKs teams choose to use.

For more details on groundcover's GenAI observability solution, visit their official website and check out the full blog post.
