Intel has posted the fourth version of its Cache Aware Scheduling patches for Linux, which aim to improve performance on modern Xeon and EPYC processors through better cache locality management.

Intel has taken another step toward improving Linux performance on modern multi-core processors with the release of the fourth version of its Cache Aware Scheduling patches. The updates, posted to the kernel mailing list yesterday, represent continued refinement of a feature that could significantly boost performance for both Intel Xeon and AMD EPYC systems by optimizing how the scheduler handles cache locality.

What Is Cache Aware Scheduling?

Cache Aware Scheduling addresses a fundamental challenge in modern computing: ensuring that tasks sharing data remain colocated within the same last level cache (LLC) domain. On processors with multiple cache domains, which are common among today's high-core-count CPUs, poor scheduling decisions can lead to cache misses and cache bouncing, where the same cache lines are repeatedly transferred between the caches of different cores or LLC domains.

The scheduler enhancement works by analyzing the cache topology and making intelligent decisions about task placement. When multiple tasks need to access the same data, the scheduler attempts to keep them on cores that share the same LLC, reducing memory latency and improving overall system performance.
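To make the idea of an LLC domain concrete, the following is a minimal userspace sketch, not part of the patch series, that groups CPUs by the cache they share at the highest level. It assumes the standard Linux sysfs cache layout (`cache/indexN/level` and `cache/indexN/shared_cpu_list` under each CPU directory); the function names `parse_cpu_list` and `llc_domains` are hypothetical helpers chosen for this illustration.

```python
from pathlib import Path


def parse_cpu_list(text):
    """Expand a kernel cpulist string such as '0-3,8-11' into a sorted list of ints."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def llc_domains(sysfs_root="/sys/devices/system/cpu"):
    """Return the distinct groups of CPUs that share a highest-level cache.

    Assumption: the highest 'level' value reported for a CPU is its LLC.
    """
    domains = set()
    for cpu_dir in Path(sysfs_root).glob("cpu[0-9]*"):
        best_level, best_shared = -1, None
        for index_dir in (cpu_dir / "cache").glob("index[0-9]*"):
            try:
                level = int((index_dir / "level").read_text())
                shared = (index_dir / "shared_cpu_list").read_text()
            except (OSError, ValueError):
                continue  # entry missing or unreadable; skip it
            if level > best_level:
                best_level, best_shared = level, shared
        if best_shared is not None:
            domains.add(tuple(parse_cpu_list(best_shared)))
    return sorted(domains)


if __name__ == "__main__":
    for i, cpus in enumerate(llc_domains()):
        print(f"LLC domain {i}: CPUs {cpus}")
```

On a machine with several LLC domains this prints one line per domain. Tasks that share data benefit when they run on CPUs listed in the same group, which is the placement the scheduler patches try to achieve automatically.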

What's New in Version 4?

The v4 patch series introduces several refinements to the original concept:

- Limited CPU scanning depth: The scheduler now restricts how deeply it scans for optimal CPU placement when NUMA balancing is enabled, reducing overhead while maintaining effectiveness

- Improved low-load handling: Changes to address load imbalance scenarios when system utilization is low

- Enhanced LLC ID management: Better tracking and utilization of last level cache identifiers

- Refined NUMA node placement: More intelligent preferred NUMA node selection for task placement

Despite these additions, the core cache aware scheduling logic remains consistent with earlier versions, focusing on maintaining cache locality while minimizing performance overhead.

Performance Impact

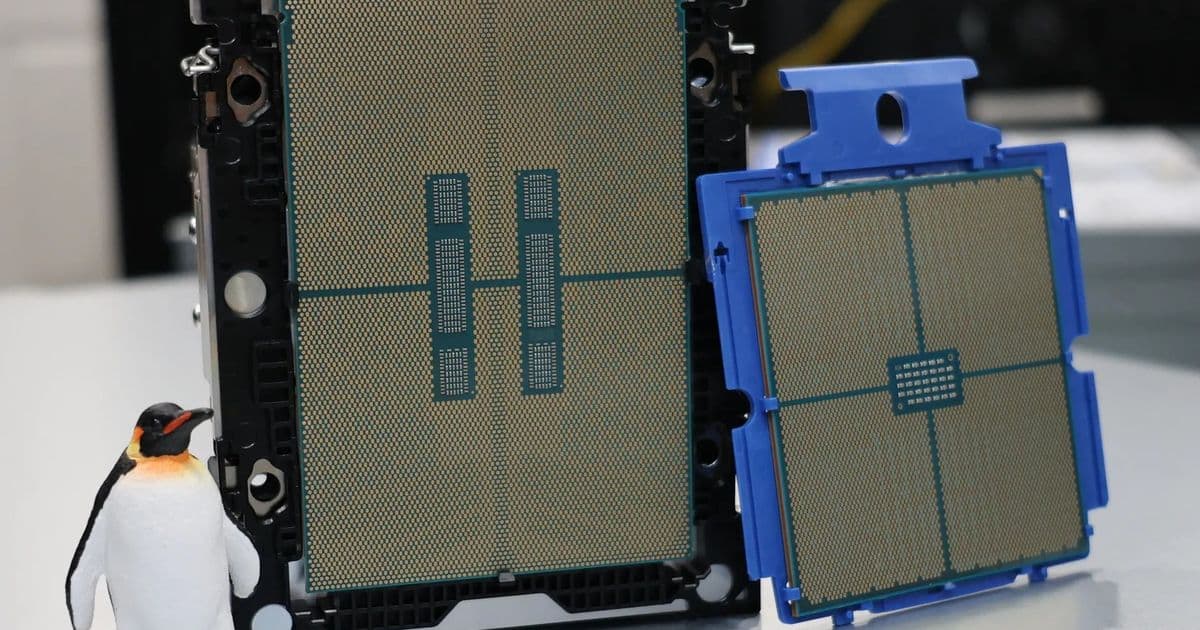

According to the patch cover letter, Intel's own benchmarks show measurable performance gains on both Intel Xeon and AMD EPYC processors. The announcement does not quote specific figures, describing the improvements only as "nice gains" across various workloads.

Independent testing of previous versions has shown similarly promising results. Testing on AMD EPYC Turin processors revealed "big potential" for performance improvements, while evaluations on Intel Xeon 6 Granite Rapids systems showed tangible performance uplifts.

The Road to Mainline

This marks the fourth iteration of the patches since Intel Linux engineers began working on the feature over a year ago. The fact that it's reached version 4 suggests active development and responsiveness to community feedback, though the patches have yet to be merged into the mainline Linux kernel.

The continued refinement indicates Intel's commitment to seeing this feature adopted upstream, where it could benefit the broader Linux ecosystem. With each revision addressing previous concerns and adding optimizations, the functionality appears to be maturing toward eventual kernel integration.

Why It Matters

For data center operators, HPC users, and anyone running Linux on modern systems with multiple cache domains, Cache Aware Scheduling could provide meaningful performance improvements without requiring application changes. The scheduler enhancement works transparently, optimizing task placement based on cache topology automatically.
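For contrast, without scheduler support the usual way to keep cooperating tasks within one cache domain is to pin them by hand. The sketch below assumes Linux and uses Python's `os.sched_getaffinity` and `os.sched_setaffinity`; the helpers `pick_domain` and `pin_to_domain` and the example domain layout are hypothetical and only illustrate the manual approach that a cache-aware scheduler would make unnecessary.

```python
import os


def pick_domain(domains, allowed):
    """Return the usable CPUs of the LLC domain that overlaps most with `allowed`.

    `domains` is an iterable of CPU-ID collections, one per LLC domain
    (hypothetical input, e.g. derived from sysfs). Returns an empty set
    if no domain contains an allowed CPU.
    """
    best = set()
    for cpus in domains:
        usable = set(cpus) & set(allowed)
        if len(usable) > len(best):
            best = usable
    return best


def pin_to_domain(domains, pid=0):
    """Restrict `pid` (0 = the calling process) to a single LLC domain. Linux only."""
    allowed = os.sched_getaffinity(pid)
    target = pick_domain(domains, allowed)
    if target:
        os.sched_setaffinity(pid, target)
    return target
```

A caller might use `pin_to_domain([(0, 1, 2, 3), (4, 5, 6, 7)])` on an assumed eight-CPU machine with two LLC domains. Static pinning like this has to be maintained per application and per machine, which is the burden a transparent scheduler feature is meant to remove.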

As processors continue to scale in core count and complexity, features like Cache Aware Scheduling become increasingly important for extracting maximum performance from hardware. The Linux kernel's scheduler is a critical component, and enhancements that improve cache utilization can have far-reaching effects on system performance.

Looking Ahead

The posting of v4 suggests Intel remains committed to upstreaming this functionality. If the development pace continues and community feedback remains positive, Cache Aware Scheduling could land in a mainline kernel release later this year.

For system administrators and performance enthusiasts running modern Xeon or EPYC systems, this feature represents an exciting development in Linux's ability to optimize itself for contemporary hardware architectures. The combination of Intel's engineering resources and the open source community's scrutiny should result in a robust implementation that benefits users across the Linux ecosystem.

Those interested in examining the technical details can find the full v4 patch series on the kernel mailing list, where it will undergo further review and discussion before potential integration into the mainline kernel.
