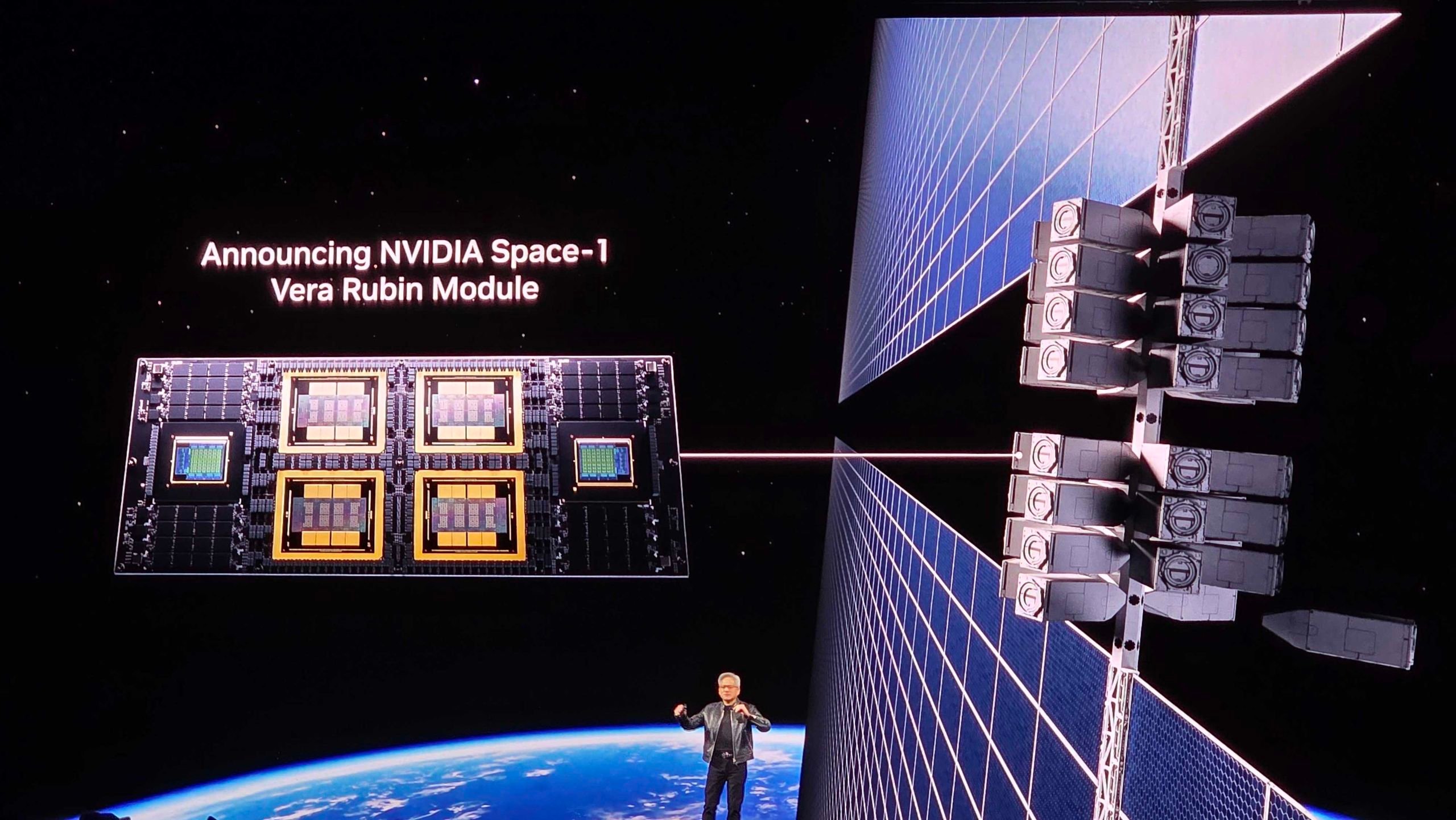

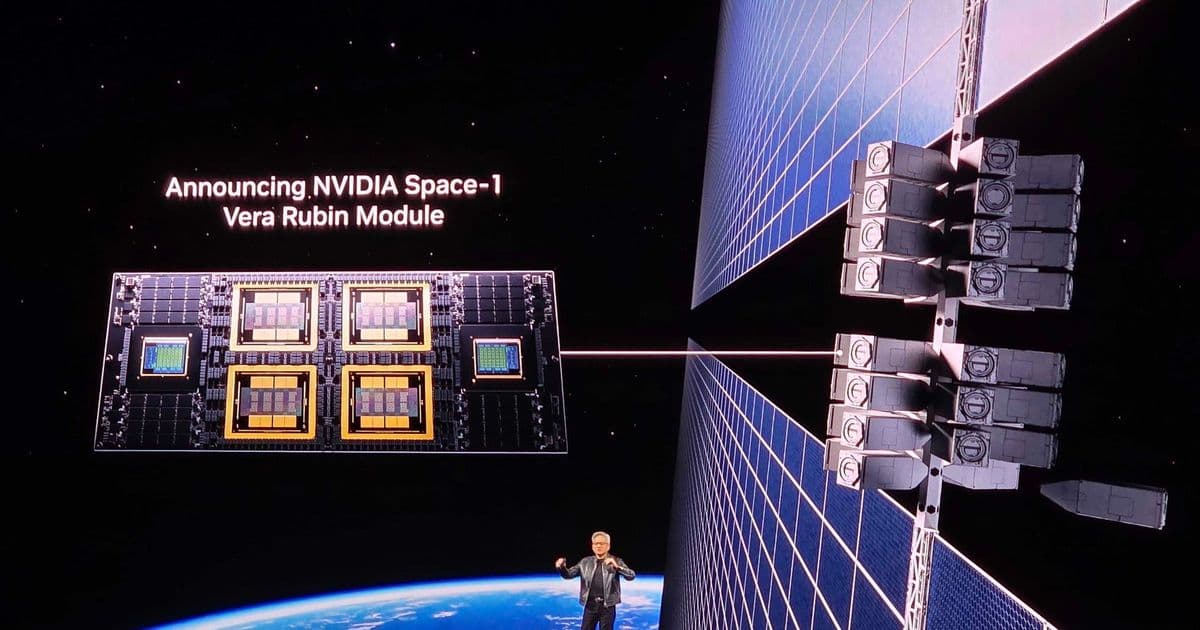

Nvidia unveils Vera Rubin Space Module with 25x H100 performance for orbital AI workloads, targeting space-based data centers and edge computing platforms.

Nvidia has unveiled its Vera Rubin Space Module at GTC 2026, claiming up to 25 times the AI compute performance of the H100 GPU for orbital inference workloads. The announcement marks a significant push into space-based computing infrastructure, with six commercial space companies already using Nvidia's platforms across orbital and ground environments.

According to Nvidia's official press release, the Vera Rubin Space Module is designed for orbital data centers running large language models and advanced foundation models directly in space. The system features a tightly integrated CPU-GPU architecture with high-bandwidth interconnect built to handle large data streams from space-based instruments in real time.

Modular Space Computing Architecture

The Vera Rubin platform sits at the top of Nvidia's space computing hierarchy, but the company has developed a complete ecosystem for orbital AI workloads:

- Vera Rubin Space Module - The flagship system, offering up to 25x H100 performance for orbital inference and designed for large-scale LLM and foundation model inference in space-based data centers.

- Nvidia IGX Thor - Targets mission-critical edge environments with support for real-time AI processing, functional safety, secure boot, and autonomous operation. This platform is built for applications requiring high reliability and security in space environments.

- Nvidia Jetson Orin - Covers the smallest form factor, targeting SWaP-constrained satellites for onboard vision, navigation, and sensor data processing. The compact module brings advanced AI capabilities to satellites where size, weight, and power (SWaP) are critical constraints.

Ground-Based Support

While pushing computing into orbit, Nvidia hasn't abandoned terrestrial infrastructure. The company has positioned the RTX PRO 6000 Blackwell Series Server Edition GPU for geospatial intelligence workloads, claiming up to a 100x performance uplift over legacy CPU-based batch processing systems when analyzing large image archives.

This ground-to-space computing strategy addresses the complete data pipeline, from satellite capture through orbital processing to Earth-based analysis.

Commercial Partnerships

Six companies are currently using Nvidia's platforms across orbital and ground environments:

- Aetherflux - Working on space-based solar power and computing integration

- Axiom Space - Developing commercial space station capabilities

- Kepler Communications - Deploying Jetson Orin across its satellite constellation for AI-driven data management

- Planet Labs PBC - Using space-based computing for Earth observation

- Sophia Space - Building orbital infrastructure solutions

- Starcloud - Already constructing purpose-designed orbital data centers

Kepler Communications' CEO Mina Mitry stated in Nvidia's press release: "Nvidia Jetson Orin brings advanced AI directly to our satellites, allowing us to intelligently manage and route data across our constellation."

The Space Computing Vision

The timing aligns with broader industry predictions about orbital data centers. Last October, Amazon and Blue Origin founder Jeff Bezos predicted that gigawatt-scale data centers in orbit were 10 to 20 years away, citing continuous solar power and the simplified cooling environment of space as primary advantages.

Starcloud is already building what it describes as purpose-designed orbital data centers aimed at running training and inference workloads in orbit, suggesting the market for space-based AI processing may develop faster than previously expected.

Availability and Market Impact

The IGX Thor, Jetson Orin, and RTX PRO 6000 Blackwell Server Edition are available now. The Vera Rubin Space Module, however, has no release date; Nvidia says only that it will be available "at a later date."

This staged rollout suggests Nvidia is taking a measured approach to space computing, building from proven edge platforms toward more ambitious orbital data center deployments.

Technical Implications

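The 25x performance claim over the H100 would be a significant leap in space computing capability. For context, the H100 delivers approximately 4 petaFLOPS of AI performance (its peak FP8 figure with sparsity), so a straight 25x multiple suggests the Vera Rubin module could offer around 100 petaFLOPS for orbital workloads. Because Nvidia's claim is an "up to" figure for inference, the real number will depend on precision and workload.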
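The arithmetic behind that estimate can be written out as a short sketch. The inputs are the approximate H100 figure and the "up to 25x" claim quoted in this article, not measured data, and the constant and function names are illustrative only.

```python
# Back-of-the-envelope estimate using the article's figures (assumptions, not benchmarks).
H100_AI_PETAFLOPS = 4.0  # approximate H100 AI throughput quoted in the article
CLAIMED_SPEEDUP = 25     # Nvidia's "up to 25x" claim, treated here as an upper bound


def implied_petaflops(baseline_pflops: float, speedup: float) -> float:
    """Scale a baseline throughput by a claimed speedup factor."""
    return baseline_pflops * speedup


estimate = implied_petaflops(H100_AI_PETAFLOPS, CLAIMED_SPEEDUP)
print(f"Implied upper bound: {estimate:.0f} petaFLOPS")
```

The result is only as good as its inputs: if the 25x figure was measured at a different numeric precision than the H100 baseline, the implied petaFLOPS number would shift accordingly.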

This performance level enables complex AI inference directly in orbit, reducing the need to transmit raw data to Earth for processing. Applications include real-time satellite image analysis, autonomous spacecraft navigation, and on-orbit data filtering for bandwidth-constrained communications links.
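To make the on-orbit filtering idea concrete, here is a minimal sketch of one possible approach: score each image tile with an onboard model, then downlink only the highest-scoring tiles that fit a per-pass byte budget. Everything in it is hypothetical (the `Tile` type, the scores, the threshold, and the greedy selection rule); it does not describe Nvidia's software or any operator's actual pipeline.

```python
from dataclasses import dataclass


@dataclass
class Tile:
    tile_id: str
    size_bytes: int
    score: float  # hypothetical onboard-model confidence that the tile is worth sending


def select_for_downlink(tiles, budget_bytes, min_score=0.5):
    """Greedily keep tiles scoring at least min_score, best first, within the byte budget."""
    selected = []
    remaining = budget_bytes
    for tile in sorted(tiles, key=lambda t: t.score, reverse=True):
        if tile.score < min_score:
            break  # every later tile scores lower, so stop here
        if tile.size_bytes <= remaining:
            selected.append(tile)
            remaining -= tile.size_bytes
    return selected


# Illustrative data only.
tiles = [
    Tile("a", 400, 0.9),
    Tile("b", 700, 0.8),
    Tile("c", 300, 0.6),
    Tile("d", 100, 0.2),
]
chosen = select_for_downlink(tiles, budget_bytes=800)
print([t.tile_id for t in chosen])  # ['a', 'c']
```

In this example tile "b" is skipped because it no longer fits after "a" is selected, and "d" is dropped for scoring below the threshold. A greedy pass is simple and cheap to run onboard, but it is not guaranteed to make the best use of the budget.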

Industry Context

The announcement comes as the space industry increasingly focuses on in-orbit servicing, manufacturing, and computing. Companies like Varda Space are already demonstrating on-orbit manufacturing capabilities, while others explore space-based solar power and communications infrastructure.

Nvidia's entry into this market with a complete hardware-software stack could accelerate the development of space-based AI applications, particularly for Earth observation, scientific research, and autonomous spacecraft operations.

The Vera Rubin Space Module represents Nvidia's most ambitious push into space computing yet, potentially enabling a new class of orbital data centers that could transform how we process and analyze information collected from space.
