OpenAI has significantly expanded its Responses API with new capabilities designed to simplify building autonomous agents, including a shell tool, an agent execution loop, a containerized workspace, context compaction, and reusable skills.

OpenAI has announced a major expansion of its Responses API, introducing a suite of new capabilities designed to make building autonomous agents more accessible and reliable for developers. The enhancements address fundamental challenges in agent development, including execution environments, context management, and task orchestration.

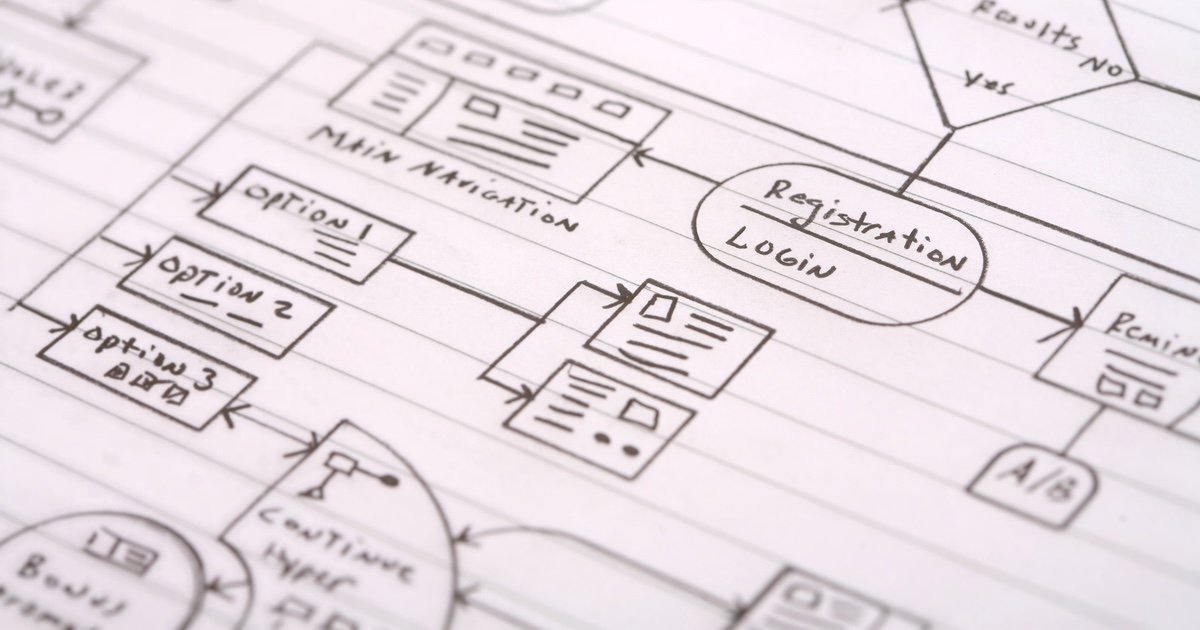

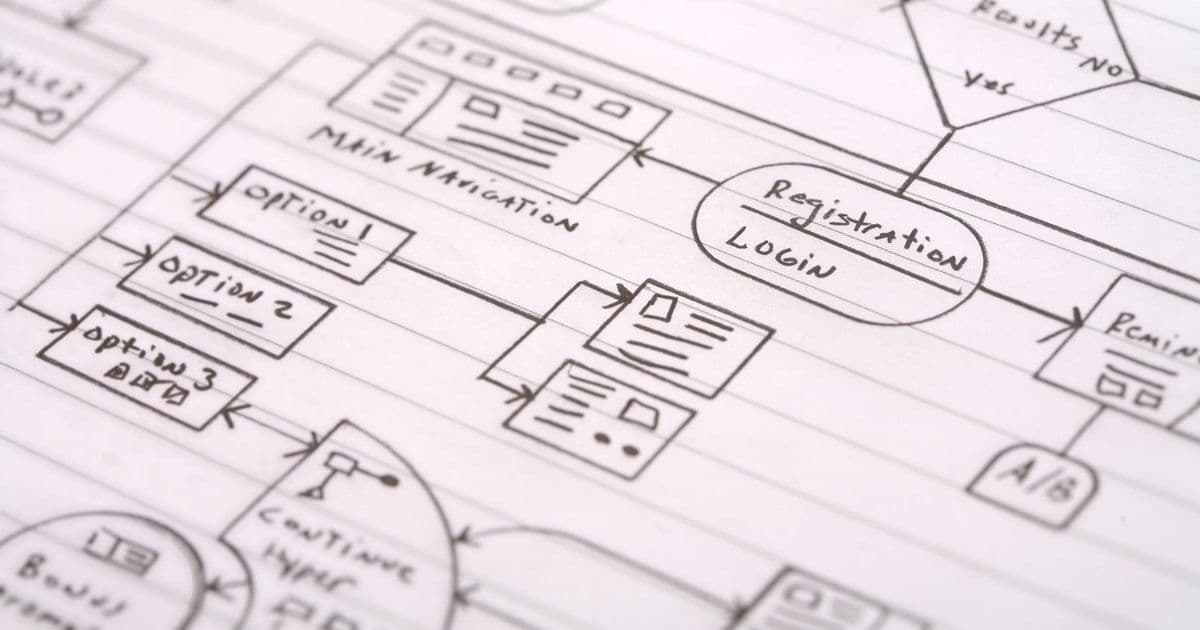

At the core of this update is the agent execution loop, which changes how agents process tasks. Instead of producing a final answer immediately, the model can now "propose" an action, such as running a command, querying data, or fetching information from the internet. The proposed action is executed in a controlled environment, and the result is fed back to the model, which then decides on its next step. The cycle repeats until the model determines the task is complete and returns its answer.
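
The propose, execute, and feed-back cycle can be sketched in a few lines of Python. This is a minimal, self-contained illustration under stated assumptions, not OpenAI's implementation: the `Action` and `FinalAnswer` types, the `run_agent` driver, and the `scripted_model` stand-in for the LLM are hypothetical names, and no OpenAI API is called.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass
class Action:
    """A step the model proposes instead of answering (hypothetical shape)."""
    tool: str
    argument: str


@dataclass
class FinalAnswer:
    """Returned by the model when it considers the task complete."""
    text: str


Step = Union[Action, FinalAnswer]


def run_agent(model: Callable[[list[str]], Step],
              tools: dict[str, Callable[[str], str]],
              task: str,
              max_steps: int = 10) -> str:
    """Propose, execute, feed back; repeat until the model returns a final answer."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        step = model(transcript)
        if isinstance(step, FinalAnswer):
            return step.text
        # Only tools registered here may run; anything else is reported back as an error.
        if step.tool not in tools:
            transcript.append(f"error: unknown tool {step.tool!r}")
            continue
        result = tools[step.tool](step.argument)
        # The observation is appended so the model sees it on its next turn.
        transcript.append(f"{step.tool}({step.argument}) -> {result}")
    raise RuntimeError("step budget exhausted without a final answer")


def scripted_model(transcript: list[str]) -> Step:
    """Hypothetical stand-in for the LLM: requests one lookup, then answers from it."""
    if len(transcript) == 1:
        return Action(tool="lookup", argument="open_tickets")
    observation = transcript[-1].split("-> ")[-1]
    return FinalAnswer(f"There are {observation} open tickets.")


if __name__ == "__main__":
    data = {"open_tickets": "7"}
    print(run_agent(scripted_model, {"lookup": lambda key: data.get(key, "unknown")}, "Count open tickets"))
```

The `max_steps` budget is a deliberate design choice: it stops a model that never converges on an answer from looping indefinitely.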

The new Shell tool marks a significant shift from OpenAI's earlier code interpreter, which could only execute Python. With the Shell tool, agents interact with a computer through the command line, with familiar Unix utilities such as grep, curl, and awk available out of the box. More importantly, agents can run programs written in other languages, such as Go and Java, and can even start Node.js servers. This flexibility considerably widens the range of use cases agents can handle.
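
To make the execute step concrete for shell commands, here is a hedged local sketch of how a model-proposed command might be run and turned into an observation for the model. It uses Python's standard `subprocess` module with `sh -c` and a timeout. The `run_shell_action` helper and its result fields are hypothetical, and unlike OpenAI's hosted container it provides no isolation, so it should not be used with untrusted commands.

```python
import subprocess


def run_shell_action(command: str, timeout_s: float = 30.0, max_output: int = 4000) -> dict:
    """Execute one model-proposed command and package the result for the model.

    Hypothetical helper: runs locally via `sh -c` with NO sandboxing.
    """
    try:
        proc = subprocess.run(
            ["sh", "-c", command],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child process on timeout; report it instead of crashing the loop.
        return {"exit_code": None, "stdout": "", "stderr": f"timed out after {timeout_s}s", "timed_out": True}
    return {
        "exit_code": proc.returncode,
        # Truncate output so a chatty command cannot flood the model's context.
        "stdout": proc.stdout[:max_output],
        "stderr": proc.stderr[:max_output],
        "timed_out": False,
    }


if __name__ == "__main__":
    # Standard Unix tools are available: sum the second column with awk.
    result = run_shell_action("printf 'a 1\\nb 2\\n' | awk '{s += $2} END {print s}'")
    print(result["exit_code"], result["stdout"].strip())
```

Returning a timeout or a non-zero exit code as data, rather than raising, lets the model see the failure and decide whether to retry or change approach.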

To support these execution capabilities, OpenAI bundles a containerized execution environment in which files and databases can reside. Network access is governed by policy controls: all outbound traffic is routed through a centralized policy layer that enforces allow-lists and access controls while keeping the traffic observable. Credentials stay outside the container and are never visible to the model; the model sees only placeholders, which the external layer replaces with the real values.
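
The policy layer's two jobs, enforcing an allow-list and swapping credential placeholders for real secrets outside the container, can be illustrated with a small sketch. The `EgressPolicy` class, the `{{secret:name}}` placeholder syntax, and the audit-log format are assumptions made for illustration; the article does not describe OpenAI's actual placeholder format or interfaces.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlsplit

# Hypothetical placeholder syntax the model sees instead of a real credential.
PLACEHOLDER = re.compile(r"\{\{secret:([A-Za-z0-9_]+)\}\}")


class PolicyViolation(Exception):
    """Raised when an outbound request breaks the egress policy."""


@dataclass
class EgressPolicy:
    allowed_hosts: set[str]
    # Real secrets live here, outside the container; the model never sees them.
    secrets: dict[str, str]
    audit_log: list[str] = field(default_factory=list)

    def prepare(self, url: str, headers: dict[str, str]) -> dict[str, str]:
        """Check the destination, log the decision, and inject real credentials."""
        host = urlsplit(url).hostname or ""
        if host not in self.allowed_hosts:
            self.audit_log.append(f"DENY {host}")
            raise PolicyViolation(f"host {host!r} is not on the allow-list")
        self.audit_log.append(f"ALLOW {host}")

        def substitute(match: re.Match) -> str:
            name = match.group(1)
            if name not in self.secrets:
                raise PolicyViolation(f"unknown secret {name!r}")
            return self.secrets[name]

        return {key: PLACEHOLDER.sub(substitute, value) for key, value in headers.items()}


if __name__ == "__main__":
    policy = EgressPolicy(allowed_hosts={"api.github.com"}, secrets={"github_token": "demo-token"})
    # The request as the sandboxed agent wrote it: only a placeholder, no secret.
    outbound = policy.prepare("https://api.github.com/user",
                              {"Authorization": "Bearer {{secret:github_token}}"})
    print(outbound["Authorization"])
```

Because substitution happens only after the allow-list check, a placeholder sent to an unapproved host is never resolved into a real credential.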

A key innovation is the introduction of "skills," which package complex, repeatable tasks into reusable building blocks. A skill is essentially a folder bundle containing a `SKILL.md` file (with metadata and instructions) plus supporting resources such as API specifications and UI assets. This modular approach lets developers compose sophisticated agent behaviors from pre-built components.
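
A loader for such a folder might look like the following. It assumes `SKILL.md` begins with a simple `key: value` metadata block delimited by `---` lines (with `name` and `description` keys), followed by free-form instructions. The `Skill` dataclass and `load_skill` function are hypothetical, and a real implementation would likely use a full YAML parser for the metadata.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Skill:
    name: str
    description: str
    instructions: str
    resources: list[str]  # supporting files, relative to the skill folder


def load_skill(folder: Path) -> Skill:
    """Read a skill folder: metadata and instructions from SKILL.md, plus its other files."""
    manifest = folder / "SKILL.md"
    lines = manifest.read_text(encoding="utf-8").splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError(f"{manifest} must start with a '---' metadata block")
    try:
        end = next(i for i in range(1, len(lines)) if lines[i].strip() == "---")
    except StopIteration:
        raise ValueError(f"{manifest} has an unterminated metadata block") from None

    # Assumed metadata format: one "key: value" pair per line.
    metadata = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            metadata[key.strip()] = value.strip()
    if "name" not in metadata:
        raise ValueError(f"{manifest} metadata is missing 'name'")

    # Everything else in the folder (API specs, UI assets, ...) is a supporting resource.
    resources = sorted(
        path.relative_to(folder).as_posix()
        for path in folder.rglob("*")
        if path.is_file() and path != manifest
    )
    return Skill(
        name=metadata["name"],
        description=metadata.get("description", ""),
        instructions="\n".join(lines[end + 1:]).strip(),
        resources=resources,
    )


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp) / "invoice-export"
        (root / "api").mkdir(parents=True)
        (root / "SKILL.md").write_text(
            "---\nname: invoice-export\ndescription: Export invoices as CSV\n---\n"
            "Call the endpoint described in api/openapi.yaml, then write a CSV.\n",
            encoding="utf-8",
        )
        (root / "api" / "openapi.yaml").write_text("openapi: 3.0.0\n", encoding="utf-8")
        skill = load_skill(root)
        print(skill.name, skill.resources)
```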

Context management has also been improved through compaction. Because long-running tasks can eventually exceed the model's context window, the system compresses earlier steps into shorter representations while preserving essential information, similar to how Codex operates. This lets agents work across many iterations without hitting token limits.
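
The article does not detail how the Responses API compacts context, so the following is only a generic sketch of the idea: once a history exceeds a token budget, older steps are folded into a single summary while the most recent steps stay verbatim. The `compact` function, the word-count `estimate_tokens` heuristic, and the `naive_summary` stub (a stand-in for a model-written summary) are all hypothetical.

```python
from typing import Callable


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def compact(history: list[str], budget: int, keep_recent: int,
            summarize: Callable[[list[str]], str]) -> list[str]:
    """If history exceeds the token budget, fold all but the newest steps into one summary."""
    total = sum(estimate_tokens(step) for step in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # nothing to do, or nothing old enough to fold
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent


def naive_summary(steps: list[str]) -> str:
    """Placeholder for a model-written summary; keeps only the count and the last result."""
    return f"[summary of {len(steps)} earlier steps; last: {steps[-1]}]"


if __name__ == "__main__":
    history = [f"step {i}: ran tests, 0 failures" for i in range(10)]
    for entry in compact(history, budget=20, keep_recent=2, summarize=naive_summary):
        print(entry)
```

Keeping the newest steps verbatim preserves the details the model is most likely to act on next; a production system would also re-check the budget after summarizing, since a summary can itself be long.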

By combining these elements (the agent execution loop, the Shell tool, the containerized runtime, skills, and compaction), developers can build agents that carry out long-running tasks from a single prompt. OpenAI says this removes the need for developers to build their own execution environments in order to run real-world tasks safely and reliably.

The new capabilities address practical challenges that all agent developers face: managing intermediate files, optimizing prompt usage, ensuring safe network access, and handling timeouts and retries. With these tools, OpenAI aims to provide a comprehensive foundation for building sophisticated autonomous agents without requiring developers to solve these infrastructure challenges from scratch.

For developers interested in exploring these new capabilities, OpenAI has published detailed documentation covering the agent execution loop, shell tool usage, skills implementation, and context compaction techniques.
