Zerocopy's new code generation tests provide concrete evidence that its safe abstractions don't compromise performance, shifting the library from faith-based optimization toward verifiable zero-cost guarantees.

Zerocopy embodies a central tension in systems programming: how do you provide safe abstractions for low-level memory manipulation without sacrificing the performance that makes such low-level control valuable in the first place? The library has long walked this tightrope, using rigorous testing, formal verification, and careful documentation to back its safety guarantees. Efficiency, the other half of its promise, has remained more of a leap of faith, resting on `#[inline(always)]` attributes and on trust that LLVM's optimizer will eliminate the abstraction overhead.

This faith-based approach to performance has become increasingly strained as Zerocopy's abstractions have grown more sophisticated. The library's internal architecture now features multiple layers of safe wrappers, with the truly dangerous operations sequestered several function calls deep in tightly scoped implementations. Click through from the documentation to the source of most methods and you'll rarely see `unsafe` used directly, a testament to the library's commitment to safety. But this architectural pattern raises an uncomfortable question: are these abstractions actually "zero cost" as claimed, or are they quietly introducing performance penalties that users never see?
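To picture the layering described above, here is a minimal, hypothetical three-layer stack in plain Rust; none of these function names come from zerocopy. Only the innermost function uses `unsafe`, the middle layer establishes its precondition with a bounds check, and every layer is marked `#[inline(always)]` on the assumption that LLVM will flatten the stack into a single length check and load. Whether it actually does is exactly the question code generation tests answer.

```rust
// Hypothetical layering for illustration only; these names are not zerocopy's API.

/// Layer 3 (innermost): the only `unsafe` in the stack.
///
/// # Safety
/// The caller must guarantee `bytes.len() >= 4`.
#[inline(always)]
unsafe fn read_u32_le_unchecked(bytes: &[u8]) -> u32 {
    // SAFETY: the caller guarantees that four bytes starting at the base
    // pointer are in bounds; `read_unaligned` accepts any alignment.
    let raw = unsafe { core::ptr::read_unaligned(bytes.as_ptr().cast::<[u8; 4]>()) };
    u32::from_le_bytes(raw)
}

/// Layer 2: establishes the safety precondition with a bounds check.
#[inline(always)]
fn try_read_u32_le(bytes: &[u8]) -> Option<u32> {
    if bytes.len() < 4 {
        return None;
    }
    // SAFETY: we just checked that `bytes.len() >= 4`.
    Some(unsafe { read_u32_le_unchecked(bytes) })
}

/// Layer 1 (public): the convenience wrapper users actually call.
#[inline(always)]
pub fn read_length_field(packet: &[u8]) -> Option<u32> {
    try_read_u32_le(packet)
}
```

Users of `read_length_field` never see `unsafe`, but they also cannot tell from the source alone whether three calls' worth of wrapping disappears after optimization.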

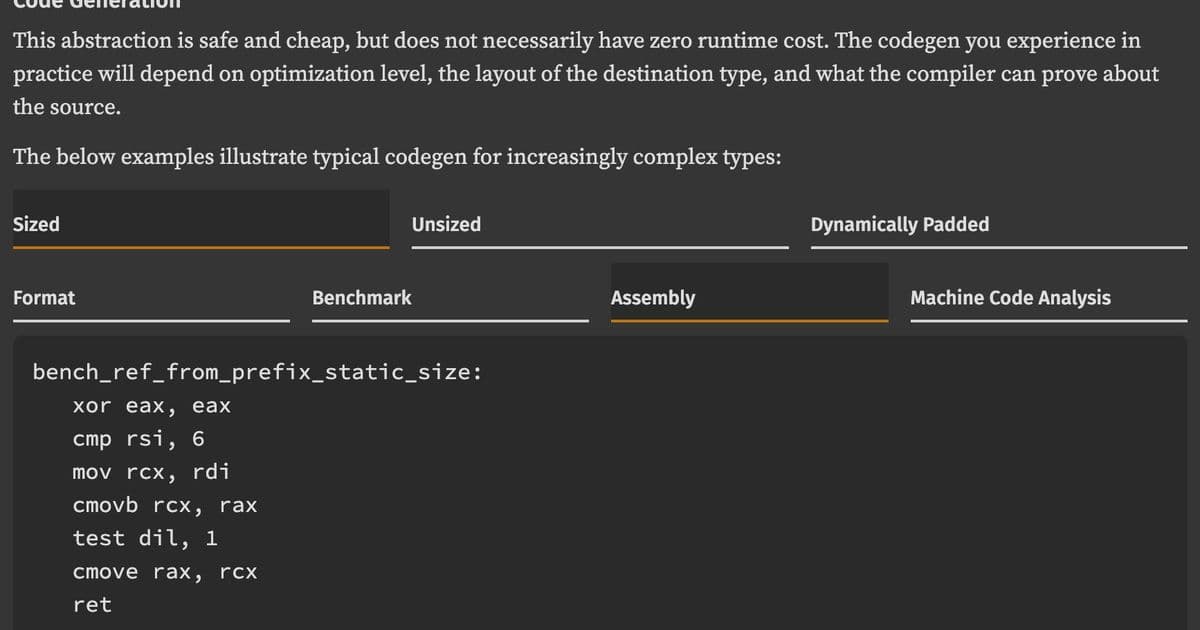

With version 0.8.42, Zerocopy has taken a significant step toward answering this question. The library has begun documenting the code generation you can expect from each routine across representative use cases. Take `FromBytes::ref_from_prefix` as an example: its documentation now includes concrete expectations about the machine code that typical usage should produce.
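To make "the machine code you'd write by hand" concrete, here is a minimal std-only sketch of what a prefix cast does for a simple byte-aligned header. `Header` and `header_from_prefix` are hypothetical names, and this is a hand-written analogue, not zerocopy's implementation. With zerocopy 0.8 one would instead derive `FromBytes`, `KnownLayout`, and `Immutable` on the type and call `Header::ref_from_prefix(bytes)`, which reports failure through a `Result` rather than an `Option`.

```rust
/// Hypothetical wire-format header. `#[repr(C)]` with only `u8`-based fields
/// means: size 4, alignment 1, no padding, and every bit pattern is valid.
#[repr(C)]
pub struct Header {
    pub version: u8,
    pub flags: u8,
    pub length_be: [u8; 2],
}

/// Hand-written analogue of a prefix cast for `Header` (not zerocopy's code):
/// split off the first `size_of::<Header>()` bytes and reinterpret them.
pub fn header_from_prefix(bytes: &[u8]) -> Option<(&Header, &[u8])> {
    const SIZE: usize = core::mem::size_of::<Header>();
    if bytes.len() < SIZE {
        return None;
    }
    let (head, rest) = bytes.split_at(SIZE);
    // SAFETY: `head` is exactly `SIZE` bytes long, `Header` has alignment 1,
    // and every byte pattern is a valid `Header`, so this cast is sound.
    let header = unsafe { &*head.as_ptr().cast::<Header>() };
    Some((header, rest))
}
```

Because `Header` has alignment 1, the only runtime check is a length comparison, so one would hope the optimized output is little more than a compare, a branch, and some pointer arithmetic. That is the kind of expectation the new documentation makes explicit.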

The mechanism behind this new transparency is equally interesting. Rather than running traditional benchmarks that measure execution time on real hardware, Zerocopy tests code generation directly. The project's `benches` directory contains a comprehensive set of microbenchmarks, but instead of executing them, the team uses `cargo-show-asm` to assert that the generated machine code matches model outputs checked into the repository.
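A codegen microbenchmark can be as small as a single non-inlined function. The sketch below is an assumption about the general shape, not a copy of zerocopy's `benches` files: `bench_length_prefix` is a hypothetical name, and it uses the standard library's `split_first_chunk` (stable since Rust 1.77) rather than a zerocopy API. Marking it `#[inline(never)]` keeps it a standalone symbol, so the assembly `cargo-show-asm` prints for it reflects exactly what the optimizer did with the body.

```rust
/// Hypothetical codegen benchmark for "read a big-endian u16 length prefix".
/// It is never timed; its compiled body is what gets inspected.
#[inline(never)]
pub fn bench_length_prefix(bytes: &[u8]) -> Option<u16> {
    // Safe abstraction under test: returns `None` if fewer than 2 bytes exist.
    let (prefix, _rest) = bytes.split_first_chunk::<2>()?;
    Some(u16::from_be_bytes(*prefix))
}
```

Keeping each benchmark this small means any extra instructions in its listing can be attributed to the abstraction rather than to surrounding test code.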

This methodology offers several compelling advantages. First, it is deterministic: the same code compiled with the same compiler version, target, and flags produces the same assembly, eliminating the noise and variability inherent in hardware benchmarks. Second, it makes the impact of changes immediately visible: when a pull request modifies internal abstractions, the code generation tests reveal whether those changes have inadvertently introduced performance regressions. Third, it shifts the conversation from "we trust the optimizer" to "here's exactly what the optimizer produces."
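The comparison step itself can be small. Below is a minimal sketch, assuming x86-64 output in which `#` starts a comment; `normalize_asm` and `first_difference` are hypothetical helpers, not part of zerocopy or `cargo-show-asm`. Stripping comments and blank lines before comparing keeps cosmetic noise out of the result, and reporting the first differing line is what makes a regression readable in a pull request.

```rust
/// Hypothetical normalization: drop `#` comments (an x86-64 assumption),
/// trim whitespace, and skip blank lines.
pub fn normalize_asm(listing: &str) -> Vec<String> {
    listing
        .lines()
        .map(|line| line.split('#').next().unwrap_or("").trim().to_string())
        .filter(|line| !line.is_empty())
        .collect()
}

/// Compares a fresh listing against the checked-in model. Returns `None` on a
/// match, otherwise the 1-based normalized line number plus the expected and
/// actual text at that line (empty if one listing is shorter).
pub fn first_difference(expected: &str, actual: &str) -> Option<(usize, String, String)> {
    let exp = normalize_asm(expected);
    let act = normalize_asm(actual);
    for i in 0..exp.len().max(act.len()) {
        let e = exp.get(i).cloned().unwrap_or_default();
        let a = act.get(i).cloned().unwrap_or_default();
        if e != a {
            return Some((i + 1, e, a));
        }
    }
    None
}
```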

For users, this approach delivers something invaluable: confidence that the abstractions they use won't surprise them at runtime. For the use cases the benchmarks cover, you can now check that a Zerocopy function compiles down to essentially the machine code you'd write by hand: no hidden allocations, no unnecessary branches, no surprising function call overhead.
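An exact match against a model is one option; a looser check some users may find handy in their own code is to count specific instructions in a hot function's listing, for example asserting that it contains no `call`. The sketch below is a hypothetical helper, assuming x86-64 syntax where `#` starts a comment, labels end in `:`, and assembler directives start with `.`.

```rust
/// Hypothetical helper: counts instructions whose mnemonic appears in
/// `mnemonics`, skipping comments, labels, and assembler directives.
pub fn count_instructions(listing: &str, mnemonics: &[&str]) -> usize {
    listing
        .lines()
        .map(|line| line.split('#').next().unwrap_or("").trim())
        .filter(|line| !line.is_empty() && !line.ends_with(':') && !line.starts_with('.'))
        .filter(|line| {
            // The mnemonic is the first whitespace-separated token.
            let mnemonic = line.split_whitespace().next().unwrap_or("");
            mnemonics.iter().any(|m| *m == mnemonic)
        })
        .count()
}
```

Such property checks tolerate harmless instruction reordering across compiler versions, at the cost of catching fewer kinds of regression than an exact comparison.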

The broader implication extends beyond Zerocopy itself. As Rust and its libraries grow more sophisticated, blind faith in the optimizer becomes harder to justify, and the cost of an abstraction increasingly needs to be demonstrated rather than assumed. Code generation testing is one concrete way to meet that bar.

What makes this particularly noteworthy is how it addresses the fundamental tension between safety and performance in systems programming. Traditional approaches often force developers to choose: either write unsafe code for maximum performance, or accept abstraction overhead for safety. Zerocopy's approach suggests a third path: building sophisticated safe abstractions while retaining the ability to show that they compile to optimal machine code.

This development also highlights an underappreciated aspect of library design: the importance of making implicit assumptions explicit. By documenting expected code generation and verifying it automatically, Zerocopy turns what was once a hidden contract between library and user into a visible, testable guarantee. Users no longer need to wonder whether a particular abstraction is "expensive"; for the documented cases, they can see exactly what it costs.

The approach does raise interesting questions about maintenance overhead. Code generation can vary between compiler versions, and keeping the model outputs current requires ongoing effort. However, this cost seems justified by the benefit of maintaining the library's core promise: that safety and performance aren't competing concerns, but complementary guarantees that can be delivered together.
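One common way to keep that maintenance cost manageable, borrowed from snapshot-testing tools in general rather than taken from zerocopy's own harness, is an explicit "bless" mode: a mismatch fails by default, and the model is rewritten only when a maintainer opts in. `Outcome` and `check_or_bless` are hypothetical, and where the `bless` flag comes from (for instance an environment variable) is left as an assumption.

```rust
/// Hypothetical result of checking one listing against its model.
#[derive(Debug, PartialEq)]
pub enum Outcome {
    Match,
    Mismatch,
    /// The model was (re)written from the new output because `bless` was set.
    Updated,
}

/// Compares `actual` with the stored model (`None` = no model yet).
/// Only when `bless` is true does a mismatch overwrite the model.
pub fn check_or_bless(model: &mut Option<String>, actual: &str, bless: bool) -> Outcome {
    if model.as_deref() == Some(actual) {
        Outcome::Match
    } else if bless {
        *model = Some(actual.to_string());
        Outcome::Updated
    } else {
        Outcome::Mismatch
    }
}
```

Because updated models are committed like any other file, a compiler upgrade shows up as a reviewable diff rather than a silent change.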

For the Rust ecosystem more broadly, Zerocopy's evolution suggests a maturing beyond the early days of "trust the compiler" toward a more nuanced understanding of when and how to verify compiler behavior. As abstractions grow more complex and performance requirements more stringent, this kind of rigorous code generation testing may become the standard rather than the exception.

The shift from faith-based to evidence-based optimization is more than a technical improvement; it's a philosophical statement about what users deserve from their libraries. When you build on top of abstractions, you shouldn't have to wonder whether they are secretly undermining your performance goals. Zerocopy's new approach helps ensure that the zero in "zero cost" is not just marketing language but a checked property of the code you ship.

For developers working with low-level memory manipulation, this development removes much of a significant source of uncertainty. The abstractions that make code safer and more maintainable no longer carry a hidden tax of performance uncertainty. In a field where every cycle counts, that is more than an incremental improvement: it shows what becomes possible when safety and performance work in harmony rather than at odds.
