The term 'Palantir' originated in J.R.R. Tolkien's legendarium as a cautionary symbol of dangerous overconfidence in predictive power—not as a brand for surveillance technology. This article argues that reclaiming the word from corporate appropriation provides a vital framework for understanding how modern data platforms encode bias, erode accountability, and threaten democratic governance, using the NHS contract controversy and ontology manipulation as key examples.

The palantíri of Tolkien’s Middle-earth were not tools of enlightenment but dangerous artifacts that seduced rulers into mistaking limited visions for complete truth. Denethor, Steward of Gondor, fell to despair after seeing only fragmented truths through his palantír, while Saruman was corrupted by the illusions of power it showed. The stones carried an explicit warning: knowledge without wisdom leads to ruin.

Today, the name adorns a surveillance technology company whose tools are deployed in healthcare systems, war zones, and border controls—precisely the contexts where Tolkien’s warning feels most urgent. When Juan Sebastián Pinto, a former AI explainer who worked on systems now used by entities like the Pentagon, stands before audiences to reclaim this term, he’s not engaging in linguistic nostalgia. He’s offering a diagnostic lens for platforms that promise omnisight while delivering distorted realities.

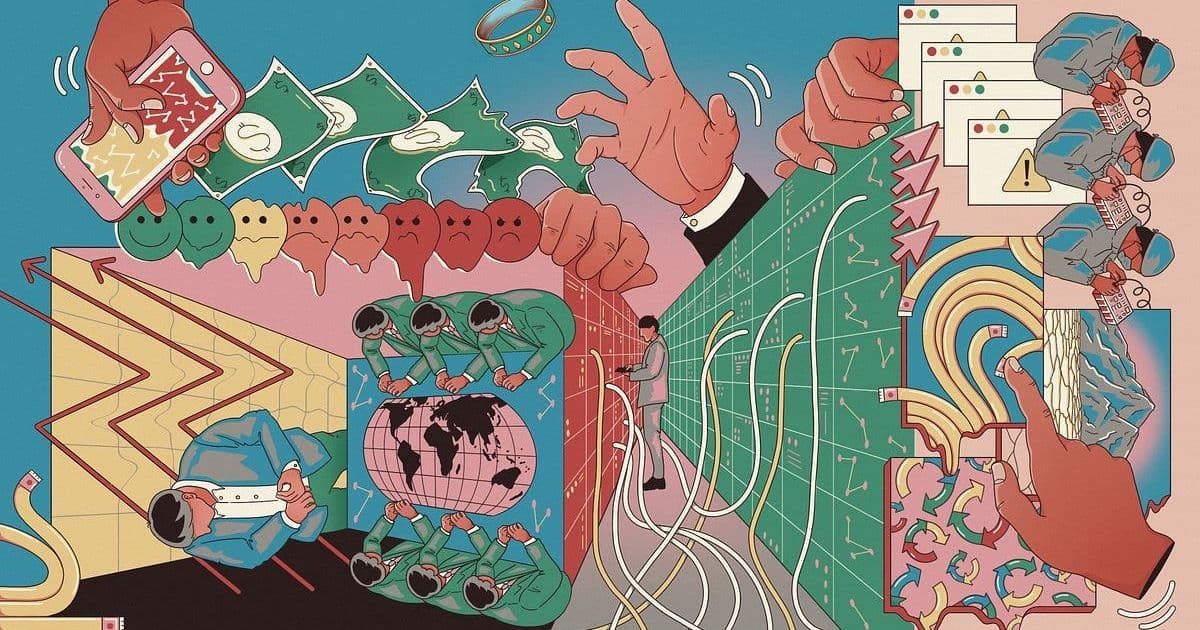

Consider how modern ‘Palantir stones’ operate. Pinto describes a universal input-output architecture: flawed data enters at the base (where bias, illegality, or incompleteness poison the foundation), gets processed through an analytics layer where meaning is constructed (and ideology can be baked in), then outputs as automated decisions affecting real lives. This isn’t theoretical. In the UK’s National Health Service, Palantir’s Foundry platform sparked parliamentary scrutiny after revelations about its role in processing sensitive patient data during pandemic response—a contract later challenged by Amnesty International and the Good Law Project. The concern wasn’t merely technical; it was about who gets to define what counts as a ‘risk’ or a ‘need’ when algorithms sort human lives.

The real battleground, Pinto argues, lies in the concept of ‘ontology’—a term Palantir treats as proprietary intellectual property but which actually belongs to philosophy and information science. Ontology defines how we categorize reality: what counts as a ‘person,’ what relationships matter, what constitutes a ‘threat.’ When Palantir’s tools simulate organizations through these semantic layers, they don’t just reflect reality; they actively shape it. We see this in how the Trump administration used Palantir-adjacent systems to erase transgender recognition at borders—where a deleted ontology category rendered people stateless in their own country—or how the platform has been deployed to target diversity, equity, and inclusion programs within U.S. government agencies.
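To make the point about deleted categories concrete, here is a minimal sketch in Python. It is purely illustrative: the category sets, the `Record` type, and the `ingest` function are hypothetical names invented for this example and are not drawn from Palantir's products or any real system. It shows only the general mechanism, that a record which was valid yesterday can become unrepresentable today because the schema changed, not because the person did.

```python
from dataclasses import dataclass

# Hypothetical category sets, invented for illustration only.
ONTOLOGY_BEFORE = {"F", "M", "X"}
ONTOLOGY_AFTER = {"F", "M"}  # the same schema after one category is deleted


@dataclass
class Record:
    person_id: str
    marker: str


def ingest(records, ontology):
    """Split records into those the ontology can represent and those it cannot."""
    accepted, rejected = [], []
    for record in records:
        if record.marker in ontology:
            accepted.append(record)
        else:
            rejected.append(record)
    return accepted, rejected


records = [Record("a1", "F"), Record("b2", "X"), Record("c3", "M")]

_, rejected_before = ingest(records, ONTOLOGY_BEFORE)
_, rejected_after = ingest(records, ONTOLOGY_AFTER)

print(len(rejected_before))                    # 0
print([r.person_id for r in rejected_after])   # ['b2']
```

Nothing about the underlying data changes between the two runs. The only difference is which categories the system is willing to recognize, which is why control over the ontology is control over who can be seen at all.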

This is where the Tolkien parallel becomes chillingly precise. The palantíri didn’t show objective truth; they showed what the user feared or desired, amplified by the stone’s inherent bias toward domination. Similarly, modern surveillance ontologies aren’t neutral mirrors but active instruments of worldview enforcement. As Pinto notes, ‘Palantir’s ontology can be reconfigured to harm people and reflect the political goals of authoritarian governments.’ The danger isn’t just inaccuracy; it’s the systematic encoding of prejudice into the architecture of decision-making, where a classification threshold in code becomes the difference between receiving care and being flagged as a threat.
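The weight a single threshold can carry is easy to see in a toy example. The sketch below is hypothetical throughout: the scores, the person identifiers, and the `classify` and `flagged_ids` functions are assumptions made up for illustration, not a description of how any deployed system works.

```python
def classify(risk_score, threshold):
    """Return 'flagged' when the score meets the threshold, else 'cleared'."""
    return "flagged" if risk_score >= threshold else "cleared"


def flagged_ids(scores, threshold):
    """List the people a given threshold would flag, in sorted order."""
    return sorted(
        pid for pid, score in scores.items()
        if classify(score, threshold) == "flagged"
    )


# Invented scores for three hypothetical people.
scores = {"p1": 0.42, "p2": 0.58, "p3": 0.71}

print(flagged_ids(scores, 0.6))  # ['p3']
print(flagged_ids(scores, 0.5))  # ['p2', 'p3']
```

The person labelled `p2` is the same in both runs, with the same score. Whether they are flagged depends entirely on a number someone chose, and that choice is rarely visible to the people it sorts.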

Reclaiming language here isn’t semantic pedantry. It’s about restoring public capacity to scrutinize power. When we let corporations own terms like ‘Palantir’ or ‘ontology,’ we cede the ability to describe what these systems actually do: concentrate predictive authority in unaccountable hands, automate bias under the guise of objectivity, and erode the very notion of shared reality that democracy requires. In Tolkien’s legendarium the palantíri were made by the Elves and kept safe not through technological superiority but through fellowship, ritual, and strict traditions, a reminder that governing powerful tools demands collective wisdom, not just technical expertise.

The opportunity lies in building a shared vocabulary that cuts through marketing mystique. Instead of accepting ‘AI’ as magic, we can ask: What data enters this system? Whose interests shape its categories? Who bears the cost when its predictions fail? By anchoring critique in Tolkien’s original warning—that seeing everything through a single pane of glass invites delusion—we create space for healthier skepticism. This isn’t about rejecting technology; it’s about ensuring it serves human flourishing rather than undermining it. As Pinto concludes, drawing from Tolkien’s ‘On Fairy-Stories,’ fantasy remains a human right—but only when we recognize that the most dangerous spells are those we cast upon ourselves.
