BuildBuddy's Content-Defined Chunking (CDC) changes how large build outputs are cached: only the chunks that changed are transferred and stored rather than entire files, which significantly reduces data transfer and storage requirements.

BuildBuddy's implementation of Content-Defined Chunking (CDC) addresses a fundamental limitation in remote caching systems: entire files are transferred and stored even when only small portions have changed. This makes build systems more efficient, particularly for large codebases where small changes can ripple through numerous outputs.

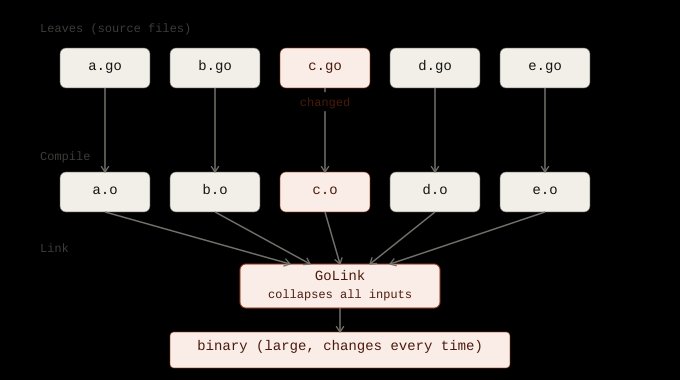

Traditional remote caching reduces build times by reusing action outputs across machines and CI jobs, but it remains inefficient for large outputs assembled from many transitive inputs. When a binary, bundle, package, or archive is mostly unchanged but contains a modified section, the cache treats the entire blob as new, leading to wasteful uploads, downloads, and storage. The problem is most acute in linking, bundling, packaging, and archiving: actions that combine many transitive inputs into a single output.

Consider the common scenario where a small source change in a shared library propagates through numerous dependent packages. In a Go project, for instance, an implementation-only change in a shared go_library might only rebuild that library's compilation action. However, downstream linking actions that consume the transitive set of Go archives can produce entirely new digests for their outputs, even when most of the binary content remains identical. Without CDC, each of these large outputs is transferred and stored as a new whole blob, creating a significant bandwidth and storage burden.

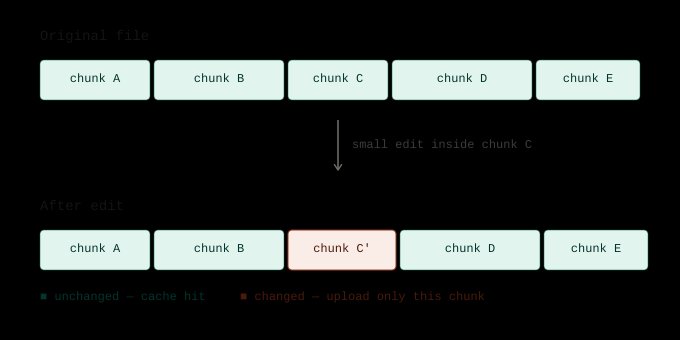

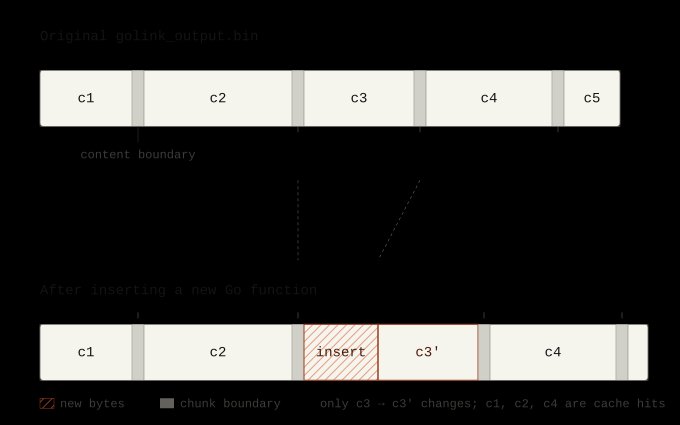

CDC solves this problem by treating the output as a sequence of reusable chunks rather than an indivisible blob. The algorithm computes a rolling hash over a small window of bytes and splits the file wherever the hash matches a rare pattern. Chunk boundaries are therefore defined by content rather than by fixed byte offsets, so the same content always produces the same boundaries, and inserting or deleting bytes does not shift every boundary that follows, as it would with fixed-size chunks. For a practical example, imagine the rolling window is 4 bytes wide and splits whenever the hash of that window ends in 00. If the original file contains the sequences aaaa|bbbb|cccc|dddd, and we insert a few bytes within bbbb, the nearby chunks change, but once the rolling window moves past the inserted bytes and reaches cccc again, it finds the same cut point as before.
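The resynchronization behavior described above can be illustrated with a minimal sketch. It is not BuildBuddy's or Bazel's chunker: the window size, hash function, cut mask, and the names `chunk` and `new_chunks` are all assumptions chosen for readability.

```python
import hashlib
import random

# All constants are illustrative assumptions, not BuildBuddy's parameters.
WINDOW = 16                  # bytes covered by the rolling hash
MASK = (1 << 8) - 1          # cut when the low 8 bits are zero (about 1 in 256)
BASE = 257
MOD = (1 << 61) - 1
OUT_WEIGHT = pow(BASE, WINDOW - 1, MOD)  # weight of the byte leaving the window


def chunk(data: bytes) -> list[bytes]:
    """Split data wherever the hash of the trailing WINDOW bytes matches."""
    chunks, start, h = [], 0, 0
    for i, byte in enumerate(data):
        if i >= WINDOW:
            # Slide the window: drop the oldest byte before adding the new one.
            h = (h - data[i - WINDOW] * OUT_WEIGHT) % MOD
        h = (h * BASE + byte) % MOD
        # The hash depends only on the last WINDOW bytes, never on offsets.
        if i + 1 >= WINDOW and (h & MASK) == 0:
            chunks.append(data[start:i + 1])
            start = i + 1
    if start < len(data):
        chunks.append(data[start:])  # trailing bytes after the last cut
    return chunks


def new_chunks(old: bytes, new: bytes) -> list[bytes]:
    """Chunks of `new` that a cache already holding `old` would still need."""
    known = {hashlib.sha256(c).hexdigest() for c in chunk(old)}
    return [c for c in chunk(new) if hashlib.sha256(c).hexdigest() not in known]


if __name__ == "__main__":
    rng = random.Random(0)
    original = bytes(rng.getrandbits(8) for _ in range(64 * 1024))
    edited = original[:30000] + b"INSERTED" + original[30000:]
    missing = new_chunks(original, edited)
    print(len(chunk(edited)), "chunks total,", len(missing), "need uploading")
```

Because each cut decision looks only at the last `WINDOW` bytes, an insertion can disturb cut points only within the inserted bytes plus one window length; every chunk after that region hashes to a digest the cache already knows. Production chunkers typically also enforce minimum and maximum chunk sizes, which this sketch omits.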

The benefits of this approach are substantial. In production environments, CDC has deduplicated approximately 85% of written bytes across eligible BuildBuddy cache writes, skipping the upload of around 300 TiB of duplicate chunk data over a two-week period, with peaks exceeding 4 TiB per hour. These savings translate directly into faster builds and more efficient resource utilization. In benchmarks conducted on the BuildBuddy repository, the implementation demonstrated approximately 40% less data uploaded and a 40% smaller disk cache, with corresponding improvements in build performance.

The technical implementation of CDC spans three critical components: the remote APIs that define the chunking protocol, the BuildBuddy server-side implementation, and the Bazel client-side integration. The remote APIs introduce two new operations: SplitBlob for reading chunked data and SpliceBlob for writing it. This protocol allows clients and servers to communicate about chunks rather than only whole blobs, enabling efficient transfer when only portions of a file are missing.

On the server side, BuildBuddy implements these APIs by storing chunks as normal CAS entries keyed by their chunk digest, while keeping reconstruction metadata separately under a key derived from the original blob digest. When SpliceBlob is called, the server verifies that the chunks exist and that concatenating them produces the original blob digest. This verification ensures data integrity while enabling the cache to skip chunks that already exist and transfer missing chunks in parallel.
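A toy in-memory model may make the server-side checks more concrete. This is a sketch under stated assumptions, not BuildBuddy's code: the class `ChunkedCas`, its method names, the `manifest/` key prefix, and the use of a bare SHA-256 hex string as a digest are all hypothetical simplifications (the methods are only loosely modeled on the SplitBlob and SpliceBlob operations named above).

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class ChunkedCas:
    """Toy content-addressable store; every name here is illustrative."""

    def __init__(self) -> None:
        self.blobs: dict[str, bytes] = {}           # chunk digest -> chunk bytes
        self.manifests: dict[str, list[str]] = {}   # derived key -> chunk digests

    def put_chunk(self, data: bytes) -> str:
        """Store a chunk as an ordinary entry keyed by its own digest."""
        digest = sha256_hex(data)
        self.blobs[digest] = data
        return digest

    def find_missing(self, digests: list[str]) -> list[str]:
        """Return the digests the client still has to upload."""
        return [d for d in digests if d not in self.blobs]

    def splice_blob(self, blob_digest: str, chunk_digests: list[str]) -> None:
        """Record how to rebuild a blob, after verifying the claim."""
        missing = self.find_missing(chunk_digests)
        if missing:
            raise KeyError(f"missing chunks: {missing}")
        hasher = hashlib.sha256()
        for digest in chunk_digests:
            hasher.update(self.blobs[digest])  # hash the concatenation in order
        if hasher.hexdigest() != blob_digest:
            raise ValueError("chunks do not reassemble to the claimed blob")
        # Metadata lives under a key derived from the original blob digest.
        self.manifests["manifest/" + blob_digest] = list(chunk_digests)

    def split_blob(self, blob_digest: str) -> list[str]:
        """Return the ordered chunk digests for a previously spliced blob."""
        return self.manifests["manifest/" + blob_digest]
```

In this model, a client writing a second version of a blob would call `find_missing` with the new chunk list, upload only the digests that come back, and then call `splice_blob`; the chunks shared with the first version are never sent again.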

Bazel's integration in the combined cache coordinates remote cache and disk cache reads and writes, creating chunked paths for large blobs above a server-defined threshold. Importantly, Bazel avoids keeping a second copy of every chunk in memory by using the original file as the source for chunk data and streaming needed byte ranges during upload. This approach minimizes memory overhead while enabling efficient chunked transfers.
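The idea of using the original file as the source for chunk data can be sketched as follows. Bazel itself is written in Java and its actual implementation is not shown here; the names `ChunkRef`, `index_chunks`, `read_range`, and `upload_missing`, the block size, and the `send` callback are all hypothetical.

```python
import hashlib
import os
import tempfile
from typing import Callable, Iterator, NamedTuple

BLOCK = 64 * 1024  # read size per step; an assumed value


class ChunkRef(NamedTuple):
    digest: str   # SHA-256 hex of the chunk bytes
    offset: int   # where the chunk starts in the original file
    length: int


def read_range(path: str, offset: int, length: int) -> Iterator[bytes]:
    """Yield one byte range of the file in small blocks."""
    with open(path, "rb") as f:
        f.seek(offset)
        remaining = length
        while remaining > 0:
            block = f.read(min(BLOCK, remaining))
            if not block:
                raise EOFError("file is shorter than the recorded range")
            remaining -= len(block)
            yield block


def index_chunks(path: str, cut_points: list[int]) -> list[ChunkRef]:
    """Hash each chunk but keep only (digest, offset, length) in memory."""
    refs, start = [], 0
    for end in cut_points:  # cut_points are chunk end offsets from a chunker
        hasher = hashlib.sha256()
        for block in read_range(path, start, end - start):
            hasher.update(block)
        refs.append(ChunkRef(hasher.hexdigest(), start, end - start))
        start = end
    return refs


def upload_missing(path: str, refs: list[ChunkRef], missing: set[str],
                   send: Callable[[str, bytes], None]) -> int:
    """Stream only the missing chunks straight from the file; return bytes sent."""
    sent = 0
    for ref in refs:
        if ref.digest in missing:
            for block in read_range(path, ref.offset, ref.length):
                send(ref.digest, block)
                sent += len(block)
    return sent


if __name__ == "__main__":
    fd, path = tempfile.mkstemp()
    with os.fdopen(fd, "wb") as f:
        f.write(b"aaaabbbbcccc")
    refs = index_chunks(path, [4, 8, 12])
    sent = upload_missing(path, refs, {refs[1].digest},
                          lambda digest, block: print(digest[:8], block))
    print("bytes sent:", sent)
    os.remove(path)
```

The design choice this illustrates is that the chunk list holds only offsets and digests, so memory use stays proportional to the number of chunks rather than to the size of the output.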

The effectiveness of CDC varies depending on the characteristics of the output files. It works best for outputs that are large and byte-stable across revisions, with linking and packaging typically being good fits. Bundling also performs well when outputs are not compressed, obfuscated, or randomized. Compressed formats like tar.gz archives and Docker image layers often prove less chunkable because small input changes can rewrite more of the compressed byte stream. The key determining factor is byte-level similarity, not file extension.

For teams looking to implement CDC, the requirements are straightforward. Bazel support for CDC was introduced in version 8.7 and is available in 9.1+. Users can opt in by passing the --experimental_remote_cache_chunking flag to their BuildBuddy-enabled Bazel builds. BuildBuddy servers currently have CDC enabled for large files flowing through the server-side cache path, while self-hosted executor users should run BuildBuddy executor v2.261.0 or newer to achieve full benefits. No additional executor configuration is required, as CDC-eligible execution requests enable it automatically.

The broader implications of CDC extend beyond simple bandwidth savings. By reducing the amount of data that needs to be transferred and stored, CDC makes remote caching more practical for organizations with limited network bandwidth or high-latency connections. It also improves cache retention effectiveness by reducing the amount of duplicate data stored. Furthermore, CDC enables more efficient remote execution by making it practical to move large outputs between executors and caches, addressing a key limitation of RBE (Remote Build Execution).

Looking ahead, several potential enhancements could further improve CDC's effectiveness. Adaptive chunking strategies could optimize chunk sizes based on file characteristics, while smarter handling of compressed files might improve deduplication rates. Additionally, integrating CDC with other build optimization techniques, such as action result reuse and smarter dependency analysis, could create a more comprehensive approach to build acceleration.

In conclusion, BuildBuddy's implementation of Content-Defined Chunking represents a significant advancement in build caching technology. By addressing the fundamental inefficiency of transferring entire files when only small portions change, CDC dramatically reduces network traffic and storage requirements while improving build performance. As build systems continue to evolve and scale to larger codebases and more complex dependency graphs, technologies like CDC will play an increasingly crucial role in maintaining efficient development workflows.

For organizations using Bazel with BuildBuddy, CDC offers a straightforward path to these improvements. The technology works transparently with existing build configurations and requires only a single flag to enable, and production environments are already seeing significant reductions in data transfer and storage requirements.
