The Rust language project is implementing a nuanced policy governing LLM usage in contributions, reflecting broader tensions in the developer community around AI-generated code.

Rust Project Navigates LLM Policy Tightrope: Balancing Innovation with Quality Control

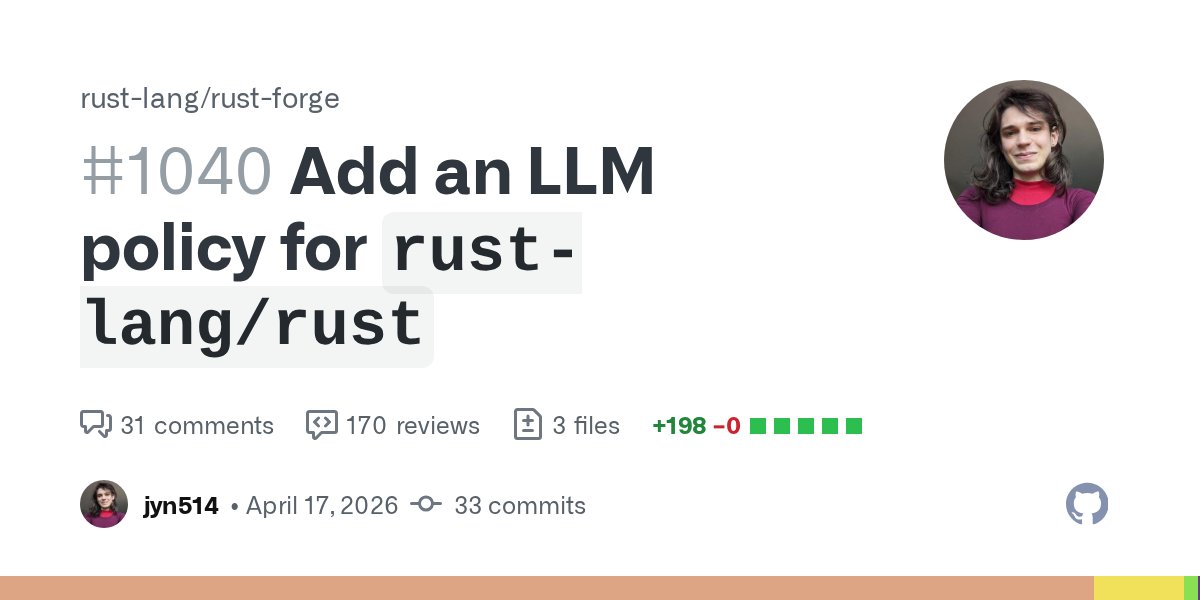

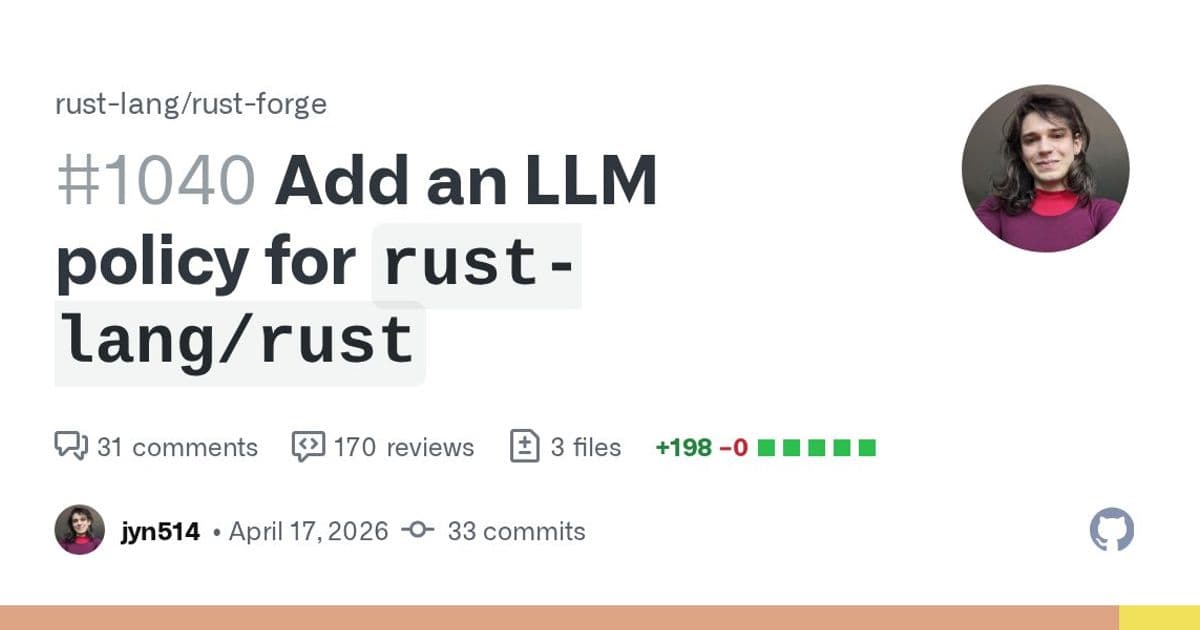

The Rust programming language project has taken a significant step toward establishing formal guidelines for Large Language Model (LLM) usage in its main repository, rust-lang/rust. The proposed policy, detailed in Pull Request #1040, represents a carefully calibrated approach to addressing the growing presence of AI-generated contributions while maintaining the project's high standards for code quality.

Context: The Deluge of 'Slop' PRs

The policy emerges from what contributors describe as a "deluge of low-effort 'slop' PRs primarily authored by LLMs" in the rust-lang/rust repository. This phenomenon is not unique to Rust but represents a challenge facing many open source projects as AI tools become more accessible to contributors with varying levels of expertise and commitment.

"Many people find LLM-generated code and writing deeply unpleasant to read or review," explains the policy's author, jyn514. "Many people find LLMs to be a significant aid to learning and discovery." This dual perspective underscores the complexity of crafting a policy that acknowledges both the potential benefits and real drawbacks of AI-assisted development.

A Scoped, Repository-Specific Approach

Unlike some projects attempting comprehensive, organization-wide AI policies, Rust's approach is deliberately limited in scope. The policy applies specifically to rust-lang/rust, explicitly excluding subtrees, submodules, dependencies from crates.io, and other repositories in the rust-lang organization.

This narrow focus represents a pragmatic acknowledgment that different components of the Rust ecosystem may have different needs and tolerance levels for AI-generated content. The compiler team, for example, operates with much higher standards for correctness than tools like Clippy, suggesting that one-size-fits-all policies may be impractical.

Policy Nuances and Exceptions

The proposed policy establishes several key distinctions:

Private use is permitted: LLMs may be used for private development work, with output not shown publicly.

Public LLM-generated content is restricted: Using LLMs to generate code, documentation, or text for public PRs or issues is generally disallowed, with specific exceptions.

Experimental program for experienced contributors: An opt-in program allows experienced developers to work with reviewers when using LLMs for certain tasks.

The policy is deliberately strict. "We intentionally err on the side of banning too much rather than too little in order to make the policy easy to understand and moderate," it explains.

Moderation Challenges and Boundaries

The proposal comes after extensive community discussion, with over 3,000 messages on Zulip addressing various aspects of LLM usage in Rust development. Recognizing the difficulty of reaching consensus on such a polarizing topic, the policy authors have ruled the following topics out of bounds for the discussion:

- Long-term social or economic impact of LLMs

- The environmental impact of LLMs

- Copyright status of LLM output

- Moral judgments about people who use LLMs

These topics, while important, are explicitly considered out of scope for this particular policy discussion. "We still consider these topics to be important, we simply do not believe this is the right place to discuss them," the policy states.

Counter-Perspectives and Concerns

The proposal has elicited a range of responses from the Rust community. Some contributors worry that the policy may be overly restrictive, potentially hindering legitimate uses of AI tools. nikomatsakis, a prominent contributor, suggested a simpler approach: "LLMs for private use is allowed. Using LLMs to generate code, documentation or text that you post in a PR or issue is disallowed apart from a specific set of cases."

Others focus on how the policy is likely to be perceived. Darksonn commented, "The policy bans the number one most important use-case of LLMs in the programming space (writing code), so of course it can be seen as an anti-AI policy."

There's also debate about the appropriate decision-making process. Kobzol suggested that "the policy on rust-lang/rust is central enough that this is effectively 'committing the project' and should be a leadership council decision," while others argued that the repository-specific nature of the policy makes team-level approval appropriate.

Comparison with Other Open Source Projects

The Rust policy sits within a spectrum of approaches to AI usage in open source projects. At the restrictive end, projects like postmarketOS explicitly ban both AI use and "encouraging others to use AI for solving problems." At the permissive end, the Linux Foundation's stance is summarized as "encourages usage, focuses on legal liability, mentions that tooling exists to help automate managing legal liability."

Other projects take nuanced positions:

- Zig takes a philosophical approach, citing concerns around "the construction and origins of original thought"

- QEMU focuses on copyright and licensing concerns

- Blender takes a pragmatic approach that "directly lists concerns around ai"

- Mesa frames its policy as a contribution policy rather than an AI policy, emphasizing that the "author must understand the code they contribute"

Looking Forward: Implementation and Evolution

The policy is designed to be a living document, with provisions for periodic review and adjustment. "I would hope that we can update the policy as we gain more experience without a massive FCP," nikomatsakis suggested.

The experimental program for experienced contributors represents an attempt to find a middle ground—allowing some exploration of beneficial LLM uses while maintaining quality standards. This approach acknowledges that as AI tools evolve, so too should the project's approach to their integration.

The Rust project's experience in crafting this policy may offer valuable insights for other open source communities grappling with similar questions. By establishing clear boundaries while leaving room for adaptation, the project demonstrates a thoughtful approach to a complex challenge—one that balances respect for human contribution with acknowledgment of technological change.
