A discussion between Solidigm's Greg Matson and NVIDIA's Kevin Deierling reveals how agentic AI is forcing a fundamental storage architecture shift, introducing a high-performance 'middle tier' enabled by SSDs like the D5 P5336 that pack nearly a full wafer's worth of NAND, optimized KV cache usage, and extreme co-design with liquid cooling to meet projected exabyte-scale demands.

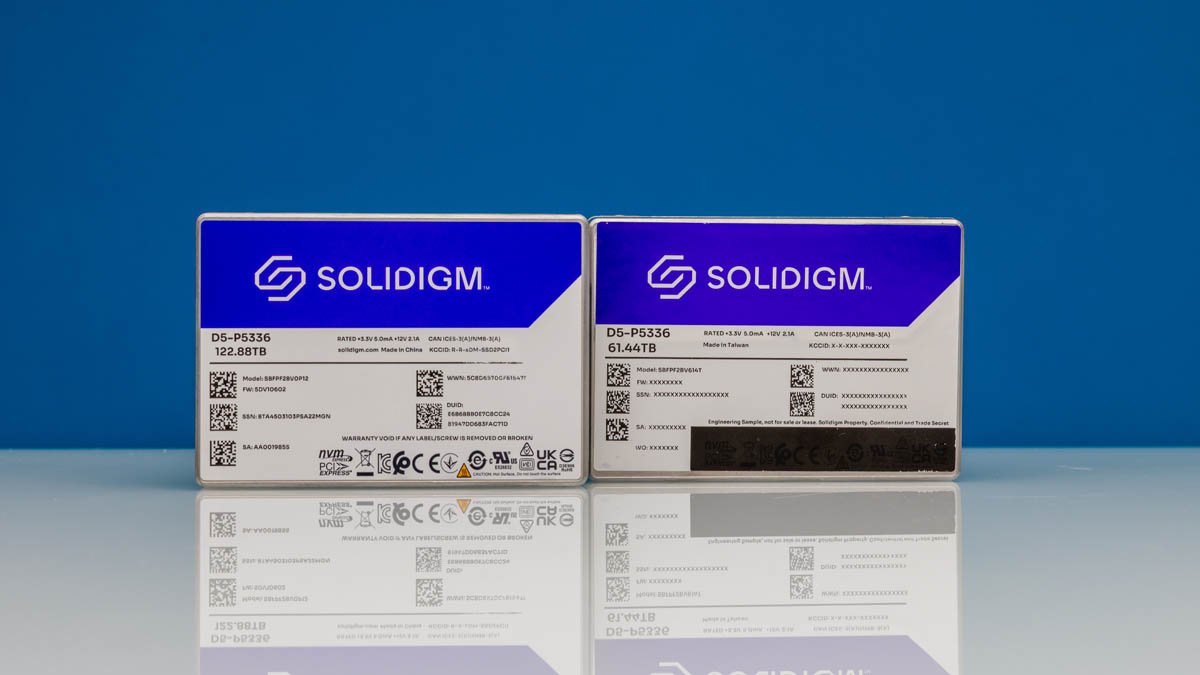

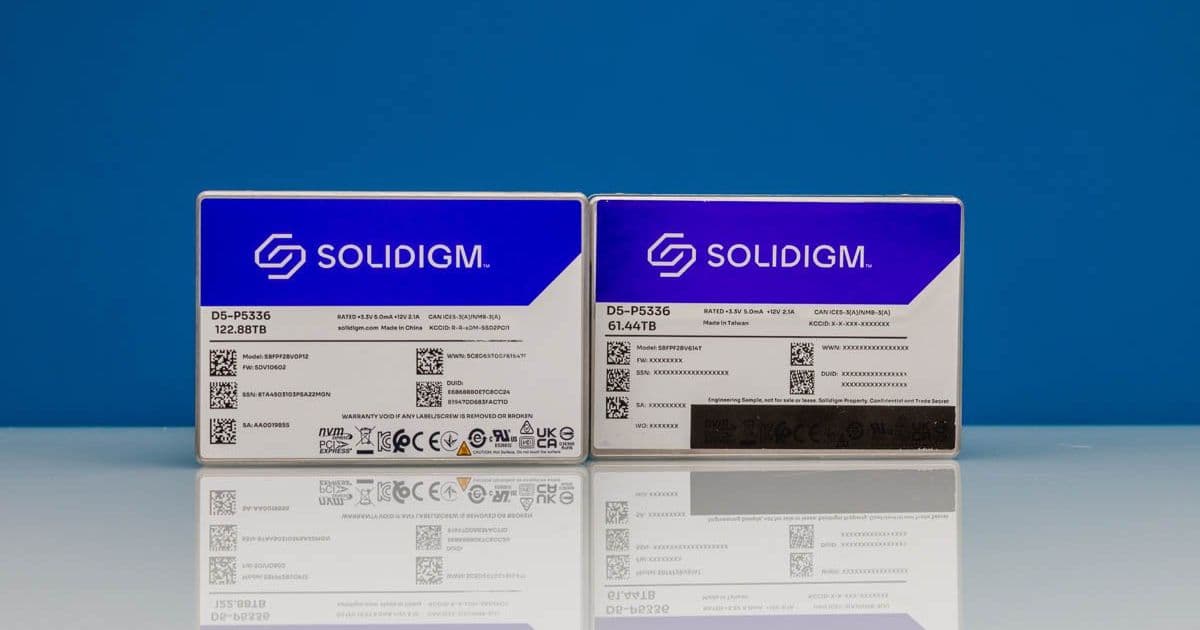

The transition from AI inferencing to agentic workflows changes more than software: it changes what the storage hierarchy has to deliver. When Greg Matson (SVP, Products & Marketing at Solidigm) and Kevin Deierling (SVP of Networking at NVIDIA) sat down to discuss AI factory storage, they outlined a clear inflection point. The traditional tiers (ultra-fast but expensive HBM at one end, high-capacity but slow NAS at the other) are insufficient for workloads where AI models must retain and manipulate massive context windows over multi-step reasoning chains. This necessitates a new 'middle tier' of flash-based storage that balances performance and capacity, where drives like Solidigm's D5 P5336 SSDs (offering 61.44TB and 122.88TB capacities) become critical enablers.

A striking detail from the conversation underscores why these capacities are now feasible: a single D5 P5336 SSD incorporates nearly an entire 300mm silicon wafer's worth of NAND flash. That figure describes how much silicon goes into one drive, not a wafer-scale device: the NAND is still diced and packaged, and the density comes from stacking many high-capacity QLC dies per package. For context, legacy enterprise SSDs might use 4-8 dies per package. The resulting capacity directly addresses the cost-performance gap Kevin highlighted: HBM costs approximately $10,000 per terabyte, while flash-based middle-tier storage targets a fraction of that, making large-scale AI context retention economically viable.

The discussion zeroed in on KV cache as the killer application for this new tier. Unlike traditional storage, which must guarantee durability, KV cache holds the intermediate key and value tensors that an LLM produces during inference. Because those tensors can be recomputed from the original prompt (at a compute cost), the tier can tolerate higher failure rates in exchange for lower latency and higher throughput. Kevin emphasized that disabling KV cache in local LLM experiments immediately reveals its impact: computation demands can increase 5-10x for long-context tasks because the model must reprocess entire input sequences instead of reusing cached states. This recomputability lets storage architects optimize for speed, prioritizing IOPS and bandwidth over elaborate error correction. The caveat is that a lost or damaged entry must be detected, for example with a lightweight checksum, so that it triggers a recoverable compute penalty rather than silent corruption.
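The recompute-on-loss idea can be sketched in a few lines of Python. This is a toy illustration, not how any NVIDIA or Solidigm product implements it: `RecomputableCache`, `fake_prefill`, and the `corrupt` helper are hypothetical names, an in-memory dict stands in for the flash tier, and a SHA-256 digest stands in for whatever integrity check a real system would use.

```python
import hashlib
from typing import Callable, Dict, Tuple


class RecomputableCache:
    """Toy cache whose entries may be lost or corrupted at any time.

    Each entry is stored with a SHA-256 digest. A missing entry or a digest
    mismatch is treated as a miss, and the value is rebuilt with the
    caller-supplied recompute function.
    """

    def __init__(self, recompute: Callable[[str], bytes]) -> None:
        self._recompute = recompute
        # key -> (digest, payload); a dict stands in for the flash tier
        self._store: Dict[str, Tuple[bytes, bytes]] = {}
        self.hits = 0
        self.recomputes = 0

    def get(self, key: str) -> bytes:
        entry = self._store.get(key)
        if entry is not None:
            digest, payload = entry
            if hashlib.sha256(payload).digest() == digest:
                self.hits += 1
                return payload
        # Missing or corrupted: pay the compute penalty and repopulate.
        payload = self._recompute(key)
        self._store[key] = (hashlib.sha256(payload).digest(), payload)
        self.recomputes += 1
        return payload

    def corrupt(self, key: str) -> None:
        """Simulate a media error by flipping one bit of the stored payload."""
        digest, payload = self._store[key]
        damaged = bytes([payload[0] ^ 0x01]) + payload[1:]
        self._store[key] = (digest, damaged)


def fake_prefill(prompt: str) -> bytes:
    # Hypothetical stand-in for the expensive prefill pass; not a model call.
    return hashlib.sha256(prompt.encode("utf-8")).digest() * 4
```

Calling `get` twice with the same prompt costs one recompute and one hit. Calling `corrupt` between the two reads turns the second read into another recompute that still returns the correct bytes, which is the property that lets this tier trade durability for speed.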

Achieving the necessary performance within data center power and thermal envelopes requires what Greg termed 'Extreme Co-Design' between Solidigm and NVIDIA. This goes beyond simple interface compatibility; it involves joint optimization of electrical signaling (to minimize latency over longer traces in dense racks), thermal modeling (to prevent throttling during sustained write-heavy workloads), and power delivery networks. Liquid-cooled SSDs exemplify this. Making a drive liquid-coolable isn't just about adding a cold plate; it involves reworking the SSD's internal thermal architecture to transfer heat from the NAND and controller directly to the facility coolant loop, maintaining consistent performance where air-cooled drives would throttle. This was demonstrated alongside next-gen NVIDIA Vera Rubin rack designs, where liquid-cooled storage blocks are integrated into the same cooling manifold as GPUs and DPUs.

Looking ahead, the scale is staggering. A single gigawatt AI factory, projected to become common within 3-5 years as AI permeates manufacturing, logistics, and autonomous systems, could require up to 25 exabytes of flash storage for optimal efficiency. Raw capacity is only part of the requirement: the storage must also sustain mixed workloads, with high-bandwidth KV cache operations running alongside bulk checkpointing and dataset loading. The middle tier must therefore deliver predictable low-latency performance under concurrent read/write patterns, a challenge that traditional enterprise SSD benchmarks (often run as pure sequential or pure random 4K tests) do not capture. Real-world validation will require new metrics capturing AI-specific access patterns, such as sustained 128KB sequential reads during context loading mixed with random 4K writes for cache updates.
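To put the 25-exabyte figure in perspective, the arithmetic below converts it into drive counts at the two capacities mentioned earlier, and into a purely hypothetical HBM bill at the roughly $10,000 per terabyte quoted in the conversation. It is a back-of-the-envelope sketch: it assumes decimal units and raw capacity, and it ignores redundancy, over-provisioning, and spares. The function and constant names are illustrative.

```python
TB = 10**12  # decimal terabyte, as used in drive capacity ratings
EB = 10**18  # decimal exabyte


def drives_needed(total_bytes: int, drive_bytes: int) -> int:
    """Ceiling division: smallest drive count whose raw capacity covers the total."""
    return -(-total_bytes // drive_bytes)


FACTORY_BYTES = 25 * EB          # upper estimate quoted for a gigawatt AI factory
DRIVE_122_88TB = 12288 * 10**10  # 122.88 TB expressed in bytes
DRIVE_61_44TB = 6144 * 10**10    # 61.44 TB expressed in bytes
HBM_USD_PER_TB = 10_000          # approximate figure quoted in the discussion

drives_122 = drives_needed(FACTORY_BYTES, DRIVE_122_88TB)
drives_61 = drives_needed(FACTORY_BYTES, DRIVE_61_44TB)

# Hypothetical comparison only: nobody would build this capacity out of HBM.
hbm_cost_usd = (FACTORY_BYTES // TB) * HBM_USD_PER_TB

print(f"122.88 TB drives: {drives_122:,}")  # 203,451
print(f"61.44 TB drives:  {drives_61:,}")   # 406,902
print(f"Same capacity in HBM: ${hbm_cost_usd:,}")  # $250,000,000,000
```

Even at the larger capacity point, the estimate works out to roughly two hundred thousand drives for one facility, which is why density, power, and cooling per drive dominate the design conversation.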

For builders architecting AI infrastructure today, the implications are clear: focus on NVMe SSDs with proven performance at high queue depths (>1M IOPS random read) and explicit liquid-cooling options for dense deployments. Validate KV cache acceleration frameworks (like NVIDIA's TensorRT-LLM) and check how they tier KV states, ideally keeping hot states on local NVMe and moving warmer states to networked storage. Most critically, recognize that storage isn't a passive backend; it acts as part of the compute pipeline. As Kevin noted, treating KV cache as a distinct storage tier with different resilience requirements isn't an optimization; it's a prerequisite for scaling AI factories beyond today's limits.
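The hot-versus-warm tiering described above can be illustrated with a minimal two-level LRU store. This is a sketch under stated assumptions, not the API of TensorRT-LLM or any other framework: `TwoTierKVStore` is a hypothetical name, both tiers are in-memory dictionaries standing in for local NVMe and networked storage, and capacities are counted in entries rather than bytes.

```python
from collections import OrderedDict
from typing import Optional


class TwoTierKVStore:
    """Toy LRU store with a small hot tier and a larger warm tier."""

    def __init__(self, hot_capacity: int, warm_capacity: int) -> None:
        if hot_capacity < 1 or warm_capacity < 0:
            raise ValueError("hot_capacity must be >= 1 and warm_capacity >= 0")
        self.hot_capacity = hot_capacity
        self.warm_capacity = warm_capacity
        self.hot: "OrderedDict[str, bytes]" = OrderedDict()   # stand-in for local NVMe
        self.warm: "OrderedDict[str, bytes]" = OrderedDict()  # stand-in for networked storage

    def put(self, key: str, value: bytes) -> None:
        """Write to the hot tier, demoting the least recently used entries."""
        self.warm.pop(key, None)  # keep a key in at most one tier
        self.hot[key] = value
        self.hot.move_to_end(key)
        self._rebalance()

    def get(self, key: str) -> Optional[bytes]:
        """Return the value, promoting warm hits; None means the caller recomputes."""
        if key in self.hot:
            self.hot.move_to_end(key)
            return self.hot[key]
        if key in self.warm:
            value = self.warm.pop(key)
            self.put(key, value)  # promote back to the hot tier
            return value
        return None

    def _rebalance(self) -> None:
        # Demote the oldest hot entries to warm, then drop the oldest warm entries.
        while len(self.hot) > self.hot_capacity:
            old_key, old_value = self.hot.popitem(last=False)
            self.warm[old_key] = old_value
        while len(self.warm) > self.warm_capacity:
            self.warm.popitem(last=False)
```

A `None` result is not an error here: because KV states are recomputable, an entry evicted from both tiers simply sends the request back through prefill. A production design would also need byte-based capacity accounting and asynchronous movement between tiers, both of which this sketch omits.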
