While query-based compilation promises incremental updates, its effectiveness is fundamentally limited by language design and the avalanche effect, making direct approaches preferable when possible.

Query-based compilers have become increasingly popular in modern language tooling, promising efficient incremental compilation through clever dependency tracking. The concept is elegant in theory: by modeling compilation as a graph of function calls and only recomputing paths affected by changes, we can achieve near-instantaneous feedback during development. However, this approach comes with significant limitations that are often overlooked in the rush to adopt incremental computation patterns.

The Promise of Incremental Computation

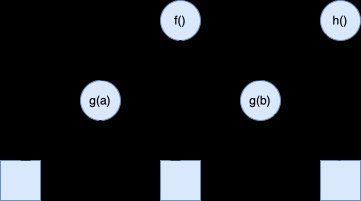

The core idea behind query-based compilation is straightforward. A compiler can be viewed as a complex transformation from source code to executable artifacts, implemented through numerous interconnected functions. By treating each function call as a query with dependencies on its inputs, we can create a system that automatically tracks which parts of the compilation need to be re-executed when source code changes.

This visualization shows how a change in input (like a modified source file) only requires recomputing the affected path through the compilation graph, rather than starting from scratch. The beauty of this approach lies in its generality—it can be applied to any computation with minimal changes to existing code.

Early Cutoff Optimization

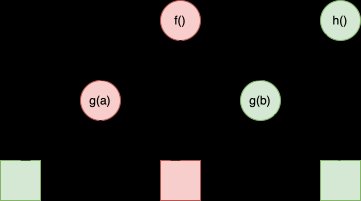

A particularly clever optimization emerges from this framework: early cutoff. If a function's inputs change but its output remains the same (for example, whitespace changes that don't affect type checking), the system can stop propagating changes early, saving computation time.

This optimization is especially valuable in IDE contexts where compilation must respond to rapid, small edits within tight time constraints—typically around 100ms. The big-O thinking here is crucial: the time to react to changes should scale with the size of the change, not the overall codebase size.

The Avalanche Problem

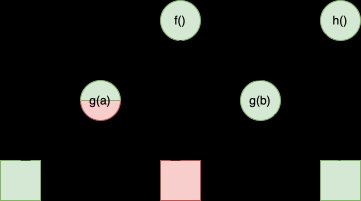

However, the effectiveness of query-based compilation faces a fundamental limitation: the avalanche effect. This occurs when small changes in input can potentially affect large portions of the output, making incremental updates inefficient.

Consider a simple compiler that converts text to uppercase. This is easily incrementalized—changing a few letters in the input only affects those same letters in the output. But if the compiler performs hashing or encryption, the situation changes dramatically.

For good encryption algorithms, changing even a single bit in the input should flip roughly half the bits in the output. This avalanche property means that the work required to update the output is proportional to the entire output size (O(N)), not just the change size (O(1)). The incremental engine must still perform all the work to determine what changed, even if the actual change propagation is minimal.

Language Design Matters

The effectiveness of query-based compilation is fundamentally constrained by the dependency structure of the source language. Languages that require global analysis for local operations are particularly problematic.

Rust exemplifies these challenges. Unlike languages where files can be parsed independently, Rust's macro system means parsing cannot complete until all macros are expanded. This requires name resolution, which in Rust is a crate-wide operation rather than file-specific. As a result, typing in one file can change parsing results in another file, forcing fine-grained dependency tracking throughout the entire frontend.

The trait system compounds this issue. For every trait method call, the compiler must track dependencies not only on the relevant impl block but also on the non-existence of conflicting impls in every other file. This creates a dense web of dependencies that undermines the efficiency gains of incremental compilation.

Alternative Approaches

When possible, direct approaches to incremental compilation are preferable to query-based systems. Zig demonstrates this principle well. In Zig, every file can be parsed completely in isolation, allowing compilation to start by parsing all files independently and in parallel. Because Zig requires explicit declarations (no implicit uses), name resolution can also run on a per-file basis without queries.

Zig goes even further by directly converting untyped AST into intermediate representation, emitting errors in the process. This approach works because the language is carefully designed to allow such direct transformations. By the time the compiler reaches tracked queries, it's already working with data far removed from raw source code.

In-Place Updates

Another technique that becomes less available with query-based systems is in-place updates. Consider a language with package declarations and fully qualified names. A compiler might maintain a map of all public declarations, where keys are fully qualified names and values are the declarations themselves.

Rather than building this map through recursive queries, a more direct approach uses only two queries: per-file and global. When a file changes, the system computes a diff of added or removed declarations and applies this diff to the global map. This approach avoids the overhead of structural sharing and recursive computation.

Zig is exploring similar techniques for incremental linking—rather than producing entirely new binaries, the idea is to in-place patch the previous binary, gluing mostly unchanged chunks of machine code.

When Queries Are Necessary

Despite these limitations, query-based compilation remains valuable as a fallback option, particularly for languages with complex interdependencies that cannot be avoided. The key insight is that as a language designer, it's worth pushing the need for queries as far down the compilation pipeline as possible, sticking to more direct approaches where feasible.

Not using queries is simpler, faster, and easier to optimize. Profiling a query-based compiler presents unique challenges, as the abstraction layer can obscure performance bottlenecks. Direct approaches provide clearer performance characteristics and more predictable behavior.

Conclusion

Query-based compilation represents an important tool in the language implementer's toolkit, but it's not a universal solution. Its effectiveness is fundamentally limited by language design choices and the nature of the transformations being performed. When designing new languages or optimizing existing compilers, careful consideration should be given to whether the benefits of query-based approaches outweigh their costs.

The most effective compilers often combine multiple strategies: direct approaches where the language design permits them, and query-based systems only where necessary. This hybrid approach provides the best of both worlds—the efficiency of direct computation where possible, and the flexibility of incremental computation where required.

For those interested in exploring these topics further, the following resources provide deeper insights into compiler architecture and incremental computation:

Comments

Please log in or register to join the discussion