A deep dive into the massive, distributed web scraping attacks that are targeting even small infrastructure, with visualizations showing how 1 in every 2,000 public IPv4 addresses participated in a single day of scraping.

The digital landscape is undergoing a transformation that few anticipated: the emergence of large-scale, coordinated scraping operations that dwarf traditional DDoS attacks in both scale and sophistication. When a personal VPS hosting simple services for friends becomes the target of an attack involving one in every two thousand public IPv4 addresses in a single day, we've entered a new era of digital conflict.

The author's experience, while personal in nature, reveals a broader pattern that threatens the very fabric of the open web. Their infrastructure, modest by any standard—a single CPU VPS with 200Mbps bandwidth—sustained a relentless 3,000 requests per minute for over 24 hours. These weren't random requests but carefully orchestrated operations designed to extract content at any cost. The subsequent attack, exceeding 4,000 requests per minute, demonstrates an escalation that few small operators can sustain against.

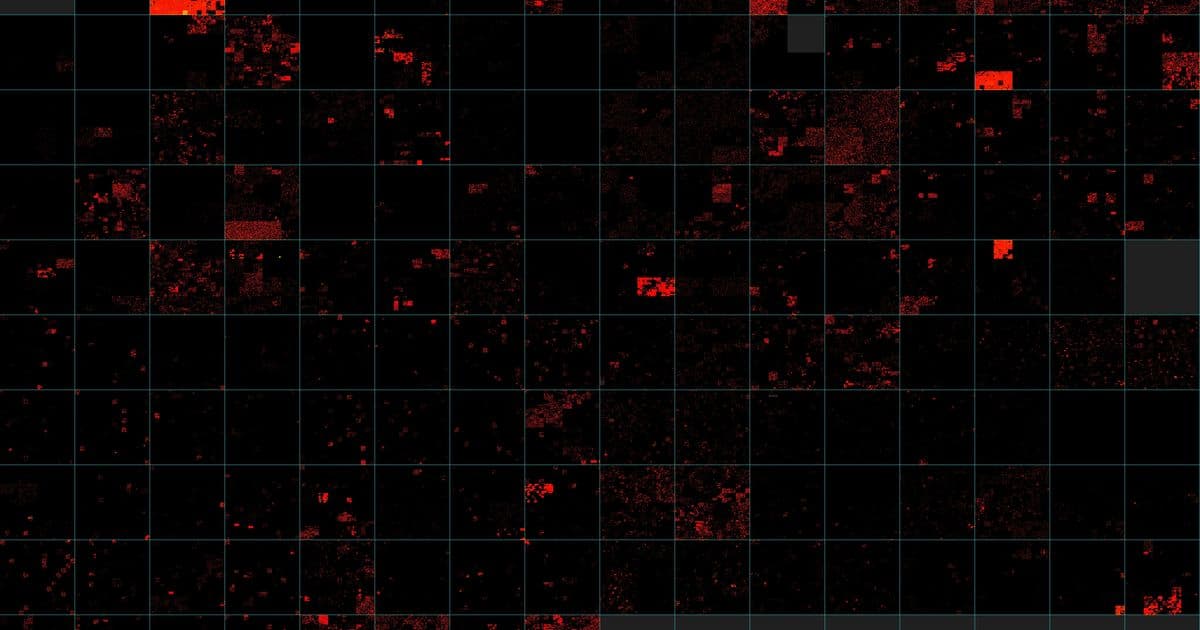

What makes these attacks particularly concerning is their distributed nature. As visualized through the IPv4 address space mapped on a Z-order curve, the attacks aren't concentrated in a few locations but are spread across 202 of the 256 possible /8 blocks. This distribution makes traditional defense strategies ineffective and demonstrates the resources behind these operations. The visualization shows a terrifying red-orange-yellow stain across the IPv4 landscape, with each pixel representing a /24 block containing at least one bot IP.

The statistics are equally staggering:

- 2,040,670 unique IP addresses hit the author's services in 24 hours

- 99.77% were IPv4 addresses, representing approximately one in every 2,000 public IPv4 addresses

- The top performing IP address (74.7.227.156 from Microsoft) made 150,483 requests

- Google's network (AS15169) was also heavily represented

These numbers reveal something crucial: we're not dealing with amateur scrapers but well-resourced operations, likely affiliated with major AI companies training large language models. The economic incentive to scrape the web at scale has created a new class of digital extraction that treats the entire internet as a free resource.

The author's defensive strategy, using Iocaine to generate garbage content that wastes scraper resources, represents an interesting approach in this asymmetric conflict. However, the fact that this approach is now straining the author's single CPU highlights the fundamental problem: defensive measures that consume resources to deter attackers create a prisoners' dilemma where both sides burn infrastructure.

The comparison between a normal day's activity and the attack day is particularly revealing. On a typical day, only a few ISPs like Vietnam's VNPT appear as visible clusters in the visualization. The attack, by contrast, illuminates nearly the entire IPv4 space. This visualization technique, implemented in simple Rust code, provides an unprecedented view of the scale of these operations.

Perhaps most concerning is the evolution of the scrapers themselves. By April 28, the author noted that while the number of detected bot requests decreased, the actual volume hitting infrastructure increased. This suggests the scrapers are becoming more sophisticated at avoiding detection while maintaining their extraction activities. The cat-and-mouse game has entered a new phase where the attackers are adapting faster than defenders can respond.

The implications extend beyond the author's personal infrastructure. If small, personal services are facing attacks of this magnitude, what does this mean for the long-term viability of the open web? The current model, where content creators bear the cost of protecting their work from extraction by massive corporations, is unsustainable. We may be approaching a tipping point where the only viable content protection is complete walled gardens, a outcome that would impoverish the web we know.

The timelapse visualization from April 11 to April 28 shows the progression of these attacks, with the intensity growing over time. This isn't a temporary phenomenon but a new baseline for web operations. As AI companies continue to train increasingly large models on web content, the pressure on the infrastructure that hosts this content will only intensify.

What's needed is a fundamental rethinking of how we value and protect web content. Technical solutions like Iocaine are necessary but insufficient on their own. We may need new economic models, regulatory frameworks, or even technical standards that create a more sustainable equilibrium between content access and protection. Without such interventions, we risk creating a web where only the largest players can afford to publish content, fundamentally altering the nature of our shared digital space.

Comments

Please log in or register to join the discussion