A comprehensive comparison of real-time data push technologies, examining their architectures, use cases, and trade-offs with practical implementation guidance.

When building applications that need to push data to clients in real time, developers face a critical architectural decision. The landscape has evolved significantly from simple polling to sophisticated protocols, each with distinct advantages and limitations. Let's examine the four major approaches to real-time data push and understand when to use each one.

The Evolution of Real-Time Communication

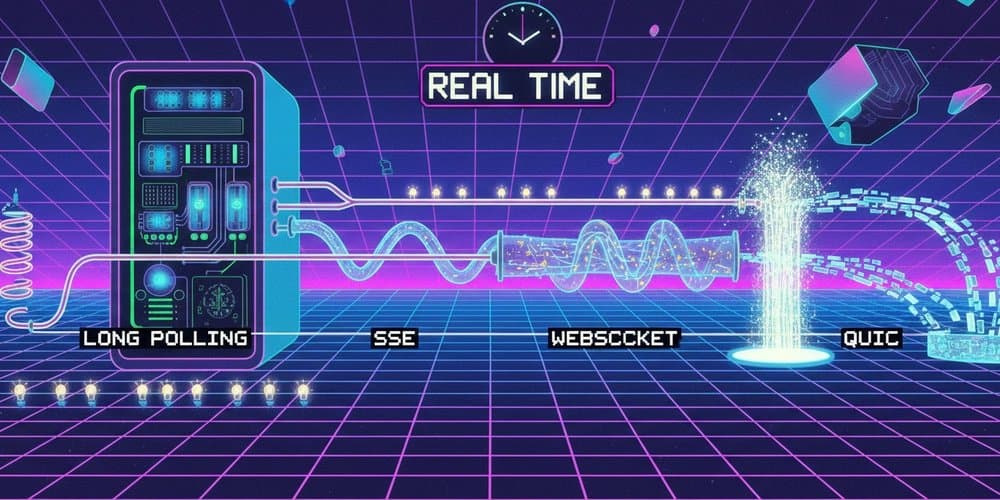

Real-time data push has come a long way from the early days of periodic polling. Today's applications demand immediate updates, whether for chat applications, live dashboards, collaborative editing, or streaming services. The four main approaches—Long Polling, Server-Sent Events (SSE), WebSocket, and QUIC—represent different points in this evolution, each optimized for specific scenarios.

Long Polling: The Universal Workhorse

Long polling is essentially HTTP pretending to be real-time. The client makes a normal HTTP request, but the server holds it open—sometimes for seconds or even minutes—until it has data to send. Once the response arrives, the client immediately fires off the next request.

This creates a continuous cycle: Client polls → Server waits → Data arrives → Server responds → Connection closes → Client immediately polls again.
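The server half of that cycle can be sketched in a few lines. This is a minimal in-memory model, not a real HTTP server: `LongPollHub`, `wait`, and `publish` are hypothetical names chosen for illustration, and each "request" is represented by a callback that the server parks until data exists.

```typescript
// A parked request is modeled as a callback that sends the response.
type Responder = (message: string) => void;

class LongPollHub {
  private waiting: Responder[] = [];

  // A poll request arrived: hold it open instead of answering right away.
  wait(respond: Responder): void {
    this.waiting.push(respond);
  }

  // New data arrived: answer every parked request and forget them.
  // Each answered request is finished, so its client has to poll again.
  publish(message: string): number {
    const parked = this.waiting;
    this.waiting = [];
    for (const respond of parked) respond(message);
    return parked.length;
  }

  pendingCount(): number {
    return this.waiting.length;
  }
}

// One simulated client going through two full cycles.
const hub = new LongPollHub();
const received: string[] = [];
const poll = (): void =>
  hub.wait((message) => {
    received.push(message);
    poll(); // the client immediately polls again
  });

poll();
hub.publish("first");
hub.publish("second");
```

After both publishes the client has received two messages and already has a third request parked. A real server would also need to answer parked requests with an empty response before any proxy or client timeout expires.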

When Long Polling Makes Sense

Long polling shines in scenarios where you need broad compatibility and can tolerate slight delays. Legacy system compatibility is its strongest suit: it works wherever plain HTTP works, including behind strict corporate firewalls and through most proxies, although intermediaries with short idle timeouts may force the server to respond, even with an empty body, before the timeout expires. Low-frequency updates also suit this approach, because when messages are rare the cost of one request/response cycle per message stays small in absolute terms.

The Hidden Costs

The main drawback is that every message costs a full request/response cycle, complete with HTTP header overhead. This translates to higher latency and increased server load compared to persistent connections. Long polling is not truly real-time: a message that becomes available while the client is between requests has to wait for the next poll to arrive, so delivery latency includes at least one extra round trip and grows under load. Each waiting client also ties up an open request on the server, and the constant request churn makes it challenging to scale to thousands of concurrent users.

Server-Sent Events: The Streaming Specialist

SSE keeps a single HTTP response open and streams a series of events over it indefinitely, using a simple text format served with the text/event-stream content type. The browser's EventSource API handles reconnection automatically, making it remarkably resilient to network issues.
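To make the text format concrete, here is a small sketch of how a server might serialize one event. The field names `event`, `id`, and `data` come from the event-stream format itself; the `SseEvent` interface and `formatSseEvent` function are hypothetical helpers, not part of any library.

```typescript
interface SseEvent {
  data: string;
  event?: string; // event type; clients treat it as "message" when omitted
  id?: string;    // remembered by the client as its last event ID
}

function formatSseEvent(e: SseEvent): string {
  const lines: string[] = [];
  if (e.event !== undefined) lines.push(`event: ${e.event}`);
  if (e.id !== undefined) lines.push(`id: ${e.id}`);
  // A multi-line payload needs one "data:" field per line.
  for (const line of e.data.split("\n")) lines.push(`data: ${line}`);
  // A blank line terminates the event.
  return lines.join("\n") + "\n\n";
}

const wireText = formatSseEvent({ event: "token", id: "42", data: "Hello\nworld" });
```

The server writes strings like `wireText` to the open response one after another; nothing else is needed to frame messages.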

Perfect for One-Way Data Flow

SSE excels at scenarios where data flows in only one direction—from server to client. AI token streaming, like what you see in ChatGPT-style interfaces, is a perfect use case. Live notifications, stock tickers, and CI/CD log tailing also benefit from SSE's simplicity and reliability.

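The built-in auto-reconnect feature means clients recover from network interruptions without special handling code: the browser reopens the connection and sends the ID of the last event it received in a Last-Event-ID header, so a server that tracks event IDs can resume where it left off. SSE is also lightweight to adopt, since it runs over ordinary HTTP with no upgrade handshake, and the text-based format makes debugging straightforward.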
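Two fields in the stream drive that recovery: `id`, which the client remembers and reports when it reconnects, and `retry`, which sets the reconnection delay in milliseconds. The sketch below is a simplified parser showing how a client tracks them. `SseParser` is a hypothetical class for illustration; it assumes `\n` line endings and that the input contains whole events, which a real implementation cannot assume.

```typescript
interface ParsedEvent {
  type: string;
  data: string;
  lastEventId: string;
}

class SseParser {
  lastEventId = "";                          // reported to the server on reconnect
  retryMs: number | undefined = undefined;   // server-suggested reconnect delay

  parse(text: string): ParsedEvent[] {
    const parsed: ParsedEvent[] = [];
    let type = "";
    let data: string[] = [];
    for (const line of text.split("\n")) {
      if (line === "") {
        // Blank line: dispatch the buffered event, if it has any data.
        if (data.length > 0) {
          parsed.push({ type: type || "message", data: data.join("\n"), lastEventId: this.lastEventId });
        }
        type = "";
        data = [];
        continue;
      }
      if (line.charAt(0) === ":") continue; // comment line, often used as keep-alive
      const colon = line.indexOf(":");
      const field = colon === -1 ? line : line.slice(0, colon);
      let value = colon === -1 ? "" : line.slice(colon + 1);
      if (value.charAt(0) === " ") value = value.slice(1); // strip one leading space
      if (field === "event") type = value;
      else if (field === "data") data.push(value);
      else if (field === "id") this.lastEventId = value;
      else if (field === "retry" && /^\d+$/.test(value)) this.retryMs = Number(value);
    }
    return parsed;
  }
}

const parser = new SseParser();
const parsedEvents = parser.parse(
  "retry: 3000\nid: 7\nevent: token\ndata: Hel\ndata: lo\n\n: keep-alive\ndata: plain\n\n"
);
```

In a browser, EventSource does all of this for you; the point of the sketch is that resuming after a drop only works if the server actually emits `id` fields and honors the ID it gets back.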

Limitations to Consider

However, SSE is unidirectional: clients cannot send data back over the same connection and need a separate channel, such as ordinary HTTP requests, for anything client-to-server. The event stream is UTF-8 text only, so binary data such as images, audio, or video has to be encoded as text (for example Base64), which adds size and processing overhead. For chat applications or gaming, where bidirectional communication is essential, SSE falls short.

WebSocket: The Full-Duplex Champion

WebSocket is what most developers reach for when they say "real-time." It starts as an HTTP request, performs a protocol upgrade handshake, and then becomes a persistent, full-duplex TCP connection. Both sides can send frames at any time with minimal overhead.

Where WebSocket Shines

Browser-based multiplayer games often rely on WebSocket for real-time player interactions. Collaborative editing tools like Figma and Notion use it to synchronize changes across multiple users instantly. Live chat applications and IoT device control systems benefit from the bidirectional nature that allows immediate command and response.

The low latency and support for binary data make WebSocket ideal for applications where every millisecond counts. Once the connection is established, the overhead is minimal compared to HTTP-based approaches.
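To put a number on "minimal", the sketch below computes the size of a WebSocket frame header from the base framing rules in RFC 6455. The function name `wsFrameHeaderBytes` is a hypothetical helper; the byte counts follow the specification.

```typescript
function wsFrameHeaderBytes(payloadBytes: number, fromClient: boolean): number {
  let header = 2; // one byte for FIN/opcode, one for the mask bit and 7-bit length
  if (payloadBytes > 65535) header += 8;    // 64-bit extended payload length
  else if (payloadBytes > 125) header += 2; // 16-bit extended payload length
  if (fromClient) header += 4;              // masking key, required client-to-server
  return header;
}

const smallServerFrame = wsFrameHeaderBytes(5, false);
const smallClientFrame = wsFrameHeaderBytes(5, true);
const largeClientFrame = wsFrameHeaderBytes(70000, true);
```

A short message therefore carries 2 bytes of framing from the server or 6 from the client, and even the largest frames top out at 14 bytes, far below the size of a typical set of HTTP request headers.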

Scaling Challenges

WebSocket connections are more complex to scale horizontally. Load balancers need to be configured for long-lived connections (upgrade support, generous idle timeouts, and often sticky sessions), and you typically need a shared pub/sub layer such as Redis so that a message received on one server reaches clients connected to another. The protocol has no automatic reconnection, so you must implement that logic yourself, which adds complexity to client applications.
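A common way to fill the reconnection gap is exponential backoff with jitter, so that thousands of clients dropped by the same outage do not all reconnect at the same instant. The sketch below shows only the delay calculation; `reconnectDelayMs` and its default values (500 ms base, 30 s cap) are assumptions for illustration, not recommendations from any specification.

```typescript
function reconnectDelayMs(
  attempt: number,                      // 0 for the first retry, then 1, 2, ...
  baseMs: number = 500,
  maxMs: number = 30000,
  random: () => number = Math.random    // injectable so the function is testable
): number {
  // Double the ceiling on each attempt, up to a fixed cap.
  const ceiling = Math.min(maxMs, baseMs * Math.pow(2, attempt));
  // "Full jitter": pick a uniformly random delay below the ceiling.
  return Math.floor(random() * ceiling);
}

const firstRetry = reconnectDelayMs(0, 500, 30000, () => 0.5);
const fourthRetry = reconnectDelayMs(3, 500, 30000, () => 0.5);
const cappedRetry = reconnectDelayMs(10, 500, 30000, () => 0.5);
```

A client would typically call this from its close handler, schedule a new connection attempt after the returned delay, and reset the attempt counter once a connection opens successfully.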

QUIC: The Next-Generation Protocol

QUIC represents the future of web transport. Built on UDP, originally developed at Google and later standardized by the IETF as RFC 9000, it powers HTTP/3 and introduces independent stream multiplexing that removes a core limitation of multiplexing over TCP: transport-level head-of-line blocking.

Revolutionary Performance Benefits

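When several streams share one TCP connection, as in HTTP/2, a single lost packet stalls every stream until it is retransmitted, because TCP delivers bytes strictly in order. QUIC streams are independent: a dropped packet only stalls the streams whose data it carried. This architectural difference translates to lower latency under packet loss. QUIC also identifies a connection by a connection ID rather than by IP address and port, so a connection can survive a switch between Wi-Fi and cellular, which helps mobile clients.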
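The difference is easy to see in a toy simulation. The sketch below is a deliberately simplified model, not a protocol implementation: it assumes each packet carries data for exactly one stream, and all names (`Packet`, `deliverableOverTcp`, `deliverableOverQuic`) are hypothetical. It answers one question: given a lost packet, which of the packets that did arrive can be handed to the application before the retransmission shows up?

```typescript
interface Packet {
  seq: number;    // position in the connection-wide send order
  stream: string; // which logical stream the payload belongs to
}

// One ordered byte stream: nothing after the first gap can be delivered.
function deliverableOverTcp(sent: Packet[], lost: number[]): Packet[] {
  const out: Packet[] = [];
  for (const p of sent) {
    if (lost.indexOf(p.seq) !== -1) break;
    out.push(p);
  }
  return out;
}

// Independent streams: a gap only blocks the stream it belongs to.
function deliverableOverQuic(sent: Packet[], lost: number[]): Packet[] {
  const blocked: { [stream: string]: boolean } = {};
  const out: Packet[] = [];
  for (const p of sent) {
    if (blocked[p.stream]) continue;
    if (lost.indexOf(p.seq) !== -1) {
      blocked[p.stream] = true;
      continue;
    }
    out.push(p);
  }
  return out;
}

// Streams A and B interleaved; packet 1 (stream B) is lost.
const sentPackets: Packet[] = [
  { seq: 0, stream: "A" }, { seq: 1, stream: "B" },
  { seq: 2, stream: "A" }, { seq: 3, stream: "B" },
  { seq: 4, stream: "A" }, { seq: 5, stream: "B" },
];
const tcpDelivered = deliverableOverTcp(sentPackets, [1]).map((p) => p.seq);
const quicDelivered = deliverableOverQuic(sentPackets, [1]).map((p) => p.seq);
```

In this run the TCP-style model delivers only packet 0, while the QUIC-style model delivers packets 0, 2, and 4: stream A is unaffected by the loss on stream B.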

High-performance games benefit from QUIC's reduced latency and better mobile performance. Video platforms such as YouTube and CDN providers such as Cloudflare use it to deliver smoother playback. Latency-sensitive APIs can also see improved response times, particularly on lossy networks.

The Maturity Question

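While QUIC offers compelling advantages, server infrastructure still needs to mature. The WebTransport API, which gives browsers access to QUIC streams and datagrams over HTTP/3, is newer and less widely supported than WebSocket or SSE. Implementation complexity is higher than with traditional approaches, and not all environments support HTTP/3 yet; some networks block or throttle UDP, so a fallback to a TCP-based transport is usually still needed.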

Making the Right Choice

Choosing between these technologies depends on your specific requirements:

Start with SSE if you need simple server-to-client streaming and want minimal complexity. It's perfect for notifications, live updates, and any scenario where bidirectional communication isn't required.

Choose WebSocket when you need full-duplex communication and can handle the scaling complexity. It's the go-to choice for chat, gaming, and collaborative applications.

Consider Long Polling when you need maximum compatibility and can tolerate higher latency. It's a reliable fallback when more sophisticated options aren't available.

Look to QUIC when performance is paramount and you're building for the future. It's ideal for high-performance applications where the benefits justify the additional complexity.
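The four recommendations above can be condensed into a small decision function. This is only one possible reading: the `Requirements` fields and the order in which they are checked are assumptions made for the sketch, and a real decision will weigh more factors than four booleans.

```typescript
type Transport = "SSE" | "WebSocket" | "Long Polling" | "QUIC";

interface Requirements {
  bidirectional: boolean;     // does the client need to push data too?
  restrictedNetwork: boolean; // legacy clients or proxies where only plain HTTP is dependable
  latencyCritical: boolean;   // performance justifies extra implementation complexity
  http3Available: boolean;    // servers and clients can actually speak HTTP/3
}

function chooseTransport(r: Requirements): Transport {
  // Compatibility constraints trump everything else.
  if (r.restrictedNetwork) return "Long Polling";
  // QUIC only when the performance need is real and the stack supports it.
  if (r.latencyCritical && r.http3Available) return "QUIC";
  // Full-duplex needs go to WebSocket.
  if (r.bidirectional) return "WebSocket";
  // Otherwise the simplest option wins.
  return "SSE";
}

const dashboardChoice = chooseTransport({
  bidirectional: false, restrictedNetwork: false, latencyCritical: false, http3Available: false,
});
const chatChoice = chooseTransport({
  bidirectional: true, restrictedNetwork: false, latencyCritical: false, http3Available: false,
});
```

With these inputs a read-only dashboard lands on SSE and a chat application on WebSocket, matching the guidance above.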

Practical Implementation

The choice between these technologies often comes down to your specific use case and infrastructure constraints. Many modern applications use a combination—SSE for notifications, WebSocket for real-time collaboration, and QUIC for performance-critical features.

Understanding the trade-offs between complexity, performance, and compatibility helps you make informed decisions. The right choice balances your application's real-time requirements with your team's capacity to implement and maintain the solution.

As web technologies continue to evolve, QUIC and HTTP/3 will likely become the standard for new applications, but the mature, well-understood options remain valuable for many scenarios. The key is matching the technology to your specific needs rather than chasing the newest option.
