Stack Overflow has announced the general availability of Ingestion in Stack Internal 2026.3, a feature that converts scattered organizational content into verified, AI-ready knowledge units and reduces manual curation overhead.

The 2026.3 release from Stack Overflow marks a significant milestone for enterprise knowledge management with the general availability of Ingestion in Stack Internal. This new feature addresses a common challenge in technical organizations: the fragmentation of valuable knowledge across various platforms and file formats. Rather than leaving institutional data siloed and inaccessible, Ingestion provides a systematic approach to converting scattered information into trusted, structured knowledge.

Transforming Raw Data into Structured Knowledge

Ingestion represents a fundamental shift in how organizations approach their internal knowledge repositories. Instead of manual content creation and curation, the feature automates the initial processing of unstructured information while maintaining quality through expert validation. The AI pipeline intelligently chunks, cleans, and converts raw text into structured, atomic Q&A pairs that are automatically tagged, mapped to users, and confidence-scored before routing to subject matter experts for final review.

This automation transforms what would typically be hours of manual work into a streamlined process. By converting fragmented, unstructured content into verified units of knowledge, institutional data becomes optimized for both human discovery and AI retrieval. The result is a knowledge base that's both comprehensive and reliable.
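The announcement does not document the pipeline's internals, so the following is only a minimal sketch of the shape of the data it describes: raw text is chunked and cleaned, and each resulting Q&A candidate carries tags, a mapped user, and a confidence score before it reaches a reviewer. Every name here (`QACandidate`, `chunk_paragraphs`, `route_to_reviewers`, the 0.0 to 1.0 score range, the "unassigned" queue) is a hypothetical stand-in, not Stack Internal's actual schema or algorithm.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class QACandidate:
    """Hypothetical record for one generated Q&A pair awaiting review."""
    question: str
    answer: str
    tags: List[str] = field(default_factory=list)
    suggested_owner: Optional[str] = None  # assumed: the user the pair is mapped to
    confidence: float = 0.0                # assumed: score between 0.0 and 1.0


def chunk_paragraphs(raw_text: str) -> List[str]:
    """Split on blank lines and collapse whitespace.

    A simple stand-in for the chunk-and-clean step; the real pipeline
    is AI-driven and almost certainly more sophisticated.
    """
    chunks = []
    for block in raw_text.split("\n\n"):
        cleaned = " ".join(block.split())
        if cleaned:
            chunks.append(cleaned)
    return chunks


def route_to_reviewers(candidates: List[QACandidate]) -> Dict[str, List[QACandidate]]:
    """Group candidates by mapped user, least confident first in each queue."""
    queues: Dict[str, List[QACandidate]] = {}
    for candidate in candidates:
        owner = candidate.suggested_owner or "unassigned"
        queues.setdefault(owner, []).append(candidate)
    for queue in queues.values():
        queue.sort(key=lambda c: c.confidence)
    return queues
```

Sorting each queue by ascending confidence is a design choice for this sketch: it puts the pairs most likely to need correction in front of the reviewer first. The source only states that pairs are confidence-scored and routed to subject matter experts, not how they are ordered.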

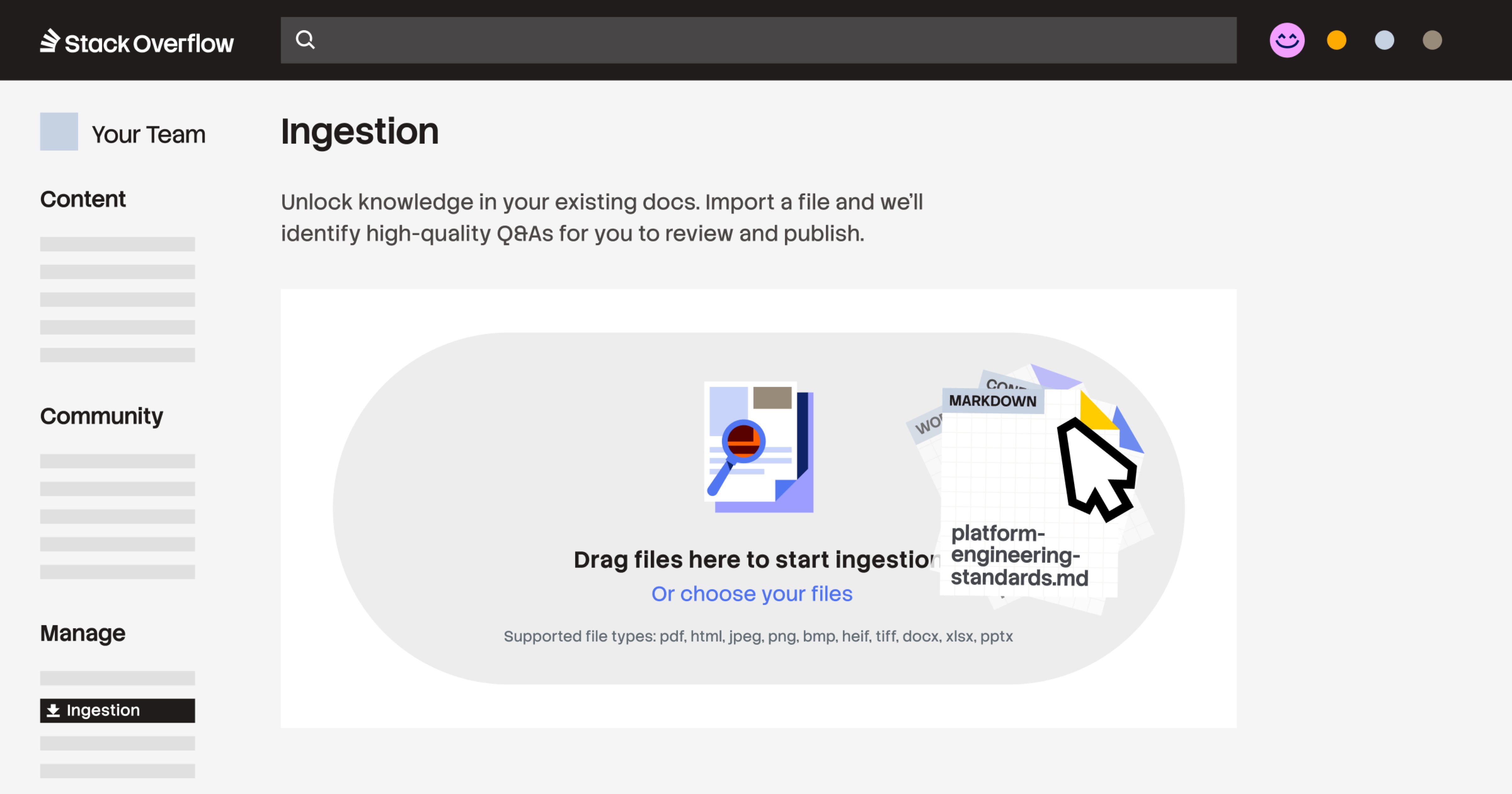

Technical Implementation and File Support

From a technical perspective, Ingestion offers flexible integration options. The system supports uploads of various file formats including PDFs, HTML, Markdown files, images (.jpeg, .jpg, .png, .bmp, .heif, .tiff), and Microsoft Office documents (.docx, .xlsx, .pptx). Organizations can automate high-volume migrations via a POST /ingest/file API endpoint or use the drag-and-drop interface directly in the web application.

This technical flexibility ensures that regardless of where knowledge currently lives within an organization, it can be efficiently imported and structured. The API endpoint enables integration with existing document management systems, while the web interface provides accessibility for users who prefer a more visual approach to content migration.
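For teams scripting a migration, a request to the endpoint might be assembled along the following lines. This is a sketch under stated assumptions, not the documented API: only the `POST /ingest/file` path comes from the announcement. The base URL, bearer-token authentication, the multipart field name `file`, and the `.pdf`, `.html`, and `.md` extensions for the PDF, HTML, and Markdown formats are all assumptions that should be checked against the official API reference.

```python
import mimetypes
import uuid
from pathlib import Path
from urllib.request import Request

# Image and Office extensions are those listed in the announcement;
# ".pdf", ".html", and ".md" are assumed spellings for PDF, HTML, and Markdown.
SUPPORTED_EXTENSIONS = {
    ".pdf", ".html", ".md",
    ".jpeg", ".jpg", ".png", ".bmp", ".heif", ".tiff",
    ".docx", ".xlsx", ".pptx",
}


def is_supported(path: Path) -> bool:
    """Return True if the file extension is in the supported set (case-insensitive)."""
    return path.suffix.lower() in SUPPORTED_EXTENSIONS


def build_ingest_request(base_url: str, token: str, filename: str, content: bytes) -> Request:
    """Build (but do not send) a multipart upload request for one file.

    Assumptions: bearer-token auth and a form field named "file".
    """
    boundary = uuid.uuid4().hex
    content_type = mimetypes.guess_type(filename)[0] or "application/octet-stream"
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="file"; filename="{filename}"\r\n'
        f"Content-Type: {content_type}\r\n\r\n"
    ).encode("utf-8")
    tail = f"\r\n--{boundary}--\r\n".encode("utf-8")
    return Request(
        url=f"{base_url.rstrip('/')}/ingest/file",
        data=head + content + tail,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": f"multipart/form-data; boundary={boundary}",
        },
    )
```

A migration script could walk an export directory, skip anything `is_supported` rejects, and pass each built request to `urllib.request.urlopen`. Checking extensions locally avoids spending an API call on files the service would not accept.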

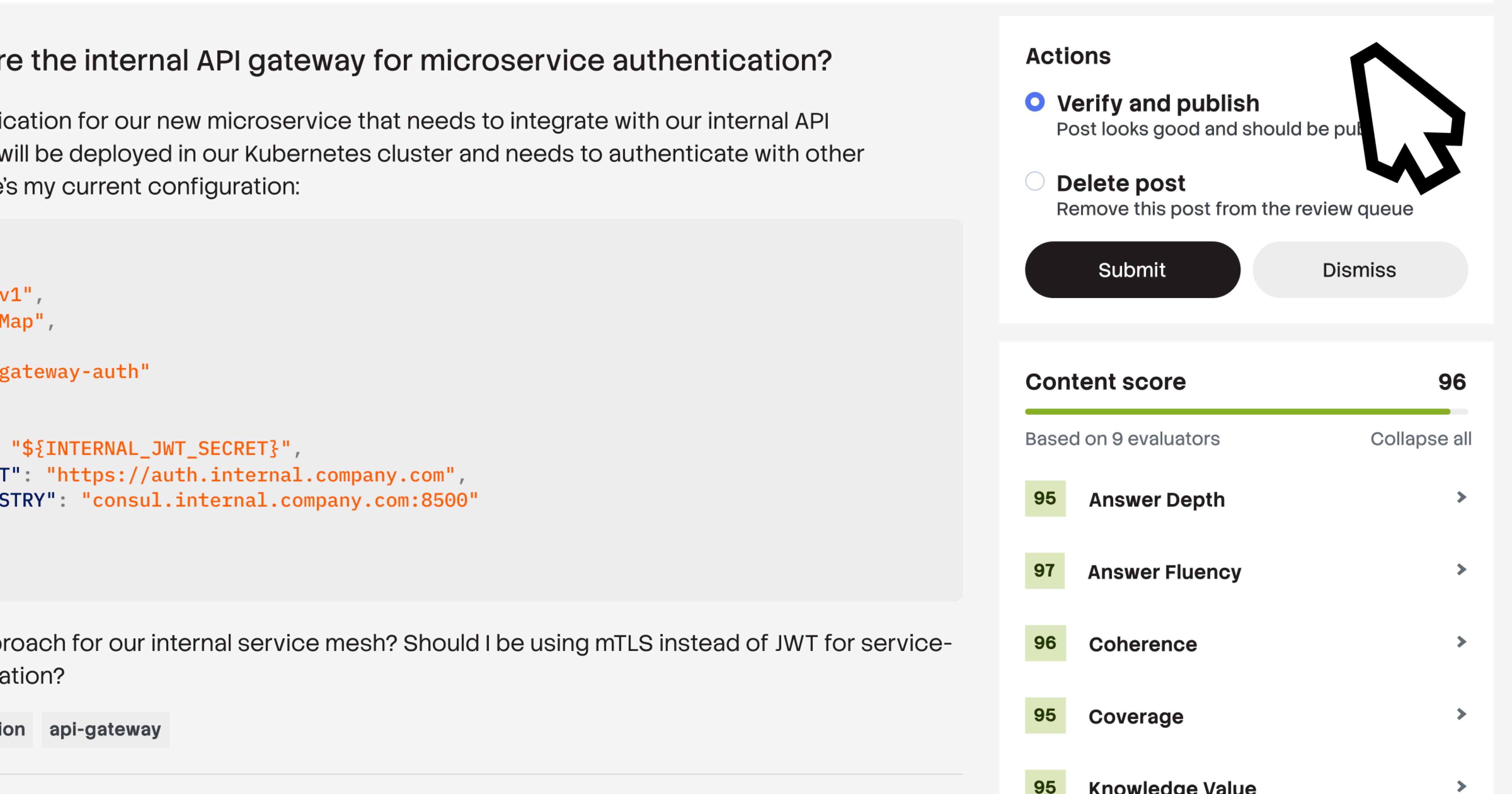

Shifting from Curation to Validation

One of the most significant improvements Ingestion brings is the redefinition of subject matter expert involvement. Rather than creating content from scratch, SMEs, admins, and moderators can focus on validating pre-structured, tagged, and confidence-scored Q&A pairs. This shift from manual curation to expert validation dramatically reduces the time commitment required while maintaining high-quality standards.

By automating the "first draft" of knowledge organization, Ingestion allows organizations to scale their knowledge management efforts without proportionally increasing personnel requirements. The system handles the heavy lifting of content processing, leaving human experts to focus on what they do best: ensuring accuracy and relevance.

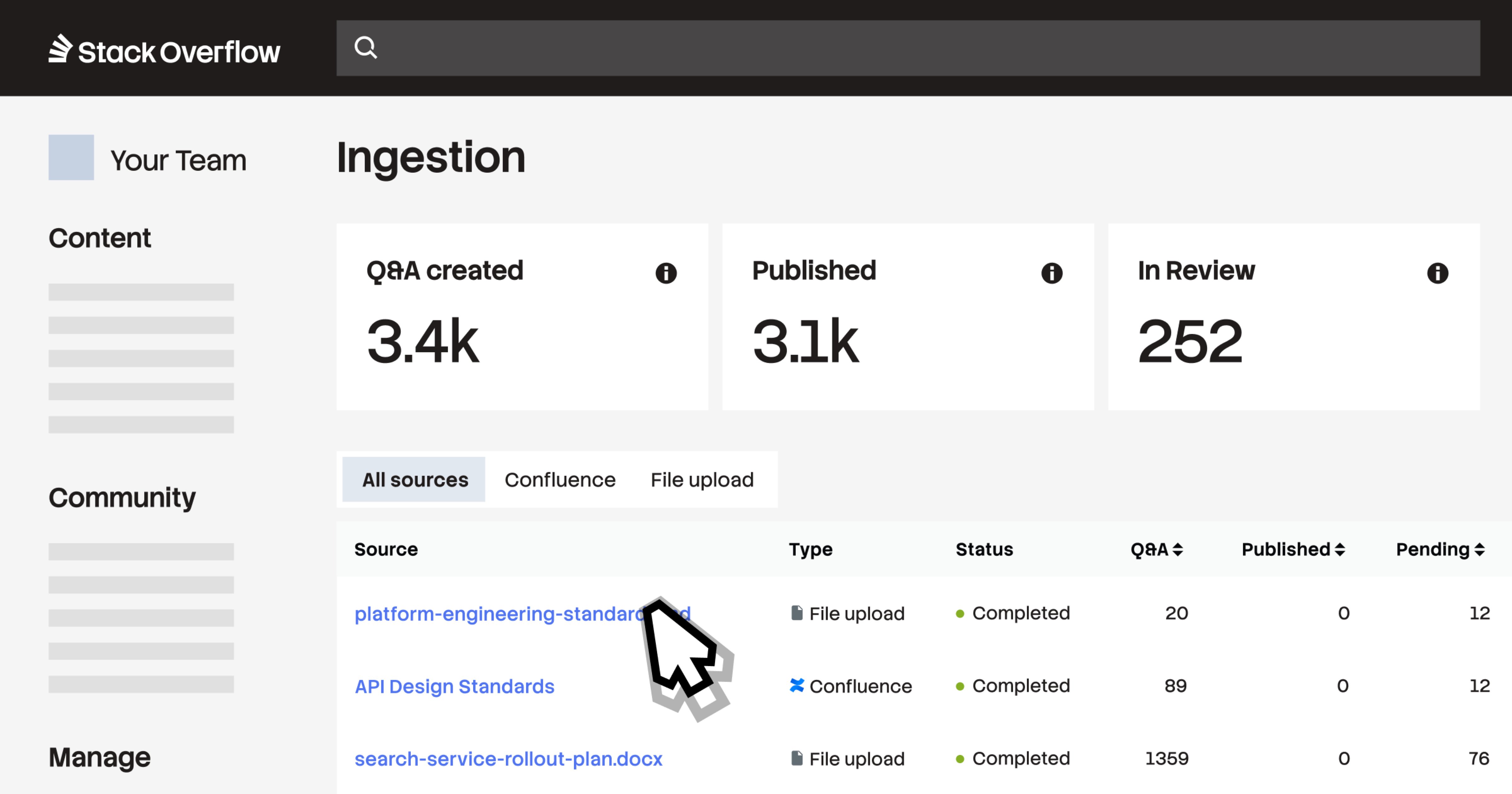

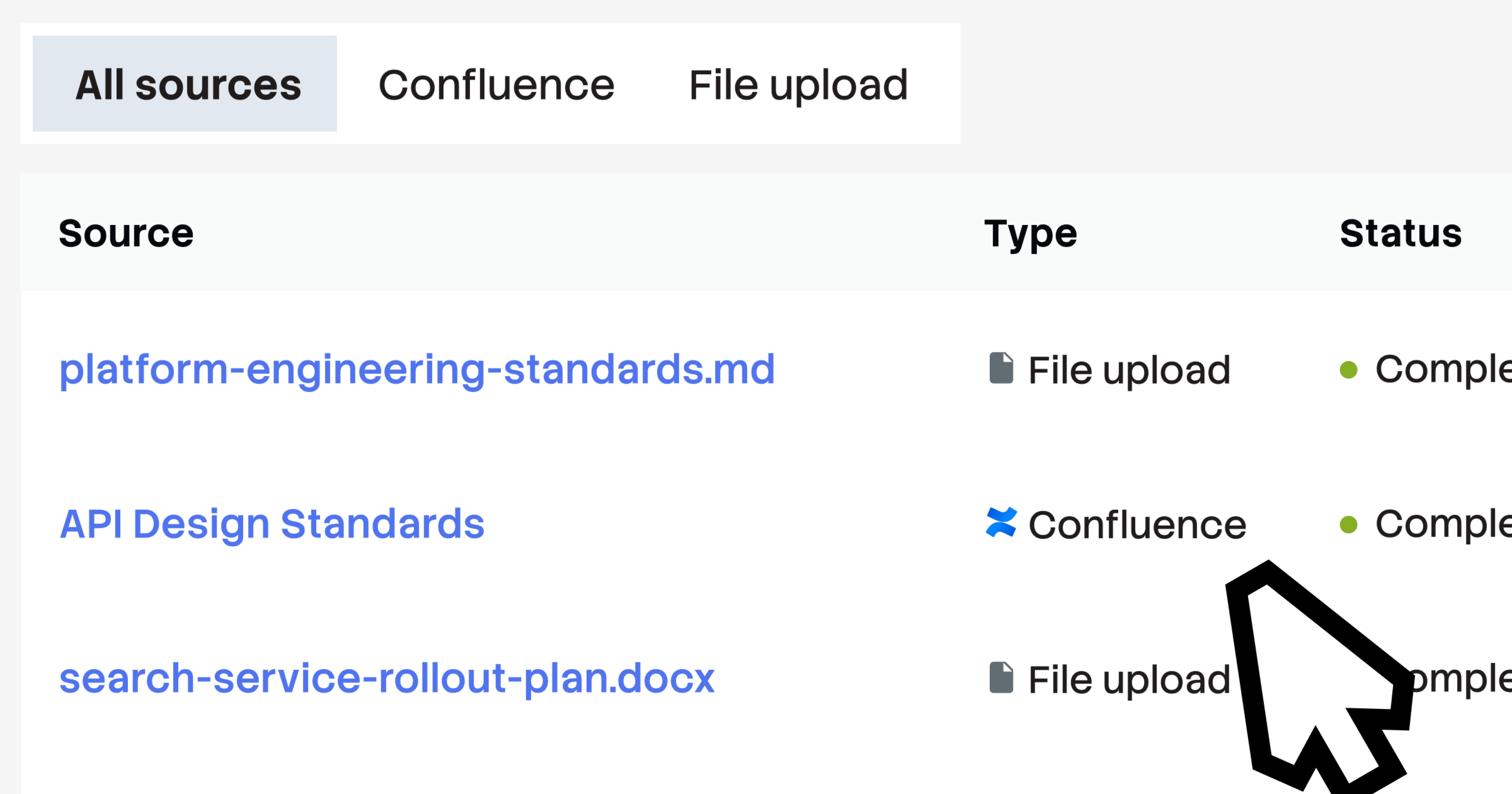

Confluence Integration and Knowledge Traceability

The 2026.3 release includes a particularly valuable integration with Confluence Cloud through a dedicated connector. This feature allows organizations to connect Stack Internal directly to select Confluence spaces, converting static, long-form documentation into SME-verified Q&A pairs that are more easily discoverable, trustworthy, and maintainable at scale.

Crucially, every generated post includes a direct link back to the original Confluence source page, preserving knowledge traceability and allowing teams to reference the original context when needed. This link between structured Q&A and source documentation supports both quick reference and deeper understanding.

AI Tool Integration through MCP

Once validated and published, all converted Q&A posts become accessible through Stack Internal's MCP (Model Context Protocol) server. This integration surfaces expert-vetted context directly within AI tools and IDEs where development teams already work. The continuous stream of verified knowledge ensures that AI assistants have access to accurate, up-to-date information, reducing the repetitive "shoulder taps" that pull senior engineers away from high-value work.

This closed-loop system creates a powerful synergy between human expertise and AI assistance. Knowledge doesn't just sit in a repository; it actively informs and improves AI-driven development processes, creating a virtuous cycle of knowledge improvement and application.
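To make the MCP step concrete: MCP clients and servers exchange JSON-RPC 2.0 messages, and a client invokes a server-side tool with the `tools/call` method. The sketch below builds such a message. The tool name `search_knowledge` and its `query` argument are hypothetical, since the announcement does not list the tools that Stack Internal's MCP server exposes.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and argument, for illustration only.
payload = build_tool_call(
    1,
    "search_knowledge",
    {"query": "How do we rotate the staging database credentials?"},
)
```

In practice an AI assistant or IDE plugin sends this kind of message on the developer's behalf, so the validated Q&A content reaches the model without anyone querying the knowledge base by hand.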

Availability and Practical Implementation

Stack Internal Enterprise customers can begin using Ingestion starting April 29, 2026. Implementation is straightforward, with administrators able to enable the feature through Admin Settings (detailed guides are available for reference). All customers receive 100 Knowledge Objects (approved Q&A pairs) per month at no extra cost, providing an entry point for smaller teams or initial pilots.

For organizations with higher volume needs, Stack Overflow offers enterprise-grade plans with increased capacity. Organizations interested in these enhanced capabilities can contact their Stack Overflow account representative to discuss their specific requirements and implementation strategies.

The broader impact of Ingestion extends beyond simple knowledge management. By making technical context more accessible to the tools that need it, organizations can accelerate decision-making, reduce onboarding time for new team members, and create a more efficient knowledge-sharing culture. The 2026.3 release represents not just a product update, but a fundamental advancement in how technical organizations leverage their collective intelligence.

For organizations looking to implement Ingestion, the release notes provide comprehensive information about all updates in the Stack Internal 2026.3 release, ensuring teams can take full advantage of this new capability and its integration with other Stack Internal features.
