Researchers at UC Irvine have developed a simple but effective 'FlyTrap' attack that uses patterned umbrellas to hijack autonomous drone tracking systems, causing DJI and other drones to be lured closer before being captured or crashed.

Researchers at the University of California, Irvine (UC Irvine) have developed FlyTrap, an attack for capturing or crashing drones that uses specially crafted visual patterns to exploit weaknesses in the Autonomous Target Tracking (ATT) systems found in commercial drones.

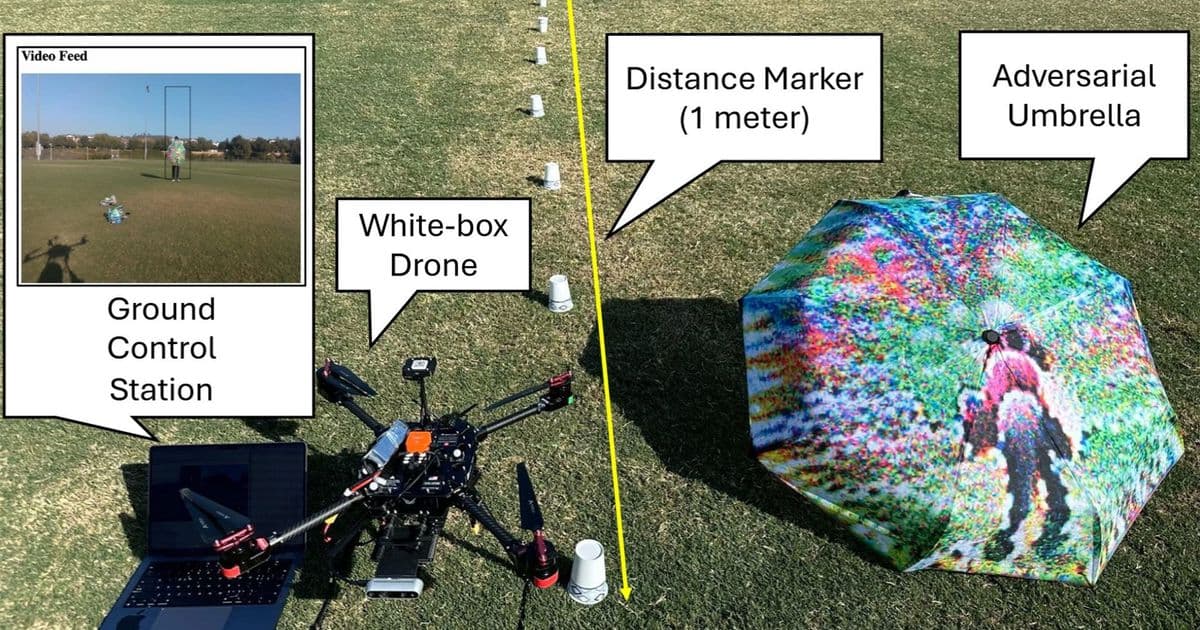

The FlyTrap attack represents a significant breakthrough in adversarial machine learning against autonomous drones. The researchers printed AI-generated patterns on "adversarial umbrellas" that can be easily carried and deployed by anyone. When an autonomous drone locks onto a person carrying the umbrella, the visual pattern performs a next-generation physical distance pulling (PDP) attack that works across multiple angles, even in motion, and in real-world conditions.

In essence, this represents a deliciously lo-fi counter to a high-tech hazard. Umbrellas are also useful if it rains, or for portable shade, making the attack vector both effective and inconspicuous.

How the FlyTrap Attack Works

The attack exploits how autonomous drones interpret visual data. When a drone using ATT systems like Active Track, Motion Track, or Dynamic Track locks onto a target, it continuously adjusts its position to maintain tracking. The FlyTrap pattern manipulates this behavior by causing the drone's neural network tracking systems to interpret the pattern as the subject moving further away.

As the drone approaches the umbrella, the pattern causes the tracker's bounding box around the target to keep shrinking, so the drone keeps moving closer to compensate. This creates a feedback loop in which the drone is physically drawn toward the pattern printed on the umbrella.
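The feedback loop described above can be illustrated with a toy simulation. This is a minimal sketch, not the researchers' method or any vendor's controller: it assumes a pinhole camera model, a simple proportional controller that tries to hold the bounding box at a fixed size, and an attack modeled as a hypothetical cap on the box size the tracker reports. All names and parameter values (`simulate_follow`, `attacked_box_px`, the gain, the 5 m follow distance) are illustrative assumptions.

```python
def apparent_box_height(distance_m, target_height_m=1.7, focal_px=800.0):
    """Pinhole-camera model: box height in pixels scales as 1 / distance."""
    return focal_px * target_height_m / distance_m


def simulate_follow(steps, attacked=False, start_distance_m=5.0,
                    desired_box_px=272.0, attacked_box_px=200.0,
                    gain=0.01, min_distance_m=0.3):
    """Simulate a 'keep the box the same size' follow controller.

    When attacked is True, the reported box is capped below the setpoint,
    a hypothetical stand-in for a pattern that keeps the tracker's box
    small no matter how close the drone gets.
    Returns the drone-to-target distance at every step.
    """
    distance = start_distance_m
    history = [distance]
    for _ in range(steps):
        reported_box = apparent_box_height(distance)
        if attacked:
            reported_box = min(reported_box, attacked_box_px)
        # A box smaller than the setpoint looks like a target that is too
        # far away, so the controller flies forward (distance decreases).
        error_px = desired_box_px - reported_box
        distance = max(min_distance_m, distance - gain * error_px)
        history.append(distance)
    return history


if __name__ == "__main__":
    print("normal  :", [round(d, 2) for d in simulate_follow(8)])
    print("attacked:", [round(d, 2) for d in simulate_follow(8, attacked=True)])
```

In this toy model the unattacked drone holds its 5 m follow distance, while the attacked drone never sees the box reach its setpoint and keeps closing in until it hits the 0.3 m floor.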

Once lured close enough, autonomous drones can be easily ensnared using a net gun, or further induced to crash to Earth. The researchers demonstrated this in field tests, showing how a drone would follow the pattern until it was within capture range.

Tested Drone Models and Effectiveness

The FlyTrap attack was successfully demonstrated on three commercial drone models:

- DJI Mini 4 Pro

- DJI Neo

- HoverAir X1

The research shows that FlyTrap is significantly more effective than prior adversarial-ML techniques like older PDP technology and Targeted Gradient Transfer (TGT). The attack works reliably across different lighting conditions and movement scenarios, making it a practical threat in real-world situations.

Technical Innovation and Security Implications

"Autonomous target tracking represents both tremendous potential and significant risk," said paper co-author Alfred Chen, UC Irvine assistant professor of computer science. Chen notes that autonomous drones are used in areas like border patrol and public safety, but also by malicious actors.

Lead author Shaoyuan Xie, a UC Irvine graduate student researcher in computer science, added: "Our findings highlight urgent needs for security improvements in [autonomous target-tracking] systems before wider deployment in critical infrastructure."

The FlyTrap attack demonstrates how physical-world adversarial attacks can bypass digital security measures. Unlike traditional cybersecurity threats that target software vulnerabilities, this attack exploits fundamental limitations in how neural networks process visual information in three-dimensional space.

Responsible Disclosure and Industry Response

The researchers have notified both DJI and HoverAir about the vulnerabilities in their autonomous tracking systems. They followed standard responsible disclosure practices, giving the companies time to evaluate and potentially address the issues before public disclosure.

This type of research is crucial for understanding the security limitations of autonomous systems as they become more prevalent in critical infrastructure, surveillance, and public safety applications. The simplicity of the attack vector - requiring only a printed pattern on a common umbrella - makes it particularly concerning from a security standpoint.

Future Implications for Drone Technology

The FlyTrap attack highlights the need for more robust autonomous tracking systems that can distinguish between genuine tracking targets and adversarial patterns. Potential mitigations could include multi-sensor fusion approaches that don't rely solely on visual tracking, or machine learning models trained to recognize and ignore adversarial patterns.
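As a rough illustration of the multi-sensor idea, the sketch below cross-checks the distance implied by the visual bounding box against an independent range reading and flags large disagreements. It is an assumption-laden example, not a mitigation proposed in the paper: the pinhole model, the function names, the 1.7 m target height, and the 30% tolerance are all hypothetical.

```python
def visual_distance_m(box_height_px, target_height_m=1.7, focal_px=800.0):
    """Distance implied by the bounding-box height under a pinhole model."""
    return focal_px * target_height_m / box_height_px


def tracking_is_consistent(box_height_px, range_sensor_m, tolerance=0.3):
    """Return True when the vision-based distance and an independent range
    reading agree to within a relative tolerance (hypothetical threshold)."""
    visual = visual_distance_m(box_height_px)
    return abs(visual - range_sensor_m) <= tolerance * range_sensor_m


if __name__ == "__main__":
    # Box and range sensor agree: tracking looks plausible.
    print(tracking_is_consistent(box_height_px=272.0, range_sensor_m=5.0))
    # Box claims the target is far away while the range sensor says 1 m.
    print(tracking_is_consistent(box_height_px=200.0, range_sensor_m=1.0))
```

A drone that detects this kind of disagreement could, for example, stop approaching and hold position, although how a real system should respond is beyond what the article describes.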

As autonomous drone technology continues to advance and find applications in areas like delivery services, infrastructure inspection, and emergency response, understanding and mitigating these types of attacks becomes increasingly important. The research serves as a reminder that as AI systems become more capable, they also become more vulnerable to creative adversarial attacks that exploit their fundamental processing mechanisms.

The full research paper will be presented at the Network and Distributed System Security Symposium (NDSS) in 2026, where the technical details and potential mitigations will be discussed in greater depth with the security research community.
