1Password applied AI‑driven agents to dissect and refactor its multi‑million‑line Go monolith, discovering that deterministic tooling, explicit specifications, and careful sequencing are essential for safe production migrations. The experiment yielded modest productivity gains, highlighted the limits of AI‑generated code, and produced a set of practical lessons for teams adopting agentic refactoring.

Introduction

When 1Password set out to split its long‑standing Go monolith (nicknamed B5) into clearer service boundaries, the scale and sensitivity of the codebase made manual refactoring impractical. The team therefore turned to agentic tooling: a pipeline of AI agents that could analyze, plan, and execute changes across millions of lines of production code. What emerged was not a flawless automation story, but a nuanced portrait of where AI agents excel, where they stumble, and how engineering teams can harness them responsibly.

The agentic analysis layer

The first hurdle was sequencing. In a system that stores passwords and other secrets, extracting a component in the wrong order can introduce silent data loss or subtle runtime failures. To tame this problem, the team built a hybrid analysis stack:

- Go SSA (Static Single Assignment) analysis to map functions, packages, and call graphs.

- SQL parsing to surface data‑flow dependencies across tables.

- DataDog MCP integration to inject live coupling metrics (call frequency, latency, error rates).

These three signals produced a domain ownership map, a coupling graph, and a prioritized extraction order. The resulting plan matched what senior engineers would have guessed: start with the Vault service (its own API and security perimeter), then move to Billing, followed by AuthN/AuthZ, leaving Identity as the core.
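As a rough illustration of how static and runtime signals could be folded into a prioritized extraction order, here is a minimal Go sketch. The `Candidate` fields, the weights in `couplingScore`, and every number in `main` are assumptions invented for this example. The article does not describe the actual scoring 1Password used.

```go
package main

import (
	"fmt"
	"sort"
)

// Candidate holds hypothetical coupling signals for one domain.
// The field names and units are illustrative assumptions.
type Candidate struct {
	Name           string
	InboundCalls   int     // static call edges into the domain (call-graph analysis)
	SharedTables   int     // tables also touched by other domains (SQL parsing)
	CallsPerSecond float64 // live cross-domain call rate (observability data)
}

// couplingScore is lower for domains that look cheaper to extract.
// The weights are arbitrary and exist only to combine the three signals.
func couplingScore(c Candidate) float64 {
	return float64(c.InboundCalls) + 10*float64(c.SharedTables) + c.CallsPerSecond/100
}

// ExtractionOrder returns a copy of cs sorted from least to most coupled.
func ExtractionOrder(cs []Candidate) []Candidate {
	out := append([]Candidate(nil), cs...)
	sort.SliceStable(out, func(i, j int) bool {
		return couplingScore(out[i]) < couplingScore(out[j])
	})
	return out
}

func main() {
	// Made-up numbers, chosen only to exercise the sort.
	order := ExtractionOrder([]Candidate{
		{Name: "Identity", InboundCalls: 900, SharedTables: 12, CallsPerSecond: 5000},
		{Name: "Vault", InboundCalls: 40, SharedTables: 1, CallsPerSecond: 800},
		{Name: "AuthN/AuthZ", InboundCalls: 300, SharedTables: 5, CallsPerSecond: 3000},
		{Name: "Billing", InboundCalls: 120, SharedTables: 3, CallsPerSecond: 200},
	})
	for i, c := range order {
		fmt.Printf("%d. %s (score %.0f)\n", i+1, c.Name, couplingScore(c))
	}
}
```

Because the ranking is a pure function of recorded inputs, rerunning it produces the same order every time, which is the property the team relied on.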

A key insight was that agents were most valuable when they generated deterministic artifacts—the SSA analyzer, the manifest of call sites, the extraction order—rather than being asked to interpret the code on the fly. Once a stable artifact existed, the AI could reason over it without the variability that typically plagues large language models.

Unexpected side effect

The instrumentation required for the analysis also upgraded 1Password’s end‑to‑end transaction visibility in DataDog, a benefit that outlived the refactoring effort.

Human‑to‑agent ratio: a concrete migration

The next experiment tackled a long‑standing cleanup: replacing the panic‑on‑failure pattern of MustBegin database transactions with graceful error handling. Over 3,000 call sites needed updating—a task that had languished in the backlog.
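To make the migration concrete, the following sketch contrasts a panic-on-failure call site with its error-returning replacement. `DB`, `Tx`, `renameItem`, and `ErrUnavailable` are hypothetical stand-ins written for this example. The article does not show B5's real transaction types or which database library provides `MustBegin`.

```go
package main

import (
	"errors"
	"fmt"
)

// Tx and DB are hypothetical stand-ins for the real transaction types.
type Tx struct{}

func (t *Tx) Commit() error   { return nil }
func (t *Tx) Rollback() error { return nil }

// DB simulates an outage when down is true.
type DB struct{ down bool }

var ErrUnavailable = errors.New("database unavailable")

// Begin reports failure through an error value.
func (db *DB) Begin() (*Tx, error) {
	if db.down {
		return nil, ErrUnavailable
	}
	return &Tx{}, nil
}

// MustBegin is the legacy pattern: any failure to open a transaction panics.
func (db *DB) MustBegin() *Tx {
	tx, err := db.Begin()
	if err != nil {
		panic(err)
	}
	return tx
}

// renameItemLegacy shows a call site before migration.
func renameItemLegacy(db *DB) {
	tx := db.MustBegin() // panics if the database is unavailable
	_ = tx.Commit()
}

// renameItem shows the same call site after migration: the failure
// is wrapped with context and returned to the caller.
func renameItem(db *DB) error {
	tx, err := db.Begin()
	if err != nil {
		return fmt.Errorf("rename item: begin transaction: %w", err)
	}
	defer tx.Rollback() // covers any early return added later
	return tx.Commit()
}

func main() {
	fmt.Println(renameItem(&DB{}))
	fmt.Println(renameItem(&DB{down: true}))
}
```

Note that the migrated function gains an `error` return value, so every caller has to change too. That ripple effect is one plausible reason a manifest of call sites matters before any patch is applied.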

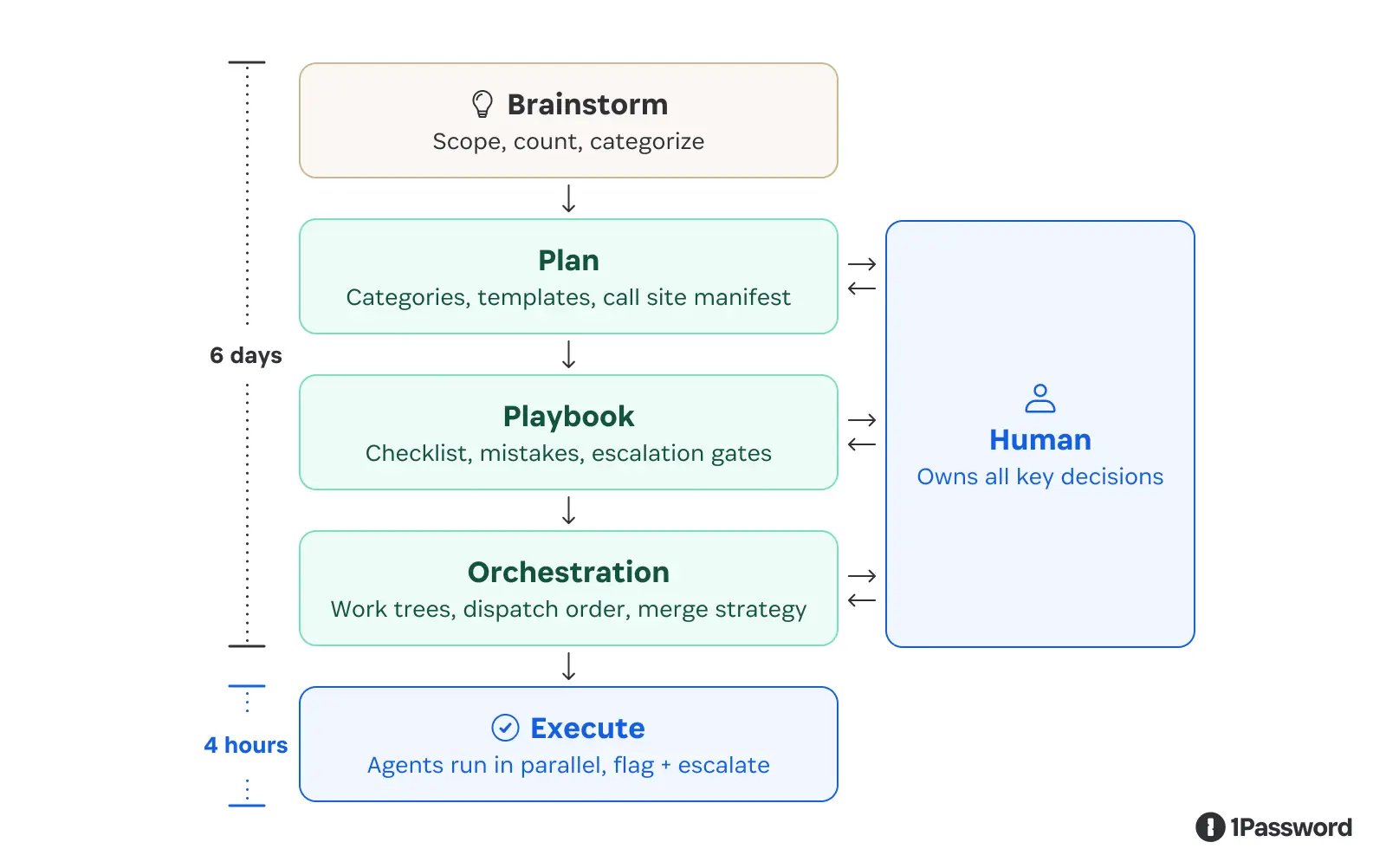

The workflow was deliberately structured:

- Deterministic manifest: SSA generated a complete list of every MustBegin invocation.

- Pattern classification: The manifest was grouped into a handful of recognizable usage patterns.

- Template‑driven patches: For each pattern, a code‑generation template was authored.

- Playbook: A detailed instruction set described how agents should apply patches, what failure modes to watch for, and when to abort and hand off to a human.

- Parallel execution: Multiple agents ran in isolated Git worktrees, preventing cross‑interference.
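The manifest and classification steps above can be approximated with Go's standard library alone. This sketch is a simplified stand-in: it walks the syntax tree with `go/ast` rather than building SSA form as the team did, and it matches any method named `MustBegin` without type information. The `CallSite` type and the two pattern labels are assumptions made up for illustration.

```go
package main

import (
	"fmt"
	"go/ast"
	"go/parser"
	"go/token"
)

// CallSite is one manifest entry. Pattern is a hypothetical label
// that would select which patch template applies.
type CallSite struct {
	Func    string // enclosing top-level function
	Line    int
	Pattern string
}

// returnsError reports whether fn already has a result of type error.
func returnsError(fn *ast.FuncDecl) bool {
	if fn.Type.Results == nil {
		return false
	}
	for _, field := range fn.Type.Results.List {
		if id, ok := field.Type.(*ast.Ident); ok && id.Name == "error" {
			return true
		}
	}
	return false
}

// BuildManifest parses src and lists every call to a method named
// MustBegin, in source order.
func BuildManifest(filename, src string) ([]CallSite, error) {
	fset := token.NewFileSet()
	file, err := parser.ParseFile(fset, filename, src, 0)
	if err != nil {
		return nil, err
	}
	var sites []CallSite
	for _, decl := range file.Decls {
		fn, ok := decl.(*ast.FuncDecl)
		if !ok || fn.Body == nil {
			continue
		}
		// Functions that already return error can propagate the failure;
		// the rest need a signature change first.
		pattern := "needs-signature-change"
		if returnsError(fn) {
			pattern = "propagate-error"
		}
		ast.Inspect(fn.Body, func(n ast.Node) bool {
			call, ok := n.(*ast.CallExpr)
			if !ok {
				return true
			}
			if sel, ok := call.Fun.(*ast.SelectorExpr); ok && sel.Sel.Name == "MustBegin" {
				sites = append(sites, CallSite{
					Func:    fn.Name.Name,
					Line:    fset.Position(call.Pos()).Line,
					Pattern: pattern,
				})
			}
			return true
		})
	}
	return sites, nil
}

const sample = `package demo

func save(db *DB) error {
	tx := db.MustBegin()
	return tx.Commit()
}

func warm(db *DB) {
	tx := db.MustBegin()
	tx.Rollback()
}
`

func main() {
	sites, err := BuildManifest("demo.go", sample)
	if err != nil {
		panic(err)
	}
	for _, s := range sites {
		fmt.Printf("%s:%d %s\n", s.Func, s.Line, s.Pattern)
	}
}
```

A syntax-only match like this would also catch unrelated methods that happen to share the name, which is one reason a type-aware analysis such as SSA is the safer basis for a production manifest.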

The actual code changes completed in a matter of hours; the bulk of the effort lay in building the tooling and writing the specification. This ratio—specification first, execution second—proved to be the decisive factor for speed and accuracy.

When agents hit the limits

The most ambitious test was extracting a full service from the monolith. Even a modest service requires coordinated schema migrations, read/write path adjustments, deployment sequencing, and shared contract updates. The agents repeatedly ran into two categories of failure:

- Sequencing violations – e.g., back‑filling a new UUID column before the code that writes new rows was updated, leading to silent data loss.

- Speculative assumptions – when context was missing, the model guessed that an identifier was a ULID and propagated that assumption, forcing a full rollback.

These missteps persisted despite explicit ordering constraints in the prompt. The root cause was the non‑deterministic behavior of language models when asked to make decisions that must preserve global invariants: a constraint stated in a prompt is a suggestion the model may or may not honor, not a check that fails when violated. The team found that the most reliable pattern was to let agents produce deterministic artifacts (plans, manifests) and then enforce those artifacts with traditional tooling.
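One way to picture "enforcing an artifact with traditional tooling" is a small validator that rejects any proposed step order that breaks a declared constraint. The sketch below is an assumption-laden illustration: the `Constraint` type, the `ValidateOrder` function, and the step names are hypothetical and only loosely modeled on the UUID back-fill example above.

```go
package main

import "fmt"

// Constraint says step Before must run earlier than step After.
type Constraint struct{ Before, After string }

// ValidateOrder checks a proposed step order against every constraint
// and returns an error describing the first problem it finds.
func ValidateOrder(order []string, constraints []Constraint) error {
	pos := make(map[string]int, len(order))
	for i, step := range order {
		if _, dup := pos[step]; dup {
			return fmt.Errorf("step %q appears more than once", step)
		}
		pos[step] = i
	}
	for _, c := range constraints {
		before, okBefore := pos[c.Before]
		after, okAfter := pos[c.After]
		if !okBefore || !okAfter {
			return fmt.Errorf("constraint %q -> %q references a missing step", c.Before, c.After)
		}
		if before >= after {
			return fmt.Errorf("%q must run before %q", c.Before, c.After)
		}
	}
	return nil
}

func main() {
	// Hypothetical step names for adding a UUID column.
	constraints := []Constraint{
		{Before: "add-uuid-column", After: "deploy-writers"},
		{Before: "deploy-writers", After: "backfill-uuid"},
		{Before: "backfill-uuid", After: "switch-reads"},
	}

	good := []string{"add-uuid-column", "deploy-writers", "backfill-uuid", "switch-reads"}
	fmt.Println("good plan:", ValidateOrder(good, constraints))

	// The failure described in the article: back-filling before the
	// code that writes new rows has been updated.
	bad := []string{"add-uuid-column", "backfill-uuid", "deploy-writers", "switch-reads"}
	fmt.Println("bad plan:", ValidateOrder(bad, constraints))
}
```

The design point is that the check runs outside the model: an agent can propose any order it likes, but a plan that violates a constraint never reaches execution.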

Productivity gains for this class of work settled around 20 to 30%, a modest improvement that nonetheless validated the approach for well‑bounded tasks.

Lessons for teams adopting AI agents

- The bottleneck is not code generation – agents excel at reading and drafting code, but managing ordered, irreversible changes (schema migrations, deployment pipelines) remains a human‑centric challenge.

- Contain non‑determinism – use agents to build stable tools, then constrain downstream work to the outputs of those tools. This creates a predictable foundation even if the model itself is stochastic.

- Specify everything – vague prompts lead to implicit assumptions. Include invariants, ordering constraints, and explicit escalation paths for any scenario that falls outside the defined patterns.

- Parallelism requires isolation – running many agents concurrently only speeds up work when the underlying changes are independent and conflict‑free.
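The isolation point in the last lesson can be sketched as a deterministic work splitter that never hands the same package directory to two workers. This is a hypothetical illustration: the `Shard` function and the file paths are invented, and treating a package directory as the unit of independence is an assumption. Real changes can still conflict across packages, for example through a shared interface.

```go
package main

import (
	"fmt"
	"path"
	"sort"
)

// Shard splits files across workers so that all files in one package
// directory land on the same worker. Directories are sorted first so
// that the assignment is identical on every run.
func Shard(files []string, workers int) [][]string {
	if workers < 1 {
		workers = 1
	}
	byDir := make(map[string][]string)
	for _, f := range files {
		dir := path.Dir(f)
		byDir[dir] = append(byDir[dir], f)
	}
	dirs := make([]string, 0, len(byDir))
	for dir := range byDir {
		dirs = append(dirs, dir)
	}
	sort.Strings(dirs)

	shards := make([][]string, workers)
	for i, dir := range dirs {
		group := byDir[dir]
		sort.Strings(group)
		shards[i%workers] = append(shards[i%workers], group...)
	}
	return shards
}

func main() {
	// Invented paths standing in for manifest entries.
	files := []string{"billing/a.go", "vault/x.go", "billing/b.go", "auth/z.go"}
	for i, shard := range Shard(files, 2) {
		fmt.Printf("worker %d: %v\n", i, shard)
	}
}
```

Each shard could then be handed to one agent in its own Git worktree, as in the migration described earlier.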

Implications for 1Password’s engineering culture

The experience reshaped how 1Password views AI within its workflow. Rather than treating agents as autonomous coders, the organization now positions them as assistants that generate deterministic scaffolding. Engineers retain responsibility for defining system boundaries, modeling dependencies, and verifying sequencing. The most valuable engineering effort has shifted from writing boilerplate to crafting precise specifications and guardrails that enable safe automation.

The team is actively rolling out these practices across the organization, building playbooks for multi‑agent execution, and refining the balance between human judgment and AI assistance. The open problems—live‑traffic decomposition, coordinated multi‑service extraction, and robust escalation mechanisms—are where 1Password is investing its most interesting engineering talent.

If you are intrigued by the intersection of AI, large‑scale systems, and safe automation, 1Password is hiring.
