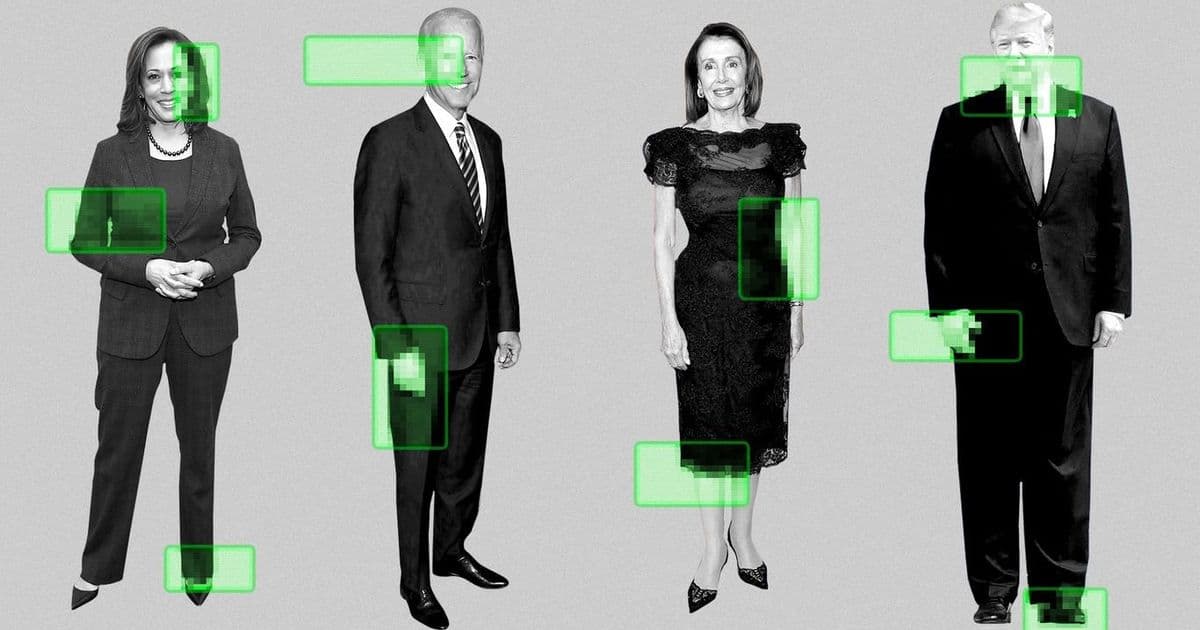

YouTube is expanding its likeness detection tool to a select group of government officials, political candidates, and journalists to combat unauthorized AI impersonation, a notable step in how the platform moderates synthetic content.

According to Axios, the video-sharing platform is rolling out enhanced detection capabilities aimed at AI-generated content that impersonates public figures. The tool, which was previously available in a limited capacity, will now be extended to help manage the growing problem of unauthorized AI-generated impersonations.

The expansion comes amid increasing concerns about the misuse of AI technology to create convincing fake content featuring public figures. Government officials, political candidates, and journalists are particularly vulnerable to such impersonation attempts, which can be used to spread misinformation or damage reputations.

YouTube's approach involves using advanced detection algorithms to identify content that may be using someone's likeness without authorization. The tool helps flag potential impersonation attempts before they can be widely distributed on the platform.

This move by YouTube reflects a broader industry trend toward implementing safeguards against AI-generated misinformation. As generative AI tools become more sophisticated and accessible, platforms are under increasing pressure to develop systems that can distinguish between authentic and synthetic content.

For government officials and political candidates, the expanded tool could help prevent the spread of AI-generated video or audio that falsely portrays them saying or doing things they never said or did. This is particularly important in the context of elections and political discourse.

Journalists, who often find themselves targets of online harassment and misinformation campaigns, may also benefit from the enhanced protection. The tool could help prevent the creation and distribution of fake news reports or interviews that use a journalist's likeness without permission.

The implementation of this expanded detection system raises questions about the balance between preventing impersonation and preserving freedom of expression. YouTube will need to carefully calibrate its detection thresholds to avoid over-censoring legitimate content while still effectively combating malicious impersonation attempts.
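The threshold trade-off described above can be illustrated with a minimal sketch. It does not reflect YouTube's actual system: the similarity scores, the labels, and the `flag_stats` helper are all hypothetical and invented for illustration. It simply shows that raising a flagging threshold tends to reduce wrongly flagged legitimate content (higher precision) while missing more real impersonations (lower recall).

```python
from typing import List, Tuple

# Hypothetical (similarity_score, is_impersonation) pairs.
# These values are invented for illustration, not real data.
SAMPLES: List[Tuple[float, bool]] = [
    (0.95, True), (0.90, True), (0.85, False), (0.80, True),
    (0.70, False), (0.60, True), (0.40, False), (0.20, False),
]


def flag_stats(samples: List[Tuple[float, bool]], threshold: float) -> Tuple[float, float]:
    """Return (precision, recall) when every score >= threshold is flagged."""
    flagged = [label for score, label in samples if score >= threshold]
    true_pos = sum(flagged)                        # flagged items that are impersonations
    total_pos = sum(label for _, label in samples) # all impersonations in the sample
    precision = true_pos / len(flagged) if flagged else 1.0
    recall = true_pos / total_pos if total_pos else 0.0
    return precision, recall


if __name__ == "__main__":
    for t in (0.5, 0.75, 0.9):
        p, r = flag_stats(SAMPLES, t)
        print(f"threshold={t:.2f} precision={p:.2f} recall={r:.2f}")
```

On this toy data, a low threshold catches every impersonation but also flags legitimate videos, while a high threshold flags only impersonations but misses half of them. Any real system would face the same tension on far larger and messier data.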

This development is part of YouTube's ongoing efforts to address the challenges posed by AI-generated content. The platform has been working on various tools and policies to manage the impact of AI technology on its ecosystem, including content labeling requirements and detection systems for synthetic media.

The expansion of the likeness detection tool represents a proactive step by YouTube to address emerging threats before they become widespread problems. As AI technology continues to advance, platforms will likely need to continually update and refine their detection capabilities to keep pace with new forms of impersonation and misinformation.

For users who fall into the protected categories, YouTube is expected to provide information on how to report potential impersonation attempts and what recourse is available if their likeness is used without authorization. The platform may also implement verification systems to help distinguish between authentic and potentially fake content featuring these public figures.

This move by YouTube could influence other social media platforms to implement similar protections for high-profile individuals. As the technology for creating convincing AI impersonations becomes more accessible, comprehensive detection and prevention systems may become standard across major online platforms.

The effectiveness of YouTube's expanded tool will likely be closely watched by both users and industry observers. Success in preventing unauthorized AI impersonations could set a precedent for how platforms handle synthetic media and protect public figures from digital manipulation.

As AI technology continues to evolve, the challenge of distinguishing between authentic and synthetic content will remain a critical issue for online platforms. YouTube's expanded likeness detection tool represents one approach to addressing this challenge, but it's likely just one part of a broader set of solutions that will be needed to maintain trust and authenticity in digital media.

For now, the expansion of this tool to government officials, political candidates, and journalists marks an important step in YouTube's efforts to combat AI impersonation and protect vulnerable public figures from digital manipulation.
