AMD and Meta have announced a landmark partnership to deploy 6 gigawatts of AI compute capacity, featuring custom AMD Instinct GPUs and next-generation EPYC CPUs, marking a major shift in Meta's infrastructure strategy and validating AMD's position in the AI hardware market.

The tech world is buzzing with the announcement of a massive strategic partnership between AMD and Meta, one of the largest AI infrastructure deals ever revealed. The companies have inked an agreement to deploy 6 gigawatts of AI compute capacity, with the first 1GW scheduled for deployment in the second half of 2026. This deal represents a significant diversification of Meta's compute infrastructure beyond traditional suppliers and positions AMD as a serious competitor in the AI GPU space.

At the heart of this deployment is the AMD Helios rack-scale architecture, developed jointly by AMD and Meta through the Open Compute Project. This infrastructure was specifically designed for scalable rack-level AI deployments, and the companies showcased different versions of the AMD Helios MI450 Rack at the recent OCP Summit. What makes this particularly noteworthy is that Meta will be deploying custom AMD Instinct GPUs based on the MI450 architecture, rather than standard off-the-shelf parts. This level of customization demonstrates the depth of collaboration between the two companies, with Meta essentially getting hardware tailored to its specific workloads.

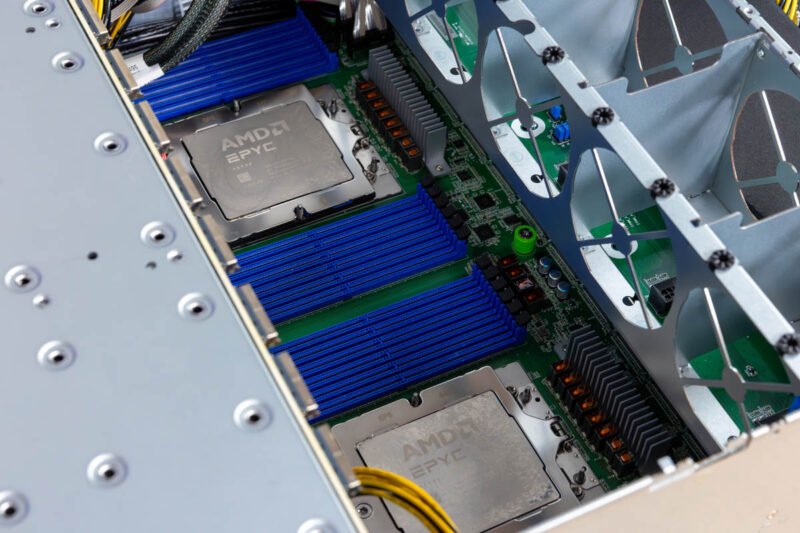

The financial structure of this deal mirrors AMD's recent agreement with OpenAI. AMD has issued Meta a performance-based warrant for up to 160 million shares of AMD common stock. The warrant vests in tranches tied to deployment milestones, starting with the initial 1GW deployment and scaling up to the full 6GW. Vesting is further tied to AMD achieving certain stock price thresholds, and exercising the warrant is contingent on Meta achieving key technical and commercial milestones. During the conference call, AMD emphasized that Meta is already a significant EPYC CPU customer, with EPYC processors powering many of Meta's core services.

This partnership marks a significant evolution in Meta's relationship with AMD. Historically, even when AMD's EPYC 7002 "Rome" generation offered substantial advantages over Intel's 2nd Gen Xeon Scalable "Cascade Lake" (64 cores versus 28 cores, more than double the PCIe I/O, and greater memory bandwidth), Meta would only publicly showcase Intel platforms. The company shared details about its AMD EPYC-based North Dome CPU and platform only after years of internal deployment. That Meta is now publicly committing to AMD at this scale represents a major shift in strategy.

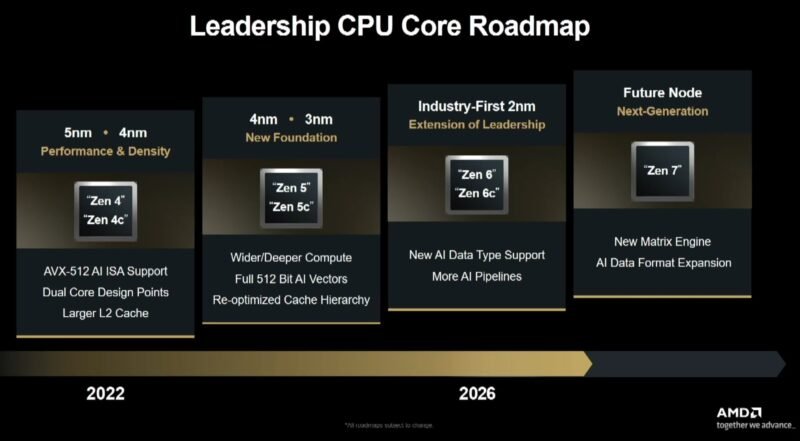

On the CPU side, Meta is a long-time AMD partner, having deployed millions of AMD EPYC CPUs across its global infrastructure. AMD revealed that Meta will be a lead customer for the 6th Generation AMD EPYC "Venice" CPUs, as well as for "Verano," the next-generation EPYC processor designed with workload-specific optimizations. Lisa Su noted during the call that the CPU market is "on fire" right now, reflecting the intense demand for high-performance computing.

What makes this deal particularly significant is what it reveals about Meta's broader strategy. Mark Zuckerberg has been clear that Meta wants to build "artificial general intelligence" and needs massive compute infrastructure to achieve this goal. By partnering with AMD, Meta is diversifying its supplier base while still getting purpose-built hardware. This represents a strong vote of confidence in AMD's roadmap and its ability to deliver at scale across multiple generations.

The software side of this equation is equally important. These deployments will run ROCm, AMD's open-source GPU computing platform. AMD has been working diligently to improve ROCm compatibility and performance, and having a customer like Meta deploying at this scale will only accelerate those improvements. With Meta and OpenAI both committed, the industry has moved well past the era of questioning whether AMD's software stack will be mature enough for production-scale deployments. There is now too much money behind the hardware, across multiple generations, for software to remain a long-term bottleneck.

Looking back at AMD's strategic moves, the ZT Systems acquisition appears to be paying significant dividends. At the time of that deal, AMD emphasized that it was needed to target large-scale deployments exactly like this one. A second 6GW deal, following the OpenAI agreement, is precisely the type of engagement that justifies that acquisition and demonstrates AMD's ability to execute on its vision for the AI infrastructure market.

The broader impact of this deal should not be underestimated. Meta is one of the largest operators of AI infrastructure in the world, and its endorsement of AMD Instinct GPUs carries significant weight in the market. The deal provides validation that AMD can compete at the highest levels of AI computing, challenging NVIDIA's dominance in the space. While NVIDIA will undoubtedly continue to sell a massive number of chips, AMD is clearly shaping up as a winner in this market as well.

This transformational deal represents a major milestone for both companies and for the broader AI infrastructure ecosystem. For AMD, it validates years of investment in GPU technology and software development. For Meta, it provides the compute capacity needed to pursue its ambitious AI goals while reducing dependence on any single supplier. As the AI race continues to accelerate, partnerships like this one will likely become increasingly common as companies seek to secure the infrastructure needed to compete at the cutting edge of artificial intelligence development.
