Silicon photonics startup Ayar Labs partners with ODM Wiwynn to develop a rack-scale reference platform that can stitch together over 1,024 GPUs using optical interconnects, dramatically reducing power consumption compared to copper-based systems.

Silicon photonics startup Ayar Labs has partnered with ODM Wiwynn to develop a rack-scale reference platform capable of stitching together more than 1,024 GPUs into a single unified system. The announcement, made on March 11, 2026, promises higher compute density and much lower power consumption than traditional copper-based systems.

The Power Problem with Current GPU Racks

Modern GPU-centric datacenters face a fundamental challenge: as interconnect speeds increase, copper cables become increasingly impractical. At the speeds required for high-performance computing and AI workloads, copper can maintain signal integrity for only a few feet before the signal degrades too far. This limitation forces datacenter operators to pack everything (GPUs, CPUs, switches, and storage) into single, densely packed racks.

The consequences are severe. Systems like Nvidia's 600-kilowatt Vera Rubin Ultra racks generate enormous amounts of heat, requiring sophisticated liquid cooling solutions and pushing datacenter power budgets to their limits. "Looking at the current racks, you're forced to have everything in that one rack," explains Ayar CTO Vladimir Stojanovic. "You're forced to have GPUs there, you're forced to have CPUs there. You're forced to have switches, just because copper doesn't take you that far."

How Photonics Changes the Equation

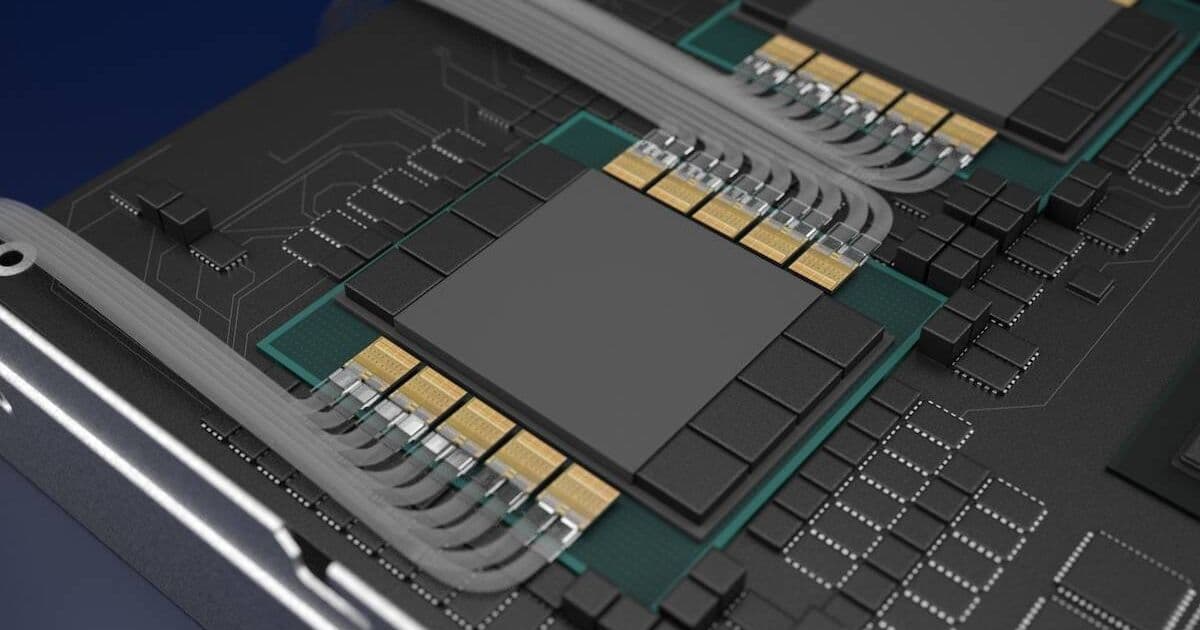

Ayar's solution leverages co-packaged optics (CPO), which integrates optical transceivers directly with the compute silicon. This approach dramatically reduces power consumption compared to traditional pluggable optics while simultaneously boosting reach and bandwidth by up to 3x.

The technology builds on work Ayar demonstrated at Supercomputing 2025 (SC25), where it showcased a prototype accelerator developed with Alchip. This design featured eight of Ayar's TeraPHY optical engines capable of delivering more than 100 Tbps of bandwidth. The key innovation is that by co-packaging the optics with the compute, Ayar can avoid driving signals over the long, power-hungry electrical paths that connect compute silicon to traditional pluggable modules.
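As a rough sanity check on those figures, the sketch below divides the quoted bandwidth across the eight engines. It assumes the 100 Tbps figure is an aggregate for the whole package and that bandwidth is spread evenly across engines; neither detail is confirmed in the announcement, and the function name is purely illustrative.

```python
def per_engine_tbps(total_tbps: float, engines: int) -> float:
    """Average bandwidth per optical engine, assuming an even split (an assumption)."""
    if engines <= 0:
        raise ValueError("engines must be positive")
    return total_tbps / engines


# 100 Tbps is the quoted lower bound ("more than 100 Tbps"),
# read here as a total for the package rather than a per-engine figure.
print(per_engine_tbps(100, 8))  # 12.5
```

Under those assumptions, each engine would need to carry at least 12.5 Tbps on average.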

A New Architecture for Datacenters

Perhaps most significantly, Ayar's optical interconnects enable a disaggregated architecture that fundamentally changes how datacenters can be organized. Rather than forcing all components into a single rack, operators can now build specialized racks: compute racks, switch racks, and even extended memory racks.

"This allows you to do a disaggregated architecture, where you build a rack monolithically as a compute rack, and then you have another rack that's a switch rack," Stojanovic explains. This flexibility means datacenter operators can optimize their infrastructure for specific workloads rather than being constrained by the physical limitations of copper interconnects.

The Reference Design

The rack-scale reference design announced by Ayar and Wiwynn represents the culmination of years of engineering work. The system features two optically interconnected accelerators and a single CPU, with 16 user-serviceable SuperNova laser modules at the front. The design also includes hyperscale-style front-mount network interfaces, making it suitable for large-scale deployment.

Compared to compute blades from Nvidia and AMD, the reference design is about half as dense. However, because it uses optical interconnects, density isn't the primary concern. Rather than connecting 18 blades with super-short copper cables, Ayar can connect hundreds of these systems to form a single enormous logical server.
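To put "hundreds of these systems" in perspective, here is a back-of-the-envelope sketch. It assumes each system carries the two accelerators described above and that each accelerator counts as one GPU toward the 1,024 total; the announcement does not spell out that mapping, so treat the result as illustrative.

```python
import math


def systems_needed(total_gpus: int, accelerators_per_system: int = 2) -> int:
    """Systems required to host total_gpus, assuming one GPU per accelerator."""
    if total_gpus <= 0 or accelerators_per_system <= 0:
        raise ValueError("both arguments must be positive")
    # Round up so a partially filled system still counts as a whole one.
    return math.ceil(total_gpus / accelerators_per_system)


print(systems_needed(1024))  # 512
```

On those assumptions, a 1,024-GPU domain would span 512 systems, far more than the 18 blades a copper-connected rack ties together.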

Engineering Challenges

Developing a photonic rack system presented numerous engineering challenges beyond simply integrating the optics. The mechanical design had to account for liquid cooling routing, with critical decisions about what components to cool first and how to manage thermal gradients.

"As the cold water is coming in, what do you cool first? What do you cool second?" Stojanovic notes. The team also had to address issues that weren't considered in original optical module specifications, such as ensuring components could function reliably in liquid-cooled environments.

Software management and monitoring represent another crucial consideration. With co-packaged optics, a failure affects the entire chip rather than just a single pluggable module. This increased "blast radius" makes sophisticated monitoring and telemetry essential for quickly identifying whether problems are optical or electrical in nature.

Power Efficiency Breakthrough

One of the most compelling aspects of Ayar's approach is its power efficiency. While the highest-density GPU racks can draw 600 kilowatts or more, the photonic reference design is expected to operate in the 100-200 kilowatt range per rack. That reduction addresses one of the most pressing challenges facing datacenter operators as AI workloads continue to scale.
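The size of that gap can be worked out directly from the quoted figures. The sketch below compares per-rack power only, using 600 kW as the baseline and the two ends of the 100-200 kW range. It does not account for how many GPUs each rack holds, which the article does not specify, so it says nothing about power per GPU.

```python
def reduction_pct(baseline_kw: float, new_kw: float) -> float:
    """Percentage reduction in rack power relative to the baseline."""
    if baseline_kw <= 0:
        raise ValueError("baseline_kw must be positive")
    return (1 - new_kw / baseline_kw) * 100


# Both ends of the expected 100-200 kW range against a 600 kW rack.
for new_kw in (200, 100):
    print(f"{new_kw} kW vs 600 kW: {reduction_pct(600, new_kw):.0f}% lower")
```

Per rack, that is roughly a 67 to 83 percent reduction, though the total draw of a 1,024-GPU system depends on how many such racks it needs.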

Industry Context and Timing

The announcement comes at a pivotal moment for the photonics industry. Just a week prior, Ayar closed a $500 million Series E funding round to accelerate mass production of its co-packaged optics. It also follows Ayar's partnership with Global Unichip Corp (GUC) to develop reference designs based on its optical I/O chiplets. Together, the two partnerships address both the chip and system integration aspects of the technology.

What This Means for the Future

The implications of Ayar and Wiwynn's work extend far beyond a single product announcement. By laying out how more than 1,024 GPUs could be unified into a single logical system while drawing a fraction of the power of traditional approaches, the reference design points to a viable path forward for scaling AI and high-performance computing infrastructure.

For hyperscalers and cloud providers, this technology could enable the construction of much larger, more efficient clusters without requiring massive electrical infrastructure upgrades. The disaggregated architecture also offers flexibility in how resources are allocated and scaled, potentially leading to more efficient utilization of expensive GPU resources.

The rack-scale reference design will be formally unveiled at the Optical Fiber Communication (OFC) Conference next week, where industry professionals will get their first comprehensive look at what could be the next major evolution in datacenter architecture.

As AI models continue to grow in size and complexity, requiring ever-larger GPU clusters, technologies like Ayar's photonic interconnects may prove essential for keeping pace with computational demands while managing the practical constraints of power, cooling, and physical space in modern datacenters.
