As AI SOC agents flood the market, Gartner offers evaluation criteria to separate genuine operational improvement from marketing hype, with only 15% of large SOCs expected to achieve measurable improvements without a structured evaluation process.

The market for AI SOC agents is experiencing explosive growth, with dozens of startups entering the space in just the past 18 months. These tools promise to transform security operations by handling alert triage, investigation, and response with unprecedented efficiency. However, new research from Gartner reveals a concerning gap between adoption and actual effectiveness.

According to analysts Craig Lawson and Andrew Davies in their report "Validate the Promises of AI SOC Agents With These Key Questions," while 70% of large Security Operations Centers (SOCs) are expected to pilot AI agents for Tier 1 and Tier 2 operations by 2028, only 15% will achieve measurable improvements without a structured evaluation process. This disparity highlights the challenge facing security teams: how to distinguish between genuine operational enhancement and marketing noise in a crowded, rapidly evolving market.

Understanding the AI SOC Agent Landscape

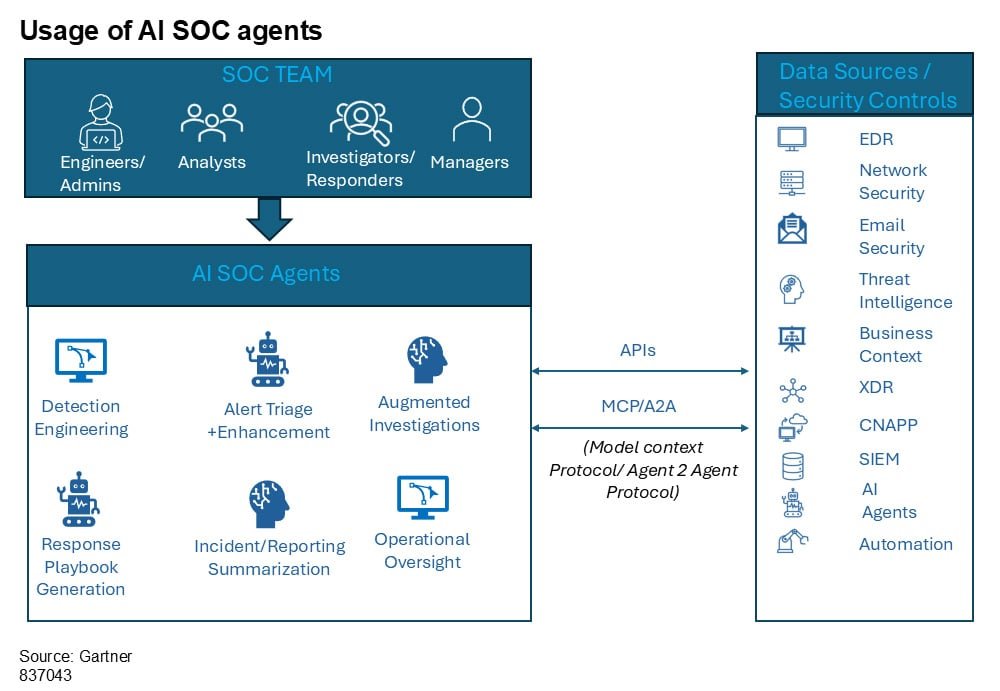

AI SOC agents, or what Gartner terms "Agentic SOC" solutions, represent a significant evolution in security automation. Unlike traditional SOAR (Security Orchestration, Automation and Response) platforms that follow predefined if/then rules, these AI-powered systems can understand context, make decisions, and take actions based on complex analysis of security data.

The fundamental promise is compelling: reduce alert backlogs, accelerate investigation times, and free human analysts to focus on higher-value threats and strategic initiatives. But as with any emerging technology, the implementation reality often falls short of the marketing claims.

Gartner's Seven Critical Evaluation Questions

Gartner's framework provides cybersecurity leaders with a structured approach to evaluating AI SOC agents. These questions go beyond surface-level feature comparisons and focus on operational outcomes, vendor viability, and practical integration considerations.

1. Does it actually reduce the work your team does today?

This question shifts the evaluation from "What can this tool do?" to "Which specific operational bottlenecks does this tool actually solve?" Many vendors demonstrate impressive capabilities in idealized demo environments while addressing workflows your team may have already optimized.

"The evaluation should start with your operational bottlenecks, not the vendor's feature list," explains Gartner. "Understanding that scope upfront prevents misaligned expectations later."

Key considerations include:

- Which specific tasks are best suited for augmentation?

- Is the solution purpose-built for your SOC's primary functions?

- Does it address genuine pain points or just automate processes that already work well?

2. How do you measure outcomes beyond "alerts processed"?

Volume metrics can be misleading. Processing 10,000 alerts monthly means little if investigation quality decreases or true positives slip through. Gartner emphasizes focusing on Threat Detection, Investigation, and Response (TDIR) metrics:

- Mean time to detect (MTTD)

- Mean time to respond (MTTR)

- Mean time to contain (MTTC)

- False positive reduction

"Mean time to contain should be the overall end goal, since containment is where risk actually gets reduced," the report notes. "Any vendor conversation that stops at triage speed without addressing downstream investigation quality and containment timelines is missing the part that matters most."
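To make these metrics concrete, here is a minimal Python sketch of how a team might compute them from incident timelines before and during a pilot. The record fields, the timestamps, and the choice to measure response and containment from the moment of detection are all assumptions for illustration. Definitions vary between organizations, so agree on one before comparing vendors.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident timelines. Field names and timestamps are made up
# for illustration; map them to whatever your case management system exports.
INCIDENTS = [
    {"occurred": "2025-01-06T08:00", "detected": "2025-01-06T08:30",
     "responded": "2025-01-06T09:00", "contained": "2025-01-06T11:00"},
    {"occurred": "2025-01-07T10:00", "detected": "2025-01-07T10:10",
     "responded": "2025-01-07T10:30", "contained": "2025-01-07T11:10"},
]


def minutes_between(start, end):
    """Elapsed minutes between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60


def tdir_metrics(records):
    """Average detection, response, and containment times in minutes.

    Assumption: MTTR and MTTC are measured from detection, not occurrence.
    """
    return {
        "mttd_min": mean(minutes_between(r["occurred"], r["detected"]) for r in records),
        "mttr_min": mean(minutes_between(r["detected"], r["responded"]) for r in records),
        "mttc_min": mean(minutes_between(r["detected"], r["contained"]) for r in records),
    }


def false_positive_reduction(baseline_fp, baseline_total, pilot_fp, pilot_total):
    """Relative drop in false positive rate between a baseline and a pilot period."""
    baseline_rate = baseline_fp / baseline_total
    pilot_rate = pilot_fp / pilot_total
    return (baseline_rate - pilot_rate) / baseline_rate


if __name__ == "__main__":
    for name, value in tdir_metrics(INCIDENTS).items():
        print(f"{name}: {value:.1f}")
```

Running the same calculation on a pre-pilot baseline and on the pilot period gives a like-for-like comparison that does not depend on the vendor's own dashboard.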

Qualitative outcomes matter too: analyst satisfaction, improved execution quality, and knowledge retention should all be evaluated.

3. Is the vendor going to be around in two years?

The AI SOC agent market is still in its early stages, characterized by numerous startups with varying approaches and design principles. This diversity drives innovation but introduces vendor risk that requires honest assessment.

Organizations should evaluate:

- When the vendor's solution first became generally available

- The size and stability of their current customer base

- Their funding history and financial outlook

- Potential acquisition likelihood and implications

Pricing models also deserve scrutiny. Some vendors price based on alert volume, others on data volume or token usage. The cost of processing high volumes through LLM-backed systems can scale unexpectedly, making it crucial to understand cost behavior under load.
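A rough back-of-the-envelope model can show how differently two pricing schemes behave as volume grows. The sketch below is illustrative only: the per-alert price, the tokens consumed per investigation, and the per-million-token rate are invented numbers, not figures from Gartner or any vendor.

```python
def per_alert_cost(alerts, price_per_alert):
    """Monthly cost under a flat per-alert price."""
    return alerts * price_per_alert


def token_cost(alerts, tokens_per_alert, price_per_million_tokens):
    """Monthly cost when billing follows LLM token usage."""
    return alerts * tokens_per_alert * price_per_million_tokens / 1_000_000


if __name__ == "__main__":
    # All figures below are hypothetical placeholders.
    PRICE_PER_ALERT = 0.05          # currency units per alert
    PRICE_PER_MILLION_TOKENS = 3.0  # currency units per million tokens

    # Deeper investigations consume more tokens per alert, so the same
    # alert volume can cost very different amounts under token pricing.
    for alerts in (10_000, 50_000, 200_000):
        for tokens_per_alert in (20_000, 80_000):
            flat = per_alert_cost(alerts, PRICE_PER_ALERT)
            usage = token_cost(alerts, tokens_per_alert, PRICE_PER_MILLION_TOKENS)
            print(f"{alerts:>7} alerts, {tokens_per_alert:>6} tokens/alert: "
                  f"per-alert={flat:>10.2f}  token-based={usage:>10.2f}")
```

Replacing the placeholders with a vendor's quoted rates and your own peak alert volumes is a quick way to see where costs could scale unexpectedly.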

4. Does it make your analysts better, or just busier in a different way?

This question addresses the nuanced relationship between AI augmentation and human skill development. The concern is valid: if AI handles all investigative legwork, will junior analysts develop the skills needed to become senior analysts?

Gartner recommends evaluating:

- Training and enablement resources provided with the tool

- Whether the AI creates learning opportunities (suggesting threat hunts, recommending best practices)

- Support for detection engineering work

- Transparency in how the AI reaches conclusions

The best implementations present their reasoning in a way that teaches while it triages, giving analysts transparent investigations to review rather than binary verdicts to accept. As the article notes, "That gives junior analysts a model for how experienced investigators approach an alert, rather than just a yes-or-no answer to rubber-stamp."

5. What are the boundaries of AI autonomy?

Gartner distinguishes between "human in the loop" (requiring human approval for each action) and "human on the loop" (AI has broader latitude with strategic human oversight) models. Neither is inherently superior—the right approach depends on your organization's risk appetite, regulatory requirements, and AI system maturity.

Key questions include:

- What actions can the agent perform autonomously versus requiring human approval?

- How are guardrails enforced for high-impact decisions like account disablement or network isolation?

- Can autonomy levels be customized based on task type or risk level?

- What fail-safe mechanisms exist when the AI encounters ambiguity or conflicting signals?

The design philosophy at the edges—how the system behaves in uncertain situations—matters more than any individual feature because that's where real damage can occur.
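One way to reason about these questions is to write the autonomy policy down as code. The following Python sketch is a hypothetical policy, not any vendor's implementation. The action names, the two risk tiers, and the 0.9 confidence threshold are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"                    # agent acts alone (human on the loop)
    REQUIRE_APPROVAL = "require_approval"  # analyst must approve (human in the loop)
    ESCALATE = "escalate"                  # fail-safe: hand the case to an analyst


# Hypothetical action names grouped by impact; adjust to your own risk appetite.
HIGH_IMPACT = {"disable_account", "isolate_host"}
LOW_IMPACT = {"enrich_alert", "close_benign_alert"}


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float              # agent's self-reported confidence, 0.0 to 1.0
    conflicting_signals: bool = False


def decide(action, min_confidence=0.9):
    """Map a proposed action to an autonomy decision."""
    # Ambiguity or low confidence always goes to a human, whatever the action.
    if action.conflicting_signals or action.confidence < min_confidence:
        return Decision.ESCALATE
    if action.name in HIGH_IMPACT:
        return Decision.REQUIRE_APPROVAL
    if action.name in LOW_IMPACT:
        return Decision.EXECUTE
    # Actions the policy does not recognize default to the safer path.
    return Decision.REQUIRE_APPROVAL


if __name__ == "__main__":
    print(decide(ProposedAction("isolate_host", 0.97)))
    print(decide(ProposedAction("enrich_alert", 0.95)))
    print(decide(ProposedAction("enrich_alert", 0.95, conflicting_signals=True)))
```

The ordering of the checks is the design choice worth discussing with a vendor: in this sketch uncertainty is evaluated first, so an ambiguous case never reaches the autonomous path.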

6. Will it actually work with your existing stack?

Integration claims are common but often difficult to validate. Gartner recommends evaluating native integration depth across SIEM, EDR, SOAR, and identity platforms rather than accepting a logo wall at face value.

A critical consideration is whether the solution requires all security data to be centralized first or can plug into multiple security data sources and query them where they already live.

"The difference between a tool that needs all your data in one place and one that can query across multiple security data sources is operationally significant," the report emphasizes. "For organizations with complex or hybrid architectures, this difference can make or break implementation success."

7. Can you actually see what it's doing?

Transparency may be the most important evaluation criterion. Gartner asks:

- How does the solution provide explainability for AI decisions and actions?

- Are human-readable audit trails available for all automated actions?

- How is sensitive data handled, and what controls prevent model misuse or data leakage?

For regulated industries, these aren't nice-to-haves—they're requirements. But even organizations without strict compliance mandates should care about explainability because it directly affects analyst adoption and trust.

"An AI agent that produces a verdict without showing its work puts the analyst in an uncomfortable position," the report states. "They either accept the conclusion on faith, which is risky, or they redo the investigation themselves, which defeats the purpose."

Some vendors have adopted a "glass box" approach, documenting every query, data source, and analytical step used to reach a determination. This transparency builds trust and enables continuous improvement through human feedback.
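As a rough illustration of what such a record could contain, the Python sketch below logs each investigative step and renders it both as JSON for storage and as a readable summary for analysts. The class names, fields, and sample values are hypothetical and do not describe any specific product's audit format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now():
    return datetime.now(timezone.utc).isoformat()


@dataclass
class Step:
    data_source: str   # where the agent looked, e.g. "EDR" or "SIEM"
    query: str         # what it asked
    finding: str       # what it concluded from the result
    timestamp: str = field(default_factory=_utc_now)


@dataclass
class InvestigationRecord:
    alert_id: str
    steps: list = field(default_factory=list)
    verdict: str = "undetermined"

    def log_step(self, data_source, query, finding):
        self.steps.append(Step(data_source, query, finding))

    def to_json(self):
        """Machine-readable trail for storage or compliance review."""
        return json.dumps(asdict(self), indent=2)

    def summary(self):
        """Human-readable trail an analyst can review step by step."""
        lines = [f"Alert {self.alert_id}: verdict={self.verdict}"]
        for number, step in enumerate(self.steps, start=1):
            lines.append(f"  {number}. [{step.data_source}] {step.query} -> {step.finding}")
        return "\n".join(lines)


if __name__ == "__main__":
    record = InvestigationRecord("A-1042")  # hypothetical alert ID
    record.log_step("EDR", "process tree for host-17", "no child processes spawned")
    record.log_step("Identity", "recent sign-ins for the affected user", "all from known devices")
    record.verdict = "benign"
    print(record.summary())
```

When reviewing a vendor's audit trail, a useful test is whether an analyst could rerun each logged query independently and reach the same finding.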

Implementing Gartner's Framework

For security teams considering AI SOC agents, Gartner's framework provides a structured approach to evaluation that goes beyond feature checklists. The process should:

- Start with documented operational pain points rather than vendor capabilities

- Establish clear success metrics before engaging with vendors

- Require proof of concept in environments similar to your own

- Evaluate vendor stability and long-term viability

- Assess the tool's impact on analyst development and satisfaction

- Define appropriate boundaries for AI autonomy based on your risk tolerance

- Verify integration capabilities and explainability features
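Teams that want to compare vendors side by side could turn the seven questions into a simple weighted scorecard. The sketch below is one possible way to do that. The weights, the 0 to 5 scoring scale, and the category keys are assumptions for illustration and are not part of Gartner's report.

```python
# Hypothetical weights (percentages summing to 100), one per evaluation question.
WEIGHTS = {
    "workload_reduction": 20,
    "outcome_metrics": 20,
    "vendor_viability": 10,
    "analyst_development": 10,
    "autonomy_boundaries": 15,
    "integration": 15,
    "transparency": 10,
}


def weighted_score(scores, weights=WEIGHTS):
    """Combine per-question scores (0 to 5) into one weighted score (0 to 5)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for key in weights:
        if not 0 <= scores[key] <= 5:
            raise ValueError(f"score for {key} must be between 0 and 5")
    return sum(weights[key] * scores[key] for key in weights) / sum(weights.values())


if __name__ == "__main__":
    # Made-up proof-of-concept scores for a fictional vendor.
    vendor_a = {
        "workload_reduction": 4, "outcome_metrics": 3, "vendor_viability": 2,
        "analyst_development": 4, "autonomy_boundaries": 5, "integration": 3,
        "transparency": 4,
    }
    print(f"Vendor A: {weighted_score(vendor_a):.2f} / 5")
```

Setting the weights before talking to vendors keeps the scoring tied to your documented pain points rather than to whichever demo was most impressive.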

The Road Ahead for AI in Security Operations

Gartner's framework doesn't declare winners in a category still taking shape. Instead, it provides a pragmatic approach to evaluation that acknowledges both the potential and limitations of current AI SOC agents.

The technology does offer genuine potential to reduce investigation burden, improve response times, and extend coverage to alert volumes that human teams cannot manually process. However, realizing that potential requires the kind of structured, outcomes-driven evaluation that many buying processes currently skip.

For security leaders, the message is clear: AI SOC agents can transform security operations, but only when implemented with careful consideration of organizational needs, realistic expectations, and thorough evaluation of vendor claims against operational reality.

Organizations interested in applying Gartner's evaluation framework can access the full report "Validate the Promises of AI SOC Agents With These Key Questions" for detailed guidance on each evaluation category and what to look for in vendor responses.

As the security landscape continues to evolve, the organizations that will succeed are those that approach AI augmentation not as a replacement for human expertise but as an enhancement that makes their security teams more effective, efficient, and capable of addressing increasingly sophisticated threats.
