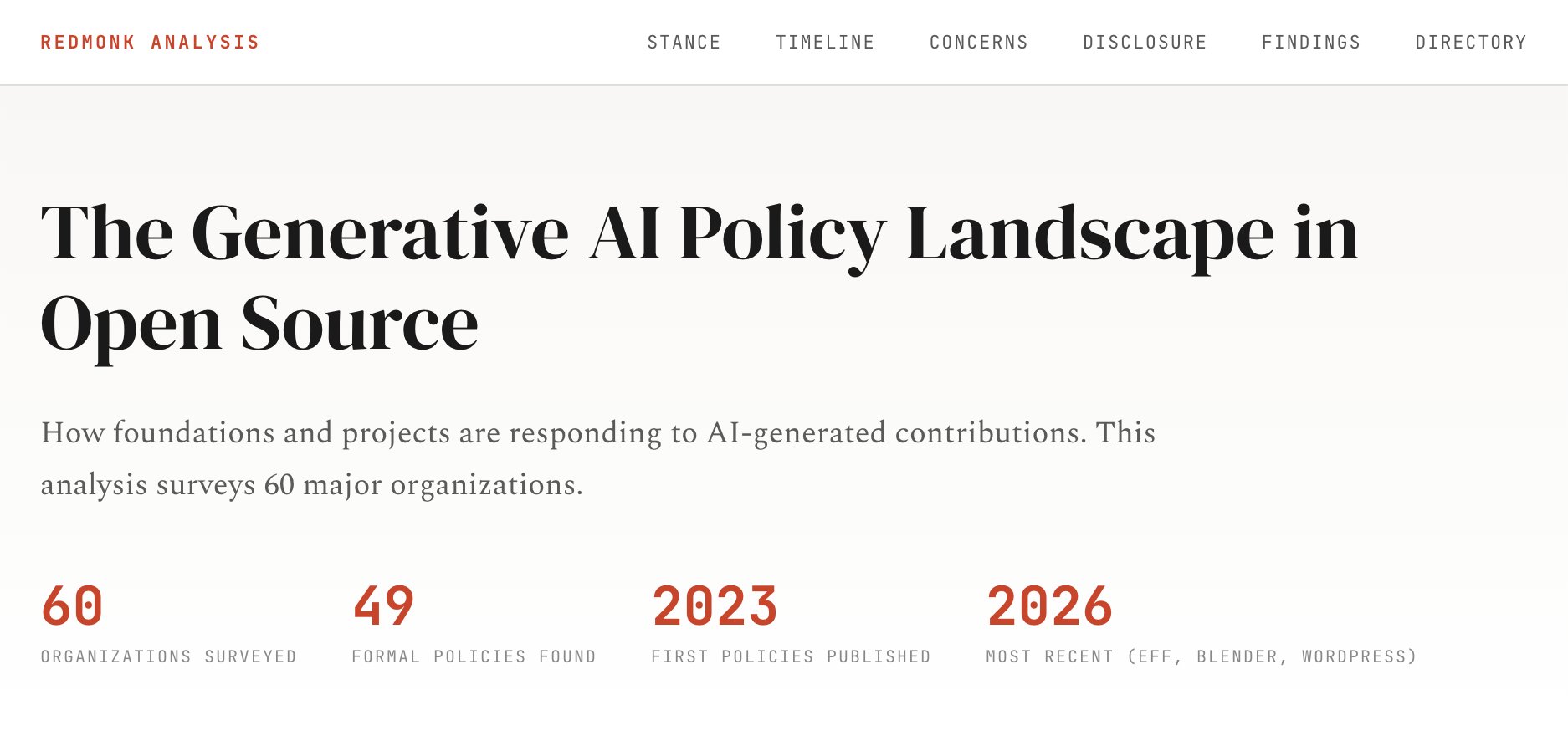

A comprehensive analysis of how 32 open source organizations are responding to AI-generated contributions, revealing a rapidly evolving policy landscape shaped by concerns over code quality, copyright, and ethics.

The open source community finds itself at a critical juncture as generative AI tools become increasingly capable of producing code contributions. While anecdotal evidence of maintainer burnout from "AI slop" has circulated widely, a systematic examination of actual policy responses reveals a complex and evolving landscape. An analysis of 32 open source organizations, ranging from major foundations such as the Linux Foundation, Apache, and Eclipse to individual projects including the Linux Kernel, Gentoo, curl, and Matplotlib, gives a clearer picture of how the community is grappling with this technological disruption.

The resulting policy landscape visualization maps responses across multiple dimensions, revealing patterns that challenge some assumptions while confirming others. Organizations have coalesced around three primary stances: permissive approaches that welcome AI-generated contributions with appropriate safeguards, restrictive bans that prohibit such contributions entirely, and undecided positions that reflect ongoing deliberation. This categorization alone tells a story of a community divided yet engaged in thoughtful consideration of the implications.

What drives these policy decisions? The analysis identifies three dominant concerns shaping organizational responses. Quality considerations focus on the technical adequacy of AI-generated code—its correctness, maintainability, and integration with existing codebases. Copyright concerns address the legal uncertainties surrounding training data and derivative works, particularly given the opaque nature of many AI training processes. Ethical considerations encompass broader questions about attribution, transparency, and the potential displacement of human contributors.

The temporal dimension adds another layer of insight. Since 2023, adoption of formal AI policies has accelerated dramatically, suggesting that what began as ad-hoc responses to emerging challenges has evolved into deliberate governance frameworks. This acceleration reflects both the increasing prevalence of AI tools in development workflows and growing recognition that unmanaged AI contributions pose significant risks to project sustainability.

Disclosure requirements emerge as a particularly nuanced aspect of these policies. Some organizations mandate explicit identification of AI-generated content, while others focus on the outcome rather than the process, evaluating contributions on their merits regardless of origin. This variation reflects deeper philosophical differences about transparency, trust, and the nature of collaborative development itself.
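For readers who want to work with this kind of survey data themselves, the dimensions described above (stance, motivating concerns, adoption date, and disclosure) can be modeled as a simple record type. The following is a minimal sketch, not the schema used by the visualization: the class names, field names, and the three sample records are hypothetical placeholders and do not describe any real organization's policy.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Iterable, Optional


class Stance(Enum):
    # The three stances named in the article.
    PERMISSIVE = "permissive"
    RESTRICTIVE = "restrictive"
    UNDECIDED = "undecided"


class Concern(Enum):
    # The three dominant concerns named in the article.
    QUALITY = "quality"
    COPYRIGHT = "copyright"
    ETHICS = "ethics"


@dataclass(frozen=True)
class PolicyRecord:
    # Hypothetical schema: field names are assumptions, not the survey's own.
    organization: str
    stance: Stance
    concerns: FrozenSet[Concern]
    year_adopted: Optional[int]          # None if no formal policy date is known
    requires_disclosure: Optional[bool]  # None if the policy does not say


def count_by_stance(records: Iterable[PolicyRecord]) -> Counter:
    """Tally how many organizations take each stance."""
    return Counter(r.stance for r in records)


def count_by_concern(records: Iterable[PolicyRecord]) -> Counter:
    """Tally how often each concern is cited across all records."""
    return Counter(c for r in records for c in r.concerns)


# Placeholder data for illustration only; not taken from the survey.
records = [
    PolicyRecord("Example Org A", Stance.PERMISSIVE,
                 frozenset({Concern.QUALITY, Concern.COPYRIGHT}), 2024, True),
    PolicyRecord("Example Org B", Stance.RESTRICTIVE,
                 frozenset({Concern.COPYRIGHT, Concern.ETHICS}), 2024, None),
    PolicyRecord("Example Org C", Stance.UNDECIDED, frozenset(), None, None),
]

stance_counts = count_by_stance(records)
concern_counts = count_by_concern(records)
```

Keeping disclosure and adoption year optional reflects that some organizations are still undecided or have policies that are silent on those points; real entries would need to be filled in from the primary policy documents.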

The interactive visualization enables exploration of these patterns, allowing viewers to compare how different organizations weigh various concerns and trace the evolution of thinking over time. The full directory linking to primary policy documents provides transparency and enables deeper investigation for those interested in specific approaches.

The analysis has limitations. The 32 organizations, though diverse, are a sample rather than an exhaustive census, and both AI technology and community responses are changing quickly, so the landscape continues to shift even as it is being mapped. Alex Bradbury, Scott Shambaugh, and Melissa Weber Mendonça have contributed additional projects since initial publication, and the visualization remains a living document of an ongoing conversation.

Melissa Weber Mendonça's complementary work on open-source-ai-contribution-policies provides additional depth and context, demonstrating the collaborative nature of understanding this challenge. Her GitHub repository serves as both a resource and an invitation for continued community engagement in policy development.

The generative AI policy landscape in open source reflects a community in thoughtful transition. Rather than uniform resistance or uncritical embrace, what emerges is a pattern of careful deliberation, with organizations weighing competing values and concerns to chart paths forward. As AI tools continue to evolve and their role in software development becomes increasingly central, these early policy frameworks will likely serve as foundations for more sophisticated governance approaches. The question is not whether AI will transform open source development, but how the community's values and practices will shape that transformation.

The visualization reveals that open source communities are not passive recipients of technological change but active agents shaping its integration into their collaborative practices. This agency, expressed through diverse policy approaches tailored to specific project needs and values, suggests that the future of open source in an AI-augmented world remains in human hands, guided by the community's collective judgment and its foundational principles.

For those interested in exploring the complete analysis and interactive visualization, the standalone version is available at oss-ai-policies.netlify.app, providing a window into how one of technology's most influential communities is navigating one of its most significant challenges.
