Former employees reveal how social media giants prioritized engagement over safety, allowing harmful content to spread to young users as they raced to compete with TikTok.

Whistleblowers from Meta and TikTok have exposed how social media companies made decisions that allowed more harmful content to reach users, particularly teenagers, as they competed to capture attention in an algorithm arms race. The revelations come from more than a dozen former employees who spoke to the BBC documentary Inside the Rage Machine, offering an unprecedented inside view of how engagement-driven algorithms can amplify dangerous material.

The Algorithm Arms Race

The explosive growth of TikTok's highly engaging short video algorithm forced competitors like Meta to scramble for market share. According to whistleblowers, this competition led to decisions that prioritized engagement metrics over user safety.

A former Meta engineer, speaking anonymously as "Tim," described how his team had been focused on reducing borderline harmful content - material that's problematic but not illegal, including misogynistic, racist, and conspiracy theory posts. However, this changed when the company faced pressure to compete with TikTok.

"You're losing to TikTok and therefore your stock price must suffer," Tim explained. "People started becoming paranoid and reactive and they were like, let's just do whatever we can to catch up. Where can we get like 2%, 3% revenue for the next quarter?"

This shift meant allowing more borderline content that users were engaging with, even if it was harmful. The engineer said this decision came from senior management, including someone who reported directly to Mark Zuckerberg.

Instagram Reels: Speed Over Safety

When Meta launched Instagram Reels in 2020 as a TikTok competitor, former senior researcher Matt Motyl said, it was rolled out without sufficient safeguards. Internal research shared with the BBC showed Reels had significantly higher rates of harmful content than Instagram's main feed:

- 75% higher prevalence of bullying and harassment comments

- 19% higher prevalence of hate speech

- 7% higher prevalence of violence and incitement

Motyl, who worked at Meta from 2021 to 2023, described running experiments on hundreds of millions of users without their knowledge. He said there was a "common trade-off between protecting people from harmful content and engagement."

The power dynamics within Meta made it difficult to implement safety measures. Safety teams had to get approval from Reels teams to launch new protective features, but those teams had incentives to avoid changes that might reduce engagement. "Toxic stuff gets more engagement than non-toxic," Motyl explained.

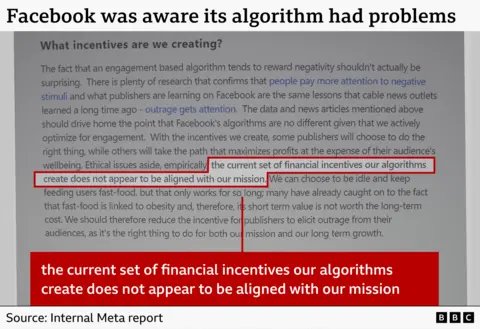

Facebook's Internal Research on Algorithmic Harm

Internal documents reveal that Facebook was aware its algorithm was amplifying harmful content. One study showed that sensitive content - material touching on moral beliefs or inciting violence - was more likely to trigger reactions and engagement, especially when it caused outrage.

"Given the disproportionate engagement, our algorithms presume that users like that content and want more of it," the document stated. Another internal study acknowledged that the algorithm offered content creators "a path that maximizes profits at the expense of their audience's wellbeing."

Brandon Silverman, whose social media monitoring tool CrowdTangle was acquired by Facebook in 2016, witnessed this period firsthand. He described CEO Mark Zuckerberg as "very paranoid" about competition, leading to massive investments in Reels while safety teams struggled to get approval for even small numbers of additional staff.

TikTok's Prioritization Problems

A TikTok trust and safety employee, speaking anonymously as "Nick," provided rare access to the company's internal dashboards showing how cases were prioritized. The evidence revealed that some relatively trivial cases involving politicians were given higher priority than serious reports involving harm to teenagers.

In one example, a case involving a political figure who had been mocked by comparison to a chicken was prioritized over:

- A 17-year-old in France reporting cyberbullying and impersonation

- A 16-year-old in Iraq complaining about sexualised images being shared

Nick said the company prioritized cases this way because it ultimately cared more about maintaining strong relationships with politicians and governments, in order to avoid regulation or bans, than about children's safety.

"If you're feeling guilty on a daily basis because of what you're instructed to do, at some point you can decide, should I say something?" Nick said.

The "Radicalisation by Algorithm" Case

Perhaps most disturbing was the case of Calum, now 19, who said he was "radicalised by algorithm" from the age of 14. The algorithm showed him content that outraged him, leading him to adopt racist and misogynistic views.

"The videos energised me, but not really in a good way," Calum said. "They just made me very kind of angry. It very much reflected the way I felt internally, that I was angry at the people around me."

Counter-terror police specialists in the UK have observed the "normalisation" of antisemitic, racist, violent, and far-right posts in recent months. "People are more desensitised to real-world violence and they are not afraid to share their views," one officer said.

The Black Box Problem

Ruofan Ding, a former TikTok machine learning engineer who worked on the recommendation engine from 2020 to 2024, described the algorithms as a "black box" whose internal workings are difficult to scrutinize.

"We have no control of the deep-learning algorithm in itself," Ding said. According to Ding, engineers don't pay much attention to content, treating posts as numerical IDs rather than actual material. They rely on content safety teams to remove harmful posts before the algorithm can promote them.

"There's the team that are responsible for the acceleration, the engine, right? So we expect the team working on the braking system was doing a good job," he explained.

However, as TikTok improved its algorithm almost weekly to gain market share, Ding started seeing more borderline content - problematic posts that only appeared after users had been browsing for a while.

Company Responses

Meta denied the whistleblowers' claims, stating: "Any suggestion that we deliberately amplify harmful content for financial gain is wrong." The company said it has strict policies to protect users and has made significant investments in safety and security over the last decade.

TikTok rejected the idea that political content is prioritized over young people's safety, calling the claims "fabricated." The company said it invests in technology that prevents harmful content from being viewed and maintains strict recommendation policies.

However, Nick's blunt advice to parents with children using TikTok was: "Delete it, keep them as far away as possible from the app for as long as possible."

The Human Cost

The whistleblowers' accounts reveal a troubling pattern: as social media companies compete for attention and engagement, the systems designed to protect users - especially vulnerable teenagers - are being overwhelmed or deprioritized.

With cuts to moderation teams, reorganization of safety structures, and the introduction of AI to handle some moderation tasks, the ability to deal effectively with harmful content appears to be limited. The volume of cases is too large to keep on top of, leaving teenagers and children especially at risk.

As one former Meta engineer put it, the pressure to compete led to a mindset of "do whatever we can to catch up," even if it meant allowing more harmful content to spread. The result is an algorithm arms race where the collateral damage is the mental health and safety of young users who are still developing their understanding of the world.

The whistleblowers' testimony suggests that until social media companies fundamentally realign their incentives - putting user safety ahead of engagement metrics and market competition - these problems will likely continue. The question remains whether these companies will make the necessary changes voluntarily or whether external regulation will be required to protect users from the unintended consequences of engagement-driven algorithms.
