Meta has entered into a significant agreement with AWS to deploy tens of millions of Graviton ARM cores, representing a major shift in AI infrastructure strategy. This deal underscores the growing demand for specialized compute resources in the era of agentic AI and highlights how major tech companies are securing resources through cloud partnerships rather than solely building their own infrastructure.

Meta's announcement that it will deploy tens of millions of AWS Graviton ARM cores marks a pivotal moment for AI infrastructure and for competition in server CPUs. The move comes just weeks after Meta positioned itself as a launch customer for Arm's AGI CPU, which points to a deliberate strategy of securing very large amounts of compute for its AI initiatives.

The Deal: Scale and Implications

According to AWS, Meta's deployment "starts with tens of millions of Graviton cores, with the flexibility to expand as Meta's AI capabilities grow." An ARM-based commitment at this scale shows how aggressively major players are securing capacity in the AI arms race. AWS also confirmed that Meta is one of the largest customers of Amazon Bedrock, its generative AI application platform, though it has not disclosed whether these Graviton cores will run Bedrock or other applications.

What makes this particularly interesting is that Meta is not merely purchasing chips but acquiring complete compute solutions hosted by AWS. This means the deal encompasses not just the Graviton processors themselves but also the associated power delivery, data center infrastructure, networking components, and management systems that constitute a modern cloud computing environment.
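To give a rough sense of what "tens of millions of cores" means in hosted hardware, the sketch below converts a core count into a minimum socket count. It assumes 192 cores per socket, the Graviton5 figure quoted later in this article, and Graviton5 is only the likely part for this deployment. The core counts in the loop are illustrative placeholders, since AWS has not published an exact number.

```python
# Assumption: 192 cores per socket (the Graviton5 figure quoted in this article).
CORES_PER_SOCKET = 192


def sockets_needed(total_cores: int, cores_per_socket: int = CORES_PER_SOCKET) -> int:
    """Return the minimum number of whole sockets that supply total_cores."""
    if total_cores < 0 or cores_per_socket <= 0:
        raise ValueError("total_cores must be >= 0 and cores_per_socket must be > 0")
    # Integer ceiling division, avoids floating-point rounding.
    return -(-total_cores // cores_per_socket)


if __name__ == "__main__":
    # Hypothetical totals; the announcement only says "tens of millions".
    for cores in (10_000_000, 20_000_000, 50_000_000):
        print(f"{cores:>11,} cores -> at least {sockets_needed(cores):,} sockets")
```

Even the lowest illustrative figure implies tens of thousands of sockets, which is why the surrounding power, networking, and data center capacity is a material part of the deal.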

Technical Deep Dive: AWS Graviton5

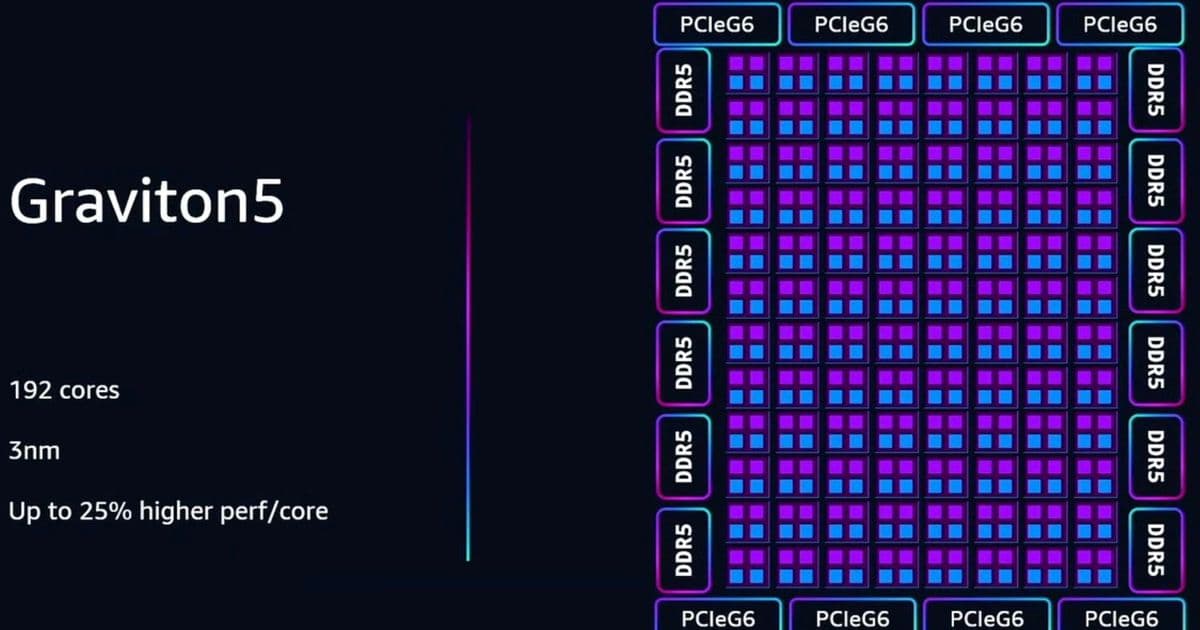

The Graviton5 processor is the likely candidate for this deployment, given that it is AWS's newest Graviton generation, and it represents a significant evolution in ARM-based server computing. Its technical specifications are as follows:

- Architecture: 192 Arm Neoverse V3 Cores (all P-cores, no E-cores)

- Instruction Set: Armv9.2-A

- Cache Architecture:

  - 2MB L2 cache per core (double the 1MB found in Graviton3)

  - 192MB L3 cache

  - Total of 600MB of cache per socket

- Memory Support: DDR5-8800

- Connectivity: PCIe Gen6 support

- Physical Design: Single socket with two NUMA (Non-Uniform Memory Access) regions

These specifications position Graviton5 as a competitive alternative to x86 offerings from AMD and Intel. The Neoverse V3 cores belong to ARM's server-focused Neoverse V-series, which targets high per-core performance. The large cache hierarchy (600MB in total) is particularly noteworthy: the more of a working set that fits in cache, the fewer accesses have to go out to DRAM, which lowers average memory latency. That matters for data-heavy workloads, including the CPU-side stages of AI training and inference.
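The 600MB total can be sanity-checked against the per-level figures listed above. The following sketch sums the L2 and L3 capacities and reports what is left over. Reading the remainder as per-core L1 cache is an assumption made here for illustration; the article does not itemize it.

```python
KB_PER_MB = 1024

# Figures quoted in the specification list above.
CORES = 192
L2_PER_CORE_MB = 2
L3_SHARED_MB = 192
QUOTED_TOTAL_MB = 600


def cache_breakdown(cores=CORES, l2_per_core_mb=L2_PER_CORE_MB,
                    l3_mb=L3_SHARED_MB, quoted_total_mb=QUOTED_TOTAL_MB):
    """Sum L2 and L3 capacity and compare the result with the quoted total."""
    l2_total_mb = cores * l2_per_core_mb
    l2_plus_l3_mb = l2_total_mb + l3_mb
    remainder_mb = quoted_total_mb - l2_plus_l3_mb
    return {
        "l2_total_mb": l2_total_mb,
        "l2_plus_l3_mb": l2_plus_l3_mb,
        "remainder_mb": remainder_mb,
        # Assumption: the remainder is split evenly across cores (e.g. as L1).
        "remainder_per_core_kb": remainder_mb * KB_PER_MB / cores,
    }


if __name__ == "__main__":
    for name, value in cache_breakdown().items():
        print(f"{name}: {value}")
```

L2 and L3 together account for 576MB, leaving 24MB, or 128KB per core. That would be consistent with a 64KB instruction cache plus a 64KB data cache per core, although that split is an inference rather than a figure from the announcement.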

Graviton5 is built on a 3nm manufacturing process, which places it in the same leading-edge class as other current server CPUs, including AMD's EPYC 9005 "Turin" and Intel's Xeon 6 series. This rough parity in process technology suggests that ARM-based solutions are no longer just alternatives but direct competitors in the high-performance server space.

Strategic Implications for Meta

Meta's decision to leverage AWS infrastructure rather than solely relying on its own data centers represents a significant strategic shift. While Meta has extensive experience operating at scale—as demonstrated by its massive infrastructure supporting Facebook, Instagram, WhatsApp, and Messenger—this move suggests several strategic considerations:

Resource Scalability: By partnering with AWS, Meta gains access to a very large pool of compute that can be scaled up or down far faster than owned capacity, which helps it meet the fluctuating demands of AI workloads.

Infrastructure Diversification: This move reduces Meta's dependency on its own infrastructure and spreads risk across multiple providers.

Speed of Deployment: Leveraging existing AWS infrastructure allows Meta to deploy massive computational capacity much faster than building equivalent capacity from scratch.

Focus on Core Competencies: Outsourcing infrastructure management allows Meta to concentrate its engineering resources on AI model development and application innovation rather than infrastructure optimization.

Economic Considerations: The total cost of ownership may be favorable when considering the capital expenditure avoidance and the ability to pay only for what's used.

Market Impact and Competitive Dynamics

This deal sends ripples throughout the semiconductor and cloud computing ecosystems:

For AWS

This is a significant validation of their custom silicon strategy. The Graviton processors represent AWS's commitment to vertically integrated solutions that offer performance, cost, and efficiency advantages over traditional x86-based offerings. Securing Meta as a customer of this scale reinforces the market position of AWS's custom silicon portfolio and their broader cloud services.

For ARM

The deal represents a major victory for ARM's server ambitions. After years of trying to establish a meaningful presence in the data center market, ARM-based solutions are now being adopted by hyperscalers and major enterprises for their most demanding workloads. Meta's commitment to tens of millions of cores demonstrates that ARM has successfully transitioned from a niche player to a mainstream option for high-performance computing.

For AMD and Intel

While some might view this as a negative development for x86 vendors, the reality is more nuanced. The AI-driven demand for compute is so substantial that even with ARM gaining share, there remains enormous opportunity for traditional x86 vendors. Intel's recent solid guidance suggests that the market is expanding rapidly enough to accommodate multiple architectures. However, this deal does underscore the importance of specialized solutions for AI workloads and may accelerate the development of competitive x86 alternatives optimized for AI.

For the Broader AI Infrastructure Market

Meta's approach—securing massive computational resources through strategic partnerships rather than sole reliance on internal infrastructure—may become a template for other companies entering the AI space. This could lead to increased collaboration between hyperscalers and AI developers, potentially reshaping how computational resources are provisioned and managed in the AI era.

The Future of AI Infrastructure

The Meta-AWS deal highlights several emerging trends in AI infrastructure:

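Specialized Compute: As AI workloads become more diverse and demanding, we're seeing increased adoption of custom silicon designed by cloud providers for their own platforms, such as Graviton, alongside accelerators built for specific AI tasks, rather than reliance on off-the-shelf general-purpose CPUs alone.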

Cloud-Native AI Development: Major AI developers are increasingly building their solutions with cloud deployment in mind, leveraging cloud provider advantages in scale, speed, and specialized hardware.

Hybrid Infrastructure Models: Companies are adopting hybrid approaches, combining internal infrastructure with cloud resources to balance control, cost, and scalability.

ARM's Ascendancy in Data Centers: After years of limited adoption, ARM-based processors are now becoming mainstream options for data center workloads, particularly in cloud environments.

Ecosystem Consolidation: As AI becomes more central to business strategy, we're seeing increased consolidation around major cloud providers and their specialized hardware offerings.

Conclusion

Meta's commitment to tens of millions of AWS Graviton cores is more than a large capacity deal. It signals a fundamental shift in how computational resources are being secured and deployed for AI workloads, and it demonstrates that even companies with substantial internal infrastructure see strategic advantages in cloud partnerships for AI development.

As demand for AI compute continues to grow rapidly, we can expect to see more such strategic partnerships between AI developers and cloud providers. On paper, the Graviton5's specifications suggest that ARM-based solutions are now competitive with x86 offerings in many server workloads, including AI-related ones, though independent benchmarks will be needed to confirm that.

For industry observers, this deal serves as a bellwether for the direction of AI infrastructure development, highlighting the increasing importance of specialized compute, cloud partnerships, and the continued evolution of ARM as a viable alternative in the data center market.
