VS Code's recent change to automatically attribute code commits to Copilot by default has ignited a firestorm of criticism from developers who see it as deceptive and invasive.

In a move that has surprised and angered many developers, Microsoft has changed Visual Studio Code's default behavior to automatically add 'Co-authored-by: Copilot' to commit messages, even when users haven't used AI assistance. The pull request, merged into VS Code's main branch with little fanfare, has sparked intense debate about transparency, consent, and the boundaries of AI integration in developer tools.

What Changed

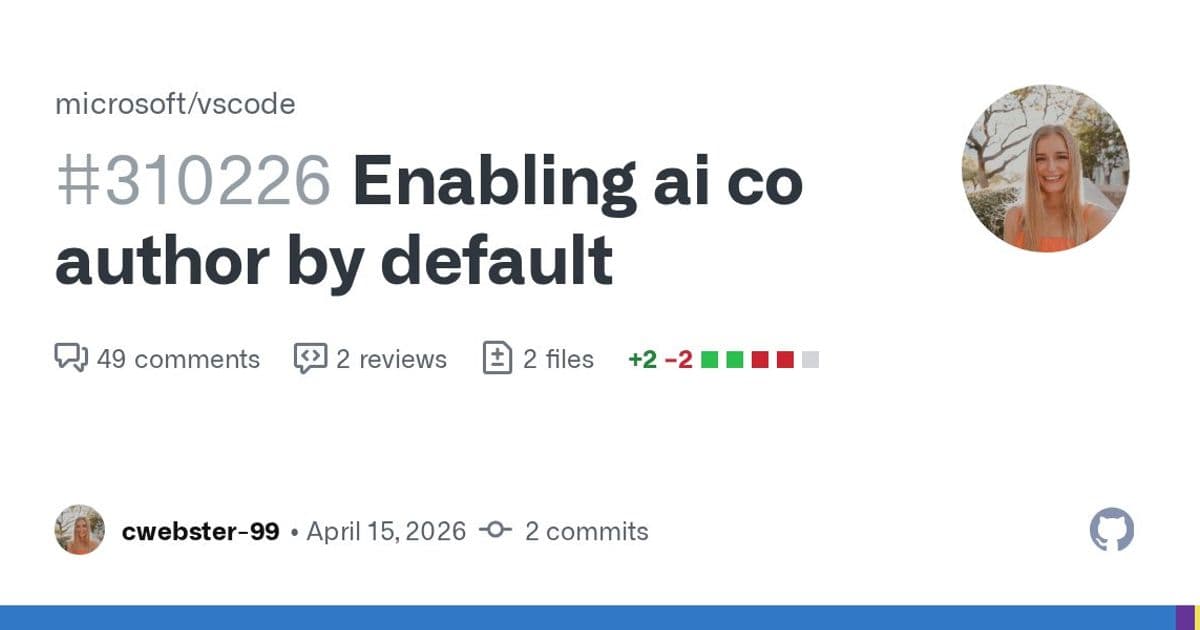

The modification in pull request #310226 alters the git.addAICoAuthor configuration setting in VS Code's Git extension, changing its default value from "off" to "all". This means that by default, when the Git extension detects AI-generated code contributions, it will automatically add a Co-authored-by trailer to commit messages attributing the work to Copilot.
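For readers who would rather not depend on the default, the setting can be pinned explicitly in a user or workspace settings.json. The fragment below is a minimal sketch, assuming that "off" (the previous default named in the pull request) is still an accepted value and still suppresses the trailer. That behavior is not confirmed here for the affected builds.

```jsonc
{
  // Assumption: "off" was the default before pull request #310226 and
  // remains a valid value. Pinning it overrides the new "all" default.
  "git.addAICoAuthor": "off"
}
```

Placing this in the user-level settings applies it to every workspace, while a workspace-level .vscode/settings.json limits it to a single repository.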

The change appears minimal on paper, a single flipped default, but its implications are significant. As one commenter noted, "The logic here was: 'Let's inject our own name into every commit, even for users who never used Copilot, and ship it as a silent default.'"

User Backlash

The reaction to this change has been overwhelmingly negative. The pull request has accumulated 372 dislikes compared to just 2 likes, with many comments expressing frustration and anger.

Developers reported several troubling behaviors:

- Attribution without AI use: Users who have explicitly disabled AI features with "chat.disableAIFeatures": true still found Copilot attribution added to their commits.

- Invisible changes: The modification happened silently, with no notification that commit messages would now include AI attribution.

- False attribution: Some users reported seeing Copilot co-authorship on commits where they manually wrote every line of code and never interacted with AI features.

"I have 'chat.disableAIFeatures': true and co-authored by copilot still gets inserted into most commits. This is absolutely unacceptable," wrote one contributor.

Broader Concerns

The backlash extends beyond this specific change, touching on deeper issues about trust and transparency in developer tools.

Legal Implications

Several commenters raised legal concerns about automatic co-authorship. "This borders on fraud. Claiming co-authorship is a legal statement, with legal implications for re-licensing code," noted one developer. Co-authorship tags in Git are typically used to give proper credit to human collaborators who contributed to code, and automatically adding AI attribution could have unintended legal consequences.

Erosion of Trust

Many developers felt the change represented a breach of trust. "Whether they used it or not. This is exactly why Microsoft can't be trusted with your code, your commits, or your SDLC," commented one user.

The sentiment was widespread enough that some developers announced they would switch to alternative editors like Zed, Emacs, or other tools that don't automatically insert AI attribution.

Devaluation of Attribution

There's also concern that automatically tagging every commit with AI co-authorship devalues the meaning of co-authorship itself. "If every commit made in any IDE with AI features carries a Co-Authored-by tag, the metadata becomes noise," explained one commenter. "We already have a hard time tracking real authorship in PR-heavy workflows; auto-tagging makes it worse."

Microsoft's Response

After the intense backlash, Microsoft engineer dmitrivMS acknowledged the issues and promised to address them.

"Thank you all for your feedback, professional or otherwise. Sorry about the regression. I will work on fixing this in 1.119," they wrote, outlining several problems with the current implementation:

- Co-author functionality should never be enabled when AI features are disabled

- It shouldn't add attribution to changes not done by AI

- It needs better test coverage before changing the default

A follow-up pull request (#313931) has been opened to address these issues, and the change is now targeted for version 1.119 rather than the initially planned 1.117.0 release.

The Bigger Picture

This incident reflects broader tensions in the AI development tools space. As AI assistants become more prevalent in coding environments, questions arise about how to properly attribute work and maintain transparency with users.

The controversy also highlights a growing concern among developers about "feature creep" and unwanted AI functionality being added to tools they use daily. Many developers appreciate AI assistance when explicitly requested but object to it being automatically applied or attributed.

For Microsoft, this is a balancing act between promoting its AI products and respecting user autonomy. The automatic attribution could be read as an attempt to inflate Copilot usage metrics across the GitHub ecosystem, but as the backlash shows, such tactics risk alienating the developer community the company depends on.

As one commenter put it: "I guess when no one uses your service, you have to pretend people are by adding it to commit messages without their knowledge."

The situation remains fluid. Microsoft has promised fixes in version 1.119, but the incident has damaged trust among many developers and sparked wider conversations about the future of AI in developer tools.
