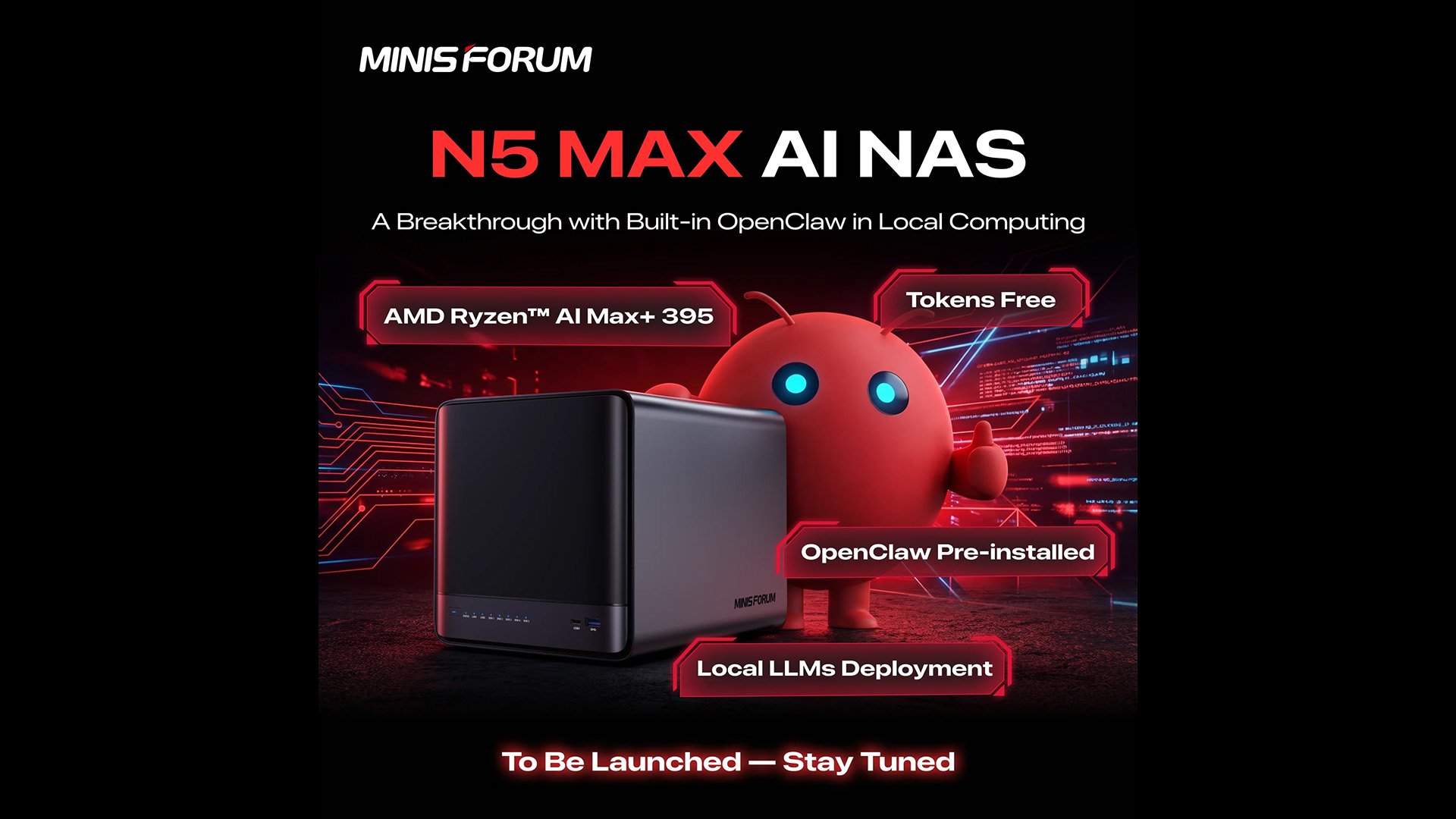

Minisforum's N5 Max AI NAS pairs AMD's Strix Halo APU with the OpenClaw AI framework, creating a local LLM server that emphasizes data security and processing performance.

Minisforum has unveiled its latest network-attached storage solution that blurs the line between traditional NAS functionality and AI processing capabilities. The N5 Max AI NAS represents a significant evolution in the company's product lineup, featuring AMD's flagship Strix Halo APU and the OpenClaw AI framework pre-installed, positioning it as a dual-purpose device capable of serving both as a conventional NAS and a local large language model server.

Strix Halo APU Powers Next-Generation AI Workloads

The centerpiece of the N5 Max AI NAS is AMD's Ryzen AI Max+ 395 Strix Halo APU, a processor that brings desktop-class performance to the compact NAS form factor. This APU configuration includes 16 Zen 5 CPU cores capable of reaching clock speeds up to 5.1GHz, providing substantial computational power for both traditional NAS operations and AI workloads.

The integrated Radeon 8060S iGPU with 40 compute units adds another layer of processing capability, while the XDNA 2 NPU is dedicated to accelerating AI tasks. The processor's 64MB of L3 cache helps keep frequently accessed data close to the cores when several operations run concurrently.

What makes this configuration particularly compelling for AI applications is the memory support. The Strix Halo APU can be configured with 32GB to 128GB of system memory, and Minisforum is likely opting for the higher end of this spectrum. This memory capacity is crucial because the size of the language model a system can run is bounded largely by the memory available to hold its weights; more memory allows larger models to be loaded and processed locally without the need for external cloud resources.
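As a rough illustration of why memory capacity matters, the sketch below estimates the space a model's weights occupy at a given precision and checks it against a memory budget. The function names and the 25% reserve for the operating system, NAS services, and inference cache are assumptions chosen for illustration, not figures from Minisforum or AMD.

```python
def model_weight_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits(params_billion: float, bits_per_weight: int,
         memory_gib: float, reserve_fraction: float = 0.25) -> bool:
    """Return True if the weights fit in memory after holding back a reserve.

    reserve_fraction is an assumed allowance for the OS, NAS services,
    and inference cache; tune it for a real deployment.
    """
    usable_gib = memory_gib * (1 - reserve_fraction)
    return model_weight_gib(params_billion, bits_per_weight) <= usable_gib


if __name__ == "__main__":
    for memory_gib in (32, 64, 128):
        for bits in (4, 8, 16):
            verdict = "fits" if fits(70, bits, memory_gib) else "does not fit"
            print(f"70B model at {bits}-bit in {memory_gib} GiB: {verdict}")
```

By this estimate, a 70-billion-parameter model quantized to 4 bits needs about 33 GiB for its weights, which fits in a 128GB configuration but not in a 32GB one.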

OpenClaw Framework Transforms NAS into AI Server

Beyond the impressive hardware specifications, the N5 Max AI NAS ships with OpenClaw pre-installed, an open-source AI framework that fundamentally changes how users can interact with their data. Unlike traditional NAS devices that simply store and serve files, this system can be programmed to perform intelligent operations on stored content.

OpenClaw operates as a routing layer, directing user requests to appropriate large language models and determining which tools to employ for task completion. This architecture enables a wide range of AI-powered capabilities directly on the device. Users can configure the system to run photo search engines that respond to conversational prompts, automate email composition, publish social media content, or even edit videos based on natural language instructions.
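The routing idea described above can be sketched in a few lines. This is a hypothetical illustration of request routing in general, not OpenClaw's actual API: the `Route` class, the keyword matching, and the handler functions are all invented for this example, and a real framework would typically let a model choose the tool rather than match keywords.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Route:
    name: str
    keywords: tuple[str, ...]
    handler: Callable[[str], str]


# Hypothetical handlers standing in for real tools or model calls.
def search_photos(prompt: str) -> str:
    return f"[photo-search] {prompt}"


def draft_email(prompt: str) -> str:
    return f"[email-draft] {prompt}"


def general_chat(prompt: str) -> str:
    return f"[general-llm] {prompt}"


ROUTES = [
    Route("photos", ("photo", "picture", "image"), search_photos),
    Route("email", ("email", "reply", "inbox"), draft_email),
]


def route(prompt: str) -> str:
    """Send the prompt to the first route whose keyword appears in it."""
    text = prompt.lower()
    for candidate in ROUTES:
        if any(keyword in text for keyword in candidate.keywords):
            return candidate.handler(prompt)
    # No specialised tool matched, so fall back to a general model.
    return general_chat(prompt)


if __name__ == "__main__":
    print(route("Find photos from the beach trip"))
    print(route("Draft an email to the landlord"))
    print(route("Explain RAID 5"))
```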

The framework's flexibility allows it to serve as a local AI server, processing requests entirely within the device's hardware. This localization of AI processing offers significant advantages, particularly in terms of data security. All interactions and data processing occur within the machine itself, eliminating the need to share sensitive information with external cloud services or internet-connected AI platforms.

Storage Configuration and Expandability

While Minisforum has been somewhat vague about the complete specifications of the N5 Max AI NAS, educated inferences can be made based on the company's existing N5 series products. The Max variant appears to utilize the same chassis design as the outgoing N5 AI NAS and N5 AI Pro NAS models.

If this chassis continuity holds true, the N5 Max AI NAS would feature five drive bays supporting both 3.5-inch and 2.5-inch HDDs, with each bay capable of accommodating drives up to 30TB in capacity. This translates to a potential raw storage capacity of 150TB across the HDD bays alone.
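The capacity arithmetic is simple enough to check directly. The sketch below assumes the five bays and 30TB drives inferred above, and adds the usable capacity under single and double parity for comparison; the function names are illustrative, and filesystem overhead is ignored.

```python
def raw_capacity_tb(bays: int, drive_tb: int) -> int:
    """Total capacity with every bay populated and no redundancy."""
    return bays * drive_tb


def usable_capacity_tb(bays: int, drive_tb: int, parity_drives: int) -> int:
    """Capacity left after reserving whole drives' worth of space for parity.

    parity_drives=1 corresponds to RAID 5, parity_drives=2 to RAID 6.
    """
    if not 0 <= parity_drives < bays:
        raise ValueError("parity_drives must be between 0 and bays - 1")
    return (bays - parity_drives) * drive_tb


if __name__ == "__main__":
    bays, drive_tb = 5, 30  # assumed from the existing N5 chassis
    print("Raw:", raw_capacity_tb(bays, drive_tb), "TB")
    print("Single parity:", usable_capacity_tb(bays, drive_tb, 1), "TB")
    print("Double parity:", usable_capacity_tb(bays, drive_tb, 2), "TB")
```

With redundancy enabled, the usable figure drops to 120TB with single parity or 90TB with double parity, so the 150TB headline number is a ceiling rather than a typical deployment.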

Additionally, the system would include three M.2 slots for solid-state storage, with two of these slots supporting U.2 drives. This combination of high-capacity HDD storage and high-speed M.2/U.2 SSDs creates a versatile storage architecture capable of handling both bulk data storage and performance-intensive AI workloads.

The Growing Trend of AI-Enabled NAS Systems

The N5 Max AI NAS represents a broader industry trend toward integrating AI acceleration capabilities into network-attached storage systems. This convergence of storage and AI processing creates devices that serve dual purposes, functioning as both traditional file servers and local AI processing hubs.

This trend addresses several key market demands. First, it provides users with the ability to run AI workloads locally, which is particularly important for organizations and individuals concerned about data privacy and security. Second, it reduces dependency on cloud-based AI services, which can be costly and may raise compliance issues for certain types of data.

Third, local AI processing avoids the network round trip to a cloud service, which reduces latency and allows more responsive interactions and faster processing of large datasets already stored on the device. This is especially relevant for applications like video analysis, large-scale document processing, or real-time content generation.

Security Considerations and OpenClaw Framework

While the integration of OpenClaw presents exciting possibilities, it also introduces security considerations that users must address. The framework's popularity has grown rapidly, but this growth has been accompanied by security challenges.

The primary concern centers around the framework's configuration requirements. If not properly secured, OpenClaw applications can potentially leak sensitive data to external networks. This risk is compounded by the existence of ClawHub, a third-party repository for OpenClaw extensions that has been found to contain malicious content.

These security challenges highlight the importance of proper configuration and careful selection of extensions when deploying AI-enabled NAS systems. Users must balance the powerful capabilities offered by frameworks like OpenClaw against the potential security risks they introduce.
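One common way to apply "careful selection of extensions" in practice is to pin each approved extension to a known checksum and refuse anything else. The sketch below shows that general technique using Python's standard library; it is not a feature of OpenClaw or ClawHub, and the extension name and allowlist structure are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the extension's contents."""
    return hashlib.sha256(data).hexdigest()


def is_approved(name: str, data: bytes, pinned: dict[str, str]) -> bool:
    """Accept an extension only if its digest matches the pinned value.

    `pinned` maps extension names to digests recorded when each
    extension was reviewed. Unknown names are rejected outright.
    """
    expected = pinned.get(name)
    if expected is None:
        return False
    return hmac.compare_digest(sha256_hex(data), expected)


if __name__ == "__main__":
    reviewed_build = b"contents of a reviewed extension"
    pinned = {"photo-search": sha256_hex(reviewed_build)}

    print(is_approved("photo-search", reviewed_build, pinned))
    print(is_approved("photo-search", b"modified contents", pinned))
    print(is_approved("unreviewed-extension", b"anything", pinned))
```

A check like this does not make an extension safe on its own, but it does ensure that what runs on the device is the same build that was reviewed.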

Market Positioning and Future Implications

The N5 Max AI NAS positions Minisforum at the intersection of several growing market segments: high-performance compact computing, AI processing, and network-attached storage. By combining these elements into a single device, the company is targeting users who require both substantial storage capacity and local AI processing capabilities.

This approach is particularly relevant for small to medium-sized businesses, creative professionals, and technically sophisticated home users who want to maintain control over their data while leveraging AI capabilities. The device's ability to process AI workloads locally while storing large amounts of data makes it suitable for applications ranging from media production to data analysis to content generation.

The success of this product could influence the broader NAS market, potentially accelerating the integration of AI capabilities into storage devices across various price points and form factors. As AI processing becomes increasingly important in both consumer and enterprise contexts, the convergence of storage and AI processing represented by the N5 Max AI NAS may become a standard feature rather than a premium differentiator.

Pricing and Availability Considerations

As of the announcement, Minisforum has not disclosed pricing or a specific release date for the N5 Max AI NAS. This lack of pricing information makes it difficult to assess the device's market positioning relative to competing solutions.

However, given the premium components involved, particularly the Strix Halo APU and the substantial memory configurations likely to be offered, the device is expected to command a price premium over conventional NAS systems. The question will be whether the integrated AI capabilities and the convenience of having both storage and AI processing in a single device justify this premium for the target market.

The timing of the release will also be crucial, as the AI processing market continues to evolve rapidly. The device's success may depend on how well it balances current AI processing capabilities with the flexibility to adapt to emerging AI frameworks and models.

The N5 Max AI NAS represents a significant step in the evolution of network-attached storage, transforming these devices from simple file servers into intelligent processing hubs capable of running sophisticated AI workloads locally. As the boundaries between storage, computing, and AI processing continue to blur, products like this may define the next generation of data management and processing solutions.
