GitHub engineers reveal common failure points in multi-agent systems and provide concrete patterns for building reliable workflows through typed schemas, action schemas, and structured interfaces enforced by Model Context Protocol (MCP).

Gwen Davis · @purpledragon85
February 24, 2026 | 4 minutes

If you've built a multi-agent workflow, you've probably seen it fail in a way that's hard to explain. The system completes, and agents take actions. But somewhere along the way, something subtle goes wrong. You might see an agent close an issue that another agent just opened, or ship a change that fails a downstream check it didn't know existed.

That's because the moment agents begin handling related tasks (triaging issues, proposing changes, running checks, and opening pull requests), they start making implicit assumptions about state, ordering, and validation. Unless you give them explicit instructions, data formats, and interfaces, those assumptions will diverge and the workflow won't go the way you planned.

Through our work on agentic experiences at GitHub across GitHub Copilot, internal automations, and emerging multi-agent orchestration patterns, we've seen multi-agent systems behave much less like chat interfaces and much more like distributed systems. This post is for engineers building multi-agent systems. We'll walk through the most common reasons they fail and the engineering patterns that make them more reliable.

- Natural language is messy. Typed schemas make it reliable.

Multi-agent workflows often fail early because agents exchange messy language or inconsistent JSON. Field names change, data types don't match, formatting shifts, and nothing enforces consistency. Just like establishing contracts early in development helps teams collaborate without stepping on each other, typed interfaces and strict schemas add structure at every boundary.

Agents pass machine-checkable data, invalid messages fail fast, and downstream steps don't have to guess what a payload means. Most teams start by defining the data shape they expect agents to return:

type UserProfile = { id: number; email: string; plan: "free" | "pro" | "enterprise"; };

This changes debugging from "inspect logs and guess" to "this payload violated schema X." Treat schema violations like contract failures: retry, repair, or escalate before bad state propagates.
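As a minimal sketch of that "retry, repair, or escalate" loop, the snippet below validates an untrusted payload against the `UserProfile` shape and gives the agent a bounded number of attempts before escalating. The names `checkUserProfile`, `acceptOrEscalate`, and `callAgent` are hypothetical, the checks are hand-written to keep the example dependency-free (a schema library could replace them), and the agent call is synchronous only for brevity; a real one would likely be async.

```typescript
// Same shape as the UserProfile type shown above.
type UserProfile = {
  id: number;
  email: string;
  plan: "free" | "pro" | "enterprise";
};

// Hypothetical result type: a valid profile, or the list of schema violations.
type ProfileCheck =
  | { status: "valid"; profile: UserProfile }
  | { status: "invalid"; violations: string[] };

const ALLOWED_PLANS: readonly string[] = ["free", "pro", "enterprise"];

// Validate an untrusted payload (for example, parsed agent output) field by field.
function checkUserProfile(payload: unknown): ProfileCheck {
  if (typeof payload !== "object" || payload === null) {
    return { status: "invalid", violations: ["payload is not an object"] };
  }
  const record = payload as Record<string, unknown>;
  const id = record["id"];
  const email = record["email"];
  const plan = record["plan"];
  const violations: string[] = [];
  if (typeof id !== "number") violations.push("id must be a number");
  if (typeof email !== "string") violations.push("email must be a string");
  if (typeof plan !== "string" || ALLOWED_PLANS.indexOf(plan) === -1) {
    violations.push("plan must be free, pro, or enterprise");
  }
  if (violations.length > 0) return { status: "invalid", violations };
  // The casts are safe here because every field was checked above.
  return {
    status: "valid",
    profile: {
      id: id as number,
      email: email as string,
      plan: plan as UserProfile["plan"],
    },
  };
}

// Hypothetical policy: retry up to maxAttempts, then escalate with the last violations.
type Outcome =
  | { status: "accepted"; profile: UserProfile; attempts: number }
  | { status: "escalated"; violations: string[]; attempts: number };

function acceptOrEscalate(
  callAgent: (attempt: number) => unknown,
  maxAttempts: number = 3
): Outcome {
  let lastViolations: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const check = checkUserProfile(callAgent(attempt));
    if (check.status === "valid") {
      return { status: "accepted", profile: check.profile, attempts: attempt };
    }
    lastViolations = check.violations;
  }
  return { status: "escalated", violations: lastViolations, attempts: maxAttempts };
}
```

Because the violations travel with the escalation, whoever picks it up (a human or a repair agent) sees exactly which fields broke the contract instead of reading raw logs.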

The bottom line: Typed schemas are table stakes in multi-agent workflows. Without them, nothing else works. See how GitHub Models enable structured, repeatable AI workflows in real projects.

- Vague intent breaks agents. Action schemas make it clear.

Even with typed data, multi-agent workflows still fail because LLMs don't follow implied intent, only explicit instructions. "Analyze this issue and help the team take action" sounds clear. But different agents may close, assign, escalate, or do nothing—each reasonable, none automatable.

Action schemas fix this by defining the exact set of allowed actions and their structure. Not every step needs structure, but the outcome must always resolve to a small, explicit set of actions. Here's what an action schema might look like:

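import { z } from "zod";
const ActionSchema = z.discriminatedUnion("type", [ z.object({ type: z.literal("request-more-info"), missing: z.array(z.string()) }), z.object({ type: z.literal("assign"), assignee: z.string() }), z.object({ type: z.literal("close-as-duplicate"), duplicateOf: z.number() }), z.object({ type: z.literal("no-action") }) ]);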

With this in place, agents must return exactly one valid action. Anything else fails validation and is retried or escalated.
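To illustrate what "exactly one valid action" can look like at the point of use, here is a dependency-free sketch. It assumes a hand-written parser (`parseTriageAction`, a hypothetical stand-in for validating against the schema with a library) and a dispatcher (`describeAction`, also hypothetical) that the compiler checks for exhaustiveness.

```typescript
// Mirrors the four action types in the action schema above.
type TriageAction =
  | { type: "request-more-info"; missing: string[] }
  | { type: "assign"; assignee: string }
  | { type: "close-as-duplicate"; duplicateOf: number }
  | { type: "no-action" };

// Returns a well-formed action, or null if the payload is not one of the
// allowed actions. A null result is the signal to retry or escalate.
function parseTriageAction(payload: unknown): TriageAction | null {
  if (typeof payload !== "object" || payload === null) return null;
  const record = payload as Record<string, unknown>;
  switch (record["type"]) {
    case "request-more-info": {
      const missing = record["missing"];
      if (Array.isArray(missing) && missing.every((m) => typeof m === "string")) {
        return { type: "request-more-info", missing: missing as string[] };
      }
      return null;
    }
    case "assign": {
      const assignee = record["assignee"];
      return typeof assignee === "string" ? { type: "assign", assignee } : null;
    }
    case "close-as-duplicate": {
      const duplicateOf = record["duplicateOf"];
      return typeof duplicateOf === "number"
        ? { type: "close-as-duplicate", duplicateOf }
        : null;
    }
    case "no-action":
      return { type: "no-action" };
    default:
      // Unknown or missing "type": not an allowed action.
      return null;
  }
}

// Exhaustive dispatch: if a new action type is added to TriageAction without
// a matching case here, the `never` assignment fails to compile.
function describeAction(action: TriageAction): string {
  switch (action.type) {
    case "request-more-info":
      return `ask reporter for: ${action.missing.join(", ")}`;
    case "assign":
      return `assign to ${action.assignee}`;
    case "close-as-duplicate":
      return `close as duplicate of #${action.duplicateOf}`;
    case "no-action":
      return "leave issue unchanged";
    default: {
      const unreachable: never = action;
      return unreachable;
    }
  }
}
```

The design choice worth noting is the pairing: the parser rejects anything outside the allowed set at runtime, and the `never` check keeps the downstream handler in sync with that set at compile time.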

The bottom line: Most agent failures are action failures. To reduce ambiguity even earlier in the workflow, at the instruction level, see this guide to writing effective custom instructions.

- Loose interfaces create errors. MCP adds the structure agents need.

Typed schemas, constrained actions, and structured reasoning only work if they're consistently enforced. Without enforcement, they're conventions, not guarantees. Model Context Protocol (MCP) is the enforcement layer that turns these patterns into contracts.

MCP tool definitions declare an explicit input schema and, optionally, an output schema, so calls can be validated before execution.

{ "name": "create_issue", "inputSchema": { ... }, "outputSchema": { ... } }

With MCP, agents can't invent fields, omit required inputs, or drift across interfaces. Validation happens before execution, which prevents bad state from ever reaching production systems.
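The validate-before-execute idea can be sketched without any SDK. The code below is not the MCP SDK or its wire format: `ToolDefinition`, `FieldSpec`, `validateCall`, and `callTool` are hypothetical names, the field list is a simplified stand-in for a JSON Schema, and the `title` and `body` fields for `create_issue` are assumptions made for illustration only.

```typescript
// Hypothetical, simplified input description; real MCP tools use JSON Schema.
type FieldSpec = { field: string; kind: "string" | "number"; required: boolean };

type ToolDefinition = {
  toolName: string;
  fields: FieldSpec[];
  run: (args: Record<string, unknown>) => string;
};

// Returns every problem with a call; an empty list means the call is valid.
function validateCall(tool: ToolDefinition, args: Record<string, unknown>): string[] {
  const problems: string[] = [];
  // Reject fields the tool never declared (agents can't invent fields).
  for (const key of Object.keys(args)) {
    if (!tool.fields.some((spec) => spec.field === key)) {
      problems.push(`unknown field: ${key}`);
    }
  }
  // Check required fields and declared types.
  for (const spec of tool.fields) {
    const value = args[spec.field];
    if (value === undefined) {
      if (spec.required) problems.push(`missing required field: ${spec.field}`);
    } else if (typeof value !== spec.kind) {
      problems.push(`field ${spec.field} must be a ${spec.kind}`);
    }
  }
  return problems;
}

type CallResult =
  | { status: "executed"; output: string }
  | { status: "rejected"; problems: string[] };

// The handler runs only after validation passes, so invalid calls never
// touch the underlying system.
function callTool(tool: ToolDefinition, args: Record<string, unknown>): CallResult {
  const problems = validateCall(tool, args);
  if (problems.length > 0) return { status: "rejected", problems };
  return { status: "executed", output: tool.run(args) };
}

// Example tool with assumed fields; the counter shows when the handler runs.
let executions = 0;
const createIssue: ToolDefinition = {
  toolName: "create_issue",
  fields: [
    { field: "title", kind: "string", required: true },
    { field: "body", kind: "string", required: false },
  ],
  run: (args) => {
    executions += 1;
    return `created issue: ${String(args["title"])}`;
  },
};
```

Calling `callTool(createIssue, { titel: "typo" })` is rejected with both an unknown-field and a missing-field problem, and `executions` stays unchanged, which is the property the paragraph above describes: bad calls are stopped at the boundary rather than discovered downstream.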

The bottom line: Schemas define structure whereas action schemas define intent. MCP enforces both. Learn more about how MCP works and why it matters.

Moving forward together

Multi-agent systems work when structure is explicit. When you add typed schemas, constrained actions, and structured interfaces enforced by MCP, agents start behaving like reliable system components. The shift is simple but powerful: treat agents like code, not chat interfaces. Learn how MCP enables structured, deterministic agent-tool interactions.

Tags: agentic AI AI agents GitHub Copilot

Written by Gwen Davis, a senior content strategist at GitHub, where she writes about developer experience, AI-powered workflows, and career growth in tech.

Related posts

AI & ML How AI is reshaping developer choice (and Octoverse data proves it) AI is rewiring developer preferences through convenience loops. Octoverse 2025 reveals how AI compatibility is becoming the new standard for technology choice.

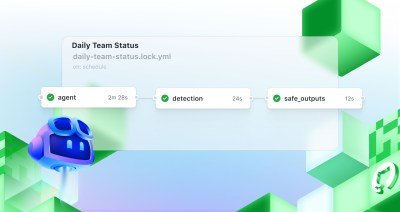

AI & ML Automate repository tasks with GitHub Agentic Workflows Discover GitHub Agentic Workflows, now in technical preview. Build automations using coding agents in GitHub Actions to handle triage, documentation, code quality, and more.

AI & ML Continuous AI in practice: What developers can automate today with agentic CI Think of Continuous AI as background agents that operate in your repository for tasks that require reasoning.

We do newsletters, too Discover tips, technical guides, and best practices in our biweekly newsletter just for devs.

Your email address

Your email address Subscribe Yes please, I'd like GitHub and affiliates to use my information for personalized communications, targeted advertising and campaign effectiveness. See the GitHub Privacy Statement for more details.

Comments

Please log in or register to join the discussion