Nvidia's new DGX Station workstation brings the GB300 Grace Blackwell Ultra Desktop Superchip to developers, offering 748GB of unified memory and a 1600W power rating for AI workloads.

Nvidia has officially launched its DGX Station workstation PC, a powerful AI development system that brings the company's latest GB300 Grace Blackwell Ultra Desktop Superchip to developers, researchers, and data scientists. The workstation, which was unveiled at GTC 2025, is now available to order and will begin shipping in the coming months from major manufacturers including Asus, Dell, Gigabyte, MSI, Supermicro, and HP.

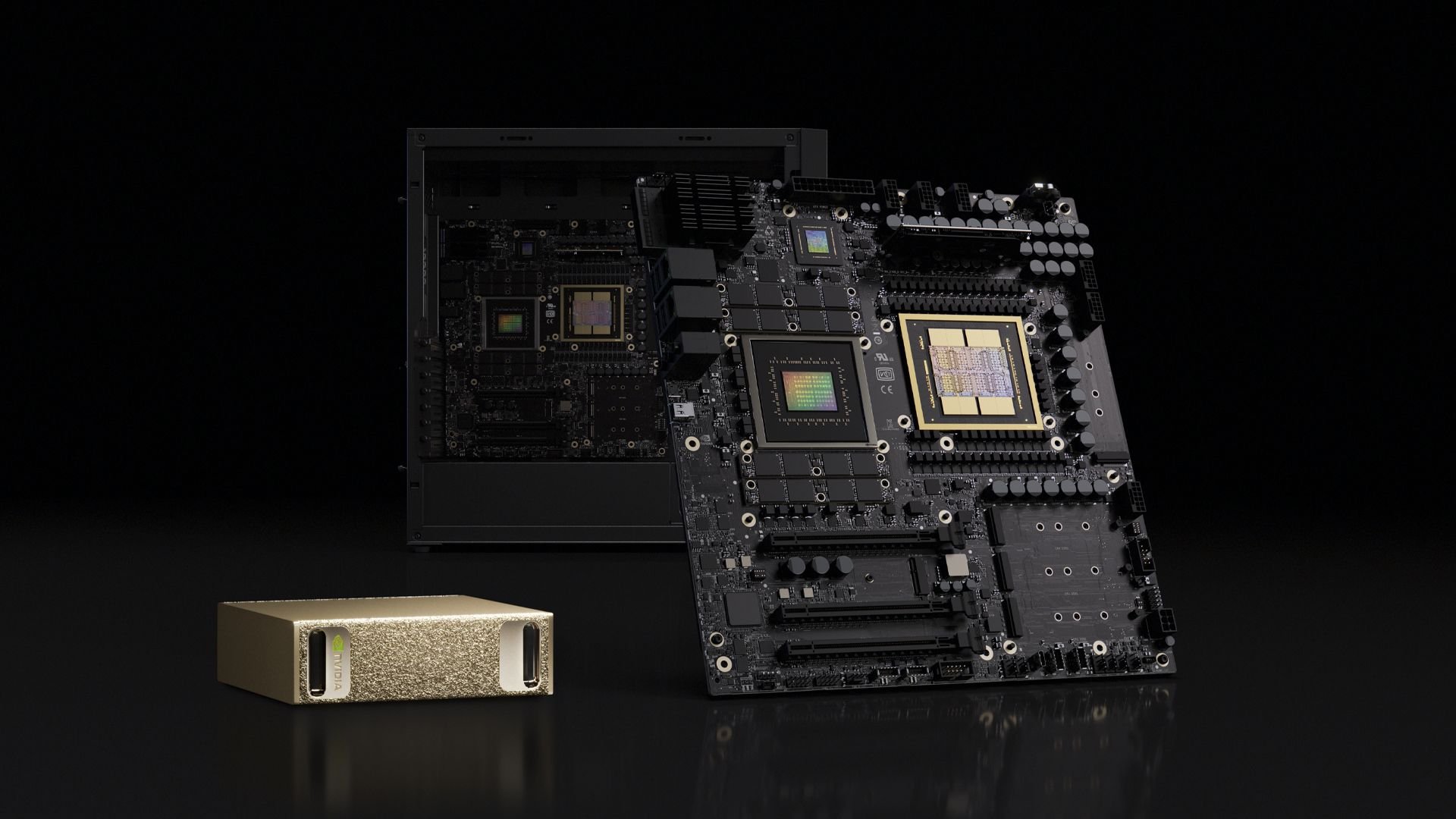

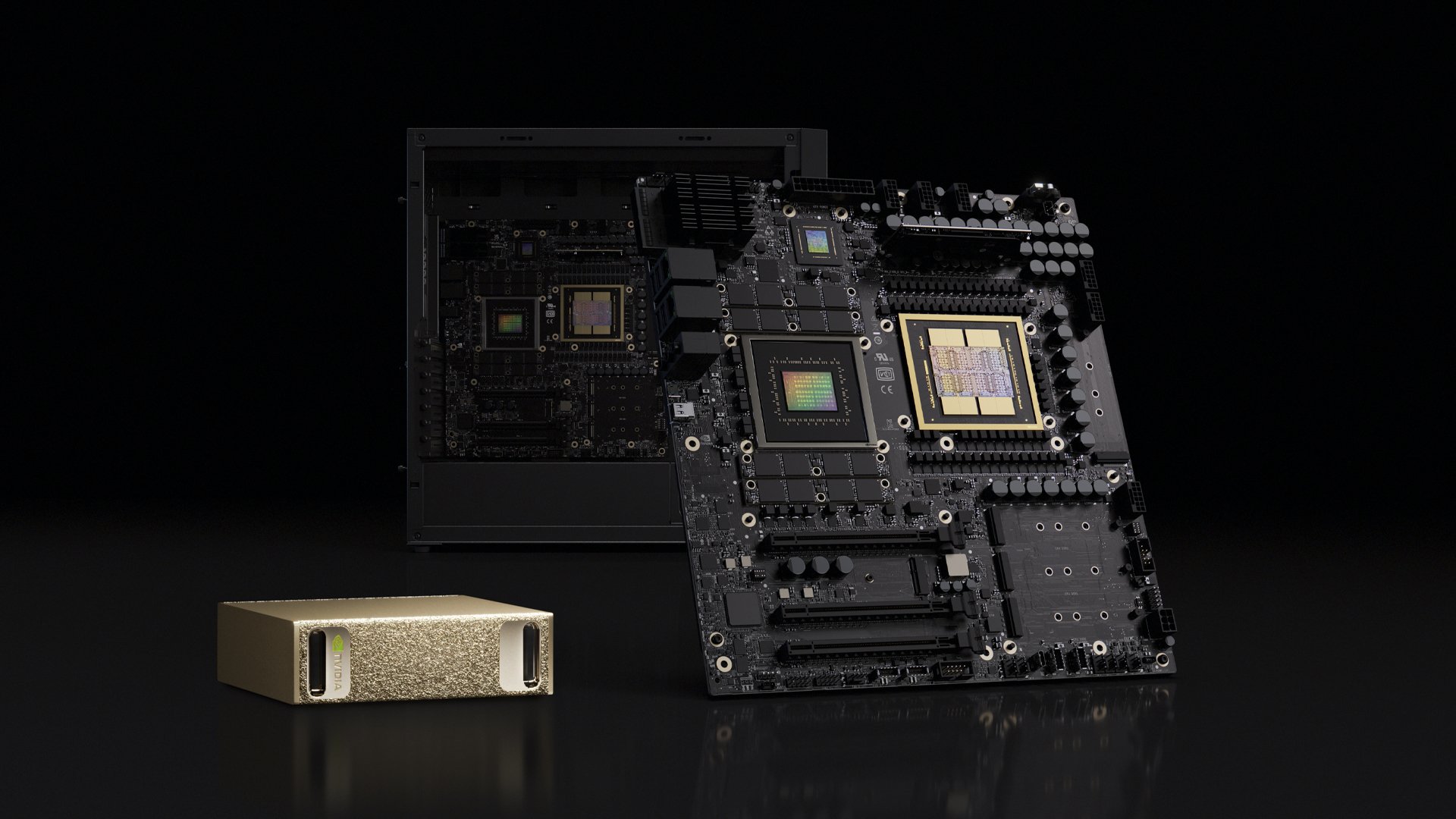

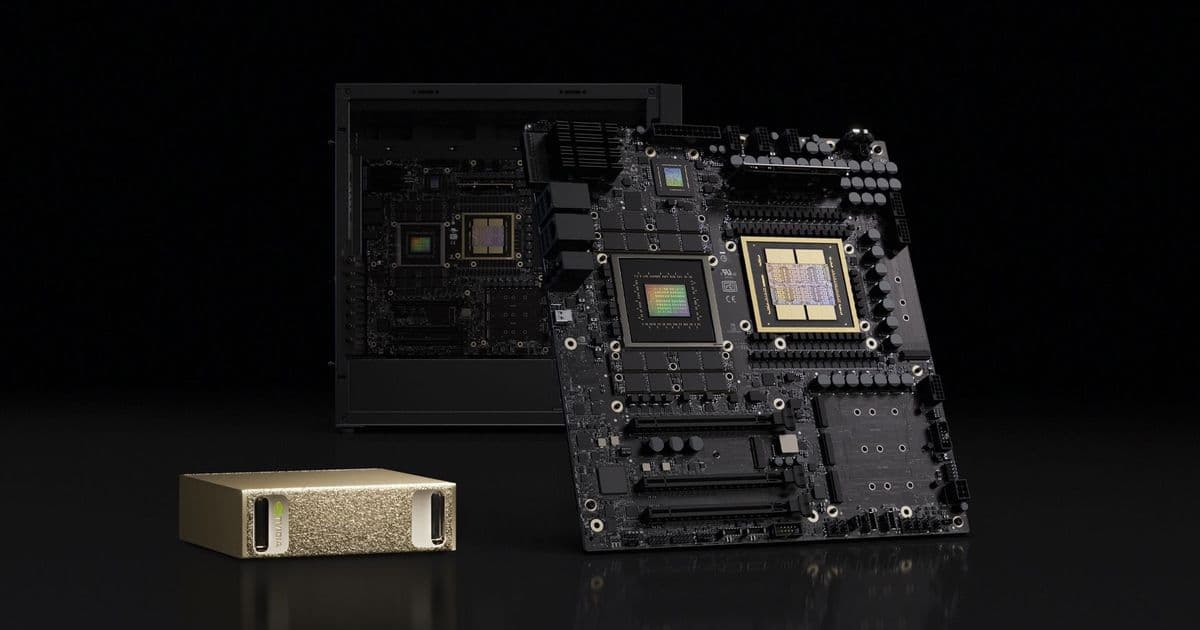

GB300 Grace Blackwell Ultra Desktop Superchip Architecture

The heart of the DGX Station is Nvidia's GB300 Grace Blackwell Ultra Desktop Superchip, which combines a 72-core Grace CPU with a Blackwell Ultra GPU. The two are linked by a 900 GB/s NVLink C2C interface, creating a unified system that avoids the traditional bottleneck between CPU and GPU memory.

The GB300 represents a significant step in AI workstation architecture. Because the memory pools are unified, the CPU and GPU can access each other's memory directly, which reduces the overhead of copying data between separate memory domains. This is particularly beneficial for large language model development and other memory-intensive AI workloads.
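To put the 900 GB/s figure in perspective, the following is a rough sketch that compares best-case transfer times over NVLink C2C and over a PCIe Gen 5 x16 link. It rests on two assumptions that are not stated in the article: that the 900 GB/s figure is the aggregate of both directions, and that the PCIe baseline is the theoretical bidirectional rate. The 100 GB payload is hypothetical.

```python
# Peak link figures in GB/s. Only the first comes from the article.
NVLINK_C2C_GB_PER_S = 900.0  # assumed to be the aggregate of both directions

# Assumption for comparison: theoretical PCIe Gen 5 x16, both directions combined
# (32 GT/s per lane x 16 lanes x 128/130 encoding / 8 bits per byte x 2).
PCIE5_X16_GB_PER_S = 32.0 * 16 * (128 / 130) / 8 * 2


def transfer_seconds(payload_gb: float, link_gb_per_s: float) -> float:
    """Best-case time to move payload_gb over a link running at its peak rate."""
    if link_gb_per_s <= 0:
        raise ValueError("link bandwidth must be positive")
    return payload_gb / link_gb_per_s


def peak_ratio() -> float:
    """Ratio of the two peak figures; measured ratios will differ."""
    return NVLINK_C2C_GB_PER_S / PCIE5_X16_GB_PER_S


if __name__ == "__main__":
    payload_gb = 100.0  # hypothetical working set
    print(f"C2C:  {transfer_seconds(payload_gb, NVLINK_C2C_GB_PER_S):.3f} s")
    print(f"PCIe: {transfer_seconds(payload_gb, PCIE5_X16_GB_PER_S):.3f} s")
    print(f"peak ratio: {peak_ratio():.1f}x")
```

These are ceiling numbers only. Protocol overhead, access patterns, and memory bandwidth on either side of the link all lower what an application actually sees.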

Massive Memory Configuration

A standout feature of the DGX Station is its memory configuration. The system comes equipped with a total of 748GB of onboard memory, split across two pools:

- CPU Memory: 496GB of LPDDR5X memory rated at 396 GB/s of bandwidth

- GPU Memory: 252GB of HBM3e memory rated at 7.1 TB/s of bandwidth

The large LPDDR5X pool on the CPU side and the high-bandwidth HBM3e on the GPU side give developers substantial capacity for AI model development and training. This configuration allows for working with larger models and datasets locally, without immediately turning to additional hardware or cloud resources.
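As a rough sizing aid, the sketch below estimates how many model parameters could fit in the two pools listed above, counting weights only. The byte widths per format are standard, but the `headroom` fraction reserved for activations, KV cache, and the runtime is a hypothetical placeholder rather than a measured value, and 1 GB is assumed to mean 10^9 bytes.

```python
# Capacities quoted in the article, in decimal gigabytes (assumption: 1 GB = 1e9 bytes).
GPU_HBM3E_GB = 252
CPU_LPDDR5X_GB = 496

# Bytes per parameter for common weight formats (weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def max_params_billions(capacity_gb: float, bytes_per_param: float,
                        headroom: float = 0.2) -> float:
    """Upper bound on parameters (in billions) whose weights fit in capacity_gb.

    headroom is a hypothetical fraction of memory kept free for everything
    that is not weights; 0.2 is an illustrative guess.
    """
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0, 1)")
    usable_bytes = capacity_gb * 1e9 * (1.0 - headroom)
    return usable_bytes / bytes_per_param / 1e9


def capacity_table(headroom: float = 0.2) -> dict:
    """Parameter ceilings for the GPU pool alone and for both pools combined."""
    pools = {
        "gpu_only": GPU_HBM3E_GB,
        "gpu_plus_cpu": GPU_HBM3E_GB + CPU_LPDDR5X_GB,
    }
    return {
        pool: {fmt: round(max_params_billions(gb, width, headroom), 1)
               for fmt, width in BYTES_PER_PARAM.items()}
        for pool, gb in pools.items()
    }


if __name__ == "__main__":
    for pool, row in capacity_table().items():
        print(pool, row)
```

Weights that spill from HBM3e into LPDDR5X are still addressable, but they are read at the lower CPU-memory bandwidth, so the combined figure is a capacity ceiling rather than a performance estimate.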

Expandability and Connectivity

Despite its integrated superchip design, the DGX Station maintains workstation-level expandability. The system features three physical PCIe Gen 5 x16 slots, one wired with 16 lanes and the other two with eight lanes each. This configuration allows users to add discrete GPUs for specialized workloads such as simulation, ray-traced visualization, or additional AI acceleration.
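The lane wiring matters because it sets each slot's bandwidth ceiling. A minimal sketch of that arithmetic follows, using the PCIe Gen 5 per-lane rate of 32 GT/s with 128b/130b encoding. The figures are theoretical, per direction, and ignore protocol overhead; the slot numbering is illustrative only.

```python
# Electrical lane counts for the three slots, as described in the article.
SLOT_LANES = (16, 8, 8)

PCIE5_GT_PER_S = 32.0            # PCIe Gen 5 raw transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_gb_per_s() -> float:
    """Theoretical one-direction bandwidth of a single Gen 5 lane, in GB/s."""
    return PCIE5_GT_PER_S * ENCODING_EFFICIENCY / 8


def slot_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a slot with the given lane count."""
    return lanes * lane_gb_per_s()


def slot_table() -> list:
    """(slot number, lanes, GB/s per direction) for each slot."""
    return [(index + 1, lanes, round(slot_gb_per_s(lanes), 1))
            for index, lanes in enumerate(SLOT_LANES)]


if __name__ == "__main__":
    for slot, lanes, bandwidth in slot_table():
        print(f"slot {slot}: x{lanes} -> {bandwidth} GB/s per direction")
    print(f"total electrical lanes: {sum(SLOT_LANES)}")
```

A card placed in one of the eight-lane slots therefore has about half the host bandwidth of the same card in the sixteen-lane slot, which is worth considering for transfer-heavy workloads.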

Nvidia has certified several professional graphics cards for use in the DGX Station:

- RTX Pro 6000 Workstation Edition

- RTX Pro 6000 Blackwell Max-Q Workstation Edition

- RTX Pro 4000 Blackwell SFF Edition

- RTX Pro 2000 Blackwell graphics cards

The workstation also includes four M.2 slots for storage expansion, audio connectors, and multiple USB ports for peripheral connectivity.

Networking Capabilities

For high-speed networking, the DGX Station uses Nvidia's ConnectX-8 SuperNIC, which supports speeds of up to 800 Gb/s through two QSFP112 ports. This enterprise-grade networking enables rapid data transfer between systems and lets the workstation connect to a second DGX Station to scale model capacity and performance.

The dual DGX Station configuration allows developers to effectively double their available resources, creating a more powerful development environment for larger AI projects without moving to full data center deployments.
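For a sense of scale, the sketch below converts the 800 Gb/s figure above into gigabytes per second and estimates best-case transfer times. It assumes the figure is the usable aggregate across both ports, and the `efficiency` parameter is a hypothetical knob for protocol overhead, not a measured value.

```python
LINK_GBIT_PER_S = 800.0  # ConnectX-8 figure quoted in the article (gigabits per second)


def link_gb_per_s(gbit_per_s: float = LINK_GBIT_PER_S, efficiency: float = 1.0) -> float:
    """Convert gigabits/s to gigabytes/s, scaled by a hypothetical efficiency factor."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return gbit_per_s / 8 * efficiency


def transfer_seconds(payload_gb: float, efficiency: float = 1.0) -> float:
    """Best-case time to send payload_gb (decimal gigabytes) over the link."""
    return payload_gb / link_gb_per_s(efficiency=efficiency)


if __name__ == "__main__":
    # Example payload: the 252GB of GPU memory listed in the article.
    print(f"line rate:      {transfer_seconds(252):.2f} s")
    print(f"80% efficiency: {transfer_seconds(252, efficiency=0.8):.2f} s")
```

At line rate the link moves 100 GB/s, so shipping the full contents of the GPU memory to a second system would take on the order of a few seconds in the best case.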

Power and Thermal Design

The DGX Station has substantial power requirements. It is rated for 1600W and features:

- One 24-pin ATX power connector

- One 8-pin EPS connector

- Three 12V-2x6 power connectors for the GPU

The power delivery system is designed to handle sustained high-performance workloads while maintaining system stability. The workstation's thermal solution must effectively dissipate heat from both the CPU and GPU components, which operate at high power levels during AI training and inference tasks.
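For planning purposes, the 1600W rating can be turned into rough energy and current figures. The sketch below treats the rating as the draw at the wall, which is an assumption: it ignores power supply efficiency and power factor, and real consumption depends on load. The `load_fraction` parameter and the example voltages are illustrative.

```python
RATED_WATTS = 1600.0  # system rating quoted in the article


def energy_kwh(hours: float, watts: float = RATED_WATTS, load_fraction: float = 1.0) -> float:
    """Energy used over the given hours at a hypothetical fraction of the rating."""
    if not 0.0 <= load_fraction <= 1.0:
        raise ValueError("load_fraction must be in [0, 1]")
    return watts * load_fraction * hours / 1000.0


def line_current_amps(volts: float, watts: float = RATED_WATTS) -> float:
    """Approximate current at a given mains voltage (power factor ignored)."""
    if volts <= 0:
        raise ValueError("volts must be positive")
    return watts / volts


if __name__ == "__main__":
    print(f"24 h at full rating: {energy_kwh(24):.1f} kWh")
    for volts in (120.0, 230.0):
        print(f"{volts:.0f} V: {line_current_amps(volts):.1f} A")
```

On a 120 V supply the full rating works out to a little over 13 A, so the capacity of the circuit feeding the workstation is worth checking before deployment.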

Target Market and Applications

The DGX Station is aimed at software developers, researchers, data scientists, and other professionals who need more AI processing power than Nvidia's smaller DGX Spark provides, but who don't require the full-scale data center deployment of larger DGX systems.

This workstation fills a critical gap in the AI development ecosystem by providing a desktop-class system with data center-level AI acceleration capabilities. It's particularly well-suited for:

- Large language model development and fine-tuning

- Computer vision algorithm development

- Scientific computing and simulation

- AI research and prototyping

- Edge AI model development

Market Context and Competition

The launch of the DGX Station comes amid growing demand for AI development hardware as organizations across industries invest in AI capabilities. By offering a powerful yet accessible workstation solution, Nvidia is addressing the needs of developers who require substantial AI processing power but may not have immediate access to cloud resources or the budget for full-scale data center deployments.

The workstation's unified memory architecture and high-bandwidth interconnects position it competitively against other AI development platforms, offering a balance of performance, capacity, and accessibility that's particularly valuable for independent researchers and smaller organizations.

The DGX Station represents Nvidia's continued commitment to democratizing access to advanced AI development tools, bringing data center-class AI acceleration to the desktop workstation form factor.
