Nvidia has quietly removed its Rubin CPX context-phase accelerators from its official roadmap, signaling a strategic pivot toward Groq 3 LPU processors following its $20 billion deal for Groq's technology and talent.

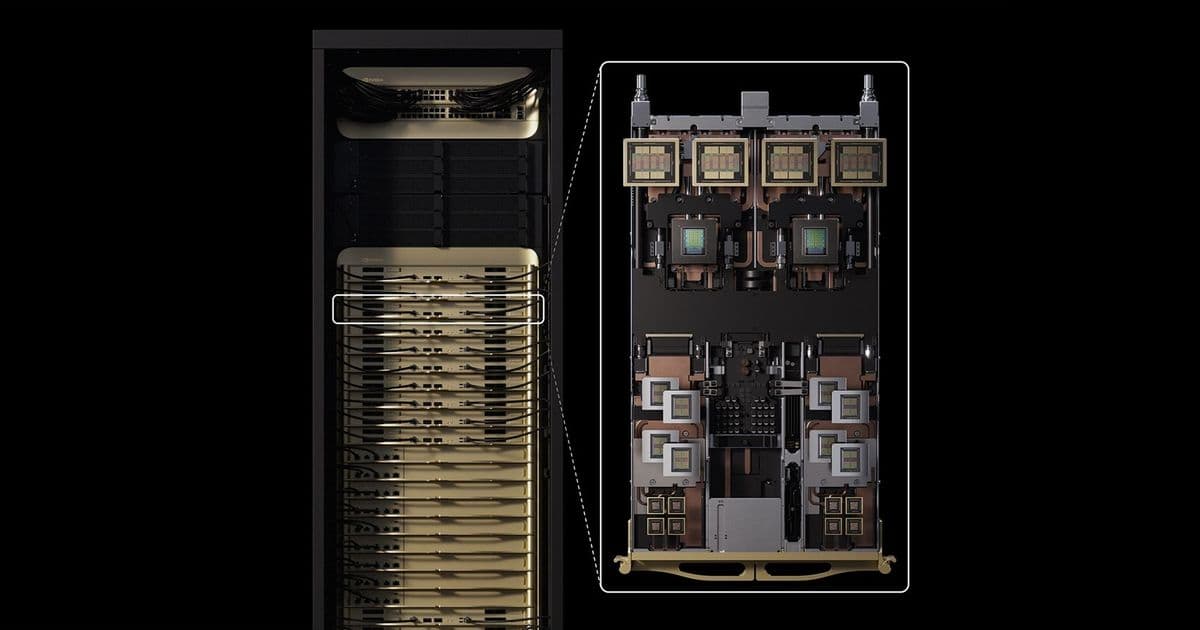

During Jensen Huang's keynote at GTC 2026, one significant absence caught industry observers' attention: the Rubin CPX context phase accelerator that Nvidia had promoted just last year as a critical component of the Vera Rubin platform. The CPX accelerators were notably absent from both the verbal presentation and the visual slides, which instead highlighted Nvidia's upcoming Groq 3 LPU processors and LPX racks, suggesting a fundamental shift in the company's architectural priorities.

Strategic Roadmap Realignment

The Rubin CPX GPU was originally designed as a specialized component of Nvidia's Vera Rubin and Vera Rubin Ultra platforms. It was engineered to accelerate the compute-intensive context phase of inference, in which the model processes a query's full input to generate the first output token. With this approach, Nvidia aimed to optimize the inference workflow by separating context processing from the subsequent token-generation phase.
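The phase split can be illustrated with a toy scheduler. This is a minimal sketch under stated assumptions, not Nvidia's software. It assumes two hypothetical worker pools: one runs the context (prefill) phase, and the other runs token generation (decode). Each request's key/value cache is handed from the first pool to the second. The `Request`, `KVCache`, `prefill`, `decode`, and `serve` names are invented for illustration, and the arithmetic inside them is placeholder logic standing in for a real model.

```python
# Hypothetical sketch of disaggregated (prefill/decode) inference; not an Nvidia API.
from dataclasses import dataclass, field


@dataclass
class Request:
    """A single inference query (hypothetical structure)."""
    request_id: int
    prompt_tokens: list[int]
    max_new_tokens: int


@dataclass
class KVCache:
    """Stand-in for the attention key/value cache built during the context phase."""
    entries: list[int] = field(default_factory=list)


def prefill(req: Request) -> tuple[KVCache, int]:
    """Context phase: process the whole prompt in one pass and emit the first token.

    Compute-bound in real systems; here it just stores the prompt in the
    cache and derives a placeholder first token.
    """
    cache = KVCache(entries=list(req.prompt_tokens))
    first_token = sum(req.prompt_tokens) % 50_000  # placeholder "model output"
    cache.entries.append(first_token)
    return cache, first_token


def decode(req: Request, cache: KVCache, first_token: int) -> list[int]:
    """Token-generation phase: produce the remaining tokens one at a time.

    Each step reads the growing cache, which is why this phase is usually
    limited by memory latency and bandwidth rather than raw compute.
    """
    output = [first_token]
    while len(output) < req.max_new_tokens:
        next_token = (cache.entries[-1] * 31 + len(cache.entries)) % 50_000  # placeholder
        cache.entries.append(next_token)
        output.append(next_token)
    return output


def serve(requests: list[Request]) -> dict[int, list[int]]:
    """Run prefill on one hypothetical pool, then hand each cache to a decode pool."""
    handoff = [(req, *prefill(req)) for req in requests]  # context-phase pool
    return {req.request_id: decode(req, cache, tok) for req, cache, tok in handoff}  # decode pool


if __name__ == "__main__":
    print(serve([Request(1, [101, 2023, 2003], 4)]))
```

Separating the phases lets each pool use hardware suited to its bottleneck. The context phase is dominated by compute over the whole prompt, while token generation repeatedly reads cached state, so latency and memory bandwidth matter most there.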

The removal of CPX from the official roadmap comes just months after Nvidia's $20 billion deal for a non-exclusive license to startup Groq's chip technology, which also brought over Groq's engineering talent. An investment of that size strongly suggests that the company now prioritizes the LPU architecture over its previously planned CPX accelerators.

Technical Specifications Comparison

The architectural differences between the two approaches are substantial:

Rubin CPX Accelerators:

- Designed to deliver up to 30 NVFP4 PetaFLOPS of compute throughput

- Utilized GDDR7 memory technology

- Offered moderate bandwidth compared to HBM3E or HBM4

- Consumed significantly less power than high-bandwidth memory solutions

- Targeted the initial context phase of inference workloads

- Entailed considerably higher latency than the LPU approach because it relied on external DRAM

Groq 3 LPU Processors:

- Current LP30 processor features 512 MB of SRAM

- Delivers 1.23 FP8 PFLOPS per processor

- LPX compute tray achieves 9.6 FP8 PFLOPS

- Complete LPX rack delivers 315 FP8 PFLOPS

- Primarily relies on internal SRAM rather than external DRAM

- Offers extremely low latency due to SRAM architecture
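The published figures can be cross-checked with simple arithmetic. The sketch below assumes that the tray and rack numbers are straight multiples of the per-processor figure and that "512 MB" means 512 MiB. The source does not state the processor counts or the aggregate SRAM that the sketch derives. Because the inputs are rounded, the ratios are only approximate.

```python
# Back-of-the-envelope check of the LP30/LPX figures quoted above.
# Assumption: tray and rack throughput are whole multiples of one LP30.
LP30_PFLOPS = 1.23       # FP8 PFLOPS per processor
LPX_TRAY_PFLOPS = 9.6    # FP8 PFLOPS per compute tray
LPX_RACK_PFLOPS = 315    # FP8 PFLOPS per rack
LP30_SRAM_MIB = 512      # assumption: "512 MB" read as 512 MiB


def implied_count(total: float, per_unit: float) -> int:
    """Round total / per_unit to the nearest whole unit (inputs are rounded)."""
    return round(total / per_unit)


def rack_estimates() -> dict[str, float]:
    """Derive implied processor counts and total rack SRAM from the quoted figures."""
    chips_per_rack = implied_count(LPX_RACK_PFLOPS, LP30_PFLOPS)
    chips_per_tray = implied_count(LPX_TRAY_PFLOPS, LP30_PFLOPS)
    return {
        "chips_per_rack": chips_per_rack,
        "chips_per_tray": chips_per_tray,
        "rack_sram_gib": chips_per_rack * LP30_SRAM_MIB / 1024,
    }


if __name__ == "__main__":
    print(rack_estimates())
```

Under these assumptions, the rack figure implies 256 LP30 processors holding about 128 GiB of SRAM in total, and the tray figure implies 8 processors per tray. The numbers do not reconcile exactly (8 × 1.23 = 9.84 rather than 9.6), which is why the sketch rounds instead of dividing exactly.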

The fundamental architectural distinction lies in memory strategy. The CPX approach used GDDR7 memory as a compromise between bandwidth and power efficiency. The Groq 3 LPU instead keeps data in on-chip SRAM, which offers faster access, lower latency, and lower energy per access than any off-chip DRAM. The trade-off is capacity: at 512 MB per processor, SRAM holds far less data than external memory, so large models must be spread across many chips.
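Two rough relations help explain this trade-off. The sketch below assumes a dense model decoded at batch size 1. Under that assumption, each generated token requires reading every weight once, so memory bandwidth sets a floor on per-token time. The sketch also counts how many 512 MB chips would be needed just to hold a model's weights in SRAM. The 70-billion-parameter FP8 model in the usage note is hypothetical. The calculation ignores the KV cache, activations, and interconnect overhead, so real deployments would need more chips.

```python
# Rough memory-strategy estimates; simplifying assumptions are noted inline.
import math

SRAM_PER_CHIP_BYTES = 512 * 2**20  # LP30 figure quoted above, read as 512 MiB


def chips_to_hold_weights(num_params: float, bytes_per_param: float) -> int:
    """Minimum number of chips whose combined SRAM fits the weights alone.

    Ignores KV cache, activations, and replication.
    """
    weight_bytes = num_params * bytes_per_param
    return math.ceil(weight_bytes / SRAM_PER_CHIP_BYTES)


def min_seconds_per_token(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound for memory-bound decode: every weight is read once per token."""
    return weight_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    # Hypothetical 70B-parameter model stored in FP8 (1 byte per parameter).
    print(chips_to_hold_weights(70e9, 1))
```

For the hypothetical 70B FP8 model, `chips_to_hold_weights(70e9, 1)` returns 131. This shows why an SRAM-first design trades per-chip capacity for speed and relies on scaling out across racks. The second function shows why keeping weights in higher-bandwidth memory shortens the minimum time per generated token.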

Market Implications and Supply Chain Context

This strategic pivot has several significant implications for the AI accelerator market:

Competitive Positioning: By emphasizing low-latency inference, Nvidia is targeting a different segment of the AI workload spectrum than competitors such as AMD and Intel, which continue to focus on raw compute performance and memory bandwidth.

Power Efficiency Leadership: The SRAM-based approach of the LPUs positions Nvidia to potentially lead in power efficiency metrics, a critical factor for data center operators facing increasing pressure to reduce energy consumption.

Customer Migration Challenges: Some Nvidia customers have already invested in software optimizations for the CPX architecture. The removal of these accelerators from the roadmap creates uncertainty for these customers, who may need to reassess their deployment strategies.

Industry Precedent: The move follows a familiar pattern in the semiconductor industry, where companies sometimes shift focus away from previously announced products, particularly after major acquisitions. Products often drop off roadmaps quietly. Removing one from public presentations a year after promoting it, however, sends a stronger strategic signal.

Supply Chain Optimization: The SRAM-focused approach of the LPUs may let Nvidia simplify its supply chain by reducing its dependence on external memory such as GDDR7 and HBM, since on-chip SRAM is fabricated as part of the processor die itself.

Looking ahead, the industry will closely monitor whether Nvidia completely abandons the CPX architecture or continues to support it for existing customers. The company's public statements and future roadmap presentations will provide further clarity on this strategic shift.

What remains certain is that the $20 billion investment in Groq's technology represents a significant bet on the future of low-latency AI inference processing, potentially reshaping the competitive dynamics in the AI accelerator market for years to come.
