Oak Ridge National Laboratory is establishing the Next Generation Data Centers Institute to address the surging electricity demand from AI datacenters through integrated research on cooling, power systems, and grid integration.

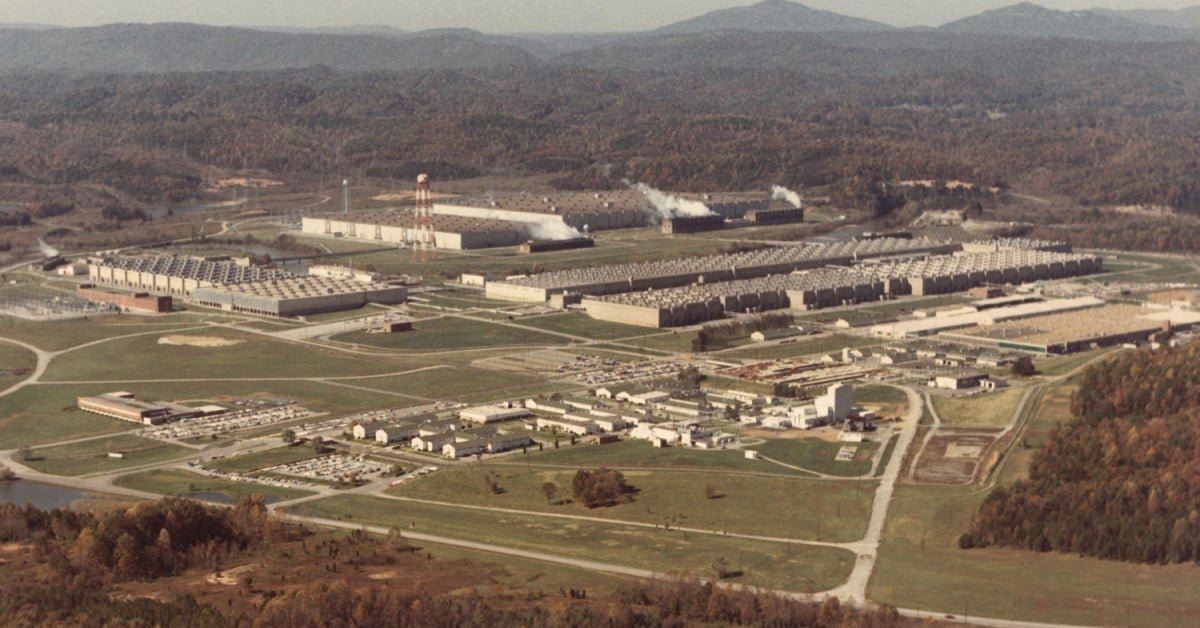

Oak Ridge National Laboratory (ORNL) is launching a new research initiative aimed at solving one of the most pressing challenges facing the AI industry: the explosive growth in electricity demand from datacenters. The Tennessee-based federal research center announced the creation of the Next Generation Data Centers Institute (NGDCI), an internal "institute within an institute" that will bring together expertise from energy, high-performance computing, cybersecurity, and grid technology to address this mounting infrastructure challenge.

The timing of this initiative reflects the urgency of the situation. According to ORNL Director Stephen Streiffer, "Artificial intelligence is transforming every part of our society, but its energy appetite is unlike anything we've seen before." The laboratory projects that electricity required to power AI datacenters could double or triple within the next decade, placing unprecedented strain on an electrical grid that's already under pressure.

This initiative comes as no surprise to industry observers who have watched the AI boom drive massive datacenter construction across the United States. Major tech companies have been racing to build facilities capable of supporting increasingly complex AI models, with each new generation requiring substantially more computing power and, consequently, more electricity.

Six Research Pillars

The NGDCI will focus on six key research areas, each addressing a critical part of the datacenter energy challenge:

Thermal Management - Current cooling systems represent a staggering 40 to 60 percent of a datacenter's total energy consumption, according to ORNL's analysis. The institute will investigate next-generation cooling technologies that could dramatically reduce this overhead. This research could include advanced liquid cooling systems, phase-change materials, and novel heat rejection methods that go beyond traditional air conditioning.

Power System Architecture - Energy losses occur at every step as electricity travels from generation sources to server racks. The institute will explore innovations like direct current power distribution, advanced power electronics, and new materials that minimize conversion losses. These improvements could significantly increase the efficiency of energy delivery within datacenters.

Grid Integration - As AI datacenters become major power consumers, their relationship with the electrical grid must evolve. Research will focus on how these facilities can better integrate with grid operations, potentially serving as flexible loads that can adjust consumption patterns to help balance grid demand.

Security - Beyond traditional cybersecurity concerns, the institute will examine how to embed cyber-informed engineering principles directly into datacenter infrastructure. This includes developing quantum-safe communications protocols to protect against future threats as quantum computing capabilities advance.

Integrated Systems Modeling - This research area takes a holistic view, looking beyond individual datacenters to model how AI infrastructure will impact energy markets, employment patterns, material supply chains, and US technological competitiveness through 2030 and beyond. The goal is to anticipate second- and third-order effects of the AI datacenter boom.

Operational Load Management - Using AI-enabled forecasting and intelligent workload scheduling, this research aims to optimize when and how computing tasks are executed to minimize energy consumption while maintaining performance requirements.
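To make the load-management idea concrete, here is a minimal sketch of how deferrable jobs might be shifted toward hours when the grid is forecast to be least stressed. It is not ORNL's method: the `Job` class, the `schedule` function, the forecast values, and the per-hour energy cap are all hypothetical, and a simple greedy rule stands in for whatever AI-enabled forecasting and scheduling the institute actually develops.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    energy_kwh: float   # energy the job needs (illustrative figure)
    deadline_hour: int  # job must run in an hour index below this value


def schedule(jobs, forecast_load, cap_kwh):
    """Greedy sketch: place each job in the allowed hour with the lowest
    forecast grid load that still has energy headroom under cap_kwh."""
    used = [0.0] * len(forecast_load)  # energy already planned per hour
    plan = {}
    # Handle the tightest deadlines first so they keep the most options.
    for job in sorted(jobs, key=lambda j: j.deadline_hour):
        last_hour = min(job.deadline_hour, len(forecast_load))
        candidates = [
            h for h in range(last_hour)
            if used[h] + job.energy_kwh <= cap_kwh
        ]
        if not candidates:
            plan[job.name] = None  # no feasible hour under these assumptions
            continue
        best = min(candidates, key=lambda h: forecast_load[h])
        used[best] += job.energy_kwh
        plan[job.name] = best
    return plan


# Hypothetical inputs: relative grid load per hour (higher = more stressed).
forecast = [0.9, 0.6, 0.4, 0.8]
jobs = [Job("A", 50, 2), Job("B", 50, 4), Job("C", 60, 4)]
plan = schedule(jobs, forecast, cap_kwh=100)
print(plan)  # {'A': 1, 'B': 2, 'C': 3}
```

In this toy run, job C cannot share the two quietest hours because of the assumed 100 kWh cap, so it lands in the next-best hour. A real scheduler would also have to weigh performance requirements, which this sketch reduces to a single deadline.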

Industry Support and National Context

The initiative has already garnered support from major industry players. Forrest Norrod, head of AMD's Data Center Solutions Business, noted that "NGDCI is designed to address those challenges at scale," highlighting the industry's recognition that this is a problem requiring coordinated research efforts rather than individual company solutions.

ORNL's timing is particularly significant given its involvement in the "Genesis Mission," a Trump administration initiative launched last year to advance AI for scientific discovery. As part of this mission, ORNL is preparing to deploy two major systems: Discovery, set to succeed Frontier, the world's first exascale system, and Lux, an AI cluster designed to advance machine learning capabilities.

The NGDCI will focus on ensuring these and similar systems can operate reliably while minimizing their energy footprint. This research is critical not just for maintaining US competitiveness in AI but for ensuring that the infrastructure supporting this technological advancement remains sustainable.

The Broader Challenge

The scale of the challenge is considerable. As AI models grow more complex and datacenters expand to meet demand, the US electrical grid faces a fundamental question: can it absorb this projected load growth without significant upgrades and new approaches to planning and operation?

ORNL's approach suggests that the answer lies not in simply building more power plants, but in creating intelligent integration between power systems, cooling technologies, workload management, and AI-enabled forecasting. By treating datacenters as adaptive national assets rather than passive power consumers, the institute hopes to transform what could be a crippling infrastructure bottleneck into a competitive advantage.

This research arrives at a critical juncture for the technology industry. As AI continues its rapid advancement, the energy required to train and run these systems has become a limiting factor. Solutions developed through NGDCI could determine whether the AI revolution continues its current trajectory or faces constraints imposed by physical infrastructure limitations.

The global implications are significant as well. While ORNL's research will initially focus on American infrastructure, the technologies developed could benefit datacenters worldwide as the AI boom spreads internationally. The institute's work may ultimately help shape how the entire industry approaches the energy challenge that has emerged as AI's most pressing practical limitation.
