The emergence of on-device AI runtimes like Microsoft's Foundry Local is enabling a new class of fully functional RAG applications that operate completely offline. This shift from cloud-dependent AI to local-first solutions presents significant opportunities for organizations working in air-gapped environments, remote locations, or under strict data sovereignty requirements.

Offline AI Revolution: Building Cloud-Free RAG Applications with Foundry Local

The AI landscape has long been dominated by cloud-dependent architectures that require constant connectivity, external API calls, and managed endpoints. However, a fundamental shift is occurring with the rise of on-device AI runtimes that enable fully functional applications to operate completely offline. Microsoft's Foundry Local represents a pivotal development in this movement, allowing organizations to build sophisticated RAG (Retrieval-Augmented Generation) applications that run entirely on local hardware without any cloud dependency.

The Changing Paradigm: From Cloud-Tethered to Device-First

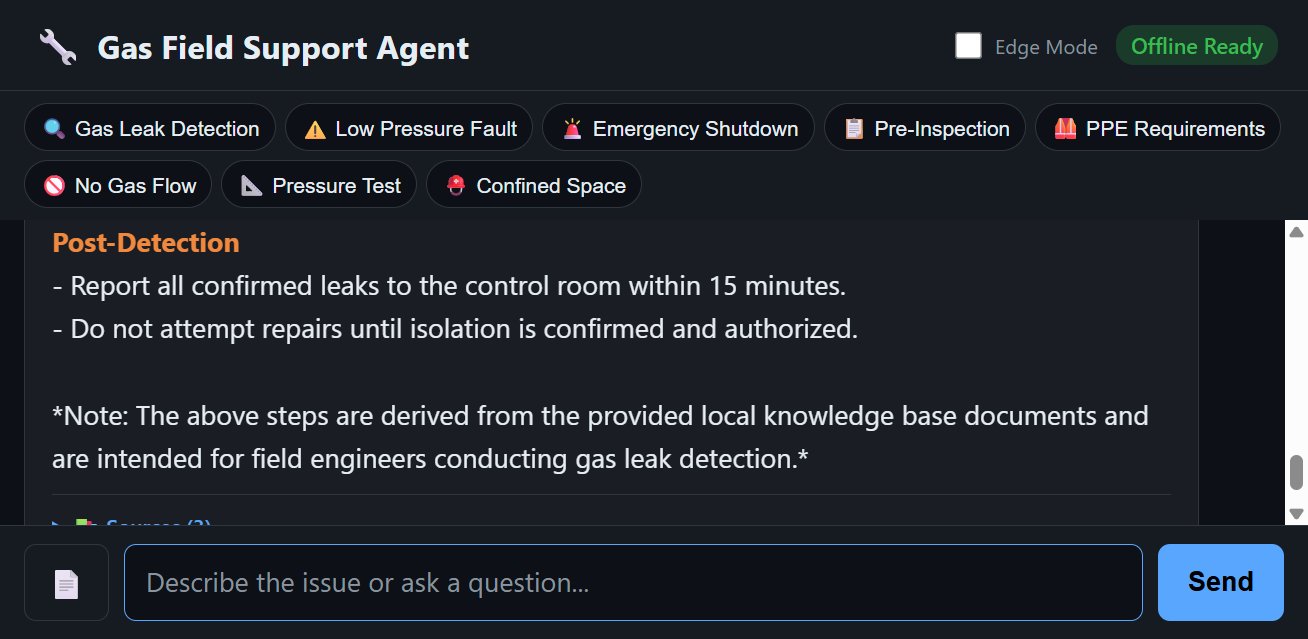

Most AI-powered applications today assume stable internet connectivity and rely on cloud-based language models that process data on remote servers. This approach creates significant limitations for organizations operating in environments with intermittent connectivity, strict data sovereignty requirements, or air-gapped systems. The Gas Field Support Agent project demonstrates a compelling alternative: a fully functional RAG application that runs entirely on a laptop with no outbound network calls required.

This offline capability addresses several critical business scenarios:

- Remote field operations where connectivity is unreliable or nonexistent

- Industrial environments with strict security requirements

- Healthcare facilities with patient data privacy constraints

- Military and government operations requiring air-gapped systems

- Manufacturing plants with network segmentation requirements

Technical Architecture Comparison: Offline vs. Cloud RAG

Cloud-Based RAG Architecture

Traditional cloud-based RAG implementations typically follow this pattern:

- User query sent to cloud API

- Cloud service retrieves relevant documents from cloud vector database

- Cloud service sends retrieved context to cloud language model

- Model generates response and returns to user

This approach introduces latency, ongoing operational costs, and external dependencies.

Offline RAG with Foundry Local

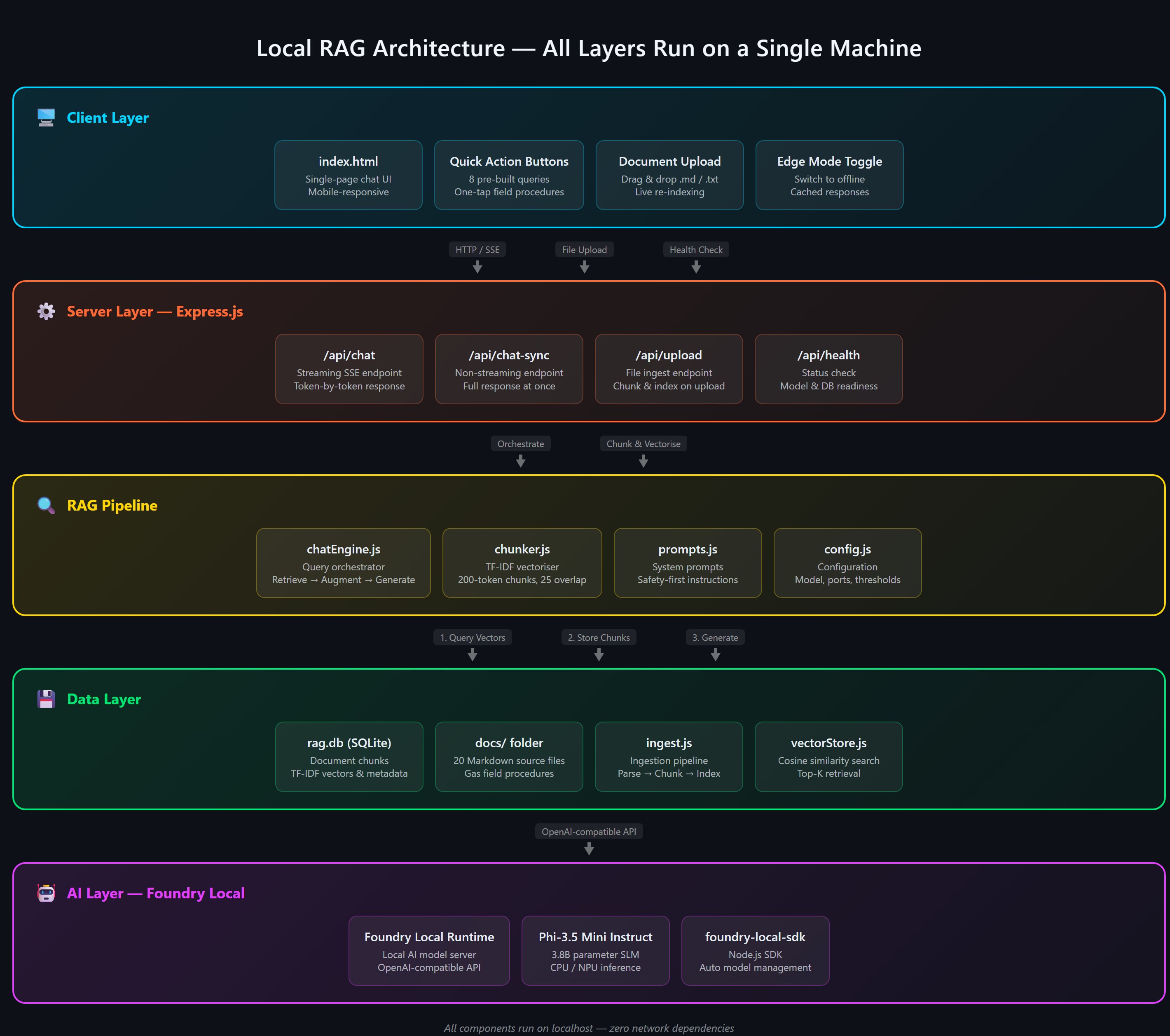

The offline implementation demonstrates a fundamentally different architecture:

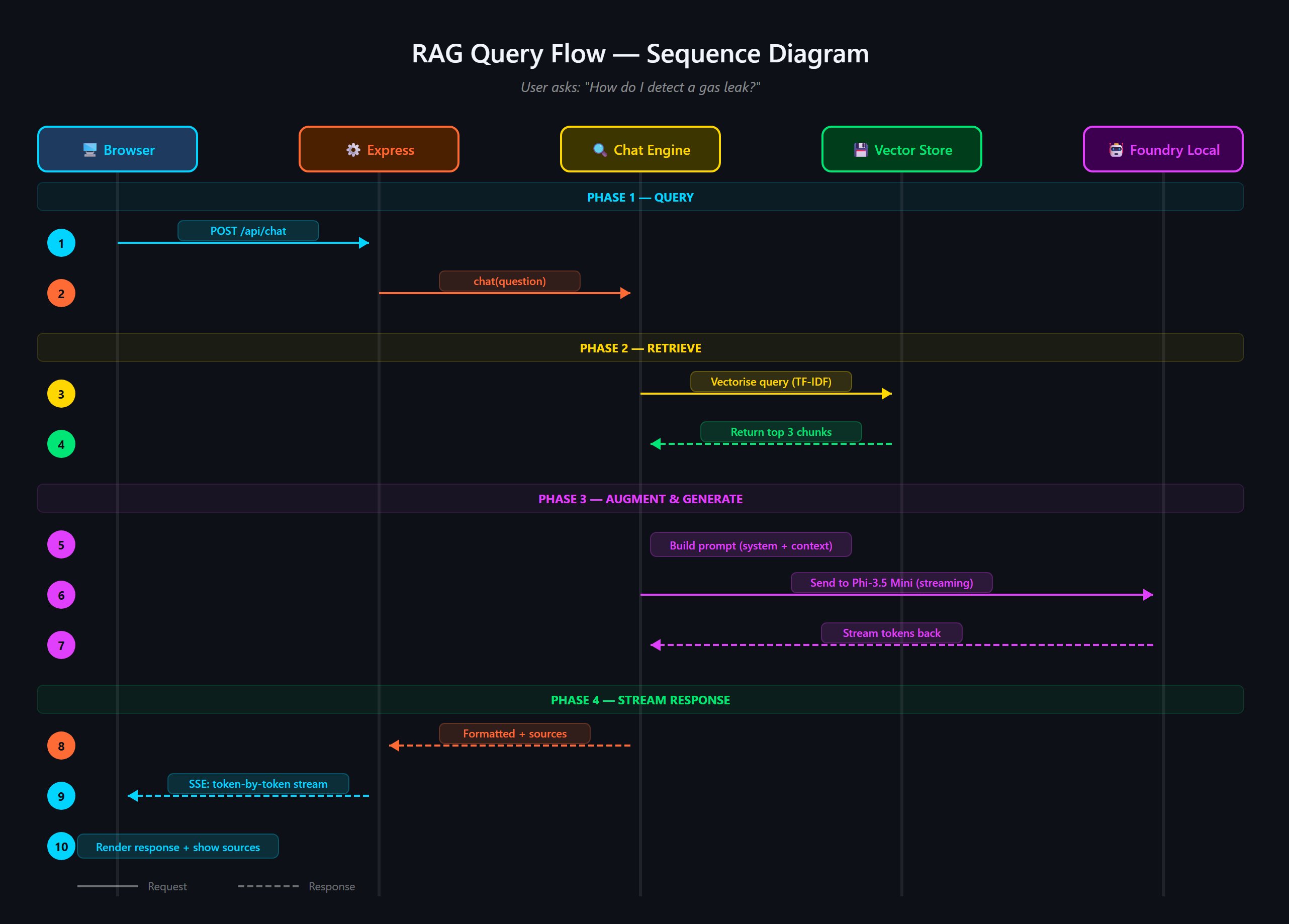

- User query processed locally by browser-based frontend

- Local server converts query to TF-IDF vectors

- Local SQLite database retrieves relevant document chunks

- Local Foundry Local instance generates response using Phi-3.5 Mini

- Response streams back to user via Server-Sent Events
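
The retrieval half of this pipeline can be sketched in a few dozen lines. The example below is a minimal illustration, not the project's actual code: it is written in Python for brevity (the article's backend is Node.js), and the table name `chunks`, the tokenizer, and the smoothed IDF formula are all assumptions chosen for the sketch. It stores chunks in SQLite, builds TF-IDF vectors, and ranks chunks by cosine similarity to the query.

```python
import math
import re
import sqlite3
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(chunks: list[str]) -> sqlite3.Connection:
    """Store chunks in an in-memory SQLite table (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE chunks (id INTEGER PRIMARY KEY, body TEXT NOT NULL)")
    conn.executemany("INSERT INTO chunks (body) VALUES (?)", [(c,) for c in chunks])
    conn.commit()
    return conn


def tfidf_vector(tokens: list[str], idf: dict[str, float]) -> dict[str, float]:
    """Sparse TF-IDF vector; tokens unknown to the corpus are dropped."""
    counts = Counter(tokens)
    total = len(tokens) or 1
    return {t: (n / total) * idf[t] for t, n in counts.items() if t in idf}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(conn: sqlite3.Connection, query: str, k: int = 2) -> list[str]:
    """Return up to k chunks with a non-zero similarity to the query."""
    rows = conn.execute("SELECT id, body FROM chunks").fetchall()
    docs = [(body, tokenize(body)) for _, body in rows]
    n = len(docs)
    # Document frequency: in how many chunks does each token appear?
    df = Counter(t for _, toks in docs for t in set(toks))
    # Smoothed IDF; recomputed per query here, precomputed in a real system.
    idf = {t: math.log((1 + n) / (1 + d)) + 1.0 for t, d in df.items()}
    q_vec = tfidf_vector(tokenize(query), idf)
    scored = [(cosine(q_vec, tfidf_vector(toks, idf)), body) for body, toks in docs]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [body for score, body in scored[:k] if score > 0.0]


if __name__ == "__main__":
    conn = build_index([
        "Close the wellhead valve before servicing the compressor.",
        "Report gas leaks to the control room immediately.",
        "Wear hearing protection near the compressor station.",
    ])
    print(retrieve(conn, "how do I report a gas leak", k=1))
```

The retrieved chunks would then be placed into the prompt sent to the local model. Because everything here is in-process arithmetic over a local table, the retrieval step involves no network call at all.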

The technical stack comparison reveals significant differences:

| Component | Cloud Approach | Offline Approach |
|---|---|---|
| AI Model | GPT-4, Claude, etc. | Phi-3.5 Mini (local) |
| Vector Store | Pinecone, Weaviate, etc. | SQLite with TF-IDF |
| Backend | Cloud Functions | Node.js + Express |
| Infrastructure | Managed cloud services | Single machine |
| Latency | 500ms-2000ms | <100ms |
| Operational Cost | Per-token pricing | Zero (after setup) |
| Data Sovereignty | Provider-dependent | Complete control |

Business Impact Analysis

Cost Considerations

Cloud-based RAG systems incur ongoing costs based on API usage, vector database operations, and infrastructure management. For organizations processing thousands of queries daily, these costs can become substantial. The offline approach eliminates recurring API costs, though it requires initial hardware investment and local model storage.
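
To make the trade-off between recurring API costs and up-front hardware concrete, the arithmetic can be sketched as follows. Every number in the usage example is a made-up placeholder, not a real price or a figure from the project; the function names are likewise hypothetical.

```python
def monthly_cloud_cost(
    queries_per_day: float,
    tokens_per_query: float,
    price_per_1k_tokens: float,
    days: int = 30,
) -> float:
    """Estimated monthly spend under simple per-token pricing."""
    thousand_token_units = queries_per_day * tokens_per_query / 1000
    return thousand_token_units * price_per_1k_tokens * days


def break_even_months(hardware_cost: float, monthly_cost: float) -> float:
    """Months until a one-time hardware purchase equals cumulative cloud spend."""
    if monthly_cost <= 0:
        raise ValueError("monthly_cost must be positive")
    return hardware_cost / monthly_cost


if __name__ == "__main__":
    # Placeholder inputs: 5,000 queries/day, 1,500 tokens each, $0.01 per 1k tokens.
    cloud = monthly_cloud_cost(5000, 1500, 0.01)
    print(f"Cloud: ${cloud:,.0f}/month")
    print(f"Break-even on $1,800 of hardware: {break_even_months(1800, cloud):.1f} months")
```

With these placeholder inputs the cloud bill comes to $2,250 per month, so an $1,800 machine would pay for itself in under a month. Real figures vary widely by provider and model, and this sketch ignores electricity, maintenance, and staff time on the offline side.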

Performance Advantages

The offline implementation demonstrates superior performance characteristics:

- Sub-100ms response times, compared with 500ms-2000ms for cloud-based alternatives

- No network latency or reliability concerns

- Consistent performance regardless of internet connectivity

- Predictable resource consumption patterns

Security and Compliance Benefits

Organizations in regulated industries benefit significantly from offline AI implementations:

- Complete control over data, which never leaves the device

- No third-party data processing or storage

- Simplified compliance with data sovereignty regulations

- Elimination of data transfer security risks

Implementation Trade-offs

While offline RAG offers compelling advantages, organizations should consider several trade-offs:

- Model Capabilities: Small local models like Phi-3.5 Mini are generally less capable than state-of-the-art cloud models, particularly on complex reasoning tasks

- Document Scale: TF-IDF retrieval works best with smaller document collections (hundreds of documents rather than thousands)

- Hardware Requirements: Local models require sufficient storage and computational resources

- Maintenance: Updates and improvements require manual intervention rather than automatic cloud deployments
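
The hardware requirement can be roughed out with simple arithmetic: the memory needed for model weights is the parameter count multiplied by the bytes per weight. The sketch below assumes Phi-3.5 Mini's published size of about 3.8 billion parameters; which precision a given Foundry Local model variant actually uses is an assumption here, so the code shows several.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes).

    Excludes the KV cache, activations, and runtime overhead, so real
    usage will be higher than this estimate.
    """
    if params_billions <= 0 or bits_per_weight <= 0:
        raise ValueError("inputs must be positive")
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


PHI_35_MINI_PARAMS_B = 3.8  # published parameter count, in billions

if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_memory_gb(PHI_35_MINI_PARAMS_B, bits)
        print(f"{bits}-bit weights: ~{size:.1f} GB")
```

This puts the weights at roughly 7.6 GB in 16-bit precision and about 1.9 GB when quantized to 4 bits, which is why quantized small models fit comfortably on an ordinary laptop.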

Provider Comparison: Foundry Local vs. Alternative Approaches

Microsoft Foundry Local

Foundry Local represents Microsoft's entry into the on-device AI runtime space, with several distinctive characteristics:

- Model Support: Optimized for small language models (SLMs) like Phi-3.5 Mini

- Hardware Flexibility: Runs on CPU or NPU, no GPU required

- API Compatibility: Exposes OpenAI-compatible API, easing migration

- Model Management: Automatic download, caching, and lifecycle management

- Integration: Seamless integration with existing OpenAI-based codebases
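
Because the API is OpenAI-compatible, a client only needs to send a standard chat completions request and read the streamed `data:` lines that come back. The sketch below uses only the Python standard library. The base URL, port, and model alias are placeholders (assumptions, not values from the project); check your local Foundry Local service for the real endpoint and model name.

```python
import json
import urllib.request
from typing import Iterator, Optional

# Placeholders: replace with the endpoint and model alias your local service reports.
BASE_URL = "http://localhost:8000/v1"
MODEL = "phi-3.5-mini"


def build_chat_request(question: str, context: str, stream: bool = True) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request with retrieved context."""
    payload = {
        "model": MODEL,
        "stream": stream,
        "messages": [
            {"role": "system", "content": "Answer using only this context:\n\n" + context},
            {"role": "user", "content": question},
        ],
    }
    return urllib.request.Request(
        BASE_URL + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_sse_line(line: str) -> Optional[str]:
    """Return the text delta carried by one streamed line, or None."""
    if not line.startswith("data:"):
        return None  # blank separator lines, comments, etc.
    data = line[len("data:"):].strip()
    if data == "[DONE]":
        return None  # end-of-stream sentinel in the OpenAI streaming format
    choices = json.loads(data).get("choices") or []
    if not choices:
        return None
    return choices[0].get("delta", {}).get("content")


def stream_answer(question: str, context: str) -> Iterator[str]:
    """Yield text deltas from a running server (requires a live endpoint)."""
    with urllib.request.urlopen(build_chat_request(question, context)) as resp:
        for raw in resp:
            delta = parse_sse_line(raw.decode("utf-8").strip())
            if delta:
                yield delta
```

Pointing the same client at a different OpenAI-compatible server should only require changing `BASE_URL` and `MODEL`, which is the migration benefit the list above describes.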

Alternative Local AI Solutions

Several other approaches exist for implementing offline AI capabilities:

- Ollama: Open-source alternative supporting multiple models but requiring more manual configuration

- LM Studio: Desktop application with model management but less suited for production deployment

- LocalAI: Open-source OpenAI-compatible API with broader model support but more complex setup

- Direct Model Integration: Using frameworks like Transformers.js for browser-based execution

The key differentiator for Foundry Local is its production-ready nature and enterprise support from Microsoft, making it suitable for deployment in business environments.

Industry-Specific Applications

Oil and Gas Operations

The Gas Field Support Agent demonstrates clear value for energy sector operations:

- Remote field technicians accessing safety procedures without connectivity

- Emergency response guidance available immediately

- Compliance documentation always accessible

- Reduced dependency on communication infrastructure

Healthcare

Healthcare organizations can leverage offline RAG for:

- Clinical decision support at point of care

- Medical reference access in low-connectivity settings

- Patient data privacy compliance

- Emergency response protocols

Manufacturing

Manufacturing environments benefit from:

- Equipment maintenance guidance on factory floors

- Quality control procedures accessible on production lines

- Safety compliance documentation

- Reduced network infrastructure requirements

Implementation Strategy

Organizations considering offline RAG implementations should follow this phased approach:

Phase 1: Assessment

- Identify use cases requiring offline capabilities

- Evaluate document collection size and complexity

- Assess available hardware resources

- Define specific performance requirements

Phase 2: Prototype

- Implement proof-of-concept using the Gas Field Support Agent template

- Test with domain-specific documents

- Evaluate retrieval accuracy and response quality

- Measure performance metrics
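
The last two prototype steps can start from a very small harness. The sketch below is one possible approach, not a method prescribed by the project: it assumes a `retriever` callable that maps a query to a list of text chunks, and a hand-written list of test cases pairing each query with a phrase the correct chunk should contain. It reports the hit rate and retrieval latency.

```python
import statistics
import time
from typing import Callable


def evaluate(
    retriever: Callable[[str], list[str]],
    cases: list[tuple[str, str]],
) -> dict[str, float]:
    """Measure hit rate and latency for (query, expected_phrase) test cases."""
    if not cases:
        raise ValueError("at least one test case is required")
    hits = 0
    latencies_ms: list[float] = []
    for query, expected_phrase in cases:
        start = time.perf_counter()
        results = retriever(query)
        latencies_ms.append((time.perf_counter() - start) * 1000)
        # A hit means at least one returned chunk contains the expected phrase.
        if any(expected_phrase in chunk for chunk in results):
            hits += 1
    return {
        "hit_rate": hits / len(cases),
        "median_ms": statistics.median(latencies_ms),
        "max_ms": max(latencies_ms),
    }


if __name__ == "__main__":
    # Stand-in retriever for demonstration; swap in the real one.
    def fake_retriever(query: str) -> list[str]:
        return ["Report gas leaks to the control room."] if "leak" in query else []

    print(evaluate(fake_retriever, [
        ("what to do about a leak", "control room"),
        ("compressor maintenance interval", "compressor"),
    ]))
```

Running the same cases on the target hardware, rather than a development machine, gives numbers that can be compared against the performance requirements defined in Phase 1.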

Phase 3: Production Deployment

- Customize for specific domain requirements

- Implement additional security measures

- Develop maintenance procedures

- Train end users

Phase 4: Scaling and Enhancement

- Implement multi-agent architectures

- Add embedding-based retrieval for larger document collections

- Develop hybrid cloud/offline capabilities

- Integrate with existing enterprise systems

Future Trajectory

The offline AI space will continue to evolve along several key dimensions:

- Model Advancements: Local models will continue improving in capability while maintaining efficient resource requirements

- Hardware Integration: Deeper integration with specialized NPUs and edge computing hardware

- Multi-Modal Capabilities: Offline support for image, audio, and video processing

- Enterprise Features: Enhanced security, management, and deployment capabilities

- Hybrid Architectures: Seamless transition between offline and cloud modes based on connectivity

Microsoft's strategy appears to be positioning Foundry Local as a development platform that can scale to cloud-based solutions when needed, providing a consistent API experience across deployment models.
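
If the API really is consistent across deployment models, switching between them could reduce to choosing a base URL at startup. The sketch below illustrates that idea only; the endpoint values, model names, and the `is_online` probe are all hypothetical, and nothing here reflects an actual Foundry Local feature.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Endpoint:
    name: str
    base_url: str
    model: str


# Hypothetical endpoints; both are assumed to speak the same OpenAI-style API.
LOCAL = Endpoint("local", "http://localhost:8000/v1", "phi-3.5-mini")
CLOUD = Endpoint("cloud", "https://example.invalid/v1", "hosted-model")


def choose_endpoint(is_online: Callable[[], bool], prefer_cloud: bool = False) -> Endpoint:
    """Default to the local endpoint; use the cloud only when allowed and reachable."""
    if prefer_cloud:
        try:
            if is_online():
                return CLOUD
        except OSError:
            pass  # a failed connectivity probe counts as offline
    return LOCAL
```

Defaulting to the local endpoint keeps the offline guarantee intact: data leaves the device only when a deployment explicitly opts in with `prefer_cloud=True`.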

Conclusion

The emergence of production-ready offline RAG capabilities through solutions like Foundry Local represents a significant shift in AI deployment models. Organizations operating in constrained environments or with specific data sovereignty requirements now have a viable path to implement sophisticated AI applications without cloud dependencies.

The technical trade-offs are real but manageable for many use cases, and the business benefits—including cost predictability, performance advantages, and enhanced security—make offline AI an attractive option for a growing number of applications. As local models continue to improve and hardware capabilities advance, we can expect offline AI to move from niche use cases to mainstream adoption across multiple industries.

For organizations considering this approach, the Gas Field Support Agent provides an excellent starting point that can be adapted to specific domain requirements while demonstrating the practical viability of fully functional offline RAG systems.

Learn more about the Gas Field Support Agent project or explore Foundry Local for your own offline AI implementations.
