SynapX introduces SYNData, a multimodal data collection system combining ego vision, EMG signals, and exoskeleton gloves to capture human manipulation data for robot learning, claiming to address the data bottleneck in embodied AI through their Bio2Robot mechanism.

In the rapidly evolving field of embodied AI, data collection has emerged as a critical challenge. SynapX, a company founded just this January, has entered the scene with SYNData, a multimodal data collection system specifically designed for dexterous manipulation tasks. The company's claim that the real bottleneck in embodied AI is no longer model architecture or hardware but rather scalable, high-quality physical interaction data warrants closer examination.

Core Components of SYNData

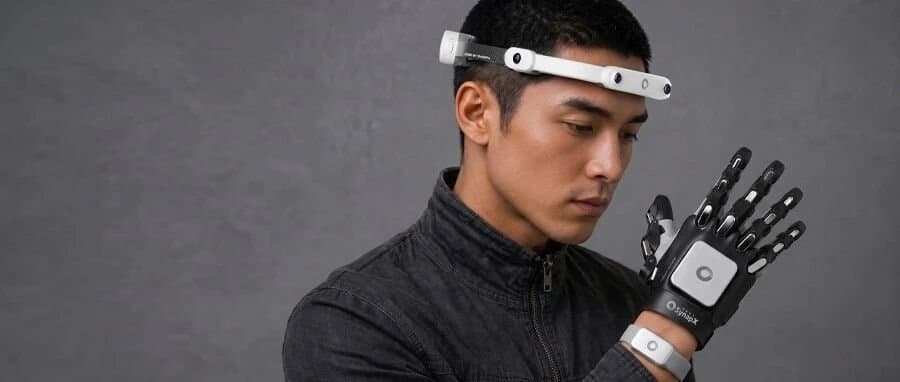

The SYNData system consists of three hardware modules working in concert:

- Quad-camera Ego headset: Captures first-person vision from the user's perspective

- EMG wristbands: Measure bio-electrical signals from the forearm muscles

- Bionic exoskeleton data glove: Records hand pose, full-palm contact states, and force distribution

What sets this system apart is not merely the number of sensors but what SynapX terms the "Bio2Robot mechanism": an AI model designed to transform human biological signals into robot-learnable data. The stated aim is to collect data at scale without interfering with natural human behavior, a known weakness of traditional motion capture setups, where markers and rigs can constrain how people move.

Technical Approach and Innovation

The Bio2Robot mechanism takes a different angle on the data collection problem. Rather than relying solely on visual or kinematic data, SYNData integrates multiple modalities that provide complementary information about human manipulation. In principle, this lets learning continue when the hand or object is visually occluded, because contact, force, and EMG signals remain available in situations where vision-only systems often struggle.

The system's ability to capture simultaneous streams of ego vision, hand pose, contact states, force distribution, and bio-electrical signals creates a rich dataset that could enable more robust manipulation policies for robots. The inclusion of EMG signals is particularly noteworthy: they reflect the operator's muscle activation patterns and, by extension, intent, which may be more informative than kinematic data alone.
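Capturing several streams "simultaneously" usually means the streams arrive at different rates and have to be aligned afterwards. The sketch below shows one common way to do that, nearest-timestamp matching against the camera clock. It is an illustration only: SynapX has not published its data format or synchronization method, and the `Frame` fields, the function names, and the choice of the camera as reference clock are all assumptions made here.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Frame:
    """One time-aligned sample. All field names are hypothetical."""
    t: float        # reference timestamp in seconds (camera clock, assumed)
    image_idx: int  # index of the ego-camera frame
    emg_idx: int    # index of the nearest EMG sample
    glove_idx: int  # index of the nearest glove sample (pose, contact, force)


def nearest_index(timestamps: Sequence[float], t: float) -> int:
    """Return the index of the entry in sorted `timestamps` closest to `t`."""
    if not timestamps:
        raise ValueError("timestamps must be non-empty")
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align(
    camera_ts: Sequence[float],
    emg_ts: Sequence[float],
    glove_ts: Sequence[float],
) -> List[Frame]:
    """Build one Frame per camera timestamp by nearest-neighbour matching."""
    return [
        Frame(t, k, nearest_index(emg_ts, t), nearest_index(glove_ts, t))
        for k, t in enumerate(camera_ts)
    ]


if __name__ == "__main__":
    # Made-up timestamps purely for demonstration.
    frames = align([0.0, 0.1], [0.0, 0.04, 0.09, 0.12], [0.01, 0.11])
    for frame in frames:
        print(frame)
```

Nearest-neighbour matching is the simplest option. A real pipeline would likely also need hardware clock synchronization and interpolation for the higher-rate signals, neither of which is attempted here.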

Performance and Validation

SynapX placed 2nd globally and 1st in China in the ICRA 2026 AGIBOT World Challenge, just three weeks after the company's founding, which suggests the approach has merit. However, the available information does not include the underlying performance metrics or show how the system compares with other entries.

Practical Applications and Limitations

The intended applications for SYNData appear to be in training robots for dexterous manipulation tasks across various domains, including manufacturing, healthcare, and service robotics. The system's focus on natural human behavior suggests it could be particularly valuable for learning complex manipulation skills that are difficult to program explicitly.

Despite these promising aspects, several questions remain:

- Scalability: The system is designed for scalable data collection, but the practical limits (setup time, participant requirements, and data processing needs) are not addressed.

- Cost and Accessibility: No pricing has been disclosed, which makes it hard to judge how accessible the system will be to researchers and developers.

- Data Quality and Quantity: The claim of "high-quality" data is not backed by specific metrics or benchmarks, and there are no details on how much data can realistically be collected.

- Comparison to Existing Solutions: The available information does not position SYNData relative to existing multimodal data collection systems, so its relative advantages and disadvantages are difficult to assess.

The Data Bottleneck in Embodied AI

SynapX's focus on the data bottleneck in embodied AI is well-founded. As robot manipulation capabilities advance, the need for large, diverse datasets that capture human expertise becomes increasingly apparent. Traditional programming approaches struggle to encode the nuanced decision-making and physical interactions involved in complex manipulation tasks.

The embodied AI field has seen significant progress in model architectures and hardware capabilities, but data collection has lagged behind. Systems like SYNData attempt to bridge this gap by providing tools to capture the rich multimodal data needed to train manipulation policies that generalize across different scenarios.

Future Directions

For SYNData to have a significant impact in the embodied AI space, SynapX will need to demonstrate that their system can reliably collect data at scale, that the Bio2Robot mechanism effectively transforms biological signals into actionable robot learning data, and that the resulting models outperform those trained with alternative approaches.

As the field continues to evolve, we may see further integration of biological signals into robot learning systems, potentially including neural interfaces that provide even more direct access to human intent. However, the practical challenges of such approaches remain substantial.

For those interested in embodied AI and robot manipulation, SynapX's future publications and benchmarks will show whether SYNData is a meaningful advance on the data bottleneck or just another entry in a growing list of multimodal capture systems.
