An exploration of how modern coding agents created a functional chess engine in TeX—a language never designed for such purposes—achieving remarkable results through innovative problem-solving.

TeXCCChess: When AI Agents Redefine Programming Boundaries

What happens when you ask a 2026 coding agent to build a chess engine from scratch in a language that was never designed for this purpose? The answer, as demonstrated by Mathieu Acher's fascinating experiment, is both surprising and illuminating. TeXCCChess, a chess engine written entirely in TeX (the macro language behind LaTeX), represents a remarkable achievement in computational creativity and problem-solving within extreme constraints.

The experiment began with a deliberately vague challenge: "I want to build a chess engine in LaTeX..." with no architecture document, no step-by-step guidance, and no prior examples to reference. Building a chess engine is already a non-trivial software engineering challenge, involving board representation, move generation with special rules, recursive tree search with pruning, and evaluation heuristics. Doing it in TeX, which lacks arrays, functions with return values, convenient local variables, and conventional data structures, is considerably harder.

The Architecture of Impossibility

The resulting engine, TeXCCChess, is approximately 2,100 lines of pure TeX code that runs when compiled with pdflatex. Its architecture reveals a fascinating adaptation of computer science concepts to TeX's peculiar constraints:

- Board representation: 64 TeX \count registers (\count200 through \count263) serve as memory, one per square

- Piece encoding: Signed integers from -6 to +6 (positive for white, negative for black, zero for empty)

- Move generation: Pseudo-legal generation followed by legality filtering

- Search algorithm: Depth-3 negamax with alpha-beta pruning and quiescence search

- Evaluation function: Material counting plus piece-square tables

- UCI support: A Python wrapper bridges pdflatex to the Universal Chess Interface protocol

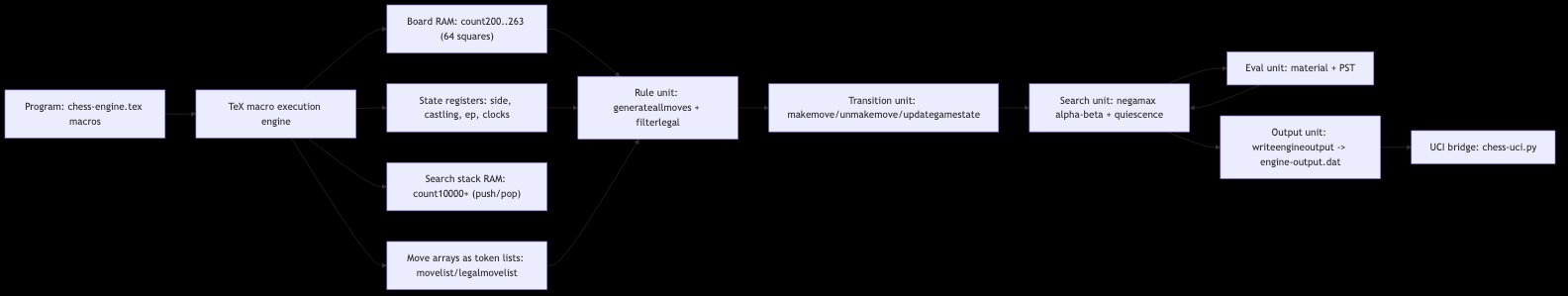

TeXCCChess architecture: from TeX macros through memory registers to UCI bridge
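
To make the board representation and piece encoding from the list above concrete, here is a minimal Python model of it. It is a sketch, not the engine's TeX source: the mapping of codes 1 to 6 onto specific pieces and the helper names (`square_register`, `put_piece`, `piece_at`) are assumptions introduced for illustration, since the article gives only the register range and the -6 to +6 encoding.

```python
# Python model of the board layout described above (not the engine's TeX code).
BOARD_BASE = 200        # \count200 holds the first square, \count263 the last
NUM_REGISTERS = 32768   # size of this model's register file

# Assumed piece codes: the article gives the range -6..+6 but not the mapping.
EMPTY, PAWN, KNIGHT, BISHOP, ROOK, QUEEN, KING = range(7)

count = [0] * NUM_REGISTERS  # stand-in for TeX's \count registers


def square_register(square: int) -> int:
    """Return the register number that stores the given square (0..63)."""
    if not 0 <= square < 64:
        raise ValueError("square must be in 0..63")
    return BOARD_BASE + square


def put_piece(square: int, piece: int, white: bool) -> None:
    """Store a piece: positive code for White, negative for Black."""
    count[square_register(square)] = piece if white else -piece


def piece_at(square: int) -> int:
    """Read the signed piece code on a square (0 means empty)."""
    return count[square_register(square)]
```

Because a square is just an offset from a base register, "indexing an array" becomes plain integer addition, which is the only kind of addressing TeX registers offer.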

What emerges from this design is essentially a tiny virtual machine built on top of TeX's macro expansion engine. The \count registers function as RAM, with dedicated address ranges for the board, scratch computation, and the search call stack. The \csname lookup tables act as read-only memory (ROM) for precomputed data, while token lists serve as dynamically allocated buffers. Macros like \makemove/\unmakemove and \pushstate/\popstate form the instruction set, with TeX's \ifnum and \loop primitives providing control flow.

The entire system operates as a register machine with no stack frames, no heap, and no garbage collector—just flat integer registers and name-based indirection. In essence, pdflatex becomes the CPU executing this custom virtual machine.

The Dance of Constraints

Building a chess engine in TeX surfaced a collection of language-specific challenges that would stump most programmers. The coding agent had to discover and work around these limitations through experimentation:

Division semantics: TeX's \numexpr division rounds rather than truncates. \numexpr 63/8\relax gives 8, not 7. This initially broke coordinate extraction until the solution: precompute lookup tables at load time using \divide (which truncates).
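
The difference between the two division behaviors can be modeled in a few lines of Python. This is a sketch under stated assumptions: `numexpr_div` and `tex_divide` are illustrative names, ties are assumed to round away from zero, and the square numbering `square = 8 * rank + file` is an assumption the article does not spell out.

```python
def numexpr_div(a: int, b: int) -> int:
    """Model of e-TeX numexpr division: round to nearest (ties assumed away from zero)."""
    sign = -1 if (a < 0) != (b < 0) else 1
    a, b = abs(a), abs(b)
    return sign * ((2 * a + b) // (2 * b))


def tex_divide(a: int, b: int) -> int:
    """Model of the divide primitive: truncate toward zero."""
    sign = -1 if (a < 0) != (b < 0) else 1
    return sign * (abs(a) // abs(b))


# Lookup tables built once at load time with truncating division.
# Assumed numbering: square = 8 * rank + file.
RANK_OF = [tex_divide(sq, 8) for sq in range(64)]
FILE_OF = [sq - 8 * RANK_OF[sq] for sq in range(64)]
```

With the rounding version, square 63 would land on "rank 8", which does not exist on a 0 to 7 board; the truncating tables give rank 7 and file 7 as intended.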

Control sequence naming: A multi-character control sequence name may contain only letters, so a digit ends the name. \ray@t1 is parsed as \ray@t followed by 1, not a single control sequence. The solution: use letters instead of digits (\ray@ta, \ray@tb).

Macro character handling: The @ character in macro names requires \makeatletter. Without it, TeX treats @ as a non-letter character that ends the control sequence name, so \ray@ta is read as \ray followed by the characters @ta, and internal macros fail in confusing ways.
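
Both naming pitfalls come from the same tokenization rule, which the following Python sketch imitates. It is a deliberately simplified model (ASCII letters only, no spaces or other category codes), and `split_control_sequence` is a hypothetical helper, not part of TeX or of the engine.

```python
import string


def split_control_sequence(src: str, at_is_letter: bool):
    """Split src into (control sequence, remaining text) using simplified TeX rules."""
    if not src.startswith("\\") or len(src) < 2:
        raise ValueError("expected a backslash followed by at least one character")
    letters = set(string.ascii_letters)
    if at_is_letter:               # models the effect of \makeatletter
        letters.add("@")
    if src[1] not in letters:      # control symbol: backslash plus one non-letter
        return src[:2], src[2:]
    end = 1
    while end < len(src) and src[end] in letters:
        end += 1                   # a control word extends over letters only
    return src[:end], src[end:]
```

For example, with `at_is_letter=True` the input `\ray@t1` splits into `\ray@t` and a trailing `1`, while with `at_is_letter=False` the input `\ray@ta` splits into `\ray` and `@ta`.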

Boolean flag limitations: \newif inside macros fails on the second call since it creates global flags. All boolean flags must be declared once at the global level.

Register collision: Because the engine keeps its state in global registers, a macro using \count190 as a loop counter silently corrupts any outer macro also using \count190. The engine reserves specific register ranges for specific purposes to avoid this.
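
The register collision is easy to reproduce in a small Python model where a dictionary stands in for the global \count registers. The helper names and the choice of register 191 for the fix are hypothetical; only the use of \count190 as a shared loop counter comes from the article.

```python
count = {}  # stand-in for global \count registers


def inner_scan_buggy() -> int:
    """Hypothetical inner helper that reuses register 190 as its loop counter."""
    count[190] = 0
    while count[190] < 8:
        count[190] += 1
    return count[190]


def inner_scan_fixed() -> int:
    """Same helper, moved to its own reserved register (191)."""
    count[191] = 0
    while count[191] < 8:
        count[191] += 1
    return count[191]


def outer_loop(inner) -> int:
    """Run `inner` three times, counting in register 190; return iterations done."""
    iterations = 0
    count[190] = 0
    while count[190] < 3:
        inner()
        count[190] += 1
        iterations += 1
    return iterations
```

With the buggy helper the outer loop stops after a single iteration, because the inner loop leaves register 190 at 8. With the fixed helper it runs all three iterations. Nothing raises an error in either case, which is why this class of bug is hard to spot.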

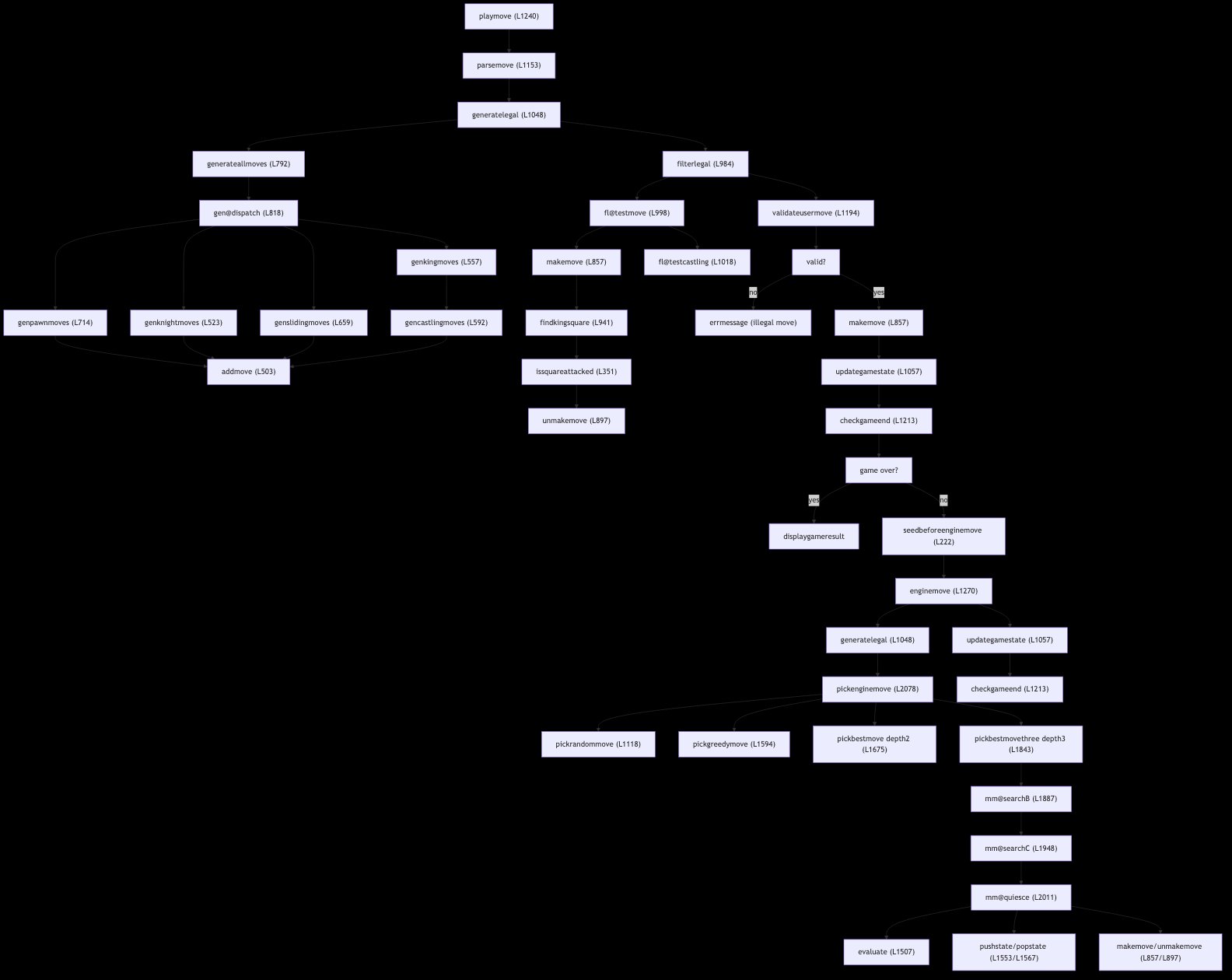

TeXCCChess game flow: from playmove through move generation, legality filtering, and depth-3 search

The State Stack Problem

One of the most fascinating aspects of TeXCCChess is its solution to the state stack problem. A chess engine needs to make a move, search deeper, then unmake the move. In conventional programming, local variables or a call stack handle this naturally. TeX has grouping-based scoping, but the engine's design uses global state throughout.

The solution implemented by the coding agent is a hand-rolled state stack using high-numbered \count registers (starting at \count10000) to avoid collisions. Each search depth gets 9 register slots to save and restore the full game state. The ordering is critical: \pushstate must be called after \makemove but before \updategamestate, with the reverse for unmake. Getting this wrong produces subtle bugs that manifest only occasionally—exactly the kind of edge cases that make programming in constrained environments so challenging.
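
A minimal Python sketch of such a register-based state stack follows. The base address and the nine slots per depth come from the article; the live-state register addresses (300 to 308) and the function names are placeholders, because the article does not list which nine values are saved.

```python
STACK_BASE = 10000      # first save register, as stated in the article
SLOTS_PER_DEPTH = 9     # nine saved values per search depth, as stated

# Hypothetical registers holding the live game state (placeholder addresses).
STATE_REGISTERS = list(range(300, 300 + SLOTS_PER_DEPTH))

count = [0] * 32768     # stand-in for TeX's \count registers


def push_state(depth: int) -> None:
    """Copy the live state into the save area reserved for this depth."""
    base = STACK_BASE + SLOTS_PER_DEPTH * depth
    for slot, reg in enumerate(STATE_REGISTERS):
        count[base + slot] = count[reg]


def pop_state(depth: int) -> None:
    """Restore the live state from the save area reserved for this depth."""
    base = STACK_BASE + SLOTS_PER_DEPTH * depth
    for slot, reg in enumerate(STATE_REGISTERS):
        count[reg] = count[base + slot]
```

Since the search depth is fixed and small, each depth can own a fixed block of registers, and no stack pointer needs to be maintained.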

Search in the Absence of Recursion

TeX can recurse via macro expansion, but it offers no conventional call stack with per-call local variables, and deep recursion risks hitting engine limits. The search algorithm therefore uses three explicit loop levels, one for each depth:

- loopA (depth 0): iterates over candidate moves

- loopB (depth 1): iterates over opponent's replies

- loopC (depth 2): iterates over counter-replies, capped at 12 moves for speed

Each depth level stores its moves in separate indexed \csname slots because the inner search clobbers global move-tracking variables. After returning from an inner search, the current move must be re-read from its csname storage—a process that reveals the intricate dance of state management required in this environment.

Alpha-beta pruning is implemented using TeX's \ifnum comparisons. \ifnum supports only <, =, and >, and there is no \ifge primitive, so X >= Y is written as the else branch of the opposite test: \ifnum X < Y \relax\else. Each depth level has its own cutoff flag, declared as TeX booleans at the top level to avoid the "already defined" error.
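
The unrolled search can be sketched in Python over a toy game tree. This is an illustration of the loop structure and the cutoff tests, not the engine's code: it takes precomputed leaf scores instead of generating moves, it omits quiescence search, and it uses explicit maximizing and minimizing levels rather than negamax sign flipping. The comparisons are written in the "else branch of X < Y" style to mirror the \ifnum idiom.

```python
INF = 10**9


def search_depth3(tree, cap=12):
    """Return (best root move index, its score) for a fixed three-ply tree.

    tree[a][b][c] is the score, from the root player's point of view,
    after our move a, the opponent's reply b, and our counter-reply c.
    """
    best_move, alpha = None, -INF
    for a, replies in enumerate(tree):          # loopA: our candidate moves
        worst = INF                             # lowest score the opponent can force
        for counters in replies:                # loopB: opponent's replies
            best = -INF
            for score in counters[:cap]:        # loopC: capped at `cap` moves
                if best < score:
                    best = score
                if best < worst:
                    pass
                else:                           # best >= worst: cutoff at this level
                    break
            if best < worst:
                worst = best
            if alpha < worst:
                pass
            else:                               # worst <= alpha: move a cannot win
                break
        if alpha < worst:
            best_move, alpha = a, worst
    return best_move, alpha
```

A small usage example: for `tree = [[[3, 5], [2, 9]], [[1, 4], [8, 7]], [[6, 7], [10, 6, 2]]]` the three root moves are worth 5, 4, and 7, so the function returns `(2, 7)`, and the second root move is abandoned after its first reply.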

Evaluation Without Incrementality

The evaluation function scans all 64 squares, summing material values plus piece-square table (PST) bonuses. The PSTs follow the well-known Simplified Evaluation Function from the Chess Programming Wiki, with pawns rewarded for advancing toward the center, knights preferring central squares, and so on.

Notably, the evaluation is computed from scratch on every call—there's no incremental updating. This is slow, but incrementality would require tracking piece-list data structures that are particularly painful to manage in TeX's global-state model. The trade-off reflects a fundamental constraint of the environment: simplicity of implementation trumps performance optimization.
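
The full-board scan is simple enough to sketch in Python. The material values are those of the Simplified Evaluation Function; whether the engine uses exactly these numbers is not stated in the article. The mapping of codes 1 to 6 onto pieces is an assumption, and `pst_bonus` is a placeholder that applies one centrality score to every piece instead of the real per-piece tables.

```python
# Material values from the Simplified Evaluation Function (centipawns).
# Assumed piece codes: 1..6 = pawn, knight, bishop, rook, queen, king.
MATERIAL = {1: 100, 2: 320, 3: 330, 4: 500, 5: 900, 6: 20000}


def pst_bonus(piece: int, square: int) -> int:
    """Placeholder piece-square bonus: the same centrality score for every piece.

    The real tables differ per piece; this stand-in only keeps the sketch short.
    """
    rank, file = divmod(square, 8)
    return 5 * (min(rank, 7 - rank) + min(file, 7 - file))


def evaluate(board) -> int:
    """Score a 64-entry list of signed piece codes from White's point of view."""
    score = 0
    for square, code in enumerate(board):
        if code > 0:
            score += MATERIAL[code] + pst_bonus(code, square)
        elif code < 0:
            mirrored = square ^ 56   # flip the rank so Black reads the same table
            score -= MATERIAL[-code] + pst_bonus(-code, mirrored)
    return score
```

Every call walks all 64 squares, which matches the from-scratch approach described above: there is no cached score to keep in sync when a move is made or unmade.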

The Development Journey

The development of TeXCCChess spanned five sessions over ten days, with clear progression from basic functionality to sophisticated play:

- Session 1: Architecture planning, with 19 API calls establishing the 17-step implementation plan.

- Session 2: Building a random-move engine (122 API calls), encountering and fixing the coordinate extraction bug.

- Session 3: Creating UCI infrastructure and Elo measurement tools (140 API calls), including a full SAN resolver.

- Session 4: Implementing depth-2 minimax (157 API calls), discovering and fixing register collision issues.

- Session 5: The "big push" to depth-3 with alpha-beta pruning, quiescence search, and PSTs (212 API calls), overcoming timeout issues and achieving the final strength.

The engine's Elo progression tells a compelling story: from ~300 with random moves, to ~550 with depth-2 minimax, to ~1280 with the full implementation. That is a gain of nearly a thousand points, achieved through better search and evaluation rather than raw computational power, and it shows how much algorithmic improvements can compensate for a slow execution environment.

Strengths and Limitations

TeXCCChess achieves an estimated strength of ~1280 Elo (95% CI: ~1225–1345), equivalent to a casual tournament player who has studied some openings and doesn't hang pieces in obvious ways. This is particularly impressive given the constraints:

- Search depth: capped at 3 plies + quiescence

- No opening book: starts from scratch every game

- Simplified evaluation: material + PSTs only

- Always promotes to queen: no under-promotion

The engine loses convincingly to stronger opponents, such as Stockfish configured to play above 1500 Elo, but its performance is respectable given its implementation environment. More importantly, it handles complex chess rules correctly, including castling, en passant, promotion, check detection, stalemate, and the 50-move rule.
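
To put the rating gap in perspective, the standard Elo expected-score formula can be applied to these numbers. This is a generic sketch of that formula; the article does not say it is the exact method behind its Elo estimate, and `expected_score` is an illustrative name.

```python
def expected_score(rating: float, opponent: float) -> float:
    """Standard Elo expected score (win = 1, draw = 0.5, loss = 0)."""
    return 1.0 / (1.0 + 10.0 ** ((opponent - rating) / 400.0))
```

Under this model, a 1280-rated engine facing a 1500-rated opponent has an expected score of roughly 0.22 points per game, which is consistent with losing most games at that level.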

Implications for AI and Programming

TeXCCChess serves as a compelling case study in several domains:

Coding agent capabilities: The experiment demonstrates that modern coding agents can create sophisticated, functional software in unconventional programming environments, with no existing examples to reference. This challenges the notion that these agents merely "regurgitate" training data.

Problem-solving within constraints: The engine showcases how fundamental computer science concepts can be implemented in extremely limited environments, offering insights into the essence of computation itself.

Language design trade-offs: TeX's limitations (no arrays, no functions with return values, no convenient local variables) forced the creation of alternative paradigms that might inform more general approaches to programming language design.

Educational value: The implementation provides a unique perspective on how algorithms work at a fundamental level, stripped of the abstractions that normally obscure their operation.

Counter-Perspectives and Questions

Despite its achievements, TeXCCChess raises several questions:

Efficiency vs. creativity: While the engine is functionally impressive, its development required substantial effort (650 API calls across 5 sessions) and computational resources (53 pdflatex compilations). Does this represent efficient problem-solving or an expensive demonstration of capability?

Scalability: The engine's strength (1280 Elo) is respectable but still relatively weak compared to top chess engines. How much of this limitation stems from TeX's constraints, and how much from the current state of coding agent technology?

Understanding vs. pattern matching: Did the coding agent truly "understand" chess programming, or did it recognize patterns in its training data and assemble them in a novel way? The question touches on deeper issues of AI cognition and creativity.

Practical applications: Beyond demonstrating capability, does TeXCCChess have practical applications? The author notes it's "very unlikely the model memorized one verbatim," but does that make it useful beyond serving as a proof-of-concept?

Conclusion

TeXCCChess represents more than just a chess engine—it's a testament to the evolving capabilities of coding agents and a fascinating exploration of problem-solving within extreme constraints. The experiment demonstrates that modern AI systems can synthesize complex software in environments never intended for such purposes, achieving functional results through innovative adaptations of fundamental computer science concepts.

The development process itself, documented across five sessions with clear progression from basic functionality to sophisticated play, offers valuable insights into how coding agents approach and solve complex problems. The technical challenges overcome—from implementing a state stack without conventional stack frames to creating evaluation functions without incremental updates—reveal both the limitations and potential of programming in constrained environments.

As we continue to explore the boundaries of what coding agents can achieve, TeXCCChess stands as a remarkable milestone—a chess engine that plays not through computational brute force, but through the elegant adaptation of algorithms to an unforgiving programming environment. It reminds us that sometimes the most innovative solutions emerge not from removing constraints, but from learning to dance within them.

The complete source code and evaluation infrastructure for TeXCCChess are available on GitHub, offering researchers and enthusiasts the opportunity to explore this fascinating project further. As part of a series on AI-generated chess engines across programming languages, it represents just one chapter in the ongoing story of how artificial intelligence is redefining what's possible in software development.

For those interested in exploring this project further, the engine can be played interactively by editing a .tex file and recompiling with pdflatex (both locally and on Overleaf), or through automated tournaments against Stockfish via the UCI wrapper. The documentation provides comprehensive instructions for setup, interactive play, and evaluation.

In the end, TeXCCChess demonstrates something profound: that with the right guidance and sufficient capability, even the most unconventional programming environments can yield functional, sophisticated results. It's a reminder that innovation often emerges not from following established paths, but from daring to venture into uncharted territory.
