A deep analysis of how one researcher reverse-engineered Kasada's sophisticated virtual machine-based anti-bot protection used by major platforms like Nike, Kick, and Twitch, revealing the complex cat-and-mouse game between bot developers and security systems.

The digital realm has become a perpetual arms race between automation and anti-automation, with recent developments showing increasingly sophisticated approaches to bot detection. Kasada, a prominent player in this space, has implemented a virtual machine-based system that represents a significant evolution in anti-bot technology. This article examines how one researcher successfully reverse-engineered this system, shedding light on both the technical sophistication of modern anti-bot measures and the persistent ingenuity of those working to bypass them.

The Architecture of Modern Anti-Bot Systems

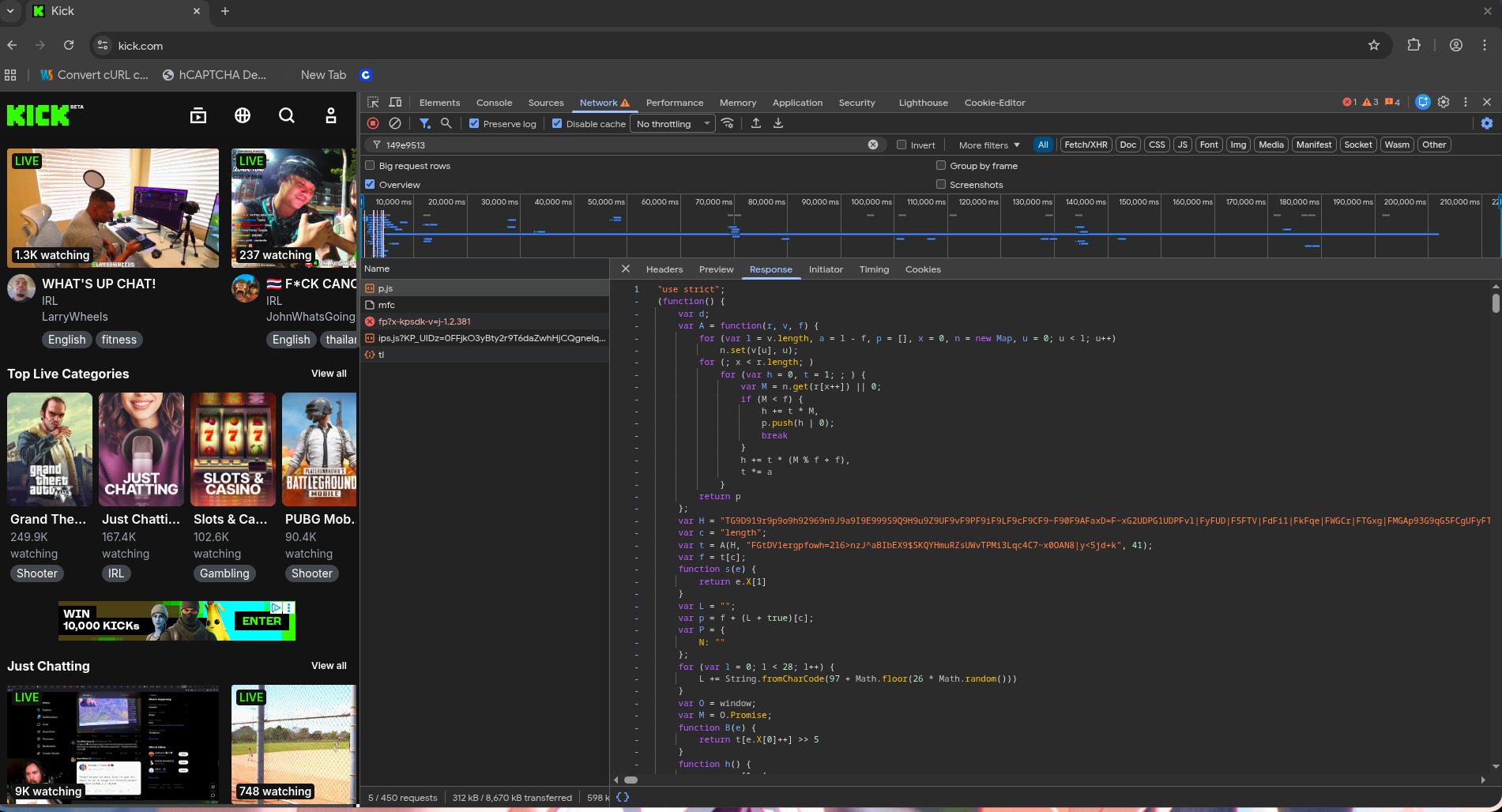

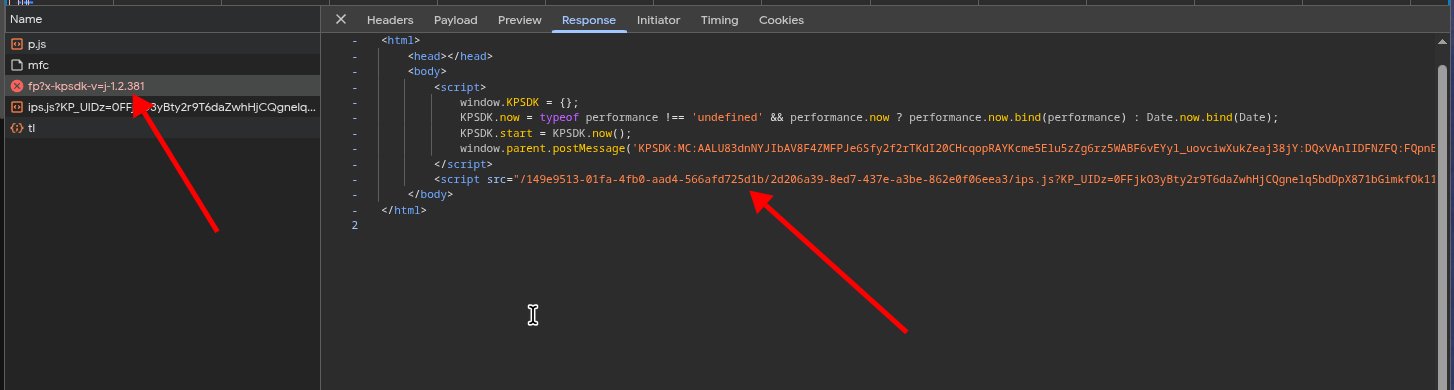

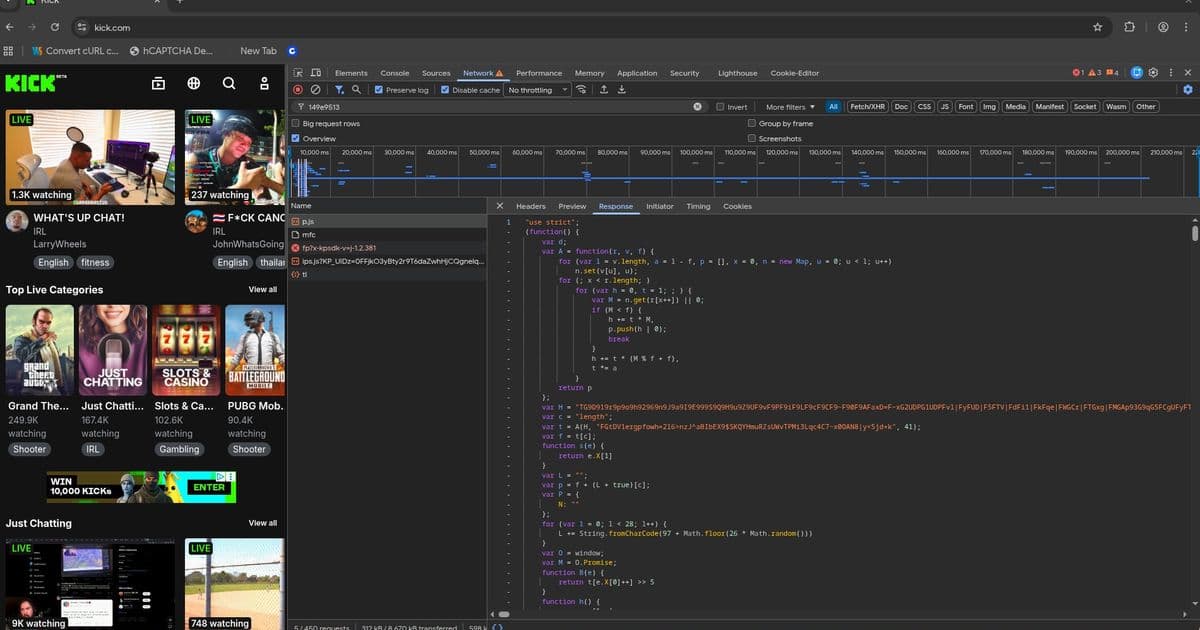

At its core, Kasada's approach represents a paradigm shift from simpler captcha systems to a holistic browser fingerprinting methodology. The system operates through a multi-stage process that begins when a user visits a protected site. Rather than presenting a visible challenge, the system redirects the browser to an invisible iframe that loads a complex fingerprinting script. This script, known as ips.js, is approximately 477KB of heavily obfuscated code that establishes a complete virtual machine environment within the browser.

The workflow involves four critical components: p.js (the initializer), /mfc (which fetches challenge parameters), ips.js (the payload generator), and /tl (where the encrypted fingerprint is submitted). The system produces two essential headers: the CT header (x-kpsdk-ct), which serves as the "I'm human" token, and the CD header (x-kpsdk-cd), which contains the proof-of-work solution. Together, these headers authenticate the browser session.

The Virtual Machine Obfuscation Strategy

What makes Kasada particularly distinctive is its implementation of a complete virtual machine within ips.js. This goes beyond conventional obfuscation: it is a full computational environment designed to be extremely difficult to reverse-engineer. The script contains three primary components: a bytecode decoder, a massive encoded string containing the actual bytecodes, and the VM that executes them.

The bytecode decoder employs a custom encoding scheme that changes with each script version. Each character maps to a position in a dynamic alphabet, with values decoded using a variable-length scheme. The system incorporates a time-lock mechanism where the seed is derived from the current time, creating windows of approximately five hours after which the script becomes undecodable. This temporal obfuscation prevents static analysis of captured scripts.
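The two ideas in this paragraph can be illustrated with a minimal Python sketch. It decodes a string against a per-version alphabet using a variable-length scheme, and derives a seed that stays constant within a five-hour window. The alphabet, the continuation rule (upper half of the alphabet means "more digits follow"), and the `window_seed` helper are all assumptions made for illustration; the article does not specify Kasada's actual encoding.

```python
import time

WINDOW_SECONDS = 5 * 60 * 60  # assumed from the "approximately five hours" figure


def window_seed(now=None):
    """Return an integer that is constant within one time window (hypothetical)."""
    if now is None:
        now = time.time()
    return int(now) // WINDOW_SECONDS


def encode_values(values, alphabet):
    """Encode non-negative ints; the alphabet needs at least 4 unique symbols."""
    half = len(alphabet) // 2
    out = []
    for v in values:
        while True:
            digit = v % half
            v //= half
            if v:
                out.append(alphabet[digit + half])  # upper half: more digits follow
            else:
                out.append(alphabet[digit])  # lower half: last digit of this value
                break
    return "".join(out)


def decode_values(text, alphabet):
    """Inverse of encode_values: map each character to its alphabet position."""
    half = len(alphabet) // 2
    index = {ch: i for i, ch in enumerate(alphabet)}
    values, acc, scale = [], 0, 1
    for ch in text:
        pos = index[ch]
        if pos >= half:  # continuation digit
            acc += (pos - half) * scale
            scale *= half
        else:  # terminating digit
            values.append(acc + pos * scale)
            acc, scale = 0, 1
    return values
```

Decoding only works with the alphabet that was used for encoding, so in a scheme of this shape, tying the alphabet to a time-derived seed is enough to make an old capture unreadable once the window has passed.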

The VM itself is register-based, with 172 handlers that execute operations through a proxy system. Each handler operates on a state object containing registers, scope chains, and exception handlers. The system implements a sophisticated permutation table that maps raw bytecode opcode values to actual handler indices using a Fisher-Yates shuffle with seed values embedded in the bytecodes. Without the correct permutation, any disassembled output would be meaningless.
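To make the opcode permutation concrete, here is a toy sketch in Python of a register machine whose raw opcodes pass through a seeded Fisher-Yates permutation before dispatch. The three handlers, the four-register state, and the linear congruential generator are stand-ins chosen for illustration; Kasada's actual PRNG, handler set, and state layout are not described in enough detail to reproduce.

```python
def lcg(seed):
    """Tiny linear congruential generator; a stand-in PRNG, not Kasada's."""
    state = seed & 0xFFFFFFFF
    while True:
        state = (1664525 * state + 1013904223) & 0xFFFFFFFF
        yield state >> 16  # use the higher bits, which are less regular


def build_permutation(n, seed):
    """Fisher-Yates shuffle of range(n), driven by the seeded generator."""
    rng = lcg(seed)
    table = list(range(n))
    for i in range(n - 1, 0, -1):
        j = next(rng) % (i + 1)
        table[i], table[j] = table[j], table[i]
    return table


# Toy handlers operating on a shared state object.
def op_load(state, dst, imm):
    state["regs"][dst] = imm


def op_add(state, dst, src):
    state["regs"][dst] += state["regs"][src]


def op_mul(state, dst, src):
    state["regs"][dst] *= state["regs"][src]


HANDLERS = [op_load, op_add, op_mul]


def assemble(program, seed):
    """Rewrite handler indices as raw opcodes (the inverse permutation)."""
    perm = build_permutation(len(HANDLERS), seed)
    inverse = {handler: raw for raw, handler in enumerate(perm)}
    return [(inverse[h], a, b) for h, a, b in program]


def run(bytecode, seed):
    """Execute raw bytecode, mapping each raw opcode through the permutation."""
    perm = build_permutation(len(HANDLERS), seed)
    state = {"regs": [0, 0, 0, 0]}
    for raw_opcode, a, b in bytecode:
        HANDLERS[perm[raw_opcode]](state, a, b)
    return state["regs"]
```

A disassembler has to rebuild the same permutation from the same seed before raw opcode values can be mapped to handlers, which is why the seed embedded in the bytecodes matters so much.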

The Fingerprinting Matrix

Beyond the VM obfuscation lies the actual purpose of the system: comprehensive browser fingerprinting. The script creates a template array with 428 slots and populates it through 427 different probes organized into 20 batches that execute in parallel. Each probe extracts specific browser properties and behaviors, creating a unique fingerprint that would be computationally expensive to replicate artificially.

The probes cover an extensive range of browser characteristics, from standard properties like user agent and screen dimensions to more subtle behaviors like audio context capabilities, WebGL parameters, and timing measurements. What makes this particularly challenging is that the template undergoes shuffling between batches, with each shuffle using different seeds and algorithms. This means that even if you identify which probe corresponds to which slot in one batch, the mapping changes in subsequent batches.
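The effect of shuffling between batches can be sketched as follows. This is a simplified Python model, not the script's real logic. It runs probes sequentially rather than in parallel, uses one slot per probe, and shuffles with Python's `random.Random`, all of which are assumptions. The point is only that a probe's slot can be tracked across batches when every shuffle seed is known.

```python
import random


def shuffle_template(template, seed):
    """Return (new_template, perm) where new_template[perm[i]] == template[i]."""
    perm = list(range(len(template)))
    random.Random(seed).shuffle(perm)
    new_template = [None] * len(template)
    for old_slot, new_slot in enumerate(perm):
        new_template[new_slot] = template[old_slot]
    return new_template, perm


def collect(probes, batch_size, seeds):
    """Fill the template batch by batch, reshuffling it after every batch."""
    template = [None] * len(probes)
    slot_of = list(range(len(probes)))  # slot_of[probe_id] -> current slot
    for batch, start in enumerate(range(0, len(probes), batch_size)):
        for probe_id in range(start, min(start + batch_size, len(probes))):
            template[slot_of[probe_id]] = probes[probe_id]()
        template, perm = shuffle_template(template, seeds[batch])
        # Every slot moved, so update where each probe's value now lives.
        slot_of = [perm[slot] for slot in slot_of]
    return template, slot_of


# Ten hypothetical probes, three per batch, one seed per batch.
probes = [lambda i=i: f"value-{i}" for i in range(10)]
template, slot_of = collect(probes, batch_size=3, seeds=[11, 22, 33, 44])
```

Without the seeds, the final `template` is just an unordered bag of values; with them, `slot_of` recovers which slot holds which probe's result.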

The system also incorporates a dynamic challenge probe that computes values from the script's own bytecodes combined with device-specific values. This serves as a proof that the VM was actually executed in a real browser environment rather than being simulated or skipped.

The Encryption and Proof-of-Work Layers

Once the fingerprint is complete, it undergoes encryption using XTEA with a custom CBC-like chaining mode. The encryption key isn't stored plainly but is embedded in the script as an array of integers that must be expanded through one of several available functions. Each expansion function applies a series of bitwise rotations, shifts, and arithmetic operations to transform the integers into the actual 16-byte key.
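For readers unfamiliar with XTEA, the sketch below shows the standard block cipher in Python, wrapped in ordinary CBC with PKCS#7 padding. Several parts are assumptions: the article says only that the chaining is "CBC-like", so standard CBC is a stand-in; the big-endian byte order is a guess; and `expand_key` is a hypothetical example of turning four embedded integers into a 16-byte key, not one of Kasada's actual expansion functions.

```python
import struct

DELTA = 0x9E3779B9
MASK = 0xFFFFFFFF


def _mix(v, s, k):
    """XTEA round function on one 32-bit half."""
    return (((((v << 4) & MASK) ^ (v >> 5)) + v) ^ (s + k)) & MASK


def xtea_encrypt_block(block, key, rounds=32):
    v0, v1 = struct.unpack(">2I", block)  # byte order is an assumption
    k = struct.unpack(">4I", key)
    s = 0
    for _ in range(rounds):
        v0 = (v0 + _mix(v1, s, k[s & 3])) & MASK
        s = (s + DELTA) & MASK
        v1 = (v1 + _mix(v0, s, k[(s >> 11) & 3])) & MASK
    return struct.pack(">2I", v0, v1)


def xtea_decrypt_block(block, key, rounds=32):
    v0, v1 = struct.unpack(">2I", block)
    k = struct.unpack(">4I", key)
    s = (DELTA * rounds) & MASK
    for _ in range(rounds):
        v1 = (v1 - _mix(v0, s, k[(s >> 11) & 3])) & MASK
        s = (s - DELTA) & MASK
        v0 = (v0 - _mix(v1, s, k[s & 3])) & MASK
    return struct.pack(">2I", v0, v1)


def expand_key(ints):
    """Hypothetical expansion: rotate and mix four ints into a 16-byte key."""
    words = []
    for i, x in enumerate(ints):
        x &= MASK
        rotated = ((x << 7) | (x >> 25)) & MASK
        words.append(((rotated ^ (x >> 3)) + i) & MASK)
    return struct.pack(">4I", *words)


def cbc_encrypt(data, key, iv):
    pad = 8 - len(data) % 8
    data += bytes([pad]) * pad  # PKCS#7 padding (assumed)
    prev, out = iv, b""
    for i in range(0, len(data), 8):
        block = bytes(a ^ b for a, b in zip(data[i:i + 8], prev))
        prev = xtea_encrypt_block(block, key)
        out += prev
    return out


def cbc_decrypt(data, key, iv):
    prev, out = iv, b""
    for i in range(0, len(data), 8):
        block = data[i:i + 8]
        plain = xtea_decrypt_block(block, key)
        out += bytes(a ^ b for a, b in zip(plain, prev))
        prev = block
    return out[:-out[-1]]  # strip the padding
```

A round trip such as `cbc_decrypt(cbc_encrypt(msg, key, iv), key, iv) == msg` with `key = expand_key([1, 2, 3, 4])` and an 8-byte `iv` is a quick sanity check of the sketch.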

The system also implements a proof-of-work mechanism through p.js, an earlier version of the VM that establishes the SHA-256 chaining parameters. Each site has its own seed phrase that remains constant for that domain, combined with difficulty parameters and sub-challenge counts retrieved from the /mfc endpoint. The proof-of-work requires finding nonces that produce hash values meeting specific difficulty thresholds, with multiple sub-challenges that must be solved sequentially.
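The general shape of a chained, hash-based proof-of-work can be sketched in a few lines of Python. The threshold rule (digest interpreted as an integer below `2**256 // difficulty`), the nonce encoding, and the way each digest feeds the next sub-challenge are assumptions for illustration; the article does not give Kasada's exact construction, and `"example-seed"` is a placeholder rather than a real site seed.

```python
import hashlib


def solve_subchallenge(prefix, difficulty):
    """Find a nonce whose SHA-256 digest falls below the assumed threshold."""
    threshold = (1 << 256) // difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(prefix + str(nonce).encode()).digest()
        if int.from_bytes(digest, "big") < threshold:
            return nonce, digest
        nonce += 1


def solve_chain(seed, difficulty, count):
    """Solve sub-challenges in order; each digest seeds the next one."""
    prev = hashlib.sha256(seed.encode()).digest()
    nonces = []
    for _ in range(count):
        nonce, prev = solve_subchallenge(prev, difficulty)
        nonces.append(nonce)
    return nonces


def verify_chain(seed, difficulty, nonces):
    """Re-derive the chain and check every nonce with one hash each."""
    threshold = (1 << 256) // difficulty
    prev = hashlib.sha256(seed.encode()).digest()
    for nonce in nonces:
        prev = hashlib.sha256(prev + str(nonce).encode()).digest()
        if int.from_bytes(prev, "big") >= threshold:
            return False
    return True


nonces = solve_chain("example-seed", difficulty=16, count=3)
```

Chaining forces the sub-challenges to be solved in sequence, since the input to each one is unknown until the previous one is finished, while verification stays cheap at one hash per nonce.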

Implications for the Ecosystem

The successful reverse-engineering of Kasada's system reveals several important trends in the ongoing battle between automation and detection:

Increasing Sophistication: Anti-bot systems are evolving from simple challenges to complete computational environments that are expensive to replicate, raising the barrier for bot developers.

The Value of Real Browser Data: Systems like Kasada emphasize collecting actual browser behavior rather than just static properties, which makes synthetic or emulated environments easier to detect.

The Temporal Arms Race: The time-based expiry of scripts and dynamic parameter changes create a moving target that requires constant maintenance by bot developers.

Economic Factors: As detection systems become more sophisticated, the cost of effective automation increases, potentially creating a natural economic equilibrium where only high-value applications justify the investment.

Counter-Perspectives and Limitations

While the researcher's achievement is impressive, several important counter-considerations should be acknowledged:

The Cat-and-Mouse Game: This represents a single point in an ongoing struggle. Kasada and similar providers will undoubtedly update their systems in response to such disclosures, potentially implementing additional layers of obfuscation or detection.

Specialized vs. General Solutions: The researcher's solver appears optimized for specific implementations. General-purpose automation tools face a much more complex challenge in handling diverse anti-bot systems across different platforms.

Resource Requirements: Even with this solver, the computational cost of running full VM emulation and solving proof-of-work challenges makes large-scale automation significantly more expensive than simpler approaches.

Legal and Ethical Boundaries: While the researcher frames this as an intellectual challenge, the same techniques could be used for malicious purposes, highlighting the dual-use nature of security research.

The Future of Browser Automation

This case study illustrates a fundamental truth about the future of web automation: the distinction between "human" and "bot" is becoming increasingly blurred. Modern systems don't just check whether a request comes from a browser; they evaluate whether the behavior matches expected human patterns across multiple dimensions.

The Kasada system represents a significant step toward behavioral biometrics in web security, analyzing not just what a browser claims to be, but how it behaves under various conditions. This trend will likely continue, with systems potentially incorporating machine learning to detect subtle anomalies in interaction patterns that would be difficult to replicate artificially.

For organizations relying on automation, the implications are clear: simple approaches will increasingly fail. The future of effective web automation may lie in emulating human behavior at a deeper level, potentially through browser extensions that modify actual human behavior to match expected patterns, or through sophisticated simulation frameworks that can reproduce the nuanced responses of human users.

The researcher's work demonstrates that even sophisticated systems like Kasada's are vulnerable to determined reverse-engineering. However, it also highlights the increasing complexity and resource requirements of effective bypass, suggesting that the most sustainable approach may be not to defeat detection systems, but to work within frameworks that acknowledge and accommodate legitimate automation needs.

For those interested in the technical implementation, the researcher has made available disassembled and decompiled versions of the scripts, along with collector tools for real fingerprint data. This represents a valuable resource for understanding both the current state of anti-bot technology and the methods being used to analyze it.

The ongoing evolution of these systems promises to keep researchers, developers, and security professionals engaged in a complex dance of obfuscation, analysis, and counter-measures that will likely continue to shape the landscape of web automation for years to come.
