The Thundering Herd problem occurs when many processes or requests that were waiting for the same event hit a system at the same moment, which can trigger cascading failures across a distributed system.

The Thundering Herd Problem

Imagine H&M announces a sale at 9:00 AM. A large crowd is waiting outside the doors. When the doors open, everyone rushes in at the same time. Store staff cannot manage the crowd, the doorway jams, and there is no room to move inside the store. This is the Thundering Herd problem.

In short, the Thundering Herd problem occurs when a large number of clients are waiting for the same event and, the moment it happens, all of them act at once.

Where does this problem occur?

Caching: When a cache entry expires and all processes try to hit the database at the same time.

Database: When many application instances query the same record at the same moment.

Load Balancers: When a server comes back online and is suddenly hit with multiple requests to handle.

Real-world scenario: Cache Expiration

The normal flow is: App -> Cache -> Database. When a user sends a request, the app first checks the cache and returns the data if it is there. If the data is not in the cache, the app reads it from the database and typically stores the result in the cache for later requests.

The Thundering Herd Problem: Let's suppose the cache expires every 5 minutes. If 1,000 users request data exactly at the 5-minute mark when the cache expires:

The first request goes: App -> Cache -> Database

The second request goes: App -> Cache -> Database

...and so on, until all 1,000 requests hit the database, because none of them finds the entry repopulated before its own lookup.
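The stampede described above can be reproduced with a small sketch. The names `simulate_naive_herd`, `query_database`, and `handle_request` are hypothetical, the dictionary stands in for a real cache, and the barrier is an assumption that models a query slow enough that every request arrives before the first one finishes.

```python
import threading


def simulate_naive_herd(num_requests=50):
    """Return how many database queries a cold cache causes (illustrative only)."""
    cache = {}                      # stands in for a real cache; starts empty (just expired)
    db_calls = 0
    counter_lock = threading.Lock()
    # Assumption: the query is slow, so no load finishes until all requests have arrived.
    all_arrived = threading.Barrier(num_requests)

    def query_database(key):
        nonlocal db_calls
        with counter_lock:
            db_calls += 1
        all_arrived.wait(timeout=10)
        return f"row-for-{key}"

    def handle_request(key):
        if key in cache:            # App -> Cache
            return cache[key]
        value = query_database(key) # App -> Cache -> Database
        cache[key] = value
        return value

    threads = [
        threading.Thread(target=handle_request, args=("product:42",))
        for _ in range(num_requests)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return db_calls
```

Under these assumptions every request misses, so `simulate_naive_herd(50)` reports 50 database queries for a single key.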

How do traffic spikes overload the system?

When multiple requests hit simultaneously:

- CPU usage can spike toward 100%.

- The database connection pool is exhausted.

- The cache offers no protection, because every request is a miss.

- Response time increases significantly.

Why is it more dangerous in a distributed system?

In a distributed system, each service depends on the others:

- If the cache fails, multiple requests hit the database.

- If the database is slow, the application threads block.

- If the application stops responding in time, the load balancer retries the requests.

- Those retries add even more traffic to a system that is already overloaded.

Once a spike occurs, it can bring multiple services down in a cascading failure.

Real-world examples

A popular show is released and everyone starts watching it at the same time.

A cricket match, such as an IPL game, is in progress and everyone refreshes the score at the same time.

Amazon starts a sale at a fixed time and everyone tries to buy an item at that exact second.

So, the Thundering Herd problem is not just about high traffic; it is about everyone requesting the same data at the same time. It can be mitigated by controlling and spreading out the traffic.

Solutions to the Thundering Herd Problem

1. Cache Warming

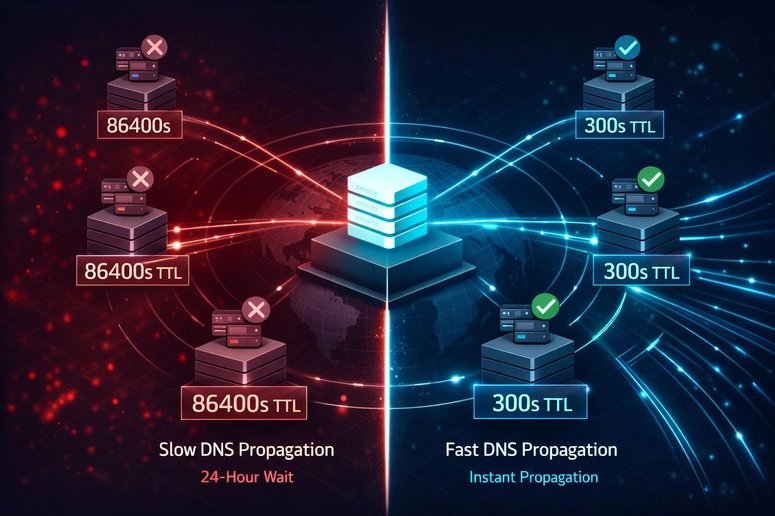

Instead of letting the cache expire completely, implement cache warming strategies. When a cache entry's TTL is close to expiring, refresh the entry in the background. By the time the old TTL would have run out, the cache already holds fresh data, so requests do not see a miss.
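A minimal sketch of this refresh-ahead idea follows. The class name `RefreshAheadCache`, the `loader` callback, and the `refresh_window` parameter are hypothetical, and the in-process dictionary stands in for whatever cache the system actually uses.

```python
import threading
import time


class RefreshAheadCache:
    """Serve cached values and refresh them in the background shortly before expiry."""

    def __init__(self, loader, ttl, refresh_window, clock=time.monotonic):
        self.loader = loader                  # hypothetical function that reads the database
        self.ttl = ttl
        self.refresh_window = refresh_window  # start refreshing this many seconds before expiry
        self.clock = clock
        self.lock = threading.Lock()
        self.entries = {}                     # key -> (value, expires_at)
        self.refreshing = {}                  # key -> background thread refreshing that key

    def _load(self, key):
        value = self.loader(key)
        with self.lock:
            self.entries[key] = (value, self.clock() + self.ttl)
            self.refreshing.pop(key, None)
        return value

    def get(self, key):
        now = self.clock()
        with self.lock:
            entry = self.entries.get(key)
            if entry is not None and entry[1] > now:
                value, expires_at = entry
                close_to_expiry = expires_at - now <= self.refresh_window
                if close_to_expiry and key not in self.refreshing:
                    # Only one background refresh per key at a time.
                    worker = threading.Thread(target=self._load, args=(key,), daemon=True)
                    self.refreshing[key] = worker
                    worker.start()
                return value                  # still valid: serve it immediately
        return self._load(key)                # cold or expired: load synchronously
```

Note that a cold or fully expired key still loads synchronously in this sketch, so it would normally be combined with one of the other techniques in this list.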

2. Request Coalescing

Implement request coalescing where multiple identical requests are combined into a single request. The first request goes to the database, and subsequent identical requests wait for that result rather than hitting the database independently.
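One way to sketch request coalescing inside a single process is shown below. The `SingleFlight` class and its `do` method are hypothetical names chosen for this illustration, not a standard library API, and coalescing across several processes or hosts would need a shared lock or similar mechanism that this sketch does not cover.

```python
import threading


class _Call:
    """Bookkeeping for one in-flight load shared by all waiting callers."""

    def __init__(self):
        self.done = threading.Event()
        self.result = None
        self.error = None


class SingleFlight:
    def __init__(self):
        self.lock = threading.Lock()
        self.in_flight = {}  # key -> _Call

    def do(self, key, fn):
        """Run fn() once per key at a time; concurrent callers share the result."""
        with self.lock:
            call = self.in_flight.get(key)
            leader = call is None
            if leader:
                call = _Call()
                self.in_flight[key] = call
        if leader:
            try:
                call.result = fn()          # only the first caller hits the database
            except Exception as exc:
                call.error = exc            # followers see the same failure
            finally:
                with self.lock:
                    del self.in_flight[key]
                call.done.set()
        else:
            call.done.wait()                # followers wait for the leader's result
        if call.error is not None:
            raise call.error
        return call.result
```

A caller would wrap the database read, for example `flight.do("user:1", lambda: load_user(1))`, where `load_user` is whatever function performs the query.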

3. Probabilistic Expiration

Instead of expiring all cache entries at exactly the same time, add a small random jitter to expiration times. This spreads out cache misses over time rather than having them all occur simultaneously.
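As a rough illustration, jitter can be applied when the TTL is chosen. The function name `jittered_ttl` and the default 10% spread are assumptions made for this sketch.

```python
import random


def jittered_ttl(base_ttl, jitter_fraction=0.1, rng=random):
    """Return base_ttl shifted by a random amount within +/- jitter_fraction."""
    spread = base_ttl * jitter_fraction
    return base_ttl + rng.uniform(-spread, spread)


# Example: entries written together with a 5-minute TTL now expire
# somewhere between roughly 270 and 330 seconds instead of all at 300.
ttl_seconds = jittered_ttl(300)
```

A wider spread flattens the spike further at the cost of less predictable freshness.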

4. Rate Limiting and Backpressure

Implement rate limiting at various layers of your system. When the system detects it's under heavy load, it can start rejecting or delaying requests, giving the system time to recover.
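A token bucket is one common way to implement this kind of limit. The sketch below is a single-threaded illustration with hypothetical names (`TokenBucket`, `allow`); a production limiter would also need locking or a shared store.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity          # start full
        self.updated_at = clock()

    def allow(self, cost=1.0):
        now = self.clock()
        elapsed = now - self.updated_at
        # Refill for the time that has passed, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated_at = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True                 # request may proceed
        return False                    # caller should reject or delay the request
```

When `allow()` returns `False`, the application can reject the request (for example with an HTTP 429 response) or queue it, which is the backpressure half of the technique.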

5. Circuit Breakers

Use circuit breakers to prevent cascading failures. When a service is failing or slow, the circuit breaker trips and prevents further requests from going to that service, allowing it time to recover.
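The behaviour described above can be sketched as a small state machine. The names `CircuitBreaker` and `CircuitOpenError`, the failure threshold, and the reset timeout are all assumptions for illustration, and the sketch is not thread-safe.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is rejected without being tried."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None           # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; failing fast")
            # Timeout elapsed: half-open, let one trial call through.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()   # trip, or re-trip after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

While the circuit is open, callers fail immediately instead of piling more requests onto the struggling service.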

The Mathematical Reality

Partial failure is the normal state of a large distributed system, which gives herd effects many chances to start. If you have N services that fail independently and each has a 1% chance of failing at any given time, the probability that at least one service is failing is:

P(failure) = 1 - (0.99)^N

With 100 services, there's a 63% chance that at least one is failing at any given moment. This means your system must be designed to handle partial failures gracefully.
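The figure can be checked with a few lines of code. The function name `p_at_least_one_failure` is hypothetical, and the calculation assumes failures are independent.

```python
def p_at_least_one_failure(n_services, p_single=0.01):
    """Probability that at least one of n independent services is failing."""
    return 1.0 - (1.0 - p_single) ** n_services


for n in (1, 10, 100, 500):
    print(f"N={n:>3}: {p_at_least_one_failure(n):.1%}")
```

For N = 100 this evaluates to about 0.634, matching the 63% quoted above.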

Monitoring and Detection

To detect Thundering Herd problems early:

- Monitor cache hit rates - sudden drops indicate potential herd behavior

- Track database connection pool usage - spikes suggest cache misses

- Measure request latency distributions - sudden increases may indicate system overload

- Set up alerts for unusual traffic patterns

Real-World Impact

In 2017, GitHub experienced a significant outage due to a Thundering Herd problem. When their MySQL database experienced a brief network partition, all application servers simultaneously tried to reconnect, overwhelming the database and causing a cascading failure that took down their entire service.

The financial impact of such outages can be substantial. For a service with 1 million users and an average revenue of $10 per user per month, monthly revenue is $10,000,000. Spread over the 720 hours of a 30-day month, one hour of downtime costs approximately $13,889 in lost revenue, not counting the damage to user trust and brand reputation.

Prevention Strategies

Design for failure: Assume that caches will expire, databases will slow down, and networks will partition. Build your system to handle these failures gracefully.

Implement exponential backoff: When services fail, clients should retry with exponentially increasing delays to prevent synchronized retry storms.

Use bulkhead patterns: Isolate different parts of your system so that a failure in one area doesn't cascade to others.

Monitor queue depths: Sudden increases in queue depths across multiple services often indicate herd behavior.

Test under load: Regularly test your system with realistic traffic patterns, including scenarios where many requests hit simultaneously.
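The exponential backoff item above can be sketched as follows. The function name `retry_with_backoff` and its defaults are assumptions for illustration; the random delay ("full jitter") is added so that clients that failed together do not retry together.

```python
import random
import time


def retry_with_backoff(fn, max_attempts=5, base=0.1, cap=10.0,
                       sleep=time.sleep, rng=random):
    """Call fn(), retrying on any exception with exponentially growing, jittered delays."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts - 1:
                raise                                # out of attempts: surface the error
            ceiling = min(cap, base * 2 ** attempt)  # 0.1, 0.2, 0.4, ... up to cap
            sleep(rng.uniform(0, ceiling))           # full jitter spreads clients apart
```

In practice the `except` clause would be narrowed to errors that are safe to retry, such as connection failures or timeouts.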

Conclusion

The Thundering Herd problem is a fundamental challenge in distributed systems design. It's not just about handling high traffic, but about managing the timing and distribution of that traffic. By understanding the patterns that lead to herd behavior and implementing appropriate mitigation strategies, you can build more resilient systems that continue to function even under extreme load conditions.

The key insight is that distributed systems are not just about scaling up capacity, but about managing the interactions between components. When everyone rushes in at once, the system fails not because it lacks capacity, but because it cannot handle the synchronization of demand.
