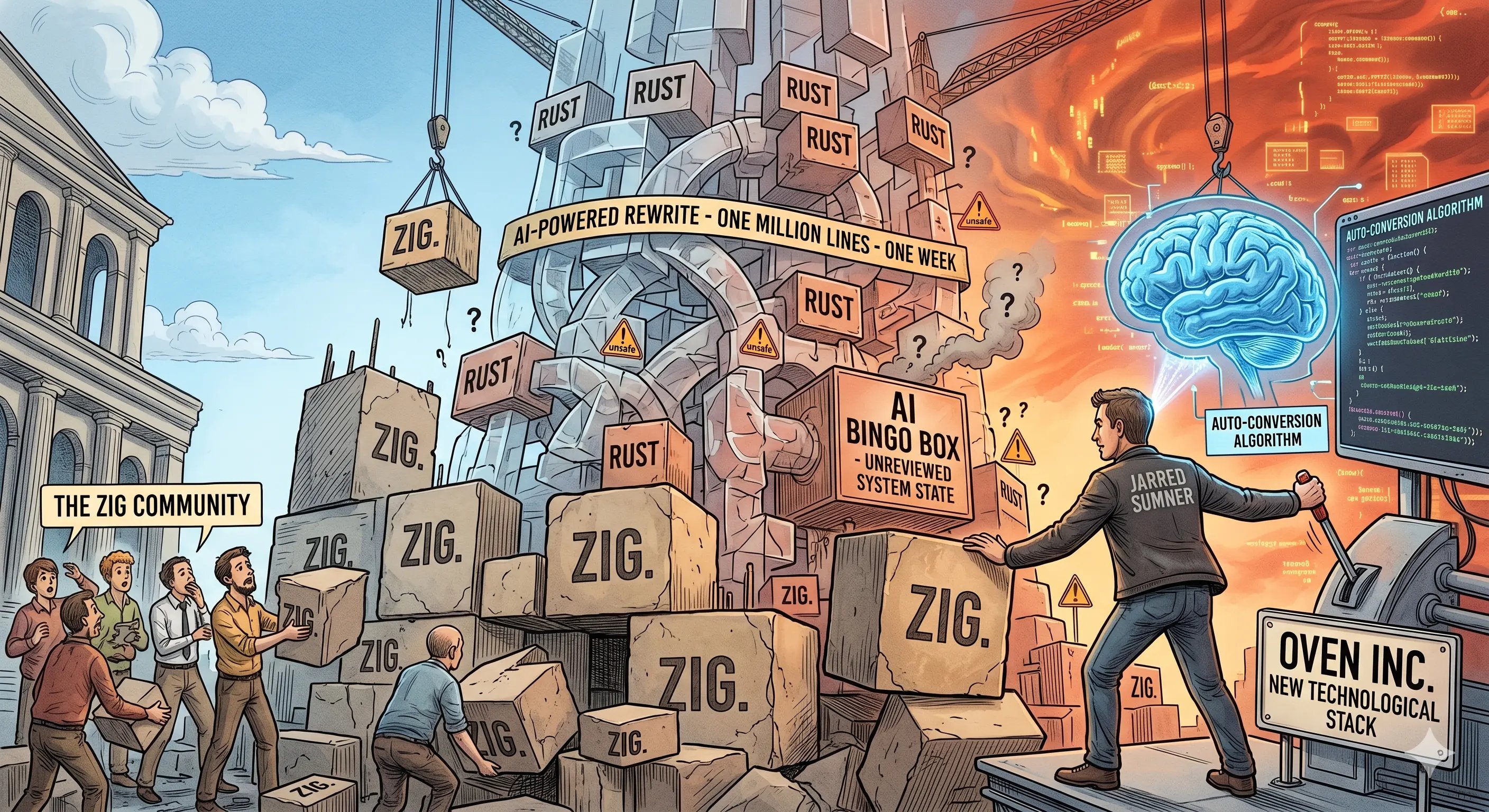

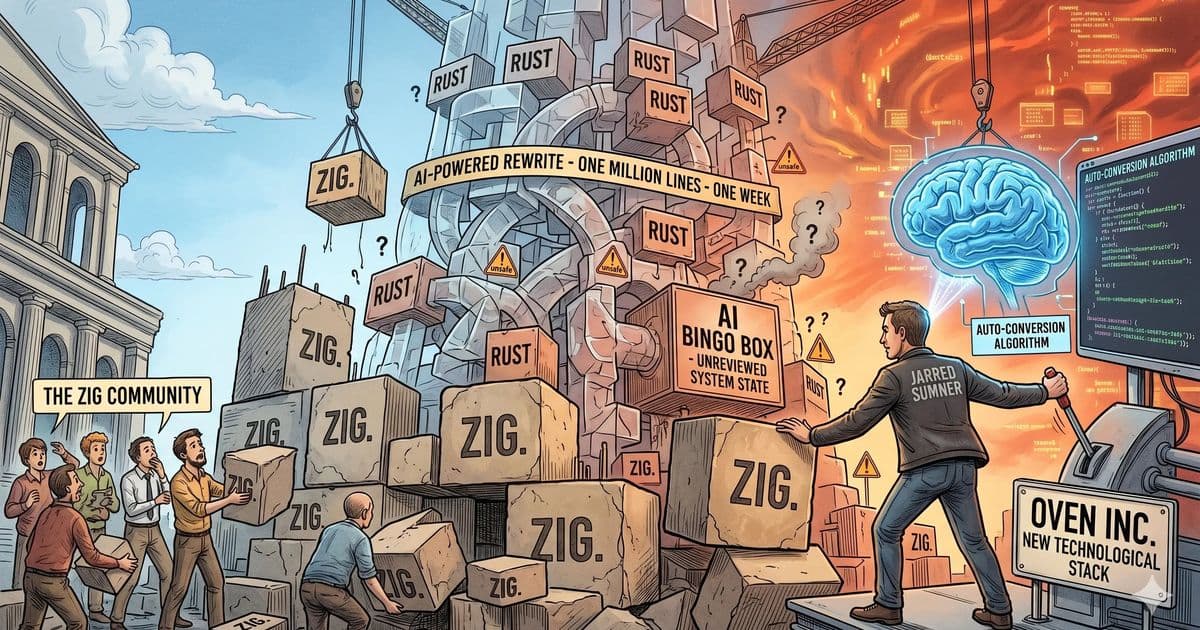

Bun’s recent migration from Zig to Rust, completed as a six‑day, AI‑driven rewrite, does not prove Zig inadequate. Instead, it raises deeper questions about maintainability, human review, and the mismatch between a rapid‑iteration startup culture and manual memory management.

Introduction

The headlines about Bun’s Rust rewrite focus on the speed of the port and the novelty of AI‑generated code. What remains under‑discussed is the architectural lineage that makes this rewrite possible and the long‑term maintenance risk introduced when a production‑grade JavaScript runtime is rewritten by a language model without a single human reading the entire diff. This article unpacks the technical, cultural, and risk‑management dimensions of the transition, arguing that the real story is not “Zig failed, Rust succeeded,” but rather “a startup’s rapid‑iteration model collided with the realities of manual memory management, and the outcome now hinges on how well humans can eventually comprehend a 6,755‑commit AI‑crafted codebase.”

The Zig Foundation

Jarred Sumner chose Zig for Bun’s original implementation not because it was fashionable, but because Zig offered three decisive advantages for a two‑person team building a high‑performance JavaScript runtime:

- Direct memory manipulation without the overhead of a garbage collector, allowing Bun to achieve low latency on the hot path.

- Straightforward C interop, which made it easy to embed an existing JavaScript engine (Bun builds on JavaScriptCore rather than V8) and to experiment with custom JIT pipelines.

- Predictable compilation model, giving the team confidence that the binary they shipped matched the source they wrote.

These properties shaped Bun’s core data structures—its arena allocators, its byte‑code buffers, and the low‑level concurrency primitives that still power the runtime today. As Jarred has repeatedly said, the architecture doesn’t change; the data structures don’t change. The Rust rewrite inherits this skeleton wholesale; it is a translation of a Zig‑born design, not a ground‑up re‑imagining.
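To make the idea of carrying an arena‑style structure into Rust more concrete, here is a minimal sketch of a generational arena. It is an illustration under stated assumptions, not code from Bun: the names `Arena`, `Handle`, and `Slot` are hypothetical, and a real runtime's allocators are far more involved. The point it tries to show is that an index‑based arena keeps the same shape in either language, while a generation counter turns a stale handle (the logical equivalent of a use‑after‑free or double free) into a checked `None`.

```rust
/// Handle into the arena: a slot index plus the generation it was issued for.
/// All names here are hypothetical and chosen for illustration only.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct Handle {
    index: usize,
    generation: u32,
}

struct Slot<T> {
    generation: u32,
    value: Option<T>,
}

/// A small generational arena: slots are reused, handles are never dangling.
pub struct Arena<T> {
    slots: Vec<Slot<T>>,
    free: Vec<usize>,
}

impl<T> Arena<T> {
    pub fn new() -> Self {
        Arena { slots: Vec::new(), free: Vec::new() }
    }

    /// Store a value, reusing a freed slot when one is available.
    pub fn alloc(&mut self, value: T) -> Handle {
        if let Some(index) = self.free.pop() {
            let slot = &mut self.slots[index];
            slot.value = Some(value);
            Handle { index, generation: slot.generation }
        } else {
            self.slots.push(Slot { generation: 0, value: Some(value) });
            Handle { index: self.slots.len() - 1, generation: 0 }
        }
    }

    /// Look up a value; a stale handle yields `None` instead of old data.
    pub fn get(&self, handle: Handle) -> Option<&T> {
        let slot = self.slots.get(handle.index)?;
        if slot.generation == handle.generation {
            slot.value.as_ref()
        } else {
            None
        }
    }

    /// Release a slot. A stale handle (a would-be double free) returns `None`.
    pub fn free(&mut self, handle: Handle) -> Option<T> {
        let slot = self.slots.get_mut(handle.index)?;
        if slot.generation != handle.generation {
            return None;
        }
        let value = slot.value.take()?;
        // Bump the generation so every outstanding handle to this slot goes stale.
        slot.generation = slot.generation.wrapping_add(1);
        self.free.push(handle.index);
        Some(value)
    }
}
```

Note that the borrow checker alone does not catch a stale index; in this sketch the generation check does that work at run time. That distinction matters when judging how much of a ported design the compiler can actually vouch for.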

The Rewrite in Numbers

- 6,755 commits merged between May 8 and May 14, 2026.

- Branch: `claude/phase-a-port`.

- Reviewers: `coderabbitai[bot]`, `claude[bot]`; the sole human reviewer (alii) never opened the PR.

- Test outcome: “All tests pass” on macOS, Linux, and Windows.

The sheer volume of changes, combined with the fact that the only reviewers were bots, means that no human has read the entire diff. This is not a matter of style; it is a structural property of the codebase that will affect future debugging, onboarding, and feature development.

Why Passing Tests Is Not a Panacea

A test suite can certify that known behavior works under known conditions, but it cannot guarantee:

- Correct handling of error paths that are rarely exercised.

- Boundary‑condition behavior when memory pressure spikes or when the runtime is re‑entered from native extensions.

- Concurrent state consistency under high‑throughput workloads.

- Conformance of the memory model to the intended invariants when the JIT emits code that crosses the Rust–C boundary.

Jarred himself has admitted that the Rust version still suffers from memory‑ordering bugs when JavaScript re‑enters native code. Those bugs are not caught by the compiler; they require human insight into the original design’s subtle invariants—insights that are currently locked behind an AI‑generated code wall.
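The specific bugs are not described in detail here, so the following is only a generic sketch of why memory ordering escapes the compiler. It assumes a hypothetical `Mailbox` type in which one thread publishes a payload and another waits for it; none of these names come from Bun. The version shown uses a Release store paired with an Acquire load, which is what guarantees the reader sees the payload.

```rust
use std::sync::atomic::{AtomicBool, AtomicU64, Ordering};
use std::sync::Arc;
use std::thread;

/// Hypothetical shared state: a payload plus a flag that publishes it.
pub struct Mailbox {
    payload: AtomicU64,
    ready: AtomicBool,
}

impl Mailbox {
    pub fn new() -> Self {
        Mailbox { payload: AtomicU64::new(0), ready: AtomicBool::new(false) }
    }

    /// Writer side: store the payload, then publish it.
    pub fn publish(&self, value: u64) {
        self.payload.store(value, Ordering::Relaxed);
        // Release: the payload store above becomes visible to any thread
        // that observes `ready == true` through an Acquire load.
        self.ready.store(true, Ordering::Release);
    }

    /// Reader side: spin until the flag is set, then read the payload.
    pub fn wait_and_take(&self) -> u64 {
        // Acquire pairs with the Release store in `publish`.
        while !self.ready.load(Ordering::Acquire) {
            std::hint::spin_loop();
        }
        self.payload.load(Ordering::Relaxed)
    }
}

/// Publish `value` from a second thread and return what the caller observed.
pub fn round_trip(value: u64) -> u64 {
    let mailbox = Arc::new(Mailbox::new());
    let writer = {
        let mailbox = Arc::clone(&mailbox);
        thread::spawn(move || mailbox.publish(value))
    };
    let seen = mailbox.wait_and_take();
    writer.join().expect("writer thread panicked");
    seen
}
```

If both flag operations were downgraded to `Ordering::Relaxed`, the code would still compile without warnings, and on strongly ordered hardware it would probably keep passing tests, yet the language's memory model would no longer guarantee that the reader sees the payload. Choosing the right ordering depends on knowing which writes the flag is meant to publish, and that is a design invariant rather than something the type system checks.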

The Real Technical Bet

The core risk is not “Zig vs. Rust” but whether AI‑generated, unreviewed code can be sustainably maintained. Consider two scenarios:

- Short‑term – The canary deployment runs for a few weeks, the test suite continues to pass, and the Rust compiler eliminates a class of use‑after‑free bugs that plagued the Zig version. In this window, the rewrite appears successful.

- Long‑term – Six months later a rare concurrency bug surfaces under a specific load pattern. Engineers must trace the failure through thousands of autogenerated functions, none of which have been manually inspected. The time to locate the root cause can balloon from hours to days, increasing outage cost and eroding confidence in the runtime.

The bet, therefore, is human comprehension. If the team can eventually produce a curated set of architectural documents, targeted code reviews, and a suite of property‑based tests that capture the original invariants, the risk diminishes. If not, the codebase remains a black box, and every production incident becomes a costly excavation.
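As a rough idea of what a property‑based test that captures an invariant could look like, the sketch below checks a small ring buffer against a trusted model (`VecDeque`) over many random operation sequences. Everything in it is an assumption made for illustration: `Ring`, `XorShift`, and `check_ring_matches_model` are hypothetical names, the ring buffer stands in for whatever structure actually carries the invariant, and the hand‑rolled generator only keeps the example dependency‑free. A real project would more likely reach for a dedicated crate such as `proptest` or `quickcheck`.

```rust
use std::collections::VecDeque;

/// Fixed-capacity FIFO ring buffer: the (hypothetical) code under test.
pub struct Ring {
    buf: Vec<u32>,
    head: usize, // index of the oldest element
    len: usize,
}

impl Ring {
    pub fn with_capacity(cap: usize) -> Self {
        Ring { buf: vec![0; cap], head: 0, len: 0 }
    }

    /// Append a value; returns `false` when the buffer is full.
    pub fn push(&mut self, v: u32) -> bool {
        if self.len == self.buf.len() {
            return false;
        }
        let tail = (self.head + self.len) % self.buf.len();
        self.buf[tail] = v;
        self.len += 1;
        true
    }

    /// Remove and return the oldest value, if any.
    pub fn pop(&mut self) -> Option<u32> {
        if self.len == 0 {
            return None;
        }
        let v = self.buf[self.head];
        self.head = (self.head + 1) % self.buf.len();
        self.len -= 1;
        Some(v)
    }
}

/// Tiny deterministic xorshift generator so failures can be replayed by seed.
struct XorShift(u64);

impl XorShift {
    fn next(&mut self) -> u64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x
    }
}

/// Property: for any operation sequence, `Ring` behaves like a capped `VecDeque`.
/// Returns a description of the first divergence, if there is one.
pub fn check_ring_matches_model(seed: u64, cap: usize, steps: usize) -> Result<(), String> {
    let mut rng = XorShift(seed | 1); // xorshift state must be non-zero
    let mut ring = Ring::with_capacity(cap);
    let mut model: VecDeque<u32> = VecDeque::new();
    for step in 0..steps {
        let r = rng.next();
        if r % 2 == 0 {
            let v = (r >> 32) as u32;
            let expected = model.len() < cap;
            if expected {
                model.push_back(v);
            }
            if ring.push(v) != expected {
                return Err(format!("seed {} step {}: push diverged", seed, step));
            }
        } else if ring.pop() != model.pop_front() {
            return Err(format!("seed {} step {}: pop diverged", seed, step));
        }
    }
    Ok(())
}
```

Calling `check_ring_matches_model` across a range of seeds exercises interleavings that a hand‑written unit test is unlikely to enumerate, and a failing seed reproduces the same sequence on every run. The design choice worth noting is the explicit model: writing it down forces someone to state the invariant in a form a reviewer can read.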

Why Zig Was Not “Inadequate”

Jarred’s public rationale for the migration cites a proliferation of use‑after‑free, double‑free, and leak bugs in the Zig codebase. Those symptoms are real, but the inference that “Zig doesn’t work” misidentifies the causal chain. The true diagnosis is:

- Cognitive tax – Manual memory management in Zig imposes a mental overhead that conflicted with Bun’s culture of rapid iteration and frequent releases.

- Team expertise – Zig’s safety model assumes a team comfortable with low‑level discipline; Bun’s small, fast‑moving team prioritized speed over exhaustive manual audits.

Other projects, such as TigerBeetle, thrive with Zig because their engineering culture aligns with rigorous memory discipline. The mismatch is between Bun’s business model (fast feature delivery, frequent external contributions) and Zig’s design goals (maximal control, minimal runtime abstraction). The hammer is fine; it was simply the wrong tool for the job at this stage.

Implications for the Ecosystem

- For startups – The Bun case illustrates that a language’s technical merits must be weighed against the organization’s capacity to enforce its safety guarantees. Choosing a “mainstream” language like Rust can lower the barrier to hiring and reviewing, but it does not eliminate the need for deep architectural understanding.

- For AI‑assisted development – The rewrite is a proof‑of‑concept that large language models can translate a sizeable codebase quickly. However, the lack of human oversight highlights the current limits of AI: it can preserve local semantics but struggles with global invariants that reside only in the original author’s mental model.

- For the Zig community – The episode should not be interpreted as a repudiation of Zig. Instead, it underscores the importance of matching project constraints (team size, release cadence, risk appetite) with the language’s ergonomics.

Counter‑Perspectives

- Optimistic view – Some argue that the sheer speed of the rewrite demonstrates that AI can become a viable “first‑draft” author for low‑level systems, freeing engineers to focus on higher‑level design. If the community invests in tooling that surfaces hidden invariants (e.g., model‑checking, formal specifications), the maintenance burden could be mitigated.

- Skeptical view – Others contend that any production system built without a thorough human audit is a liability, regardless of test coverage. They point to historical incidents where subtle memory‑ordering bugs caused catastrophic failures, warning that the Bun rewrite may repeat those patterns under a different veneer.

Both positions have merit; the truth likely lies in a middle ground where AI‑generated code is augmented by systematic human review, static analysis, and property‑based testing before it ever reaches production.

Conclusion

Bun’s Rust rewrite is less a verdict on Zig’s capabilities and more a case study in how technical debt, cultural fit, and AI‑driven code generation intersect. In the short term, the Rust compiler’s safety guarantees should rule out many of the memory bugs that plagued the Zig implementation, though not the memory‑ordering and invariant‑level bugs the compiler cannot see. In the long term, the maintainability of a 6,755‑commit, largely unread codebase will determine whether the rewrite proves sustainable or becomes a cautionary tale.

The real question for engineers and investors alike is not which language is superior, but how we ensure that the blueprints of a complex system remain legible to the people who must fix them when the inevitable leak appears.
