Stephen Wolfram's decades-long quest to make the world computable is now empowering large language models with precise computation and knowledge.

Large language models (LLMs) have demonstrated remarkable capabilities in generating human-like text, yet they fundamentally lack precision and computational depth. This gap becomes critical when tasks require accurate calculations, verified data, or complex algorithmic processing. Wolfram Research addresses this limitation by launching its computational engine as a universal Foundation Tool for AI systems, marking a strategic shift in how LLMs access structured knowledge.

For over 40 years, Wolfram Language has evolved into a comprehensive computational framework spanning mathematics, chemistry, geography, finance, and thousands of specialized domains. Unlike narrow APIs targeting specific functions, it provides unified access to curated datasets, symbolic computation, and algorithmic intelligence. Where LLMs predict patterns statistically, Wolfram's engine delivers deterministic results—whether calculating orbital trajectories, optimizing supply chains, or verifying pharmaceutical compounds.

The integration occurs through Computation-Augmented Generation (CAG), a real-time injection system where Wolfram's computational outputs feed directly into LLM workflows. Imagine an LLM discussing climate models: Instead of hallucinating data, it triggers Wolfram to generate precise atmospheric simulations on demand. This differs from Retrieval-Augmented Generation (RAG), which pulls static documents, by creating dynamic computational results tailored to each query.
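The contrast between retrieval and computation can be sketched in a few lines. This is only an illustration under stated assumptions: `DOCUMENTS`, `retrieve`, `compute_circle_area`, and `build_prompt` are hypothetical names, and Python's `math` module stands in for a computational engine. None of it is Wolfram's actual interface.

```python
import math

# Hypothetical static document store, standing in for a RAG index.
DOCUMENTS = {
    "circle area": "The area of a circle is pi times the radius squared.",
}


def retrieve(query: str) -> str:
    """RAG-style step: return a stored passage whose key appears in the query."""
    for key, passage in DOCUMENTS.items():
        if key in query.lower():
            return passage
    return ""


def compute_circle_area(radius: float) -> float:
    """CAG-style step: compute a fresh result for this specific query.

    Assumption: a local formula stands in for a call to a computational engine.
    """
    return math.pi * radius ** 2


def build_prompt(question: str, context: str) -> str:
    """Place the retrieved or computed context ahead of the user's question."""
    return f"Context: {context}\nQuestion: {question}"


question = "What is the circle area for radius 2.5?"

# RAG injects the same static passage regardless of the radius asked about.
rag_prompt = build_prompt(question, retrieve(question))

# CAG injects a value computed for this query's specific input.
cag_prompt = build_prompt(
    question, f"Computed area = {compute_circle_area(2.5):.4f}"
)
```

In this sketch the RAG prompt carries a general statement the model must still apply correctly, while the CAG prompt carries the finished number for the model to report.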

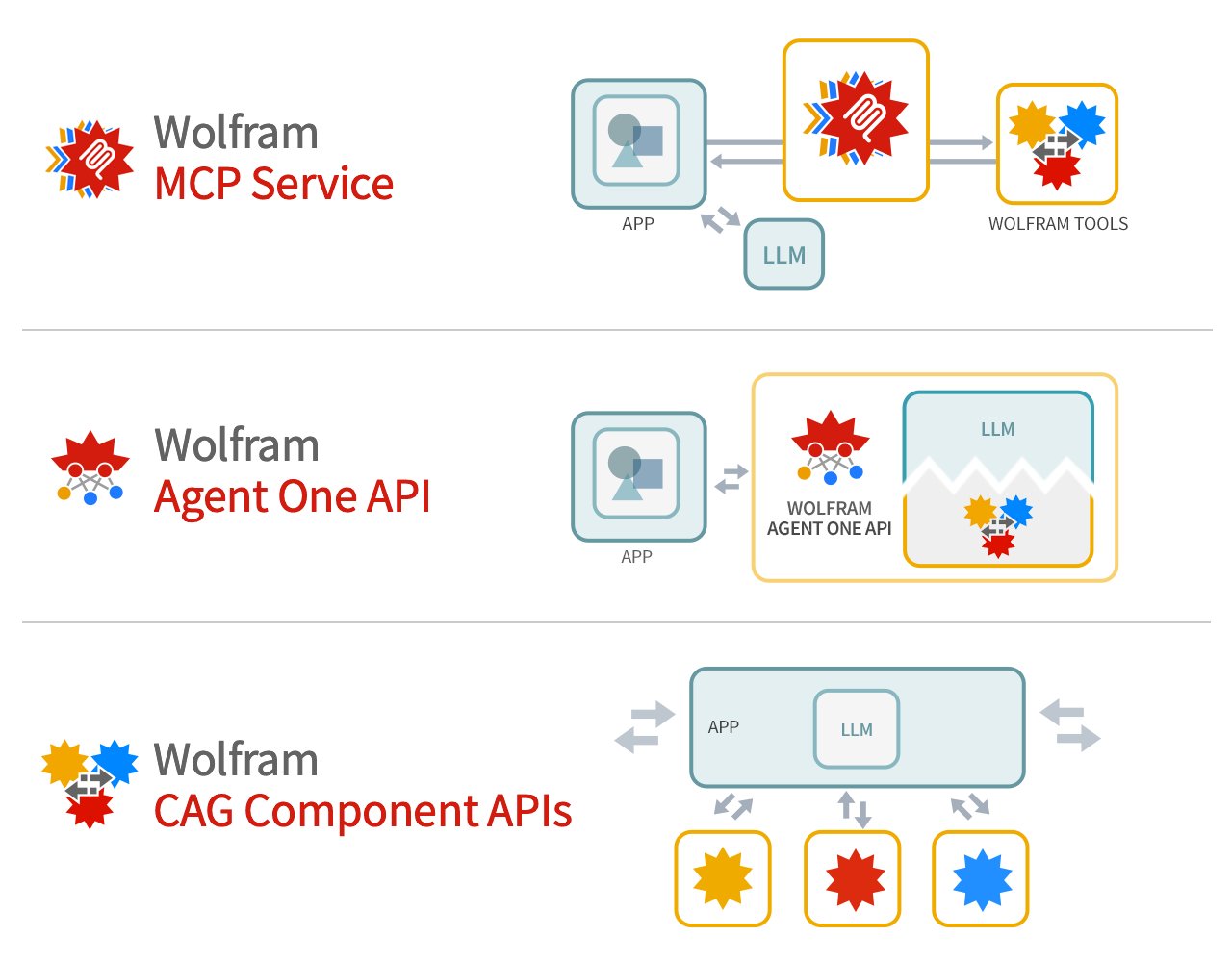

Three deployment options launched today:

- MCP Service: Direct API integration for LLM platforms using the emerging Model Context Protocol standard

- Agent One API: A pre-integrated agent combining foundation models with Wolfram's tool as a single endpoint

- CAG Component APIs: Granular access for custom implementations, supporting both cloud and on-premise deployments
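For a sense of what the MCP route involves on the wire: MCP clients invoke tools by sending JSON-RPC 2.0 requests with the method `tools/call`. The sketch below builds such a request. The tool name `wolfram_evaluate` and its `input` argument are hypothetical placeholders, since the article does not name the tools the service exposes.

```python
import json


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style tools/call request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and argument shape, for illustration only.
payload = make_tool_call(1, "wolfram_evaluate", {"input": "Integrate[x^2, x]"})
```

A real client would first list the server's tools to discover their actual names and argument schemas before sending a call like this one.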

Early implementations reveal practical trade-offs. While Wolfram eliminates mathematical hallucinations, its structured outputs require careful prompt engineering. Each call also adds latency: milliseconds for simple calculations but seconds for intensive simulations. The system shines in STEM domains yet adds little to purely creative tasks, where precision matters less.
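One common way to handle this kind of latency spread is to give each tool call a time budget and fall back to a placeholder when it is exceeded. The sketch below uses only Python's standard library. `fast_calculation`, `slow_simulation`, and `call_with_budget` are hypothetical stand-ins, and the sleep merely imitates a long-running computation.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout


def fast_calculation() -> str:
    """Stand-in for a simple computation that returns almost instantly."""
    return "4"


def slow_simulation() -> str:
    """Stand-in for an intensive simulation; the sleep imitates heavy work."""
    time.sleep(0.5)
    return "simulation result"


def call_with_budget(tool, budget_seconds: float) -> str:
    """Run a tool in a worker thread and wait at most budget_seconds for it."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(tool)
    try:
        return future.result(timeout=budget_seconds)
    except FutureTimeout:
        # The worker keeps running in the background; only the wait is abandoned.
        return "[computation still running; answer deferred]"
    finally:
        pool.shutdown(wait=False)


quick = call_with_budget(fast_calculation, budget_seconds=1.0)
deferred = call_with_budget(slow_simulation, budget_seconds=0.05)
```

Whether to defer, stream a partial answer, or simply wait is a product decision; the point is only that the caller, not the tool, decides how long a conversational turn may block.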

This launch positions Wolfram not as an AI competitor but as infrastructure. By offering its capabilities catalog through standardized interfaces, the company enables LLMs to transcend linguistic pattern-matching. Pharmaceutical researchers might validate molecular interactions; engineers could simulate prototype stress tests—all within conversational AI workflows.

As Stephen Wolfram noted, 'We've spent decades building what LLMs fundamentally cannot: a system for rigorous computation. Now AI systems can leverage it.' This convergence signals a maturation in generative AI—where statistical brilliance combines with engineered precision for trustworthy applications. Enterprises exploring integration can connect through Wolfram's partnership portal.
