New research compares 19 web frameworks by AI agent efficiency, showing that minimal frameworks require significantly fewer tokens for initial implementation, while the cost of extending an existing application is similar across frameworks.

Recent research examining AI agent efficiency across web frameworks reveals significant differences in token consumption during development tasks. The study tested 19 frameworks using Claude Code with Opus 4.6 to build and extend a simple blog application, measuring token usage, tool calls, and success rates.

Methodology

The experiment provided AI agents with identical prompts to create a blog application with specific features: homepage listing, post details, creation form, SQLite storage, and basic styling. Frameworks were categorized as:

- Minimal: Lightweight libraries like Express.js and Flask

- Full-featured: Opinionated frameworks like Rails and Django

All frameworks had prerequisite tools pre-installed (npm, cargo, etc.), and agents could execute common read/write commands. After initial implementation, agents received a follow-up prompt to add category functionality with database migrations and UI updates.
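To make the follow-up task concrete, here is a minimal sketch of the kind of migration it asks for, written against Python's built-in `sqlite3` module. The table and column names (`posts`, `categories`, `category_id`) and the helper `migrate_add_categories` are assumptions for illustration only; the study does not publish a schema here, and each framework would normally use its own migration tooling.

```python
import sqlite3

# Hypothetical starting schema for the blog; names are illustrative,
# not taken from the study.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE posts (
    id INTEGER PRIMARY KEY,
    title TEXT NOT NULL,
    body TEXT NOT NULL,
    created_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);
INSERT INTO posts (title, body) VALUES ('Hello', 'First post');
""")


def migrate_add_categories(conn: sqlite3.Connection) -> None:
    """Add a categories table and link posts to it, keeping existing rows."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS categories ("
        "id INTEGER PRIMARY KEY, name TEXT NOT NULL UNIQUE)"
    )
    # PRAGMA table_info returns one row per column; index 1 is the column name.
    columns = [row[1] for row in conn.execute("PRAGMA table_info(posts)")]
    if "category_id" not in columns:
        # Existing posts get NULL here, i.e. they start out uncategorized.
        conn.execute(
            "ALTER TABLE posts ADD COLUMN category_id INTEGER "
            "REFERENCES categories(id)"
        )
    conn.commit()


migrate_add_categories(conn)
```

The column check makes the migration safe to run more than once, which matters when an agent retries a failed step.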

Initial Implementation Results

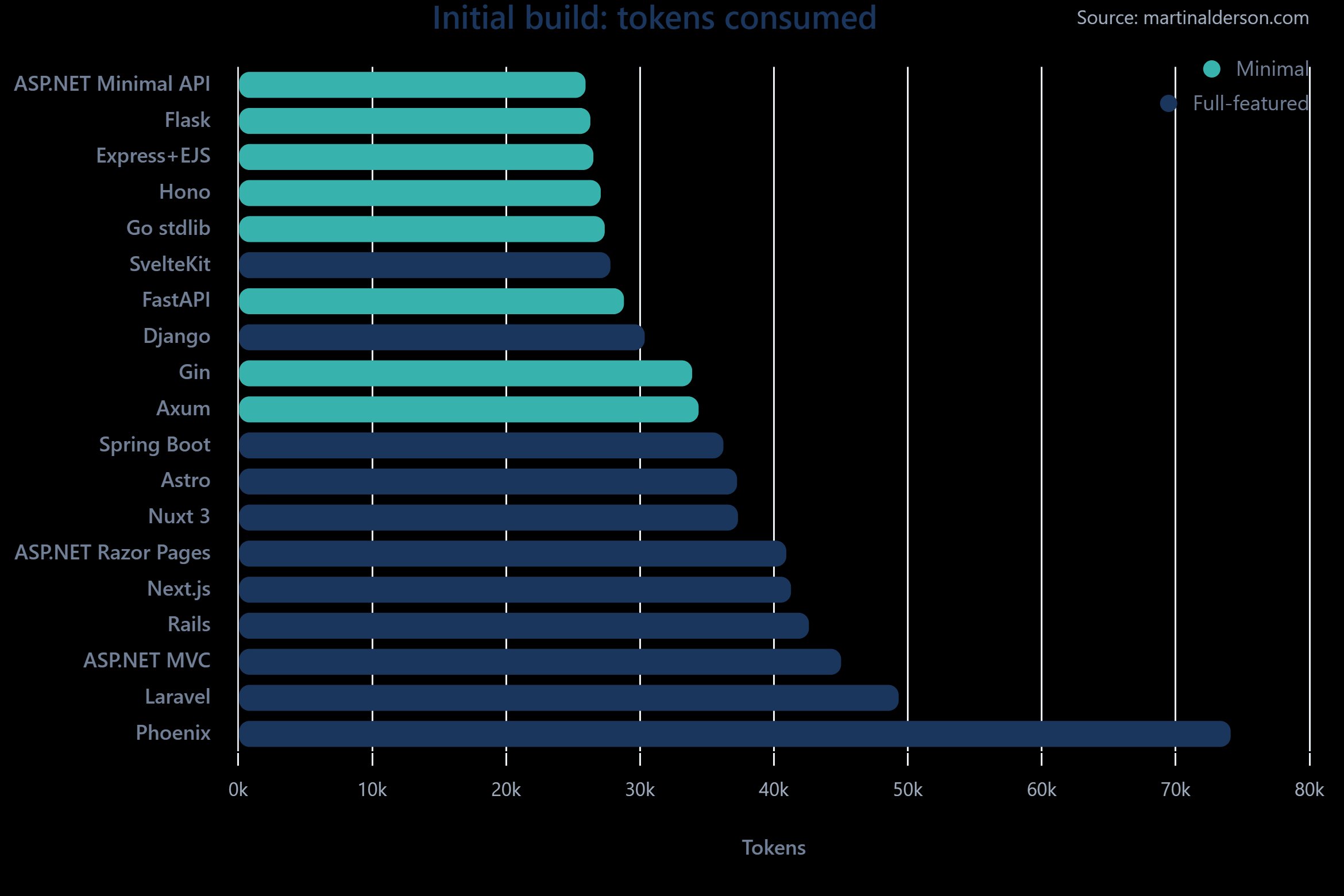

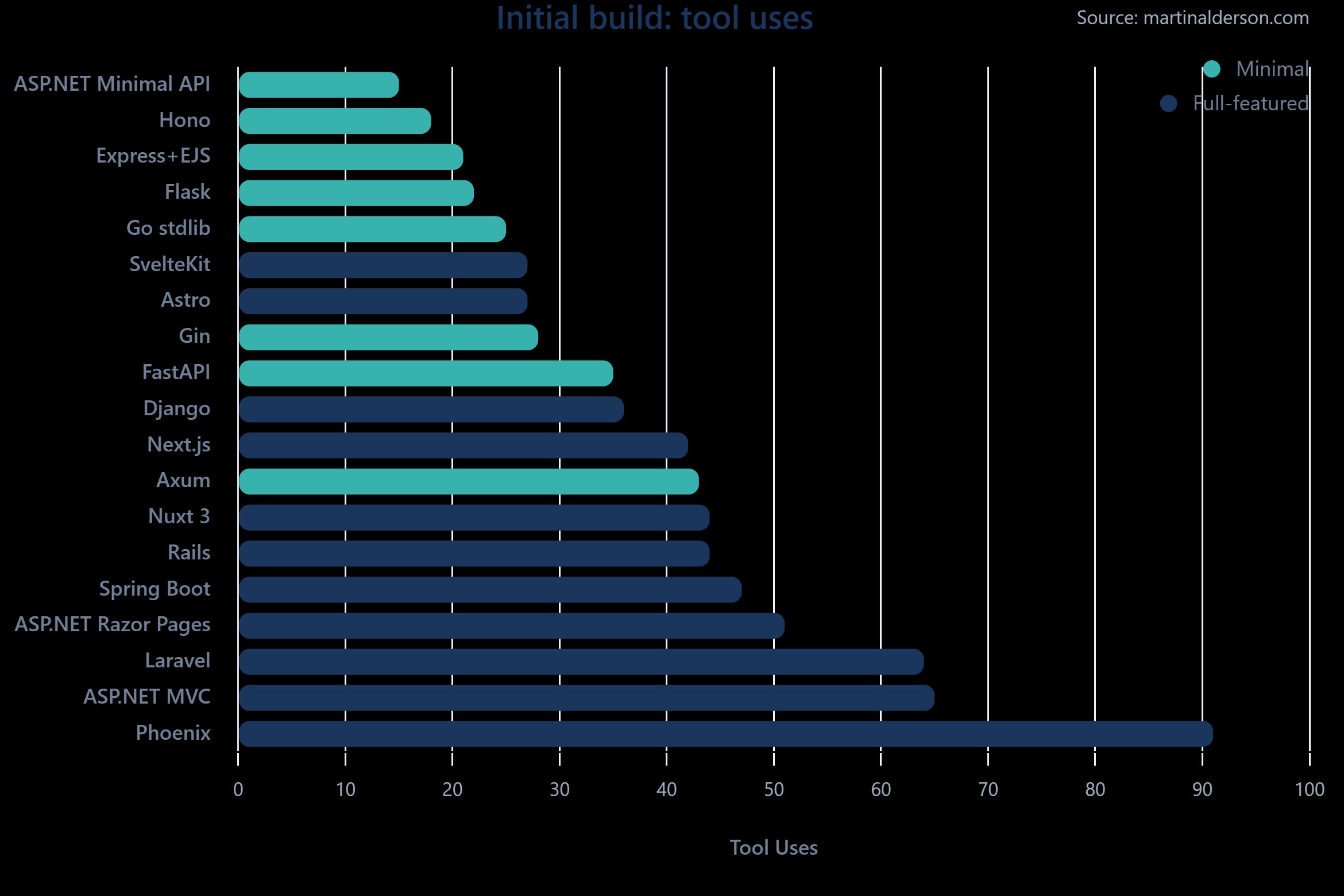

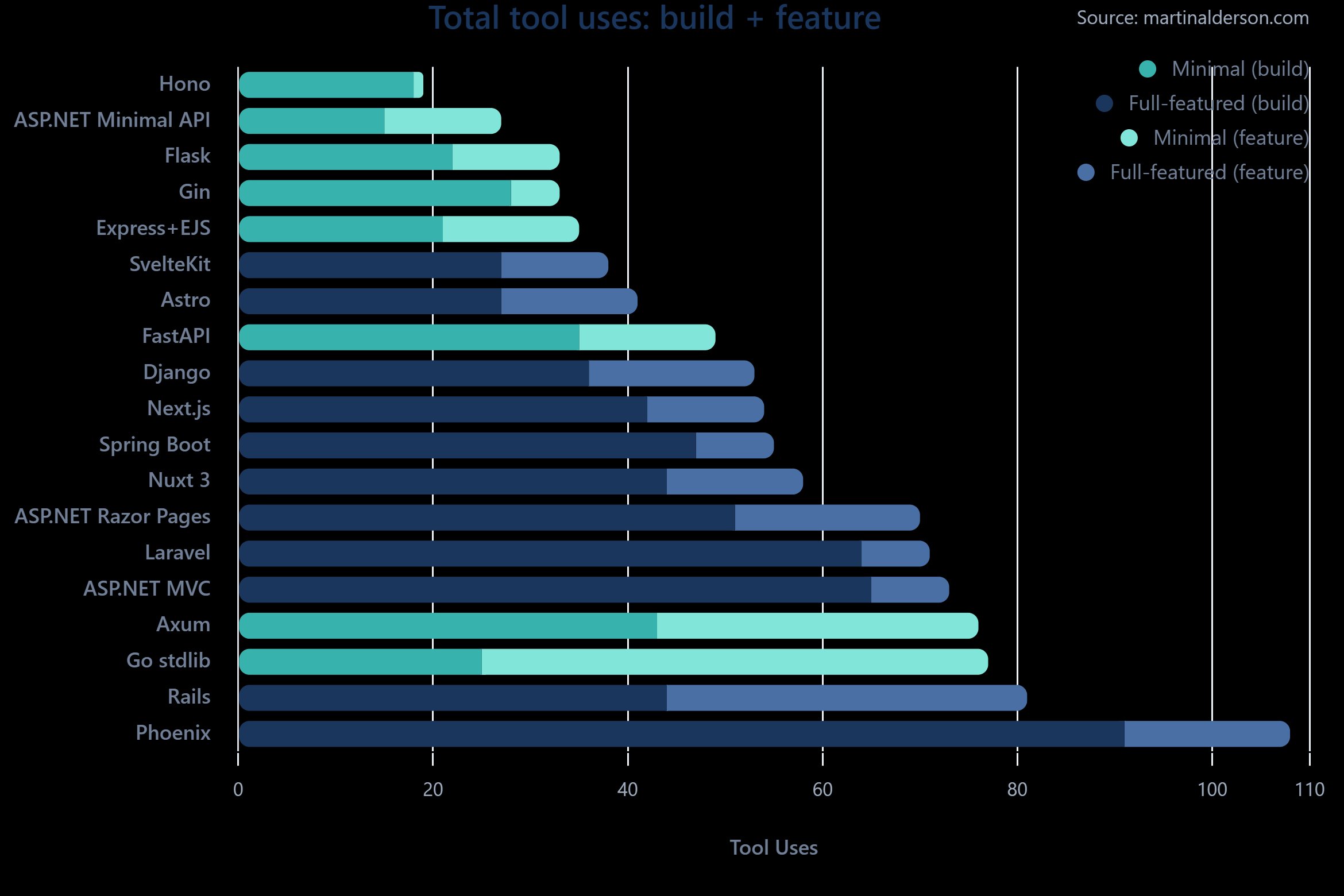

Every framework produced a working blog application, a sign of how far agent capabilities have advanced. Token consumption, however, varied widely:

- Minimal frameworks clustered between 26-29K tokens

- Full-featured frameworks ranged from 28K (SvelteKit) to 74K (Phoenix)

- ASP.NET Minimal API proved most efficient at 26K tokens

- Phoenix required about 2.9x as many tokens as ASP.NET Minimal API
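The relative gap can be checked with a few lines of arithmetic. The sketch below uses only the rounded figures quoted in the list (26K, 28K, 74K); the helper name `ratio_to_baseline` is an assumption for illustration. With rounded inputs the Phoenix ratio comes out slightly below the quoted 2.9x, which presumably reflects unrounded token counts in the original data.

```python
# Rounded token counts as quoted in this article (not the raw study data).
tokens = {
    "ASP.NET Minimal API": 26_000,
    "SvelteKit": 28_000,
    "Phoenix": 74_000,
}


def ratio_to_baseline(tokens: dict[str, int], baseline: str) -> dict[str, float]:
    """Express each framework's token count as a multiple of the baseline's."""
    base = tokens[baseline]
    return {name: round(count / base, 2) for name, count in tokens.items()}


ratios = ratio_to_baseline(tokens, "ASP.NET Minimal API")
for name, ratio in ratios.items():
    print(f"{name}: {ratio}x")
```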

Tool call patterns showed similar efficiency gaps, with less widely used frameworks requiring more effort. Phoenix's high token count appeared to stem from the agent reading large amounts of scaffolded code, which may indicate that the model is less familiar with the framework from its training data.

Feature Extension Findings

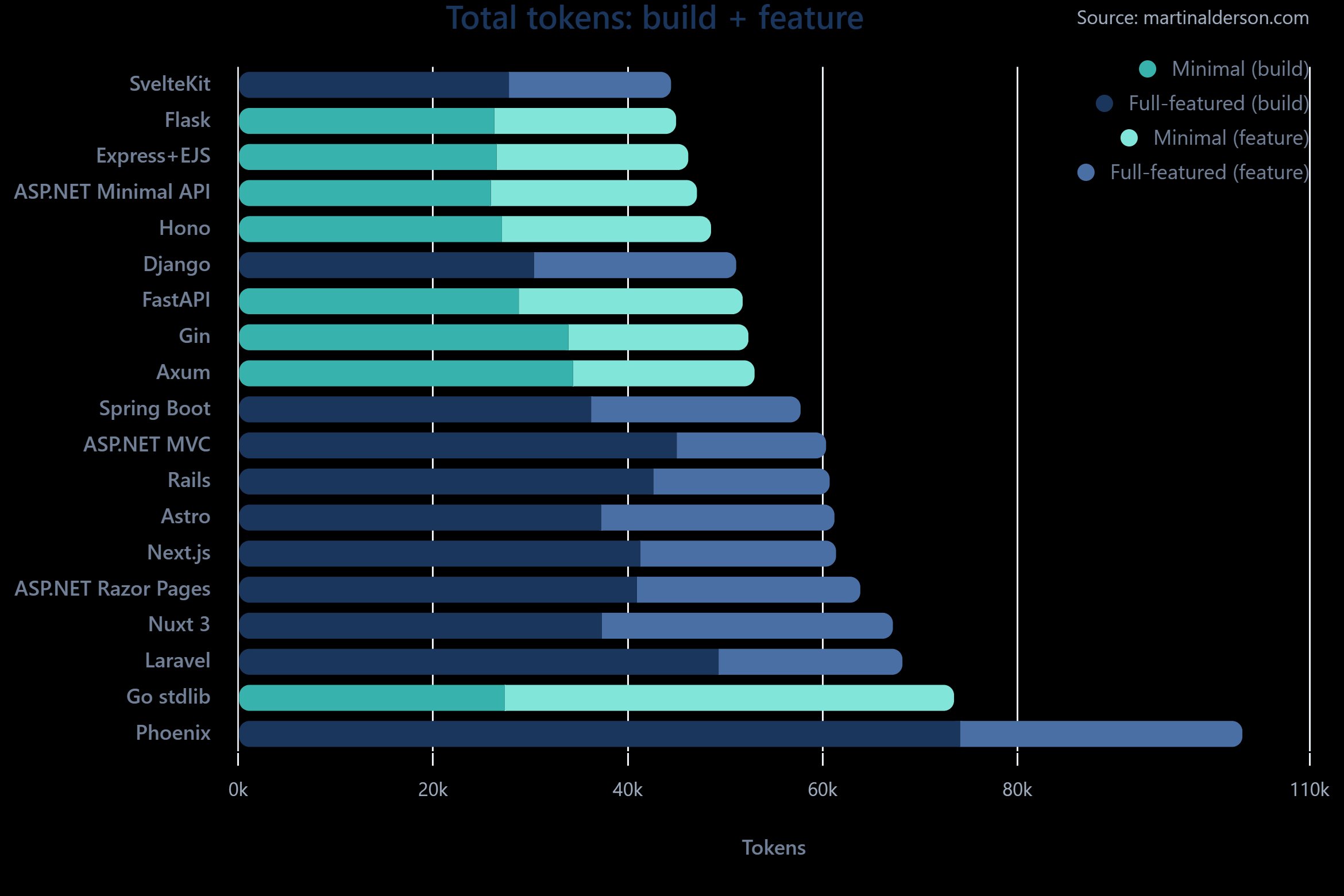

The follow-up task (adding categories) yielded surprising results:

- 18/19 implementations succeeded (Spring Boot failed due to migration issues)

- Token usage converged to 15-30K across all frameworks

- Go stdlib struggled with datetime parsing during database upgrades

- Full-featured frameworks didn't demonstrate expected efficiency advantages

This suggests framework overhead primarily impacts initial setup rather than feature extensions. The convergence indicates that modifying existing code requires similar effort regardless of framework type.

Practical Implications

Key takeaways for developers leveraging AI agents:

- Minimal frameworks offer significant token savings for initial implementation

- ASP.NET Minimal API pairs the lowest token count with static typing, speed, and low memory usage

- SvelteKit and Django stand out among full-featured options

- The 2.9x token gap matters most when agents build new projects frequently, since it applies to initial implementation rather than extension

While agents handled the initial build in every tested framework and the extension in all but one, token efficiency becomes crucial when scaling agent-assisted development. The research suggests that framework choice significantly affects AI operational costs without necessarily making the resulting code cheaper to extend.
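As a rough illustration of how the gap scales, the sketch below projects spend for repeated initial builds. The study reports tokens, not dollars, so the price per million tokens and the number of runs are purely hypothetical placeholders, and a single flat price ignores any difference between input, output, and cached tokens.

```python
def projected_cost(tokens_per_run: int, runs: int, usd_per_million_tokens: float) -> float:
    """Estimate total spend for repeated runs at a flat per-token price."""
    return tokens_per_run * runs * usd_per_million_tokens / 1_000_000


# Hypothetical inputs: neither value comes from the study.
PRICE_USD_PER_MILLION = 10.0
RUNS = 1_000

# Token counts are the rounded initial-implementation figures quoted above.
low = projected_cost(26_000, RUNS, PRICE_USD_PER_MILLION)   # ASP.NET Minimal API
high = projected_cost(74_000, RUNS, PRICE_USD_PER_MILLION)  # Phoenix

print(f"low: ${low:.2f}, high: ${high:.2f}, difference: ${high - low:.2f}")
```

Swapping in a real price and an expected run count gives a quick sense of whether the gap is material for a given team.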

For detailed methodology and raw data, see the original study.
