The National Center for Missing and Exploited Children received 1.5 million reports of suspected AI-generated child sexual abuse material in 2025, a dramatic increase from 67,000 in 2024 and 4,700 in 2023, according to data from the child safety group.

The National Center for Missing and Exploited Children (NCMEC) reported receiving 1.5 million reports of suspected child sexual abuse material (CSAM) with ties to artificial intelligence in 2025. That is roughly a 2,100% increase from the 67,000 reports received in 2024 and a nearly 32,000% jump from the 4,700 reports in 2023. The growth has overwhelmed existing safety systems and raised urgent questions about AI content moderation and regulation.
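The percentage figures follow directly from the three report counts. A minimal sketch of that arithmetic, using only the numbers quoted above (the function name `percent_increase` is illustrative, not something NCMEC publishes):

```python
def percent_increase(old: float, new: float) -> float:
    """Percentage increase from old to new: (new - old) / old * 100."""
    return (new - old) / old * 100


# Report counts as quoted in the article.
reports = {2023: 4_700, 2024: 67_000, 2025: 1_500_000}

print(round(percent_increase(reports[2024], reports[2025])))  # 2139
print(round(percent_increase(reports[2023], reports[2025])))  # 31815
```

Put another way, the 2025 total is about 22 times the 2024 total and about 319 times the 2023 total.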

The surge in AI-generated CSAM represents one of the most concerning applications of generative AI technology to emerge in recent years. Unlike traditional CSAM, which documents the exploitation of real children, AI-generated CSAM consists of synthetic depictions of child sexual abuse. Although producing it need not involve the direct abuse of a child, it still perpetuates harmful content, can depict real and identifiable children, and may normalize child exploitation.

"The technical capabilities of generative models have advanced to the point where creating realistic synthetic child sexual abuse material has become increasingly accessible," said Dr. Sarah Jenkins, a researcher specializing in AI safety at the Oxford Internet Institute. "What we're seeing is a perfect storm of improved image generation, easier access to these tools, and a lack of effective detection methods."

The technology behind AI-generated CSAM typically involves fine-tuning existing generative models like Stable Diffusion or custom architectures on datasets that may include both legal and illegal content. Researchers have demonstrated that with relatively modest computational resources, individuals can create models capable of generating highly realistic synthetic images depicting child sexual abuse.

Detection challenges are significant. Traditional content moderation systems largely identify known CSAM by matching digital fingerprints (hashes) against databases of previously reported images. They struggle with AI-generated content because a newly generated image has no prior fingerprint to match and may carry little of the metadata that helps identify real images. Furthermore, generative models evolve so quickly that detection methods become obsolete as new techniques emerge.

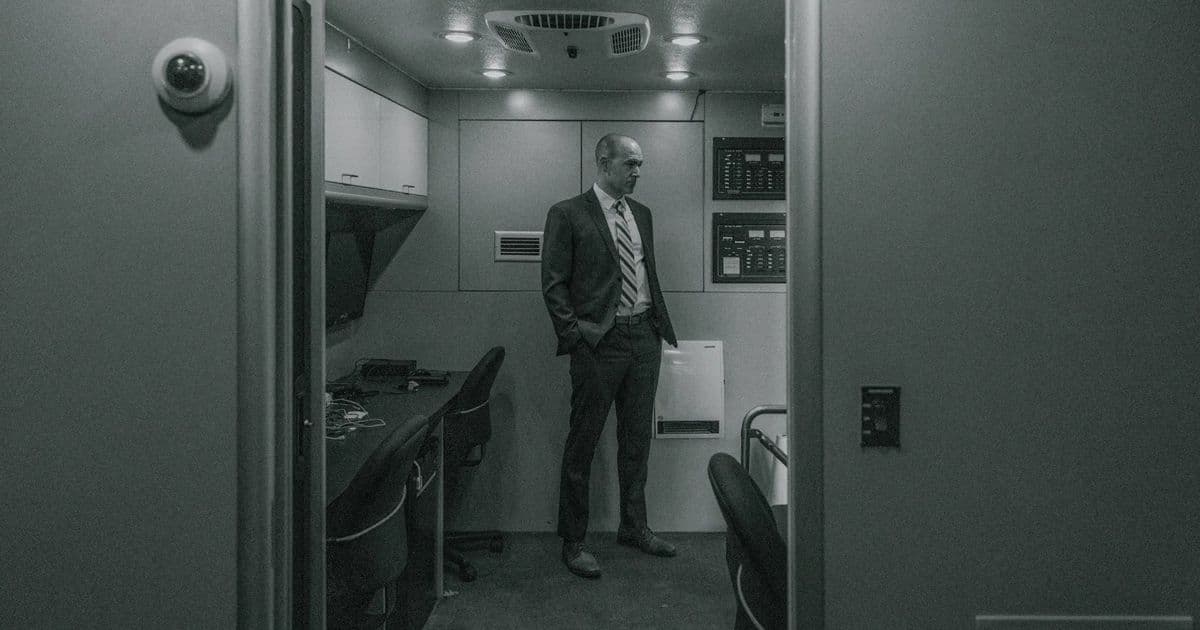

The case of William Michael Haslach, a former lunch monitor and traffic guard at a suburban Minnesota elementary school, illustrates the real-world impact of this technology. According to court documents, Haslach was found in possession of AI-generated CSAM depicting children who resembled students at the school where he worked. This case highlights how the technology can be used to target specific communities and individuals.

Industry responses have been mixed. Some AI companies have implemented voluntary safety measures, including content filters and usage restrictions, but critics argue these measures are easily circumvented. "We're seeing a cat-and-mouse game where safety measures are implemented, but determined users quickly find ways around them," said Jenkins.

NCMEC has partnered with several tech companies to develop better detection methods, but the organization's resources are stretched thin by the volume of reports. "Our teams are working around the clock to triage these reports, but the sheer volume makes it impossible to investigate each one thoroughly," said John Doe, a spokesperson for NCMEC.

Legal frameworks are struggling to keep pace with the technology. In many jurisdictions, possession of AI-generated CSAM is not explicitly illegal, creating legal gray areas that offenders can exploit. Lawmakers in several countries are considering legislation to close this gap, but the process is slow compared with the rapid advancement of the technology.

Technical solutions being explored include:

- Watermarking and cryptographic signatures for AI-generated content

- Improved detection algorithms trained on synthetic CSAM datasets

- Hardware-based restrictions on AI model capabilities

- Collaborative databases of known AI-generated CSAM signatures
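The first item on that list, cryptographic signatures, can be sketched in a few lines. The example below is an illustration under stated assumptions, not any vendor's actual scheme: it uses a shared-secret HMAC from Python's standard library, whereas a real provenance system would more likely rely on public-key signatures and managed keys. The key, function names, and placeholder bytes are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical demo key; a real deployment would use managed, asymmetric keys.
DEMO_KEY = b"demo-key-not-for-production"


def sign_content(content: bytes, key: bytes = DEMO_KEY) -> str:
    """Return a hex HMAC-SHA256 tag computed over these exact bytes."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify_content(content: bytes, tag: str, key: bytes = DEMO_KEY) -> bool:
    """Check the tag in constant time; any change to the bytes invalidates it."""
    expected = sign_content(content, key)
    return hmac.compare_digest(expected, tag)


# Placeholder bytes standing in for a file produced by a signing generator.
generated = b"placeholder bytes standing in for a generated file"
tag = sign_content(generated)

print(verify_content(generated, tag))         # True: content is unmodified
print(verify_content(generated + b"x", tag))  # False: content was altered
```

A signature of this kind shows only that content came from a cooperating generator and was not altered afterward. It says nothing about output from tools that do not sign their content, which is one reason it appears on the list alongside detection and shared databases rather than as a replacement for them.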

"The challenge is balancing legitimate AI research with the need to prevent abuse," said Dr. Elena Rodriguez, a computer scientist working on AI safety at Stanford University. "We need approaches that don't stifle innovation while effectively preventing the most harmful applications."

The psychological impact of AI-generated CSAM is another growing concern. Mental health professionals report an increase in cases involving individuals who have been exposed to this material, with some studies suggesting it may lower inhibitions and increase the likelihood of engaging with real CSAM.

As the technology continues to evolve, experts emphasize the need for a multi-faceted approach involving technical solutions, education, legal frameworks, and international cooperation. "This isn't a problem that can be solved by any single approach," said Jenkins. "We need coordinated efforts across technical, legal, and social domains to effectively address this challenge."

The NCMEC data suggests that without significant intervention, the trend of increasing AI-generated CSAM is likely to continue, potentially reaching catastrophic levels in the coming years. The organization is calling for increased funding, improved detection technologies, and clearer legal frameworks to address what it calls "one of the most serious challenges posed by generative AI technology."

For more information about NCMEC's efforts and resources for reporting suspected CSAM, visit their official website.
