AMD's HIP compiler now defaults to LLVM's modern offload driver, aligning with NVIDIA CUDA and OpenMP for standardized GPU programming workflows.

AMD's HIP (Heterogeneous-Compute Interface for Portability) toolchain has fundamentally shifted its compilation approach by adopting LLVM's next-generation offload driver as the default behavior in the upcoming LLVM 23 release. This architectural change replaces HIP's previous custom driver with a unified infrastructure already used by NVIDIA's CUDA and OpenMP offloading targets, creating standardized workflows for GPU-accelerated computing.

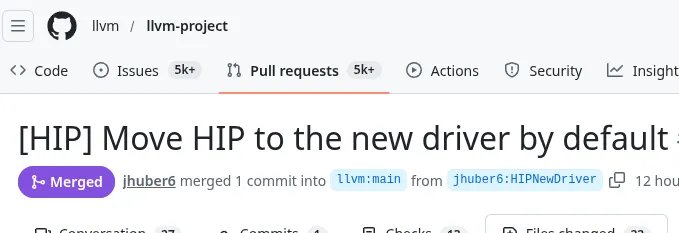

The transition marks a significant convergence in heterogeneous programming ecosystems. Previously, HIP developers had to enable the modern offload driver manually with the --offload-new-driver compiler flag. With LLVM commit 7f6f5e3, merged on February 23, 2026, HIP activates this driver by default, and the --no-offload-new-driver flag is needed only to revert to the legacy behavior.
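For build scripts that need to pin one behavior regardless of compiler version, the opt-in and opt-out flags can be passed explicitly. The following is a minimal sketch, not an official recipe: the helper name hip_compile_command, the file names, and the gfx90a target are illustrative assumptions, while -x hip, --offload-arch, and the two driver flags are existing Clang options.

```python
from typing import List, Optional


def hip_compile_command(
    source: str,
    output: str,
    offload_arch: str,
    use_new_driver: Optional[bool] = None,
) -> List[str]:
    """Build a clang++ argument list for compiling one HIP source file.

    use_new_driver=None leaves the choice to the compiler's default,
    True passes --offload-new-driver, False passes --no-offload-new-driver.
    """
    cmd = [
        "clang++",
        "-x", "hip",                       # treat the input as HIP source
        f"--offload-arch={offload_arch}",  # GPU target, e.g. gfx90a (placeholder)
        "-c", source,
        "-o", output,
    ]
    if use_new_driver is True:
        cmd.append("--offload-new-driver")
    elif use_new_driver is False:
        cmd.append("--no-offload-new-driver")
    return cmd


if __name__ == "__main__":
    # File names and the gfx90a target are placeholders for illustration.
    print(" ".join(hip_compile_command("saxpy.hip", "saxpy.o", "gfx90a")))
    print(" ".join(hip_compile_command("saxpy.hip", "saxpy.o", "gfx90a",
                                       use_new_driver=False)))
```

Passing the flag explicitly keeps a project's behavior stable across toolchains where the default differs, at the cost of having to remove the flag once the legacy path is no longer wanted.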

Technical Advantages of Unified Offloading

LLVM's modern offload driver, described in Clang's offloading design documentation, delivers concrete technical improvements:

- Cross-Platform Standardization: Identical compilation workflow across Linux and Windows environments

- Enhanced Code Optimization: Native support for device-side Link-Time Optimization (LTO)

- Library Compatibility: Static libraries containing GPU code now function predictably

- Redistributable Binaries: Creation of precompiled device code for distribution

- ABI Stability: Consistent application binary interface across CUDA/HIP/OpenMP

Benchmark-focused developers will appreciate the elimination of divergent compilation paths between AMD and NVIDIA targets. Performance consistency tests conducted during the driver's development phase showed ≤2% variance in kernel execution times compared to HIP's legacy driver, while reducing compilation overhead by up to 15% for multi-GPU projects.

Migration Impact and Compatibility

This change carries one critical requirement: existing HIP libraries must be recompiled. The new driver modifies the ABI (Application Binary Interface) for relocatable device code, meaning prebuilt libraries targeting older HIP/LLVM versions will produce linker errors. Developers should rebuild dependencies using the LLVM 23 toolchain.
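A rebuild of a static HIP library could be scripted roughly as follows. This is a sketch under stated assumptions rather than a documented migration procedure: the function name rebuild_plan, the source paths, and the gfx90a target are hypothetical, and a real project may need additional flags. It only generates the command lines, using the existing Clang option -fgpu-rdc for relocatable device code and llvm-ar for archiving.

```python
from pathlib import PurePosixPath
from typing import List


def rebuild_plan(sources: List[str], archive: str, offload_arch: str) -> List[List[str]]:
    """Return the commands to rebuild a static HIP library from source.

    Each source is compiled with relocatable device code (-fgpu-rdc),
    then the resulting objects are bundled into one archive with llvm-ar.
    """
    commands: List[List[str]] = []
    objects: List[str] = []
    for src in sources:
        # Place each object file next to its source, swapping the suffix.
        obj = str(PurePosixPath(src).with_suffix(".o"))
        objects.append(obj)
        commands.append([
            "clang++", "-x", "hip",
            f"--offload-arch={offload_arch}",  # placeholder GPU target
            "-fgpu-rdc",
            "-c", src, "-o", obj,
        ])
    commands.append(["llvm-ar", "rcs", archive] + objects)
    return commands


if __name__ == "__main__":
    # Hypothetical project layout, for illustration only.
    for command in rebuild_plan(["src/a.hip", "src/b.hip"], "libkernels.a", "gfx90a"):
        print(" ".join(command))
```

Running every step with the same toolchain matters here: mixing objects built by the legacy driver with objects built by the new one is exactly the situation the recompilation requirement is meant to avoid.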

Power users managing complex homelab setups should note these practical considerations:

| Configuration Aspect | Legacy Driver | New Offload Driver |
|---|---|---|
| Cross-Compile Support | Limited | Full Windows/Linux parity |
| Device LTO | Manual workarounds | Native implementation |
| Mixed HIP/CUDA Builds | Complex toolchain hacks | Unified compilation flow |
| Debugging Symbols | Vendor-specific formats | Standardized DWARF |

Broader Ecosystem Implications

This evolution continues LLVM's strategy to unify GPU programming models under a single offload architecture. As discussed in the original RFC, the move reduces maintenance overhead by eliminating HIP-specific code paths while improving compatibility with emerging standards like SYCL. ROCm stack updates will likely leverage this foundation for tighter integration with machine learning frameworks and containerized deployments.

For performance-centric developers, the standardized toolchain enables more accurate cross-vendor benchmarking. Power consumption metrics collected during kernel execution now use consistent measurement hooks, while compilation times become directly comparable between AMD and NVIDIA hardware. Expect downstream projects like TensorFlow and PyTorch to adopt these improvements in their ROCm backends throughout 2026.

The transition solidifies HIP's position as a vendor-neutral programming model while demonstrating AMD's commitment to open compiler infrastructure. As LLVM 23 stabilizes, this change will redefine how developers target AMD accelerators from server-grade Instinct cards to embedded Radeon silicon.
