A major VoD provider replaced manual QC with an orchestrated pipeline built around Elecard’s Boro VoD, achieving full‑file coverage, lower defect risk, and freed engineering capacity. The case study examines the problem, the integration approach, and the trade‑offs inherent in scaling automated media validation.

Automating VoD Quality Control: Lessons from the Elecard Boro Deployment

The bottleneck

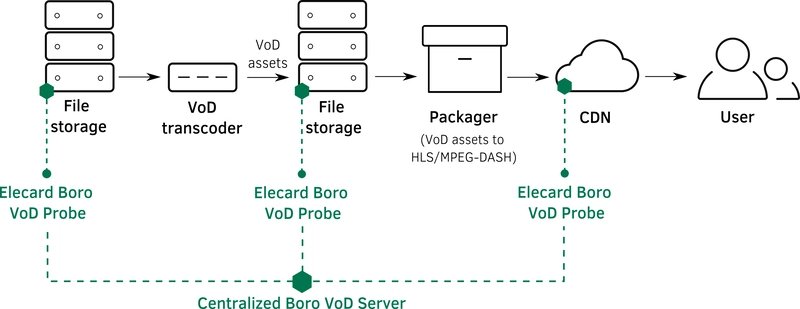

A large video‑on‑demand service was hitting a wall. Their ingest pipeline looked like this:

- Input storage – masters from partners landed on a shared bucket.

- Transcoder – a watch‑folder process produced several bitrate profiles.

- Packager – the profiles were wrapped for CDN delivery.

The steps themselves were sound, but the quality‑control (QC) stage was manual. Engineers opened each file, ran a handful of checks, and signed off. As the catalog grew from a few hundred to tens of thousands of assets per month, the manual loop became impossible to sustain. Missed defects such as black frames, frozen sections, or audio gaps began to surface in production, threatening brand reputation.

Why it mattered

- Operational cost – repetitive checks consumed dozens of engineer‑hours per week.

- Risk exposure – a single defective file could reach millions of viewers before being noticed.

- Scalability ceiling – the watch‑folder model could not keep up with the projected 5× volume increase.

The automated answer

The client set a clear objective: end‑to‑end automation of the preparation and verification stages. The chosen stack consisted of three components:

- An orchestrator (custom workflow engine) to coordinate tasks.

- A high‑throughput transcoder capable of generating multiple output profiles.

- Elecard Boro VoD – a server‑client system that runs configurable QC templates on media files.

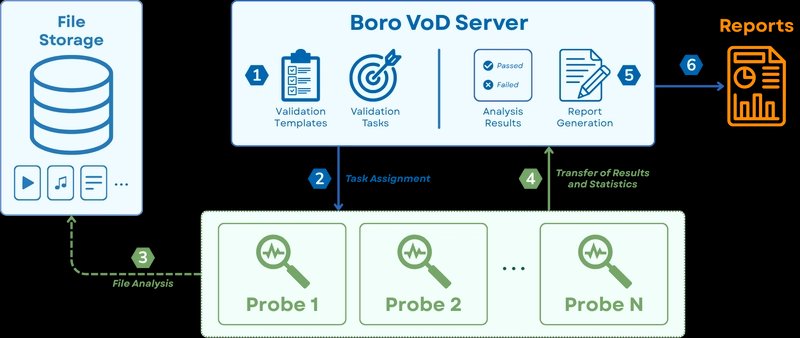

How Boro VoD works

Boro follows a client‑server model. A central server exposes a REST API and a Web UI. Lightweight probes run on the same network as the storage, pull files, and execute two families of tests:

| Category | Example checks |
|---|---|
| Compliance | Container = MP4, video codec = AVC, audio codec = AAC |
| Defect detection | Frozen frames, black screens, silence periods, duration mismatches |

Templates group these tests into reusable bundles. An engineer creates a template once, then any file can be validated by invoking the template via the API.
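To make the idea of a reusable template concrete, here is a minimal sketch of a compliance bundle built from the example checks in the table. The `ComplianceTemplate` class, the `check_compliance` function, and the `media_info` field names are all hypothetical and chosen for illustration; they do not reflect Boro's actual template format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComplianceTemplate:
    """Hypothetical stand-in for a QC template; not Boro's actual format."""
    name: str
    container: str
    video_codec: str
    audio_codec: str


def check_compliance(template: ComplianceTemplate, media_info: dict) -> list[str]:
    """Return human-readable violations; an empty list means the file complies."""
    expected = {
        "container": template.container,
        "video_codec": template.video_codec,
        "audio_codec": template.audio_codec,
    }
    violations = []
    for field, want in expected.items():
        got = media_info.get(field)
        if got != want:
            violations.append(f"{field}: expected {want}, got {got}")
    return violations


# One template, defined once and reused for every file with the same delivery spec.
MP4_AVC_AAC = ComplianceTemplate("mp4-avc-aac", "MP4", "AVC", "AAC")
```

Because the template is an immutable value with a name, the orchestrator only needs to pass that name (or an ID) around, which is what makes it usable as a contract between systems.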

Integration flow

The first phase focused on post‑transcode validation. The orchestrator now performs the following steps for each master asset:

- Detect a new master in storage and dispatch it to the transcoder.

- Wait for the transcoder to emit the set of profiles.

- Call Boro’s `/tasks` endpoint with the profile list and the appropriate template ID.

- Poll the task status (`/tasks/{id}`) to obtain a progress percentage and an interim error summary.

- Retrieve the final report (PDF/JSON) once the task reaches a terminal state.

- Branch based on the status:

  - Passed → forward to the packager.

  - Failed → move to a quarantine bucket and log the report for engineer review.

All steps are driven by the orchestrator’s state machine, so the pipeline runs 24/7 without human interaction.
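The submit, poll, and branch steps above can be sketched as follows. This is an illustration under stated assumptions rather than the client's actual orchestrator: the `QCClient` interface is a hypothetical wrapper around the `/tasks` and `/tasks/{id}` endpoints, and the state names `passed` and `failed`, the `state` field, and the polling defaults are all assumed.

```python
import time
from typing import Callable, Protocol


class QCClient(Protocol):
    """Hypothetical wrapper around the QC service's REST API."""

    def create_task(self, files: list[str], template_id: str) -> str: ...
    def get_status(self, task_id: str) -> dict: ...
    def get_report(self, task_id: str) -> dict: ...


TERMINAL_STATES = {"passed", "failed"}  # assumed state names


def validate_profiles(
    client: QCClient,
    profiles: list[str],
    template_id: str,
    poll_interval: float = 5.0,
    timeout: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[str, dict]:
    """Submit profiles for QC, poll until a terminal state, return (state, report)."""
    task_id = client.create_task(profiles, template_id)
    deadline = time.monotonic() + timeout
    while True:
        status = client.get_status(task_id)
        if status["state"] in TERMINAL_STATES:
            return status["state"], client.get_report(task_id)
        if time.monotonic() >= deadline:
            raise TimeoutError(f"QC task {task_id} did not finish in {timeout}s")
        sleep(poll_interval)


def route(state: str) -> str:
    """Map the QC outcome to the next pipeline destination."""
    return "packager" if state == "passed" else "quarantine"
```

Passing the client and the `sleep` function in as parameters keeps the loop testable without a live QC server, and the timeout ensures a stuck task surfaces as an error instead of blocking the state machine indefinitely.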

Trade‑offs and practical considerations

| Aspect | Benefit | Cost / Limitation |
|---|---|---|
| Full coverage | Every generated profile is inspected, eliminating sampling bias. | Increased compute load on probe nodes; probes must be sized to match transcoder throughput. |
| Deterministic rules | Templates enforce consistent standards across the catalog. | Rigid templates can reject edge‑case content that would be acceptable after manual review; templates need periodic tuning. |
| API‑driven orchestration | Decouples QC from transcoding; each component can be replaced independently. | Network round trips add a few seconds to end‑to‑end processing time; failure handling must be robust (retries, idempotent task creation). |
| Report centralization | Engineers receive a single JSON/CSV file with exact timestamps and error codes. | Reports for high‑resolution assets can grow quickly; storage retention policies must be defined. |
| Scalability | QC throughput scales linearly as probe instances are added. | Coordination overhead grows; the orchestrator must implement back‑pressure to avoid overloading probes. |
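The "retries, idempotent task creation" requirement in the table can be illustrated with a small sketch. Everything here is an assumption made for illustration: the article does not say whether the QC API accepts an idempotency key, so `submit` is a hypothetical callable that sends the key along with the request, and the backoff parameters are arbitrary.

```python
import hashlib
import time
from typing import Callable


def idempotency_key(profiles: list[str], template_id: str) -> str:
    """Derive a stable key so a retried request maps to the same logical task."""
    payload = "\n".join(sorted(profiles)) + "\n" + template_id
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def create_task_with_retry(
    submit: Callable[[str], str],
    key: str,
    attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call submit(key) with exponential backoff; re-raise after the last attempt."""
    for attempt in range(attempts):
        try:
            return submit(key)
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")
```

The key depends only on the set of profiles and the template, so a retry after a lost response can be recognized by the receiving side as a duplicate instead of launching a second QC run on the same files.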

In practice, the team mitigated the compute cost by deploying probes on spot instances in a cloud VPC and configuring auto‑scaling based on a simple queue length metric. Template maintenance became a quarterly task: a small review of false‑positive rates followed by rule adjustments.
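A queue-length scaling rule of the kind described here might look like the sketch below. The function name, the target of ten queued files per probe, and the minimum and maximum bounds are illustrative assumptions, not values reported by the team.

```python
import math


def desired_probe_count(
    queue_length: int,
    files_per_probe: int = 10,
    min_probes: int = 1,
    max_probes: int = 50,
) -> int:
    """Target probe count so each probe has at most files_per_probe queued files."""
    if files_per_probe <= 0:
        raise ValueError("files_per_probe must be positive")
    needed = math.ceil(queue_length / files_per_probe)
    # Clamp to the allowed fleet size so an empty queue keeps one warm probe
    # and a sudden spike cannot request an unbounded number of instances.
    return max(min_probes, min(max_probes, needed))
```

With these defaults, a queue of 95 files yields a target of 10 probes, an empty queue keeps 1, and a queue of 10,000 is capped at 50.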

Results

- 100 % QC coverage – every profile was examined, not just a random subset.

- Engineer time saved – manual checks dropped from ~30 h/week to under 2 h/week (mostly for exception handling).

- Defect leakage – incidents of black screens or audio gaps fell from an average of 4 per month to zero over a six‑month observation window.

- Scalable headroom – the pipeline comfortably handled a 3× increase in ingest volume during a promotional campaign.

Takeaways for other pipelines

- Treat QC as a first‑class service – expose it via an API and let the orchestrator drive it, just like transcoding or packaging.

- Invest in reusable templates – a well‑named template becomes a contract that downstream systems can rely on.

- Plan for back‑pressure – a busy transcoder should not flood the QC service; a queue or token bucket helps keep the system stable.

- Monitor both success and failure metrics – track false‑positive rates to avoid unnecessary re‑work.

- Keep the human in the loop for edge cases – automation reduces toil, but a small review step for failed assets preserves quality.
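As an illustration of the back‑pressure takeaway, a token bucket that limits how fast the orchestrator submits QC tasks could be sketched like this. The class and its parameters are hypothetical; the article does not describe which mechanism the team actually used.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow at most `rate` submissions per second, with bursts up to `capacity`."""

    def __init__(
        self,
        rate: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.updated = clock()

    def try_acquire(self) -> bool:
        """Take one token if available; return False so the caller can wait or requeue."""
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

When `try_acquire()` returns `False`, the orchestrator would leave the profile in its queue and try again later, so a burst of transcoder output is absorbed by the queue instead of being pushed onto the probes.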

If your organization faces similar scaling pressures, the Elecard Boro VoD stack offers a pragmatic path to replace manual video checks with a reliable, API‑driven workflow.

Learn more about Boro VoD on the official product page.
