Tangled's new vouching feature introduces a web of trust to combat LLM-generated spam submissions that create maintenance burdens for open source projects. The system allows contributors to vouch for or denounce users, creating visual indicators that help maintainers make informed decisions about interactions.

AI-assisted development has produced a new kind of problem: submissions that look correct at first glance but contain subtle errors or problematic patterns. These "uncanny valley" submissions, generated by increasingly capable language models, burden project maintainers, who must carefully review every contribution. Tangled, the decentralized network for collaborative development, has responded with a native vouching system that lets the community build a web of trust around contributors.

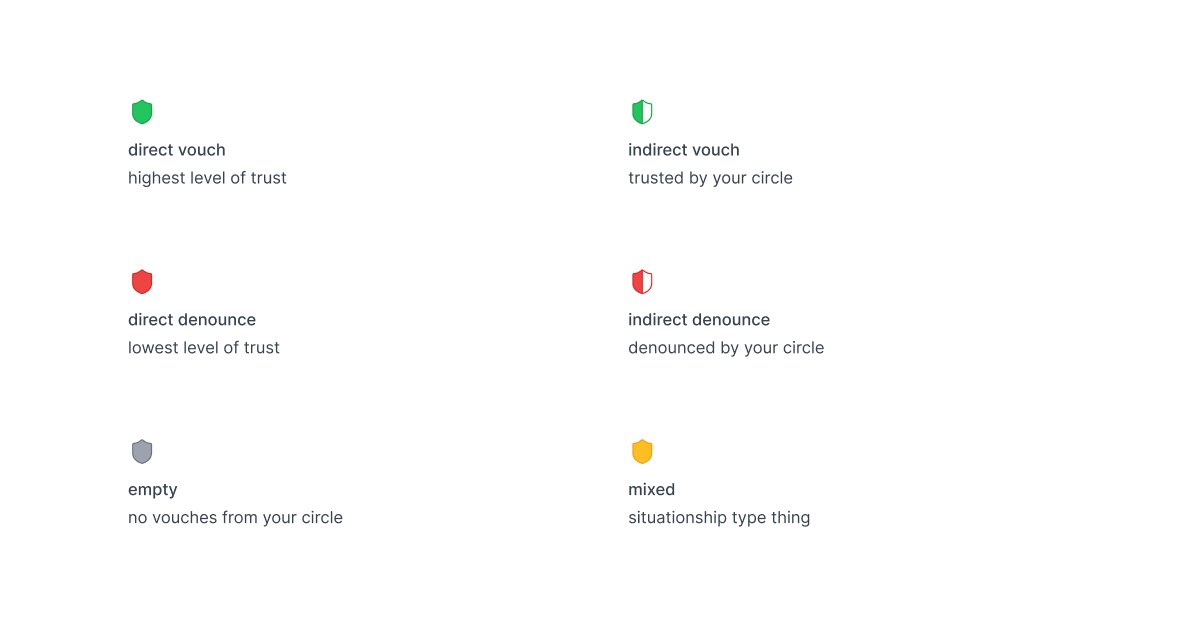

The vouching mechanism lets users either vouch for or denounce others they interact with. The result is a visual indicator (a green shield for vouched users, a red warning for denounced ones) that appears at key interaction points. The concept addresses a real need in open source ecosystems, where the barrier to submitting code has dropped dramatically while quality-control mechanisms have not kept pace.

At its core, the vouching system combines public records with selective visibility. When a user vouches for or denounces someone, they create a public record on their Personal Data Server (PDS), including an optional text-based reason for their decision. The Tangled application then aggregates this data across the network, displaying "hats" over user profiles in contexts like issues, pull requests, and their associated comments. Importantly, a hat appears only if you have directly vouched for or denounced the user, or if someone you've vouched for has done so. Trust therefore scales through connections rather than being imposed from above.
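The visibility rule described above can be sketched in a few lines of Python. This is a minimal illustration, not Tangled's implementation: the `VouchRecord` type, its field names, and the `visible_signals` function are all hypothetical, and the real record schema may look quite different. The sketch returns every signal a viewer would be shown and deliberately does not decide what to display when trusted users disagree.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class VouchRecord:
    """Hypothetical shape of one public vouch/denounce record."""
    author: str            # user who created the record
    subject: str           # user the record is about
    kind: str              # "vouch" or "denounce"
    reason: Optional[str] = None  # optional free-text reason


def visible_signals(viewer: str, subject: str,
                    records: List[VouchRecord]) -> List[VouchRecord]:
    """Return the records about `subject` that `viewer` would see.

    A record is visible if the viewer wrote it, or if its author is
    someone the viewer has vouched for. Nothing else propagates.
    """
    # Everyone the viewer has directly vouched for.
    trusted = {r.subject for r in records
               if r.author == viewer and r.kind == "vouch"}
    return [r for r in records
            if r.subject == subject
            and (r.author == viewer or r.author in trusted)]


records = [
    VouchRecord("alice", "bob", "vouch"),
    VouchRecord("bob", "mallory", "denounce", reason="spam PRs"),
    VouchRecord("carol", "mallory", "vouch"),  # carol is unknown to alice
]
```

With this data, `visible_signals("alice", "mallory", records)` yields only bob's denouncement, because alice has vouched for bob but has no connection to carol. A viewer with no vouches of their own sees nothing at all.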

This design reflects thoughtful consideration of potential pitfalls. The system includes several safeguards that keep it from becoming a tool for harassment or undue influence. First, attenuation means each user sees only the vouch decisions made by themselves and their circle of trusted connections, which limits the system's use for public shaming or widespread denunciation. Second, denounced users currently face no concrete consequences beyond a visual warning label, allowing for course correction without punitive measures. These design choices recognize that trust is nuanced and context-dependent, and that systems intended to foster trust must themselves be designed with care.

Looking toward the future, the Tangled team has outlined several enhancements that could further refine the vouching system. The concept of "vouch decay" acknowledges that relationships and contributions evolve over time—maintainers and contributors move on from projects, and past performance may not indicate future reliability. By having vouches expire and require renewal, the system would remain current and responsive to changing dynamics. Additionally, the planned "evidence trails" feature would link vouches to specific contributions, such as automatically associating a vouch with a pull request that prompted it, creating a more transparent and verifiable record of trust relationships.
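Since both features are only planned, the following is a speculative sketch of how expiry and an evidence link might fit together. The 180-day lifetime, the `Vouch` type, the `evidence` field, and the `is_active` and `renew` helpers are all assumptions made for illustration; the source gives no concrete durations or schema.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumed lifetime; the actual decay period is not specified.
ASSUMED_TTL = timedelta(days=180)


@dataclass(frozen=True)
class Vouch:
    author: str
    subject: str
    created_at: datetime
    # Hypothetical "evidence trail": a reference to the contribution
    # (for example a pull request) that prompted the vouch.
    evidence: Optional[str] = None


def is_active(vouch: Vouch, now: datetime,
              ttl: timedelta = ASSUMED_TTL) -> bool:
    """A vouch counts only while it is younger than the TTL."""
    return now - vouch.created_at < ttl


def renew(vouch: Vouch, now: datetime) -> Vouch:
    """Renewal modeled as a fresh timestamp on an otherwise equal record."""
    return replace(vouch, created_at=now)


v = Vouch("alice", "bob",
          created_at=datetime(2025, 1, 1, tzinfo=timezone.utc),
          evidence="pull-request-reference-placeholder")
```

Under these assumptions, the vouch is still active two months after creation and lapses after roughly seven months unless `renew` is called, while the evidence reference carries over unchanged.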

The implications of such a system extend far beyond the immediate problem of LLM spam. In an era where AI-generated content becomes increasingly prevalent across all domains, mechanisms for establishing provenance and reliability will become essential. Tangled's vouching system represents one approach to this challenge, creating a decentralized alternative to centralized reputation systems that could be applied to various contexts beyond code contribution.

However, the vouching system is not without potential challenges and considerations. The network effect that makes the system powerful also creates the risk of echo chambers, where trust is propagated through existing connections rather than being established based on merit. There's also the question of how to handle disagreements in vouching—what happens when two trusted individuals have opposing views about a contributor? These questions highlight the complexity of designing trust systems that balance the need for reliable signals with the recognition that trust is inherently subjective and contextual.

From a technical perspective, the implementation raises interesting questions about the trade-offs between decentralization and usability. By storing vouch records on each user's PDS rather than in a centralized database, Tangled maintains its commitment to decentralization, but this approach also requires careful management of data synchronization and privacy. The aggregation of vouch data across the network must be efficient enough not to degrade the user experience while still providing timely and accurate information.
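One way to keep lookups cheap is for the aggregator to maintain an index keyed by author, updated incrementally as records are created or deleted. The sketch below is an assumption about how such an index could work, not a description of Tangled's aggregator; the `VouchIndex` class and its methods are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Optional


class VouchIndex:
    """In-memory index: author -> subject -> "vouch" | "denounce"."""

    def __init__(self) -> None:
        self._by_author: Dict[str, Dict[str, str]] = defaultdict(dict)

    def ingest(self, author: str, subject: str,
               kind: Optional[str]) -> None:
        """Apply one record event; kind=None models a deleted record."""
        if kind is None:
            self._by_author[author].pop(subject, None)
        else:
            # The latest record from an author about a subject wins.
            self._by_author[author][subject] = kind

    def signals_for(self, viewer: str, subject: str) -> Dict[str, str]:
        """Return {author: kind} for the signals this viewer may see."""
        own = self._by_author.get(viewer, {})
        out: Dict[str, str] = {}
        if subject in own:
            out[viewer] = own[subject]
        # Only users the viewer has vouched for contribute signals.
        for trusted, kind in own.items():
            if kind != "vouch":
                continue
            seen = self._by_author.get(trusted, {}).get(subject)
            if seen is not None:
                out[trusted] = seen
        return out


index = VouchIndex()
index.ingest("alice", "bob", "vouch")
index.ingest("bob", "mallory", "denounce")
```

A lookup touches only the viewer's own records and one entry per trusted user, so its cost grows with the size of the viewer's circle rather than with the total number of records on the network.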

The vouching system also reflects a broader shift in how we think about identity and reputation in digital spaces. As online interactions become increasingly automated and AI-mediated, traditional signals of credibility—such as institutional affiliation or publication history—become less relevant. In their place, we need new mechanisms that can evaluate the quality and reliability of contributions in a more granular and context-specific manner. Tangled's approach represents one attempt to address this need, creating a system where reputation is built through direct experience and the judgment of trusted peers.

As we continue to navigate the challenges and opportunities of AI-assisted development, systems like Tangled's vouching feature may become increasingly important. They offer a way to harness the collective wisdom of communities while respecting individual autonomy and privacy. By allowing trust to emerge organically from interactions rather than being imposed from above, such systems may provide a more sustainable approach to quality control in open source and collaborative environments.

The introduction of vouching on Tangled represents more than just a new feature—it's a philosophical statement about how trust should be established and maintained in decentralized networks. In a world where AI can generate convincing but potentially misleading content at scale, the ability to quickly identify and signal reliable contributors becomes essential. Tangled's web of trust offers one path forward, creating a system where community members can collectively build the infrastructure needed to navigate this new landscape.

As with any emergent technology, the true test of the vouching system will come through real-world use and iteration. The initial implementation provides a solid foundation, but the most valuable insights will likely come from how users adapt and extend the system to meet their specific needs. In this sense, the vouching feature is not just a solution to the current problem of LLM spam, but a starting point for a broader conversation about how we can build more trustworthy and sustainable digital collaboration spaces.

For those interested in exploring this system further, the official Tangled page offers more information about the platform, while the PDS documentation explains the underlying infrastructure that makes the vouching system possible. The vouching feature documentation provides additional technical details about implementation and usage.
