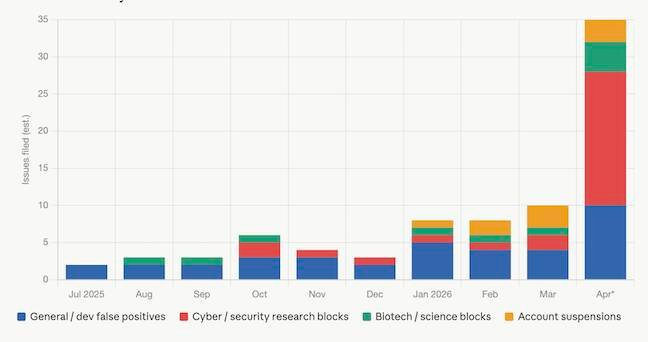

Anthropic's Claude Opus 4.7 release introduced overly aggressive safeguards that are causing excessive false positives in its Acceptable Use Policy (AUP) classifier, blocking legitimate development, security research, and scientific work. Complaints about these refusals in the Claude Code GitHub repository have surged from 2-3 per month in mid-2025 to more than 30 in April alone, leaving customers paying for a service they cannot fully use.

With Claude Opus 4.7, Anthropic set out to strengthen safeguards against potential misuse, particularly in cybersecurity. In practice, the stricter protections are causing serious problems for legitimate users, turning the assistant into an overzealous content filter that blocks harmless requests.

The stricter controls follow Anthropic's announcement of Mythos, a model the company describes as too capable at vulnerability discovery and exploitation to release publicly. "We are releasing Opus 4.7 with safeguards that automatically detect and block requests that indicate prohibited or high-risk cybersecurity uses," Anthropic stated. "What we learn from the real-world deployment of these safeguards will help us work towards our eventual goal of a broad release of Mythos-class models."

Escalating Refusal Rates

The impact of these safeguards is visible in the rising number of complaints in Anthropic's GitHub repository for Claude Code. The AUP classifier has become increasingly aggressive, producing a sharp rise in false positives that block legitimate work.

Monthly complaint counts show a clear progression:

- July through September 2025: Approximately 2-3 complaints per month

- October through November 2025: Increased to 5-7 complaints per month

- December 2025: Slight decrease (possibly due to the holiday season)

- January 2026: Rose again to approximately 8 complaints

- February and March 2026: Similar volume to January

- April 2026: Surged to over 30 reports of false positives

Specific Compliance Challenges

The surge in complaints reveals a pattern of over-enforcement across multiple domains:

Language Barriers: Issue #48442 reports persistent AUP false positives when processing Russian prompts across unrelated projects, including psychology books, web applications, infrastructure, and bots.

Scientific Research: Issue #49751 reports that routine computational structural biology tasks are being flagged as violations, a regression from previous versions.

Educational Security Content: Golden G. Richard III, director of the LSU Cyber Center and Applied Cybersecurity Lab, reported in issue #50916 that Claude refused to read cybersecurity lab materials. "I expect that for $200+ per month, basic help with editing tasks will not be rejected," Richard wrote. "If the models are going to be hamstrung to the point where cybersecurity educators and researchers can't use them, how is this positively impacting security?"

Data Processing: Issue #48723 describes constant AUP violation errors when Claude Code attempts to read raw data files, including a PDF of a Hasbro Shrek toy advertisement. The developer traced the refusal to a specific piece of PDF content stream syntax, which decodes to the text "CHARACTER OR FOR DONKEY UNDERNEATH."

API Access Limitations: Issue #49679 reports that exemptions granted for cybersecurity research use cases work in Claude Chat but fail when using the Claude Code API, so the same user is treated differently depending on the interface.
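For context on issue #48723: a PDF page's content stream mixes font and positioning commands with text-showing operators such as `Tj` (show one string) and `TJ` (show an array of string fragments with spacing adjustments between them). The raw bytes a tool reads can therefore look quite different from the rendered words. The Python sketch below is a deliberately simplified extractor, not how Claude Code or any PDF library actually parses files. It assumes an uncompressed stream, no escaped or nested parentheses, an even number of hex digits, and a single-byte encoding. The helper name `extract_text` and the sample stream are hypothetical, invented for illustration rather than copied from the issue.

```python
import re

# Simplified text-showing operators in an uncompressed PDF content stream:
#   (text) Tj            show a literal string
#   <hex> Tj             show a hex-encoded string
#   [(te) -20 (xt)] TJ   show string fragments with kerning numbers between them
# Assumptions (hypothetical sketch): no escaped or nested parentheses and a
# single-byte encoding where each byte maps directly to one character.
TEXT_OP = re.compile(
    r"\[(?P<array>[^\]]*)\]\s*TJ"
    r"|\((?P<literal>[^()]*)\)\s*Tj"
    r"|<(?P<hex>[0-9A-Fa-f\s]*)>\s*Tj"
)
ARRAY_STRING = re.compile(r"\(([^()]*)\)")


def extract_text(stream: str) -> str:
    """Return the text shown by Tj/TJ operators, one space between operators."""
    pieces: list[str] = []
    for match in TEXT_OP.finditer(stream):
        if match.group("array") is not None:
            # Kerning numbers are dropped; the string fragments are joined as-is.
            pieces.append("".join(ARRAY_STRING.findall(match.group("array"))))
        elif match.group("literal") is not None:
            pieces.append(match.group("literal"))
        else:
            # Hex strings: strip whitespace, then decode two hex digits per byte.
            hex_digits = re.sub(r"\s", "", match.group("hex"))
            pieces.append(bytes.fromhex(hex_digits).decode("latin-1"))
    return " ".join(pieces)


# Invented sample stream (not the bytes from the issue).
SAMPLE_STREAM = """
BT
/F1 24 Tf
72 720 Td
[(CHAR) -15 (ACTER)] TJ
0 -30 Td
(OR FOR) Tj
0 -30 Td
<444F4E4B4559> Tj
0 -30 Td
[(UNDER) 10 (NEATH)] TJ
ET
"""

if __name__ == "__main__":
    print(extract_text(SAMPLE_STREAM))  # prints: CHARACTER OR FOR DONKEY UNDERNEATH
```

Real PDFs usually compress their content streams and often map bytes through font-specific encodings, so a production tool should rely on a proper PDF parser rather than regular expressions.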

Technical Implementation Concerns

The pattern of refusals points to possible technical limitations in how the AUP classifier is implemented. Because the leaked Claude Code source uses regex patterns for sentiment analysis, the AUP classifier may take a similar approach: checking for forbidden keywords without sufficient contextual understanding.

Such an approach would create serious problems for legitimate users whose technical content contains terms that can be misread as harmful. Without contextual awareness, what should be nuanced content filtering becomes blunt enforcement that blocks legitimate technical discourse.
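To make the hypothesized failure mode concrete, here is a minimal Python sketch of a context-free keyword filter. It is not Anthropic's classifier, whose design has not been published. The blocklist and the `naive_flag` helper are hypothetical. The point is that a word-boundary regex sees only vocabulary, so a defensive or entirely unrelated request that happens to use a security-sounding word is flagged exactly as a malicious one would be.

```python
import re

# Hypothetical blocklist for illustration only. These terms are NOT taken from
# Anthropic's classifier; they stand in for "security-sounding" keywords.
BLOCKED_TERMS = ["exploit", "shellcode", "payload", "keylogger", "privilege escalation"]

# One case-insensitive pattern with word boundaries: \b(?:exploit|shellcode|...)\b
BLOCK_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(term) for term in BLOCKED_TERMS) + r")\b",
    re.IGNORECASE,
)


def naive_flag(text: str) -> list[str]:
    """Return every blocked term found in text, ignoring all surrounding context."""
    return [match.group(0).lower() for match in BLOCK_PATTERN.finditer(text)]


if __name__ == "__main__":
    prompts = [
        # Defensive, educational request: flagged anyway because of its vocabulary.
        "Proofread this lab handout explaining how the exploit was patched.",
        # Ordinary data-processing request that uses a security-sounding word.
        "Parse the JSON payload returned by our weather API.",
        # Unrelated request with no listed terms: allowed.
        "Summarize chapter three of this psychology book.",
    ]
    for prompt in prompts:
        hits = naive_flag(prompt)
        verdict = "BLOCKED" if hits else "allowed"
        print(f"{verdict:8} {hits} {prompt}")
```

Growing such a list catches more genuinely harmful requests but also flags more legitimate ones, because the pattern never looks at intent.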

Compliance Timeline and Impact

Read against the complaint data, the impact on users has developed in distinct phases:

- Baseline (July-September 2025): Low complaint volume with minimal disruption

- First increase (October-November 2025): Noticeable rise in false positives affecting development workflows

- Holiday dip (December 2025): Temporary drop in complaints, likely due to decreased usage

- Plateau (January-March 2026): Steady, elevated complaint volume affecting productivity

- Surge (April 2026): Crisis-level false positives that make the service unusable for many legitimate use cases

Regulatory and Practical Implications

For organizations and individuals relying on Claude for development, research, or education, these false positives create significant operational hurdles. Overzealous AUP enforcement means customers pay for a service that refuses part of their legitimate work, raising questions about its value for enterprise customers.

The situation also highlights a broader challenge in AI regulation: balancing security concerns against legitimate use cases. As AI systems become more capable, the tendency to implement increasingly restrictive safeguards may ultimately undermine the very innovation and research that could lead to better security practices.

Anthropic has not responded to requests for comment on these issues, leaving affected users to navigate an increasingly restrictive environment without clear guidance on what usage is acceptable.

For developers experiencing these issues, the Claude Code GitHub repository remains the primary channel for reporting problems and seeking resolution. However, the escalating volume of complaints suggests a systemic problem that case-by-case fixes will not solve.
