Cloudflare has launched a Model Context Protocol server that, by the company's own measurements, cuts the tokens needed to expose its extensive API platform to AI agents by about 99.9%, leaving more context for complex automation workflows.

Cloudflare has unveiled a groundbreaking innovation in AI agent architecture with its new Model Context Protocol (MCP) server powered by Code Mode, addressing one of the most significant challenges in agent-to-tool integrations: the prohibitive token costs associated with exposing large API surfaces to large language models.

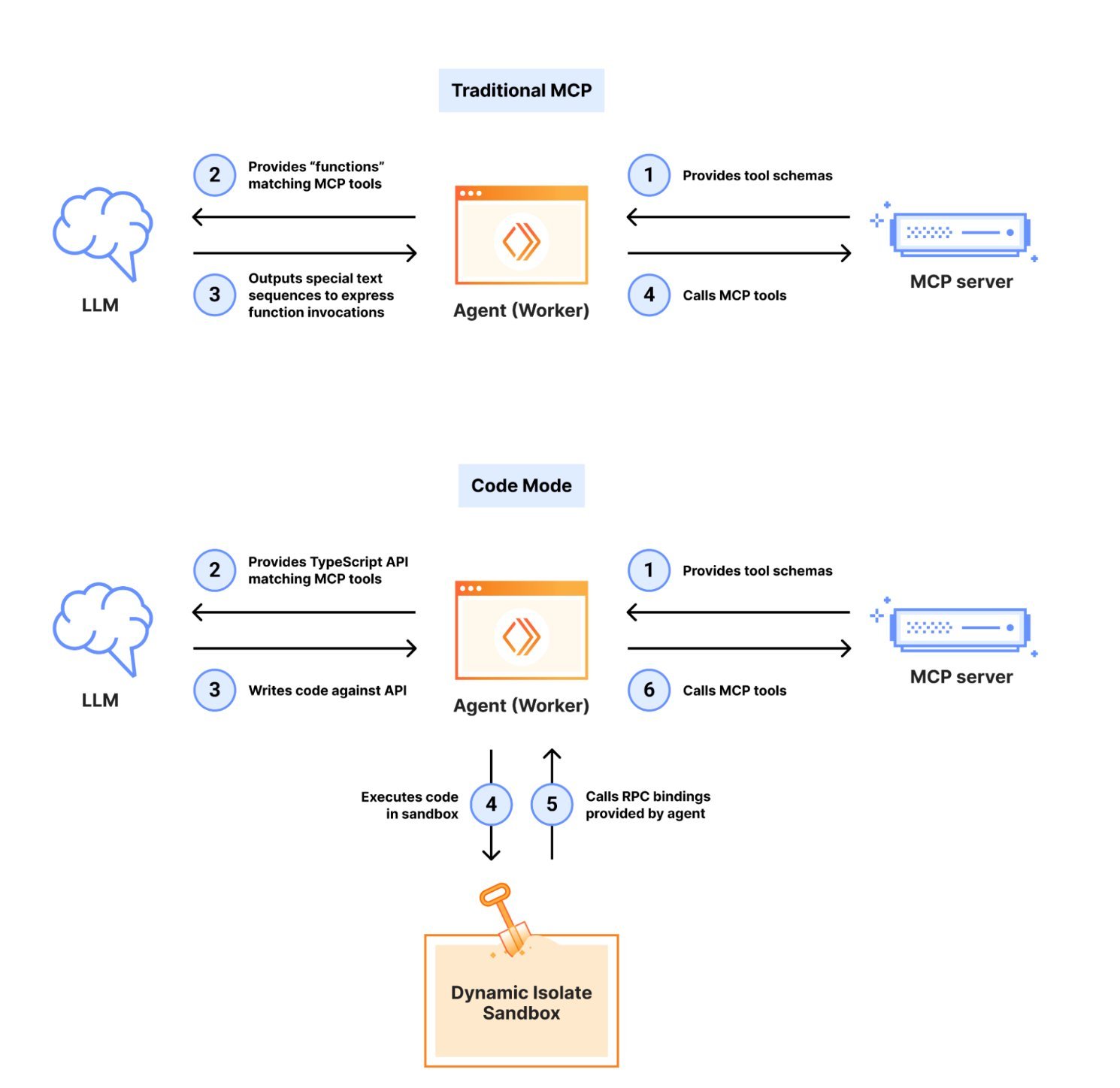

The traditional approach to MCP server design has been straightforward but costly. Each API endpoint requires its own tool definition, so for a platform with thousands of endpoints the model must load and process an enormous number of tokens (more than a million in Cloudflare's case) just to understand what tools are available. This leaves little of the context window for the reasoning and task execution that agents actually need to perform.

The Token Cost Problem in Traditional MCP

In conventional MCP implementations, every tool specification consumes tokens from the model's limited input budget. For Cloudflare's extensive platform spanning DNS, Zero Trust, Workers, and R2 services, this would mean loading more than 1.17 million tokens of API definitions. A footprint of that size severely limits an agent's ability to reason about complex tasks, because most of the context window is consumed by tool descriptions rather than the work at hand.

Code Mode's Revolutionary Approach

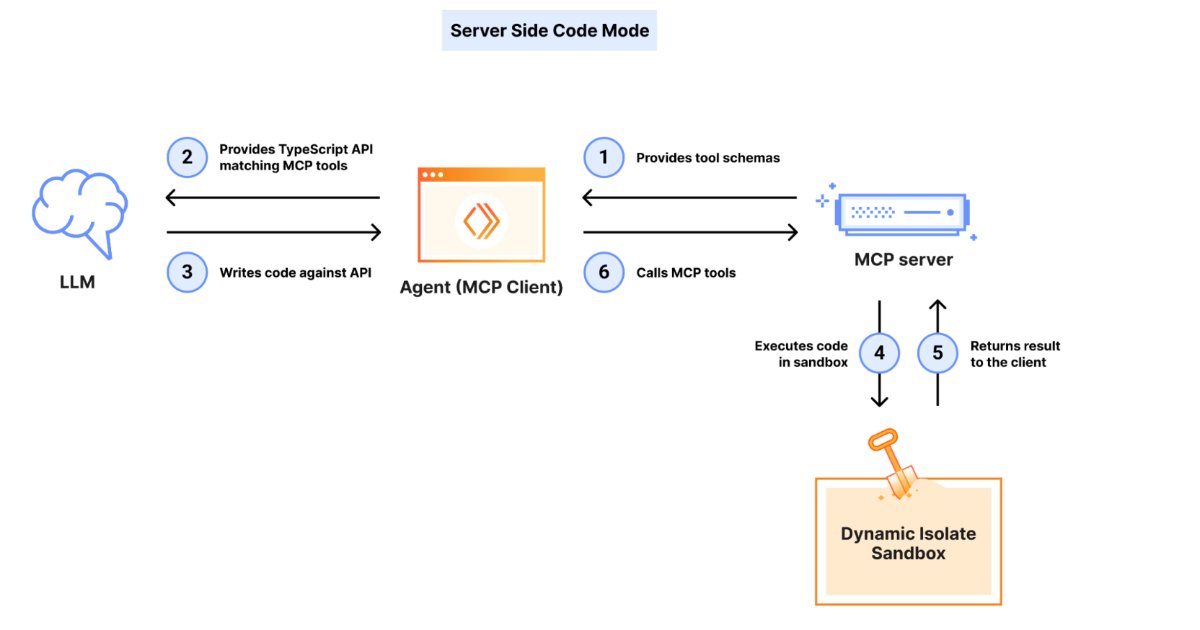

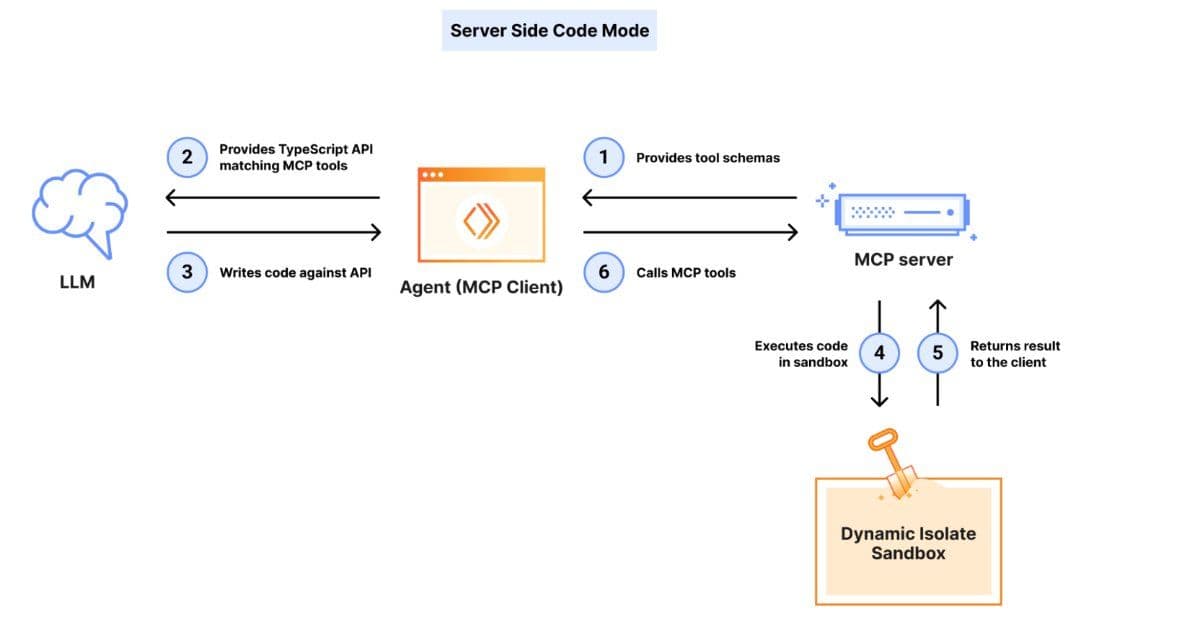

Cloudflare's solution is elegantly simple yet profoundly effective. Instead of exposing thousands of individual tools, Code Mode provides just two: search() and execute(). These tools are backed by a type-aware SDK that allows the model to generate and execute JavaScript code within a secure V8 isolate.
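To make the contrast concrete, here is a minimal sketch comparing a one-tool-per-endpoint list with a two-tool surface. The tool shape (name, description, input schema) follows the general MCP convention, but the `query` and `code` parameter names, the placeholder endpoint names, and the figure of 2,500 endpoints are assumptions for illustration, not details taken from Cloudflare's server.

```typescript
// General shape of an MCP tool definition: name, description, JSON Schema input.
interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string; description: string }>;
    required: string[];
  };
}

// Traditional design: one tool per endpoint, so the list grows with the API.
// The generated names are placeholders, not real Cloudflare endpoints.
function oneToolPerEndpoint(endpointCount: number): ToolDefinition[] {
  const tools: ToolDefinition[] = [];
  for (let i = 0; i < endpointCount; i++) {
    tools.push({
      name: `endpoint_${i}`,
      description: `Calls placeholder endpoint number ${i}.`,
      inputSchema: {
        type: "object",
        properties: { id: { type: "string", description: "Resource identifier." } },
        required: ["id"],
      },
    });
  }
  return tools;
}

// Code Mode design: two tools, whatever the size of the API behind them.
// The `query` and `code` parameter names are assumptions.
const codeModeTools: ToolDefinition[] = [
  {
    name: "search",
    description: "Query the API specification without loading all of it.",
    inputSchema: {
      type: "object",
      properties: { query: { type: "string", description: "What to look for." } },
      required: ["query"],
    },
  },
  {
    name: "execute",
    description: "Run generated JavaScript in a sandbox.",
    inputSchema: {
      type: "object",
      properties: { code: { type: "string", description: "Code to run." } },
      required: ["code"],
    },
  },
];

// Serialized length is only a crude proxy for context consumed, not a token count.
function serializedSize(tools: ToolDefinition[]): number {
  return JSON.stringify(tools).length;
}

const traditionalSize = serializedSize(oneToolPerEndpoint(2500));
const codeModeSize = serializedSize(codeModeTools);
console.log({ traditionalSize, codeModeSize });
```

The point of the sketch is the scaling behavior: the first list grows linearly with the number of endpoints, while the second stays the same size.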

The search() function enables agents to query the OpenAPI specification by product area, path, or metadata without ever loading the entire spec into the model's context. The execute() function then runs code that can handle pagination, conditional logic, and chained API calls in a single operation cycle, dramatically reducing round-trip overhead.
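The search idea can be sketched against a tiny in-memory stand-in for an OpenAPI document. Everything here is hypothetical: the `search` signature, the filter fields, and the sample paths are illustrative assumptions rather than Cloudflare's actual interface. The sketch only shows how a query can return a handful of matching operations instead of the whole spec.

```typescript
interface OperationInfo {
  summary: string;
  tags: string[];
}

interface OperationMatch {
  method: string;
  path: string;
  summary: string;
}

// Tiny stand-in for an OpenAPI "paths" object; the entries are illustrative.
const spec: { paths: Record<string, Record<string, OperationInfo>> } = {
  paths: {
    "/zones": {
      get: { summary: "List zones", tags: ["zones"] },
    },
    "/zones/{zone_id}/dns_records": {
      get: { summary: "List DNS records", tags: ["dns"] },
      post: { summary: "Create DNS record", tags: ["dns"] },
    },
    "/accounts/{account_id}/r2/buckets": {
      get: { summary: "List R2 buckets", tags: ["r2"] },
    },
  },
};

// Hypothetical filter: match by tag (product area) and/or by path substring.
interface SearchFilter {
  tag?: string;
  pathIncludes?: string;
}

// Return only the operations that match, so the caller never sees the full spec.
function search(filter: SearchFilter): OperationMatch[] {
  const matches: OperationMatch[] = [];
  for (const path of Object.keys(spec.paths)) {
    const item = spec.paths[path];
    for (const method of Object.keys(item)) {
      const op = item[method];
      if (filter.tag !== undefined && op.tags.indexOf(filter.tag) === -1) continue;
      if (filter.pathIncludes !== undefined && path.indexOf(filter.pathIncludes) === -1) continue;
      matches.push({ method: method.toUpperCase(), path, summary: op.summary });
    }
  }
  return matches;
}

const dnsOperations = search({ tag: "dns" });
console.log(dnsOperations.map((m) => `${m.method} ${m.path}`));
```

Only the matching entries would be returned to the model, which is what keeps the context cost independent of the size of the spec.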

The Numbers Tell the Story

According to Cloudflare's internal testing, this approach reduces the token footprint from over 1.17 million tokens to approximately 1,000 tokens, a reduction of around 99.9%. The footprint stays fixed regardless of the size of the API surface, so agents can work across large, feature-rich platforms without exhausting their context windows.
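The quoted percentage follows directly from the two token counts. A short check of the arithmetic, using only the figures reported above (the function name is ours):

```typescript
// Figures quoted from Cloudflare's internal testing.
const fullSpecTokens = 1_170_000; // "over 1.17 million tokens"
const codeModeTokens = 1_000; // "approximately 1,000 tokens"

// Percentage of the original footprint that is eliminated.
function reductionPercent(before: number, after: number): number {
  return (1 - after / before) * 100;
}

const reduction = reductionPercent(fullSpecTokens, codeModeTokens);
console.log(reduction.toFixed(1) + "%"); // prints "99.9%"
```

Put differently, the fixed footprint is less than one thousandth of the full set of definitions.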

Security and Sandboxing

Security was a paramount concern in Code Mode's design. The server runs user-generated code in a Dynamic Worker isolate with no file system access, no environment variables exposed, and outbound requests controlled via explicit handlers. This design mitigates risks associated with executing untrusted code while preserving agent autonomy and flexibility.
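The idea of routing outbound requests through an explicit handler can be illustrated with a small allowlist check. This is a sketch of the general policy pattern only: `OutboundPolicy`, `checkOutbound`, and the allowlisted host and methods are hypothetical, and none of it represents the Dynamic Worker API itself.

```typescript
// Hypothetical policy: which hosts and HTTP methods sandboxed code may reach.
interface OutboundPolicy {
  allowedHosts: string[];
  allowedMethods: string[];
}

interface OutboundRequest {
  url: string;
  method: string;
}

interface Decision {
  allowed: boolean;
  reason: string;
}

// Every outbound request passes through this check before it is sent.
function checkOutbound(policy: OutboundPolicy, request: OutboundRequest): Decision {
  let parsed: URL;
  try {
    parsed = new URL(request.url);
  } catch (err) {
    return { allowed: false, reason: "invalid URL" };
  }
  if (parsed.protocol !== "https:") {
    return { allowed: false, reason: "only https is permitted" };
  }
  if (policy.allowedHosts.indexOf(parsed.hostname) === -1) {
    return { allowed: false, reason: `host ${parsed.hostname} is not allowlisted` };
  }
  const method = request.method.toUpperCase();
  if (policy.allowedMethods.indexOf(method) === -1) {
    return { allowed: false, reason: `method ${method} is not allowlisted` };
  }
  return { allowed: true, reason: "ok" };
}

// Example policy; the values are assumptions chosen for the demonstration.
const policy: OutboundPolicy = {
  allowedHosts: ["api.cloudflare.com"],
  allowedMethods: ["GET", "POST"],
};

console.log(checkOutbound(policy, { url: "https://api.cloudflare.com/client/v4/zones", method: "get" }));
console.log(checkOutbound(policy, { url: "https://example.com/exfiltrate", method: "POST" }));
```

Denying by default and allowing only named destinations means generated code cannot reach arbitrary hosts even if the model is tricked into trying.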

Immediate Availability and Open Source

The new MCP server for the entire Cloudflare API is immediately available for developers to integrate. Cloudflare has also open-sourced a Code Mode SDK within its broader Agents SDK, enabling similar patterns in third-party MCP implementations. This move positions Cloudflare as a leader in scalable agentic workflows and may influence both standard MCP server designs and agent frameworks in the coming year.

Industry Impact and Future Implications

Analysts and practitioners view Code Mode as a key step in scaling agentic workflows beyond simple, single-service interactions toward broad, multi-API automation. As industry players grapple with context costs and orchestration complexity in production-grade AI agents, Cloudflare's approach offers a compelling blueprint for efficient, scalable agent architectures.

The implications extend far beyond Cloudflare's platform. This pattern could revolutionize how AI agents interact with complex systems, enabling more sophisticated automation workflows that were previously impractical due to token constraints. As the MCP ecosystem continues to evolve, Code Mode may become a standard pattern for efficient agent-to-tool communication.

Technical Deep Dive

The core innovation is not a way of compressing expansive API schemas into the prompt but a way of keeping them out of it: the model sees only a small, fixed tool surface and retrieves the parts of the specification it needs on demand, without losing functional precision. By converting MCP tools into a TypeScript API and asking the LLM to write code against it, Cloudflare has created a system where the model can reason about API interactions at a higher level of abstraction.
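As a rough illustration of what converting a tool into a TypeScript API can look like, the sketch below turns a tool's JSON Schema input into a TypeScript declaration string. The generator, the `Tool` type, and the sample `list_dns_records` tool are assumptions made for this example; Cloudflare's SDK may generate its types quite differently.

```typescript
// Only primitive JSON Schema types are handled, to keep the sketch small.
interface SchemaProperty {
  type: "string" | "number" | "boolean";
}

interface Tool {
  name: string;
  description: string;
  inputSchema: {
    properties: Record<string, SchemaProperty>;
    required?: string[];
  };
}

// Emit a TypeScript declaration the model can write code against.
function toTypeScriptDeclaration(tool: Tool): string {
  const required = tool.inputSchema.required || [];
  const fields = Object.keys(tool.inputSchema.properties).map((key) => {
    const prop = tool.inputSchema.properties[key];
    const optionalMark = required.indexOf(key) === -1 ? "?" : "";
    return `  ${key}${optionalMark}: ${prop.type};`;
  });
  return [
    `/** ${tool.description} */`,
    `declare function ${tool.name}(input: {`,
    ...fields,
    `}): Promise<unknown>;`,
  ].join("\n");
}

// Hypothetical tool used only to exercise the generator.
const listDnsRecords: Tool = {
  name: "list_dns_records",
  description: "List DNS records in a zone",
  inputSchema: {
    properties: {
      zone_id: { type: "string" },
      name: { type: "string" },
    },
    required: ["zone_id"],
  },
};

const declaration = toTypeScriptDeclaration(listDnsRecords);
console.log(declaration);
```

A declaration like this gives the model a typed function signature to call rather than a JSON schema to interpret, which is the shift in abstraction level described above.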

The V8 isolate execution environment balances security and functionality. It is restrictive enough to contain untrusted code, yet flexible enough to handle complex API orchestration tasks. The type-aware SDK helps the model generate code that matches the shapes the API expects, reducing the likelihood of runtime errors.

Real-World Applications

For developers building AI agents that need to interact with Cloudflare's platform, Code Mode opens up new possibilities. Tasks that previously required multiple tool-call round trips and consumed significant context can now be accomplished in a single code execution. This enables more complex workflows, such as:

- Automated DNS management across multiple zones
- Dynamic Workers deployment and scaling
- Zero Trust policy orchestration
- R2 bucket management and data processing

Each of these tasks can now be accomplished with a fraction of the token cost, enabling agents to handle more complex scenarios and make more sophisticated decisions.
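The first workflow in the list, DNS management across multiple zones, gives a sense of what a single generated script might do: page through zones, then chain a per-zone DNS lookup and filter the results. The `client` object, its method names, and the sample data below are hypothetical, and the mock is synchronous so the sketch runs standalone; real API calls would be asynchronous.

```typescript
interface Page<T> {
  items: T[];
  nextPage: number | null;
}

interface Zone {
  id: string;
  name: string;
}

interface DnsRecord {
  zoneId: string;
  type: string;
  name: string;
}

// Sample data for the mock; not real zones or records.
const zones: Zone[] = [
  { id: "z1", name: "example.com" },
  { id: "z2", name: "example.org" },
  { id: "z3", name: "example.net" },
];

const records: DnsRecord[] = [
  { zoneId: "z1", type: "A", name: "www.example.com" },
  { zoneId: "z1", type: "TXT", name: "example.com" },
  { zoneId: "z2", type: "A", name: "api.example.org" },
  { zoneId: "z3", type: "CNAME", name: "blog.example.net" },
];

// Hypothetical in-memory client standing in for an SDK available to generated code.
const client = {
  listZones(page: number, perPage: number): Page<Zone> {
    const start = (page - 1) * perPage;
    const items = zones.slice(start, start + perPage);
    const nextPage = start + perPage < zones.length ? page + 1 : null;
    return { items, nextPage };
  },
  listDnsRecords(zoneId: string): DnsRecord[] {
    return records.filter((r) => r.zoneId === zoneId);
  },
};

// Pagination: keep following nextPage until the listing is exhausted.
function allZones(perPage: number): Zone[] {
  const collected: Zone[] = [];
  let page: number | null = 1;
  while (page !== null) {
    const result: Page<Zone> = client.listZones(page, perPage);
    collected.push(...result.items);
    page = result.nextPage;
  }
  return collected;
}

// Chained calls plus conditional logic: A records grouped by zone name.
function aRecordsByZone(perPage: number): Record<string, string[]> {
  const summary: Record<string, string[]> = {};
  for (const zone of allZones(perPage)) {
    const names = client
      .listDnsRecords(zone.id)
      .filter((r) => r.type === "A")
      .map((r) => r.name);
    if (names.length > 0) summary[zone.name] = names;
  }
  return summary;
}

const aRecords = aRecordsByZone(2);
console.log(JSON.stringify(aRecords));
```

With one tool per endpoint, each page and each per-zone lookup would be a separate round trip through the model; here only the final summary needs to come back.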

The Broader MCP Ecosystem

Cloudflare's innovation comes at a time when the MCP ecosystem is rapidly evolving. Recent developments include Google bringing MCP support to Colab for cloud execution of AI agents, Pinterest deploying production-scale MCP ecosystems, and various companies exploring multi-agent orchestration frameworks.

Code Mode represents a significant advancement in this ecosystem, demonstrating how thoughtful architecture can overcome fundamental limitations in AI agent design. As more companies adopt MCP standards, Cloudflare's approach may become a reference implementation for efficient, scalable agent architectures.

Looking Ahead

The success of Code Mode could influence the future direction of MCP standards and agent frameworks. As context windows remain a precious resource for large language models, approaches that minimize token usage while maximizing functionality will become increasingly valuable.

Cloudflare's open-source SDK also enables the broader developer community to build similar solutions for their own platforms, potentially creating a new wave of efficient MCP implementations. This democratization of efficient agent architecture could accelerate the development of more sophisticated AI automation tools across the industry.

For organizations building AI agents today, Code Mode offers a compelling case study in how to design for efficiency and scalability from the ground up. The lesson is clear: sometimes the most effective solution is to work at a higher level of abstraction, letting the model generate and execute code rather than trying to expose every possible API endpoint as a separate tool.

As AI agents become increasingly central to automation workflows, innovations like Code Mode will play a crucial role in determining which platforms and architectures can scale effectively. Cloudflare's approach demonstrates that with thoughtful design, it's possible to overcome the token constraints that have limited agent capabilities, opening up new possibilities for AI-driven automation.
