Confluent's innovation in storing schema metadata in Kafka headers rather than payloads decouples schema governance from data, enabling more flexible event-driven architectures while maintaining compatibility.

Confluent has introduced a significant architectural improvement to Apache Kafka by enabling schema IDs to be stored in message headers rather than in the payload. This change represents a fundamental shift in how schema metadata is managed in event-driven systems, offering organizations greater flexibility in their data governance strategies while maintaining compatibility with existing event formats.

Service Update: The Technical Shift

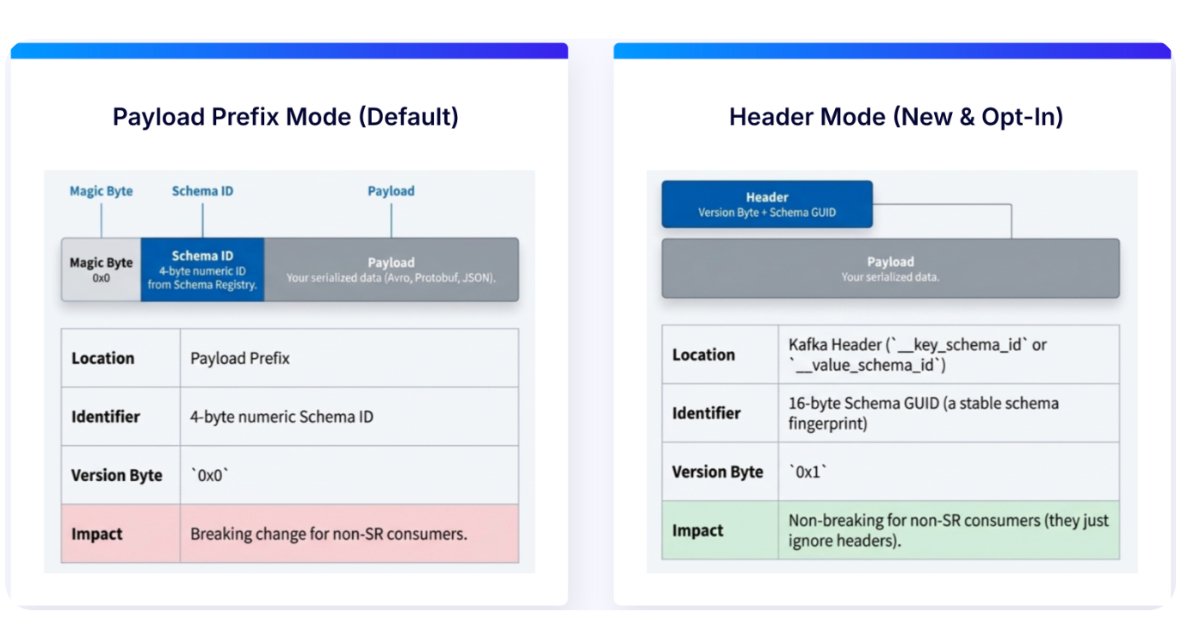

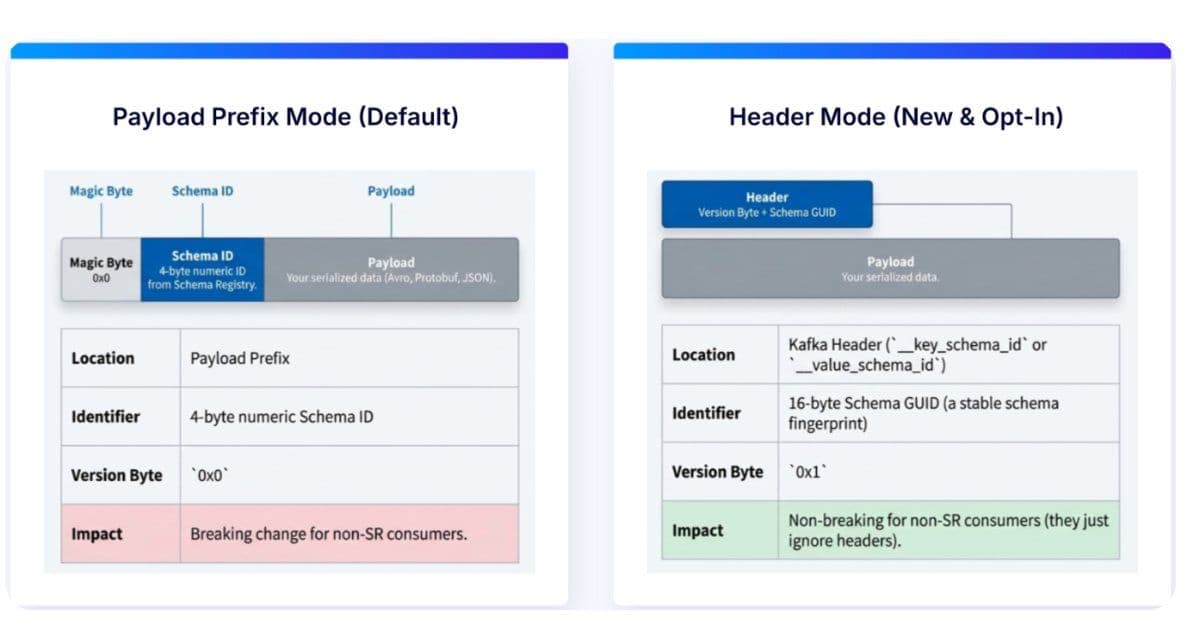

In traditional Kafka deployments using Confluent's wire format, schema identifiers have been embedded directly within the message payload. This approach ensures consumers can correctly deserialize events but creates tight coupling between schema metadata and the data itself. The new approach leverages Kafka's native header support to store schema identifiers separately from the payload, while still maintaining integration with Confluent Schema Registry.

This architectural shift means that consumers now retrieve schema information from the schema registry at runtime using the ID contained in the message header. The payload itself remains unchanged, preserving compatibility with popular serialization formats such as Avro, Protobuf, and JSON Schema. This decoupling of schema resolution from the payload fundamentally changes how event streams are processed and governed.

Use Cases: Practical Benefits for Organizations

The header-based approach offers several compelling advantages for organizations managing complex event-driven architectures:

Simplified Schema Evolution

In microservices environments where multiple teams and systems consume the same event streams, the traditional approach of embedding schema IDs in payloads creates significant coordination overhead. When schema changes are introduced, producers and consumers must be updated simultaneously. With schema IDs moved to headers, producers and consumers can evolve independently, with validation centralized in the schema registry. This reduces the complexity of managing schema changes across distributed systems.

Incremental Adoption

One of the most significant benefits is the ability to introduce schema governance without large-scale rewrites or coordinated changes across all producers and consumers. Organizations can attach schema IDs to existing event streams, allowing teams to gradually adopt stricter schema management practices while maintaining backward compatibility. This is particularly valuable in large enterprises with legacy systems where complete rewrites are impractical.

Improved Interoperability

Gunnar Morling, Technologist at Confluent, emphasized how this change improves interoperability with storage systems and downstream processing frameworks. "Schema IDs into Kafka message headers rather than the message payload is a massive quality of life improvement: payloads become valid, self-contained," Morling noted. This separation enables consistent reuse of structured event data across different pipelines and systems, including Apache Flink and analytics or ML platforms.

Zero-Downtime Implementation

David Araujo, Director of Product Management at Confluent, describes how the feature enables zero downtime and client-independent adoption patterns. "By moving schema IDs to headers, you can attach schemas to existing data in Kafka without touching payload formats," Araujo explains. This approach allows organizations to implement schema governance without disrupting existing workflows or requiring immediate changes to all consuming systems.

Trade-offs: Implementation Considerations

While the header-based approach offers significant benefits, organizations should consider several trade-offs when implementing this change:

Connector and Tool Compatibility

The transition may require updates to Kafka connectors and downstream tools that assume schema metadata is embedded in payloads. During the adoption period, organizations may need to support both approaches simultaneously, creating complexity in their data pipeline architecture. Teams should carefully evaluate their existing tooling ecosystem and plan for potential compatibility issues.

Performance Implications

Storing schema IDs in headers rather than payloads introduces an additional lookup step in the schema registry. While this overhead is generally minimal, organizations with extremely high-throughput systems should conduct performance testing to ensure the change doesn't impact their SLAs. The trade-off between flexibility and performance needs careful consideration in latency-sensitive environments.

Learning Curve and Team Training

Development teams will need to understand the new approach to schema handling, which may require training and documentation updates. Organizations should plan for knowledge transfer sessions and update their internal best practices to reflect the new pattern. The learning curve may be steeper for teams deeply accustomed to the traditional wire format approach.

Broader Architectural Implications

This change reflects a broader trend in event-driven architectures toward greater decoupling of concerns. By separating schema metadata from payload data, Confluent enables more flexible data governance models that can adapt to organizational needs without requiring changes to the underlying data formats.

Patrick Neff, CSTA Team Lead CEMEA at Confluent, highlights the strategic importance of schema governance: "Schemas are the key enabler for unlocking the full value of your data." The header-based approach supports this vision by making schema governance more accessible and less disruptive to implement.

Implementation Path

The feature is currently available in Confluent Cloud and is expected to be included in Confluent Platform with Schema Registry support under existing licensing models. Organizations planning to adopt this approach should:

- Assess their current schema management practices and identify areas where decoupling would provide the most benefit

- Evaluate their existing tooling for compatibility with the header-based approach

- Develop a phased adoption plan that allows for incremental implementation

- Update their documentation and training materials to reflect the new pattern

- Monitor performance metrics during the transition to ensure no unexpected impacts

As organizations continue to build increasingly complex event-driven architectures, innovations like this one from Confluent play a crucial role in simplifying data governance while maintaining the flexibility needed in modern distributed systems. The move to header-based schema IDs represents a thoughtful evolution of Kafka's capabilities that addresses real-world challenges in managing event streams at scale.

For more information about implementing this feature, organizations can refer to the Confluent documentation and the official announcement detailing the technical implementation.

Comments

Please log in or register to join the discussion