HPE unveils new Blackwell and Rubin GPU systems, AI factories, and sovereign computing solutions in partnership with Nvidia, alongside what it says is the first Nvidia-certified object storage platform and a new AI Grid networking architecture.

HPE has unveiled an expanded portfolio of Nvidia-powered AI systems and sovereign computing solutions at Nvidia's GPU Technology Conference (GTC), extending its push into the enterprise AI infrastructure market. The announcements include new systems built on Nvidia's Blackwell GPUs and its upcoming Rubin GPUs, updates to the HPE Alletra Storage MP X10000, and a new AI Grid networking architecture.

The company is positioning itself as a key enabler of what it calls "AI factories": turnkey enterprise AI infrastructure, co-engineered with Nvidia, that HPE says will turn AI ambitions into real enterprise value. HPE president and CEO Antonio Neri framed the announcements in competitive terms: "The AI race is fundamentally about speed, scale, and trust. Our industry leadership across cloud, networking, and AI enables organizations to operationalize AI securely, efficiently, and at an unprecedented scale."

Sovereign AI Takes Center Stage

Sovereign AI capabilities, particularly in Europe, are a major focus of HPE's announcements. The company is building and installing the supercomputer for HammerHAI (Hybrid and Advanced Machine learning platform for Manufacturing, Engineering, and Research), a European Union AI Factory. The initiative is led by a consortium of leading academic HPC centers in Germany and represents a significant investment in European AI infrastructure.

Dr. Bastian Koller, Managing Director of the High-Performance Computing Center Stuttgart (HLRS) at the University of Stuttgart and lead coordinator of HammerHAI, explained the rationale: "HammerHAI will offer a highly-performant AI platform, alongside services like AI skills training, as an alternative to future users that have historically relied on commercial cloud AI services in which data sovereignty was difficult to ensure."

The sovereign computing push responds to growing concerns about data residency and regulatory compliance. HPE says its approach lets organizations scale AI initiatives while meeting regional sovereignty and compliance requirements, a critical consideration for enterprises that operate in multiple jurisdictions.

Storage Breakthrough with Nvidia Certification

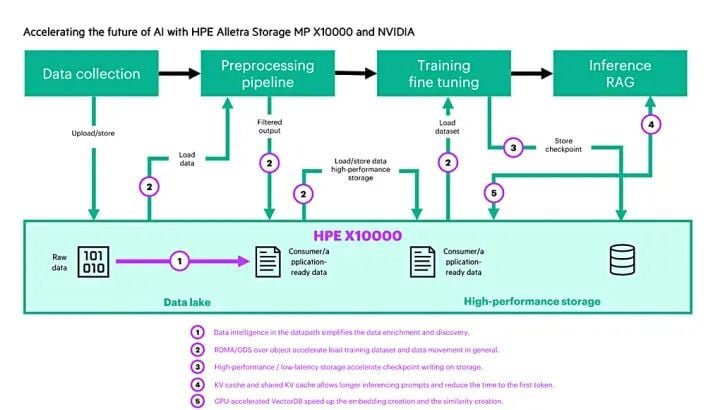

HPE claims a significant milestone with its Alletra Storage MP X10000, which it says is the first object storage platform to achieve Nvidia-Certified Storage validation. According to HPE, the certification covers workloads of up to 128 GPUs and confirms that the storage layer can feed data to accelerated computing resources efficiently.

The certification process included functional tests for enterprise-grade availability and reliability, which HPE says means the platform can deliver faster model training, lower-latency inference, and better overall utilization. In one demonstration, HPE reports indexing terabyte-scale vector data in under an hour using the X10000 together with Nvidia cuVS CAGRA for GPU-accelerated vector indexing and Nvidia cuObject for accelerated storage I/O.

A detailed HPE blog post explains how the X10000 and Nvidia RDMA for S3 accelerate AI pipelines, retrieval-augmented generation (RAG), and real-time inference through low-latency, high-throughput, GPU-direct data paths. Using a single Nvidia H100 GPU with accelerated remote direct memory access (RDMA), the company reports a 17x improvement in index build time and an 8x improvement in end-to-end pipeline transport.

AI Grid: Connecting Distributed Inference

HPE's most novel announcement may be AI Grid, an end-to-end offering built on an Nvidia reference architecture that connects AI factories and distributed inference clusters across regional and far-edge sites. According to HPE, the architecture lets service providers deploy and operate thousands of distributed inference sites and manage them as a single intelligent system.

The AI Grid includes several key components:

- Juniper's telco-grade multicloud routing and coherent optics for predictable long-haul and metro connectivity

- Cloud-native and multi-tenant security, firewalls, and WAN automation

- ProLiant Compute edge and rack servers with Nvidia-accelerated computing

- Nvidia Spectrum-X Ethernet switches, BlueField DPUs, and ConnectX SuperNICs

- AI blueprints for rapid AI inference deployment

HPE says this allows service providers to convert existing sites that already have power and connectivity into RAN-ready (radio access network) AI grids, enabling distributed inference and new services at scale. The approach targets growing demand for edge AI processing and distributed inference.

Expanded Product Portfolio

The announcements also span a broad range of new products and updates:

HPE Private Cloud AI Enhancements:

- Expanded to deliver greater performance, scalability, and flexibility for enterprise inference

- New network expansion racks that allow deployments to scale to 128 GPUs

- Air-gapped configuration available for sensitive data deployments

- Pre-configured hardware and software stack featuring the latest Nvidia AI Enterprise software

- Updated AI-Q blueprint for AI agents and a new Omniverse blueprint for digital twins

Edge and Enterprise Solutions:

- HPE ProLiant Compute DL380a Gen12 servers certified for Fortanix Confidential AI

- CrowdStrike agentic security integration for HPE Private Cloud AI

- Support for the latest Nemotron open models through the Nvidia Agent Toolkit

- RTX PRO 6000 Blackwell Server Edition GPUs across all configurations

- RTX PRO 4500 Blackwell Server Edition GPU for edge deployments and smaller workloads

Multi-Workload Offerings:

- New co-designed solutions for autonomous edge intelligence, retail shopping assistance, video search and summarization, and biomedical research

- Integration of the Retail Shopping Assistant Blueprint for retail deployments

- ProLiant Compute servers combined with Nvidia accelerated computing, Spectrum-X networking, BlueField DPUs, and ConnectX NICs

Technical Foundation and Availability

The technical foundation of these announcements rests on Nvidia's latest technologies, including Vera Rubin accelerators, BlueField-4 DPUs, Spectrum-X networking, ConnectX NICs, and Nvidia's AI software stack. HPE is also supporting the new Nvidia STX rackscale reference architecture to develop new AI storage offerings.

Availability details show a phased rollout through 2026:

- RTX PRO 4500 Blackwell Server Edition GPUs across the ProLiant Compute portfolio: Q1-Q2 2026

- HPE Private Cloud AI with air-gapped deployment and Blackwell GPU support: Available now

- Network expansion racks for scaling to 128 GPUs: July 2026

- Fortanix support with ProLiant DL380a Gen12 systems: Q3 2026

These announcements position HPE as a full-stack provider of AI infrastructure, from sovereign computing for government and research institutions to enterprise AI factories and edge deployments. The Nvidia partnership gives HPE access to leading-edge GPU technology, while HPE is counting on its enterprise infrastructure and security expertise to address customer concerns about data sovereignty, compliance, and operational reliability.

The announcements arrive amid growing demand for AI infrastructure that can operate within regulatory constraints while delivering the performance modern AI workloads require. For organizations still grappling with AI implementation, HPE's integrated approach, combining hardware, software, networking, and security, may prove a compelling option for operationalizing AI at scale.
