Lyft has moved its translation pipeline from manual processes to an AI-driven system that delivers 95% of translations within 30 minutes while maintaining quality through human review.

Lyft has overhauled its approach to global content translation, replacing manual workflows with an AI-powered system that processes roughly 99% of user-facing content through a batch translation pipeline. The new architecture achieves a 30-minute SLA for 95% of translations while maintaining the quality standards necessary for a global ride-sharing platform.

From Days to Minutes: The Translation Bottleneck

Before implementing the AI system, Lyft relied on largely manual translation workflows that became a significant bottleneck as the company expanded into new markets. The traditional approach couldn't keep pace with product velocity, often taking days to translate and approve content for international markets.

The new system integrates large language models with automated evaluation and human review, enabling faster turnaround while preserving consistency in tone, style, and legal messaging. This shift has reduced translation turnaround from days to minutes for most content, improving release speed across languages.

Dual-Path Architecture: AI and Human Collaboration

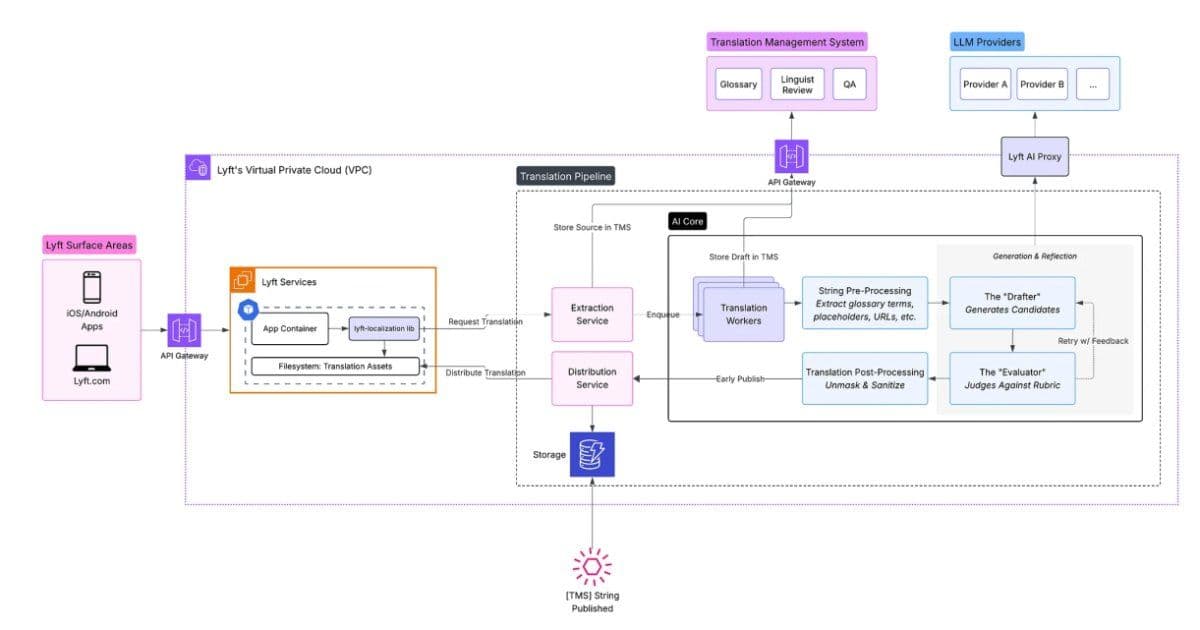

The batch translation pipeline follows a dual-path architecture: each source string is submitted simultaneously to a translation management system (TMS) for human oversight and to LLM-based workers for rapid draft generation. AI-generated translations can therefore be used immediately to unblock releases, while the TMS remains the system of record.

Human linguists review translations asynchronously, and approved versions replace initial outputs to ensure quality and consistency. The pipeline processes multiple strings in parallel and supports iterative refinement across multiple passes.
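The dual-path flow described above can be sketched roughly as follows. This is a minimal illustration, not Lyft's implementation: `TranslationStore`, `llm_draft`, `tms_review`, and `translate_dual_path` are hypothetical names, and the two paths are simulated with short sleeps instead of real LLM or TMS calls.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class TranslationStore:
    """Hypothetical store holding the translation currently served per key."""
    entries: dict = field(default_factory=dict)

    def put(self, key: str, text: str, source: str) -> None:
        current = self.entries.get(key)
        # A human-approved entry is never overwritten by a later AI draft.
        if current is not None and current["source"] == "human" and source == "ai":
            return
        self.entries[key] = {"text": text, "source": source}


async def llm_draft(source_text: str, locale: str) -> str:
    """Stand-in for an LLM worker (fast path)."""
    await asyncio.sleep(0.01)
    return f"[{locale} draft] {source_text}"


async def tms_review(source_text: str, locale: str) -> str:
    """Stand-in for asynchronous linguist review in the TMS (slow path)."""
    await asyncio.sleep(0.05)
    return f"[{locale} approved] {source_text}"


async def translate_dual_path(store, key, source_text, locale):
    # Submit to both paths at once; the review keeps running in the background.
    review = asyncio.create_task(tms_review(source_text, locale))
    draft = await llm_draft(source_text, locale)
    store.put(key, draft, "ai")  # the draft is usable immediately
    served_first = dict(store.entries[key])
    store.put(key, await review, "human")  # the approved version replaces it
    return served_first, dict(store.entries[key])
```

Calling `asyncio.run(translate_dual_path(TranslationStore(), "ride.cta", "Request ride", "es-MX"))` returns the entry served first (the AI draft) and the entry served after review (the human-approved text). The guard in `put` is one possible way to keep a late AI draft from overwriting an approved translation.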

The Drafter-Evaluator Pattern

A key innovation in Lyft's system is the division of responsibilities between a Drafter and an Evaluator:

- Drafter: Generates multiple candidate translations for each source string

- Evaluator: Assesses translations across dimensions such as accuracy, fluency, and brand alignment, selecting the best option or triggering retries for low-confidence outputs

Decoupling generation from evaluation improves error detection and reduces bias, since the component that produces a translation is not the one that judges it. The Evaluator draws on injected context, including UI metadata, placeholders, and regional considerations, while deterministic guardrails enforce safety, legal, and stylistic constraints.
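A minimal sketch of the Drafter-Evaluator loop, under stated assumptions: the drafter and evaluator are passed in as plain callables (in practice they would wrap LLM calls), the score is a single number between 0 and 1, and the threshold and retry count are illustrative values. The placeholder check is one example of a deterministic guardrail; all names here are hypothetical.

```python
import re

# Placeholders of the form {name}; the exact syntax is an assumption.
PLACEHOLDER = re.compile(r"\{[A-Za-z_][A-Za-z0-9_]*\}")


def placeholders_preserved(source: str, candidate: str) -> bool:
    """Deterministic guardrail: a candidate must keep every source placeholder."""
    return sorted(PLACEHOLDER.findall(source)) == sorted(PLACEHOLDER.findall(candidate))


def draft_and_evaluate(source, drafter, evaluator, threshold=0.8, max_attempts=3):
    """Return (best_text, best_score, attempts_used).

    drafter(source, attempt) -> list of candidate strings
    evaluator(source, candidate) -> score in [0, 1]
    """
    best_text, best_score = None, -1.0
    for attempt in range(max_attempts):
        for candidate in drafter(source, attempt):
            # Guardrails run before scoring, so an unsafe candidate is never selected.
            if not placeholders_preserved(source, candidate):
                continue
            score = evaluator(source, candidate)
            if score > best_score:
                best_text, best_score = candidate, score
        if best_score >= threshold:
            return best_text, best_score, attempt + 1
        # Low confidence: loop again so the drafter produces a fresh batch.
    return best_text, best_score, max_attempts
```

If no candidate reaches the threshold after the final attempt, the function still returns the best one it saw, and the caller can decide whether to hold it for human review.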

Real-Time Translation: A Different Challenge

While batch translations benefit from broader contextual information and iterative evaluation, real-time translation follows a separate architecture focused on low latency. Ride chat messages and other immediate user interactions require a different optimization approach that prioritizes speed over the comprehensive evaluation possible in batch processing.

Quality Through Human-in-the-Loop

Despite the heavy use of AI, human review remains central to Lyft's localization strategy. Approximately 95% of translations pass through human review with minimal changes, while the remaining 5% involve complex cases such as regional idioms, legal disclaimers, or brand-specific language where human oversight ensures accuracy and consistency.

The system continuously collects metrics on translation quality, model performance, and reviewer consistency. These metrics are used to adjust models and improve subsequent translations, creating a feedback loop that enhances the AI's performance over time.
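One way such a quality metric could be computed is to compare each AI draft with the version a linguist approved. The sketch below is an assumption, not a description of Lyft's metrics: it uses a character-level similarity ratio from Python's `difflib`, and the 0.1 cutoff for a "minimal change" is an arbitrary illustrative value.

```python
from difflib import SequenceMatcher


def edit_ratio(draft: str, approved: str) -> float:
    """0.0 means the reviewer changed nothing; 1.0 means nothing was kept."""
    return 1.0 - SequenceMatcher(None, draft, approved).ratio()


def summarize_reviews(pairs, minimal_change=0.1):
    """Summarize (draft, approved) pairs into simple review metrics."""
    ratios = [edit_ratio(draft, approved) for draft, approved in pairs]
    if not ratios:
        return {"count": 0, "minimal_change_share": 0.0, "mean_edit_ratio": 0.0}
    minimal = sum(1 for r in ratios if r <= minimal_change)
    return {
        "count": len(ratios),
        "minimal_change_share": minimal / len(ratios),
        "mean_edit_ratio": sum(ratios) / len(ratios),
    }
```

Tracked over time and per locale, a rising `minimal_change_share` would suggest the drafts need less correction, while a falling one could flag a regression in a model or prompt.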

Safe Rollouts and Production Stability

The architecture supports prompt rollouts, allowing new AI translation strategies to be tested on small batches before full deployment. This approach ensures stable production output while enabling continuous improvement of the translation models.
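Testing a new strategy on a small share of traffic is commonly done with deterministic bucketing. The sketch below assumes the rollouts concern LLM prompt versions and that each string has a stable key; the function names, the salt, and the version labels are hypothetical.

```python
import hashlib


def rollout_bucket(string_key: str, salt: str = "prompt-rollout") -> int:
    """Map a string key to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{salt}:{string_key}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def choose_prompt_version(string_key: str, candidate_percent: int,
                          stable: str = "v1", candidate: str = "v2") -> str:
    """Send roughly candidate_percent of keys to the candidate prompt."""
    if rollout_bucket(string_key) < candidate_percent:
        return candidate
    return stable
```

Because the bucket depends only on the key and the salt, a given string always gets the same prompt version at a given percentage, and raising `candidate_percent` only ever moves keys from the stable version to the candidate.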

Industry Context and Implications

Lyft's approach reflects a broader industry trend toward AI-assisted localization that keeps humans in the loop. Similar patterns are emerging in other domains:

- DoorDash has built LLM conversation simulators to test customer support chatbots at scale

- GitHub is developing agentic workflows for repository automation

- Apple researchers have introduced Ferret-UI Lite for on-device UI understanding

These developments suggest that the future of localization and content translation will likely involve increasingly sophisticated AI systems working in tandem with human experts, rather than replacing them entirely.

Technical Architecture Details

The system's architecture includes several key components:

- Translation Management System (TMS): Serves as the system of record and provides human oversight

- LLM Workers: Generate rapid draft translations for immediate use

- Drafter Module: Creates multiple translation candidates for each source string

- Evaluator Module: Assesses translation quality across multiple dimensions

- Context Injection Layer: Provides UI metadata, placeholders, and regional considerations

- Guardrail System: Enforces safety, legal, and stylistic constraints

- Metrics Collection: Tracks quality, performance, and reviewer consistency
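As an illustration of the context injection component listed above, the following sketch bundles the source string with UI metadata, placeholders, and regional notes, and renders them into a prompt. The field names and prompt wording are assumptions made for this example; the source does not describe the actual schema.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class TranslationContext:
    """Hypothetical bundle of context sent along with a source string."""
    source_text: str
    target_locale: str
    ui_element: str                      # e.g. "button" or "push notification"
    max_length: Optional[int] = None     # UI length limit, if any
    placeholders: Tuple[str, ...] = ()
    regional_notes: Tuple[str, ...] = ()


def build_prompt(ctx: TranslationContext) -> str:
    """Render the context into a plain-text prompt for a drafter or evaluator."""
    lines = [
        f"Translate this {ctx.ui_element} text into {ctx.target_locale}.",
        f"Source: {ctx.source_text}",
    ]
    if ctx.placeholders:
        lines.append("Keep these placeholders unchanged: " + ", ".join(ctx.placeholders))
    if ctx.max_length is not None:
        lines.append(f"Maximum length: {ctx.max_length} characters")
    for note in ctx.regional_notes:
        lines.append(f"Regional note: {note}")
    return "\n".join(lines)
```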

The dual-path approach ensures that translations can be released quickly while maintaining the option for human refinement, balancing speed with quality in a way that supports Lyft's international growth strategy.

The system's success demonstrates how AI can be effectively integrated into production workflows without sacrificing quality, providing a model for other companies facing similar localization challenges as they expand globally.
