Meta has revealed four custom AI chips in its MTIA series, claiming they outperform commercial silicon, while facing criticism over its ability to flag AI-generated misinformation.

Meta has unveiled details of four custom AI chips it developed in partnership with Broadcom, claiming they outperform commercial alternatives from companies like Nvidia. The social media giant described its Meta Training and Inference Accelerator (MTIA) chips as part of a strategy to reduce its reliance on third-party hardware for AI infrastructure.

Meta's Custom Silicon Strategy

The four chips (MTIA 300, 400, 450, and 500) represent different generations and capabilities within Meta's custom silicon roadmap. According to the company's specifications, they are designed to handle a range of AI workloads, from ranking and recommendation systems to generative AI inference.

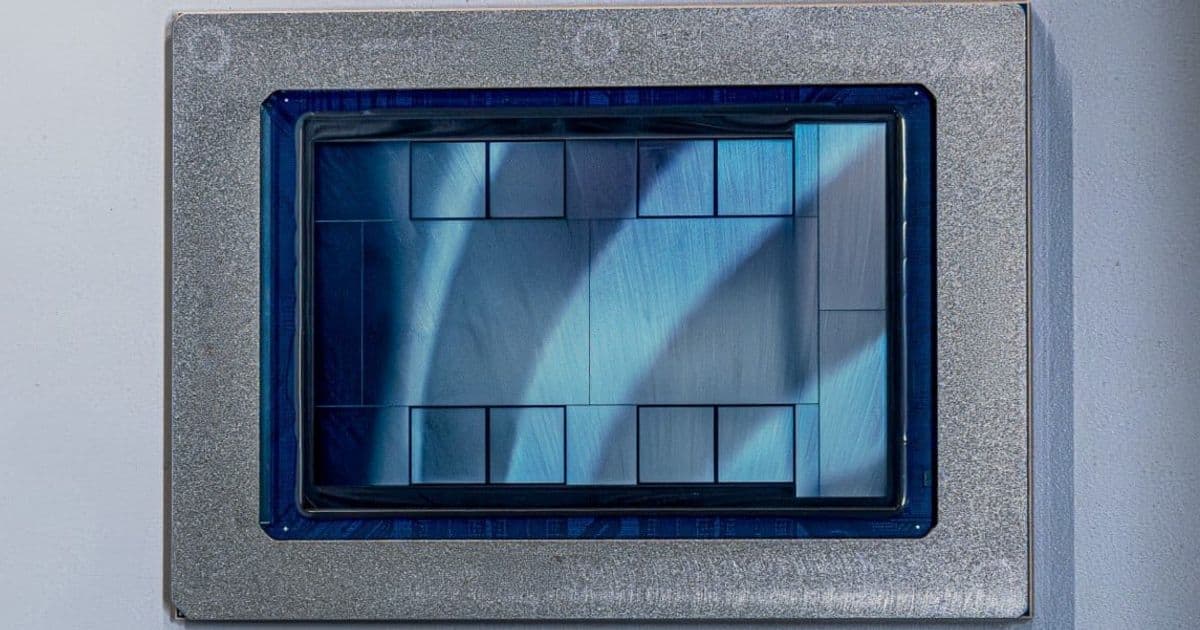

MTIA 300 is already in production and optimized for ranking and recommendation workloads. The chip features one compute chiplet, two network chiplets, and several HBM stacks, with each processing element containing a pair of RISC-V vector cores.

MTIA 400 represents an evolution that can support both generative AI models and recommendation workloads. Meta claims this is its first chip with "raw performance competitive with leading commercial products." The company is testing a rack configuration with 72 MTIA 400 devices connected via a switched backplane, forming a single scale-up domain.

MTIA 450 doubles the HBM bandwidth of the MTIA 400 and is scheduled for mass deployment in early 2027. Meta states its performance is "much higher than that of existing leading commercial products."

MTIA 500 increases HBM bandwidth by an additional 50 percent over the MTIA 450 and uses a 2x2 configuration of smaller compute chiplets. Mass deployment is planned for 2027.
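Chaining the two relative claims gives the MTIA 500's HBM bandwidth in terms of the MTIA 400's. This is only arithmetic on Meta's stated ratios, assuming both refer to the same per-chip bandwidth metric. The symbol $B$ (per-chip HBM bandwidth) is notation introduced here, and no absolute figures are implied:

```latex
B_{450} = 2\,B_{400}, \qquad
B_{500} = 1.5\,B_{450} = 1.5 \times 2\,B_{400} = 3\,B_{400}
```

On those assumptions, the MTIA 500 would offer roughly three times the HBM bandwidth of the MTIA 400.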

Meta's chip development team says it can now ship a new chip "roughly every six months" thanks to a reusable and modular design approach across chiplets, chassis, racks, and network infrastructure. The MTIA 400, 450, and 500 all utilize the same chassis, rack, and network infrastructure, enabling rapid iteration and deployment.

Broadcom Partnership and Deployment Scale

The chips were developed "in close partnership with Broadcom," and Meta plans to install "multiple gigawatts" of these custom chips in 2027 and beyond. This massive deployment scale suggests Meta is betting heavily on its custom silicon strategy to power its AI services, which include everything from content recommendation algorithms to large language model inference.

Content Moderation Challenges

While Meta has refined its chip development capabilities, the company faces significant criticism over its ability to moderate AI-generated content. Its Oversight Board recently called for new rules covering content posted during conflicts, a development that points to a gap between Meta's achievements in hardware and its content moderation capabilities.

The Oversight Board examined a faked video that purportedly depicted scenes from the 2025 conflict between Israel and Iran. Despite independent fact-checkers labeling the content as fake and six users reporting it to Meta, the company did not label the video as "High Risk AI." The Board expressed concern that Meta's mechanisms for flagging fake videos "are neither robust nor comprehensive enough to contend with the scale and velocity of AI-generated content, particularly during a crisis or conflict where there is heightened engagement on the platform."

The Board recommended that Meta ensure fact-checkers are adequately resourced and have clear guidance on prioritizing content from conflicts. It also said Meta's content-checking policies should have enabled more effective support for third-party fact-checkers during the crisis.

Policy Changes and Digital Services Tax Response

Meta's content moderation challenges come amid significant policy changes. Last year, the company announced the end of its third-party fact-checking program in the United States, replacing it with user-written Community Notes similar to the approach X (formerly Twitter) adopted under Elon Musk. This change led some observers to suggest Meta was attempting to win favor with the Trump administration, which had threatened action against nations imposing Digital Services Taxes on American companies.

In a move that appears designed to offset these taxes, at least in Europe, Meta announced it will charge advertisers a fee equivalent to the local digital tax. The company calls this extra charge a "location fee." For example, an advertiser delivering $100 worth of ads to Italy, where a three percent tax is in force, would receive a bill for $103: $100 for the ads and a $3 location fee.
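The Italy example can be reproduced as a short calculation. This is a minimal sketch, assuming the location fee is simply the local tax rate multiplied by ad spend and rounded to the cent. The function name `location_fee_invoice` is hypothetical, Meta has not published its billing logic, and the only rate used is the three percent Italian figure given above:

```python
from decimal import Decimal, ROUND_HALF_UP


def location_fee_invoice(ad_spend: Decimal, tax_rate: Decimal) -> dict:
    """Hypothetical sketch: split an ad bill into spend plus a location fee.

    Assumes fee = tax_rate * ad_spend, rounded half-up to cents.
    Meta's actual billing rules may differ.
    """
    fee = (ad_spend * tax_rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return {"ads": ad_spend, "location_fee": fee, "total": ad_spend + fee}


# Italy example from the article: $100 of ads, 3 percent digital tax.
bill = location_fee_invoice(Decimal("100.00"), Decimal("0.03"))
print(bill["ads"], bill["location_fee"], bill["total"])  # → 100.00 3.00 103.00
```

`Decimal` is used instead of `float` so that currency amounts are rounded exactly to the cent.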

Meta will apply these fees in six nations: Austria, France, Italy, Spain, Türkiye, and the UK. This approach effectively passes the tax burden to advertisers while maintaining Meta's revenue streams.

The Contrast Between Technical and Policy Achievements

The contrast between Meta's technical achievements in custom silicon and its struggles with content moderation presents an interesting paradox. By its own account, the company has built a chip development pipeline that can produce competitive hardware roughly every six months. Yet it appears to be struggling with the policy and operational challenges of managing AI-generated content at scale.

This dichotomy raises questions about Meta's priorities and resource allocation. While the company invests heavily in custom hardware to power its AI services, it has simultaneously reduced its investment in content moderation infrastructure, including ending third-party fact-checking in the United States.

As Meta continues to deploy its custom chips and expand its AI capabilities, the company will need to address these content moderation challenges. The scale of AI-generated content, particularly during crises and conflicts, requires robust systems for identification and labeling—systems that Meta's Oversight Board suggests are currently inadequate.

The coming years will test whether Meta can maintain its technical momentum in custom silicon while also developing the governance frameworks necessary to responsibly manage the AI-powered services these chips will enable.
