A new hybrid AI system combines vision-language models with formal planning software to generate effective long-term plans for visual tasks like robot navigation and multirobot assembly.

MIT researchers have developed a generative AI-driven approach for planning long-term visual tasks that is about twice as effective as existing techniques, potentially enabling robots to navigate changing environments or improve multirobot assembly teams.

Bridging Visual Understanding and Formal Planning

The system, called VLM-guided formal planning (VLMFP), addresses a fundamental challenge in robotics: how to plan complex sequences of actions based on visual inputs. Traditional planning software excels at generating step-by-step solutions but cannot process images, while vision-language models understand visual scenes but struggle with multi-step reasoning.

"Our framework combines the advantages of vision-language models, like their ability to understand images, with the strong planning capabilities of a formal solver," explains Yilun Hao, an aeronautics and astronautics graduate student at MIT and lead author of the research.

How the Two-Step System Works

VLMFP uses a two-stage approach in which two specialized vision-language models work in tandem:

First, a small model called SimVLM analyzes an image and describes the scenario using natural language. It then simulates a sequence of actions needed to reach a specified goal. This model is carefully trained to understand spatial relationships and goal-oriented behavior without memorizing specific patterns.

Second, a larger model called GenVLM takes SimVLM's description and generates initial files in Planning Domain Definition Language (PDDL), a standard language for describing planning problems. These files define the environment, valid actions, and domain rules, along with the specific initial states and goals for the task at hand.

Iterative Refinement for Accuracy

The system then feeds these PDDL files into classical planning software, which computes a detailed step-by-step plan. Crucially, GenVLM compares the solver's results with SimVLM's simulations and iteratively refines the PDDL files to improve accuracy.

"The generator and simulator work together to be able to reach the exact same result, which is an action simulation that achieves the goal," Hao notes.

Superior Performance on Complex Tasks

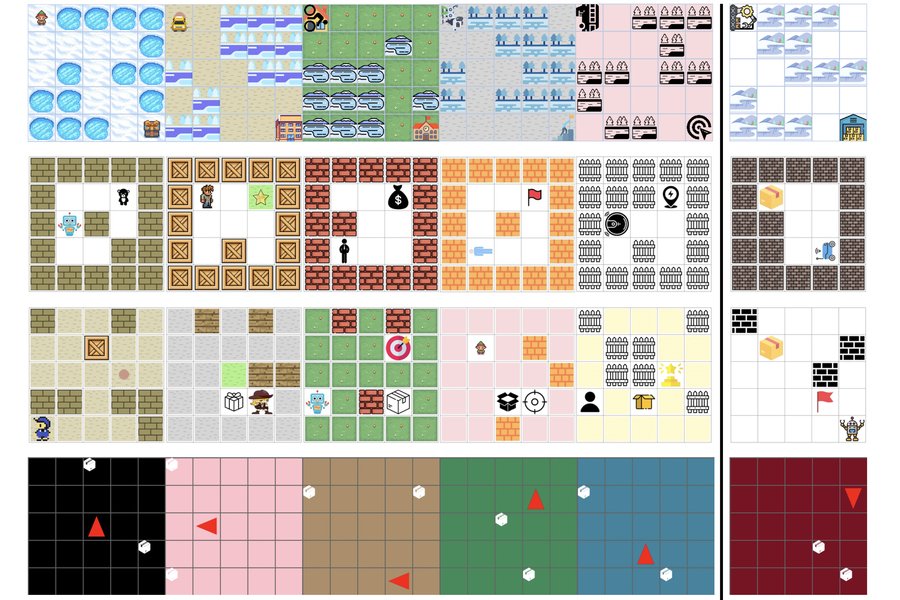

When tested on six 2D planning tasks and two 3D scenarios including multirobot collaboration and robotic assembly, VLMFP achieved an average success rate of about 70 percent. This significantly outperformed the best baseline methods, which reached only about 30 percent success.

More impressively, the system generated valid plans for more than 50 percent of completely new scenarios it hadn't encountered during training. This generalization capability is critical for real-world applications where conditions constantly change.

Key Innovation: Domain Generalization

A crucial advantage of VLMFP is its use of PDDL's domain-general approach. The system generates two separate PDDL files: a domain file that remains constant across all instances in an environment, and a problem file that defines specific initial states and goals.

"One advantage of PDDL is the domain file is the same for all instances in that environment. This makes our framework good at generalizing to unseen instances under the same domain," Hao explains.
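To make the domain/problem split concrete, here is a minimal sketch in Python that keeps one PDDL domain as a fixed string and renders a fresh problem file for each instance. The `grid-nav` domain, its predicates, and the `make_problem` helper are invented for illustration and are not taken from the paper; only the general PDDL structure (`:predicates`, `:action`, `:objects`, `:init`, `:goal`) is standard.

```python
from typing import List, Tuple

# Shared domain file: the same text is reused for every instance (toy example).
DOMAIN = """(define (domain grid-nav)
  (:requirements :strips)
  (:predicates (at ?c) (adjacent ?a ?b))
  (:action move
    :parameters (?from ?to)
    :precondition (and (at ?from) (adjacent ?from ?to))
    :effect (and (at ?to) (not (at ?from)))))"""


def make_problem(
    name: str,
    cells: List[str],
    edges: List[Tuple[str, str]],
    start: str,
    goal: str,
) -> str:
    """Render one problem file for the shared grid-nav domain."""
    adjacency = " ".join(f"(adjacent {a} {b})" for a, b in edges)
    return (
        f"(define (problem {name})\n"
        f"  (:domain grid-nav)\n"
        f"  (:objects {' '.join(cells)})\n"
        f"  (:init (at {start}) {adjacency})\n"
        f"  (:goal (at {goal})))"
    )


# Two different instances, one unchanged domain.
problem_a = make_problem("p1", ["c1", "c2"], [("c1", "c2")], "c1", "c2")
problem_b = make_problem(
    "p2", ["c1", "c2", "c3"], [("c1", "c2"), ("c2", "c3")], "c1", "c3"
)
```

Only the problem text changes between `problem_a` and `problem_b`; a system that has already produced a correct domain file needs to generate just the smaller problem file for each new scene.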

Real-World Applications

The research team envisions several practical applications for this technology:

- Robot navigation in dynamic environments where obstacles and conditions change frequently

- Multirobot assembly teams that must coordinate complex manufacturing tasks

- Autonomous systems that need to adapt to new situations without extensive retraining

- Visual planning problems in logistics and warehouse automation

Future Directions

While the current system shows strong performance, the researchers identify several areas for improvement. They aim to handle more complex scenarios and develop methods to identify and mitigate hallucinations by the vision-language models.

"In the long term, generative AI models could act as agents and make use of the right tools to solve much more complicated problems," says Chuchu Fan, associate professor in aeronautics and astronautics and principal investigator at the Laboratory for Information and Decision Systems. "But what does it mean to have the right tools, and how do we incorporate those tools? There is still a long way to go."

The research was funded in part by the MIT-IBM Watson AI Lab and will be presented at the International Conference on Learning Representations.

The work represents a significant step toward integrating visual understanding with formal planning capabilities, potentially enabling more capable and adaptable robotic systems for complex real-world tasks.

In addition to Hao, the research team includes Yongchao Chen, a graduate student in the MIT Laboratory for Information and Decision Systems; Chuchu Fan; and Yang Zhang, a research scientist at the MIT-IBM Watson AI Lab. The paper, titled "Simulation to Rules: A Dual-VLM Framework for Formal Visual Planning," is available as open access.
