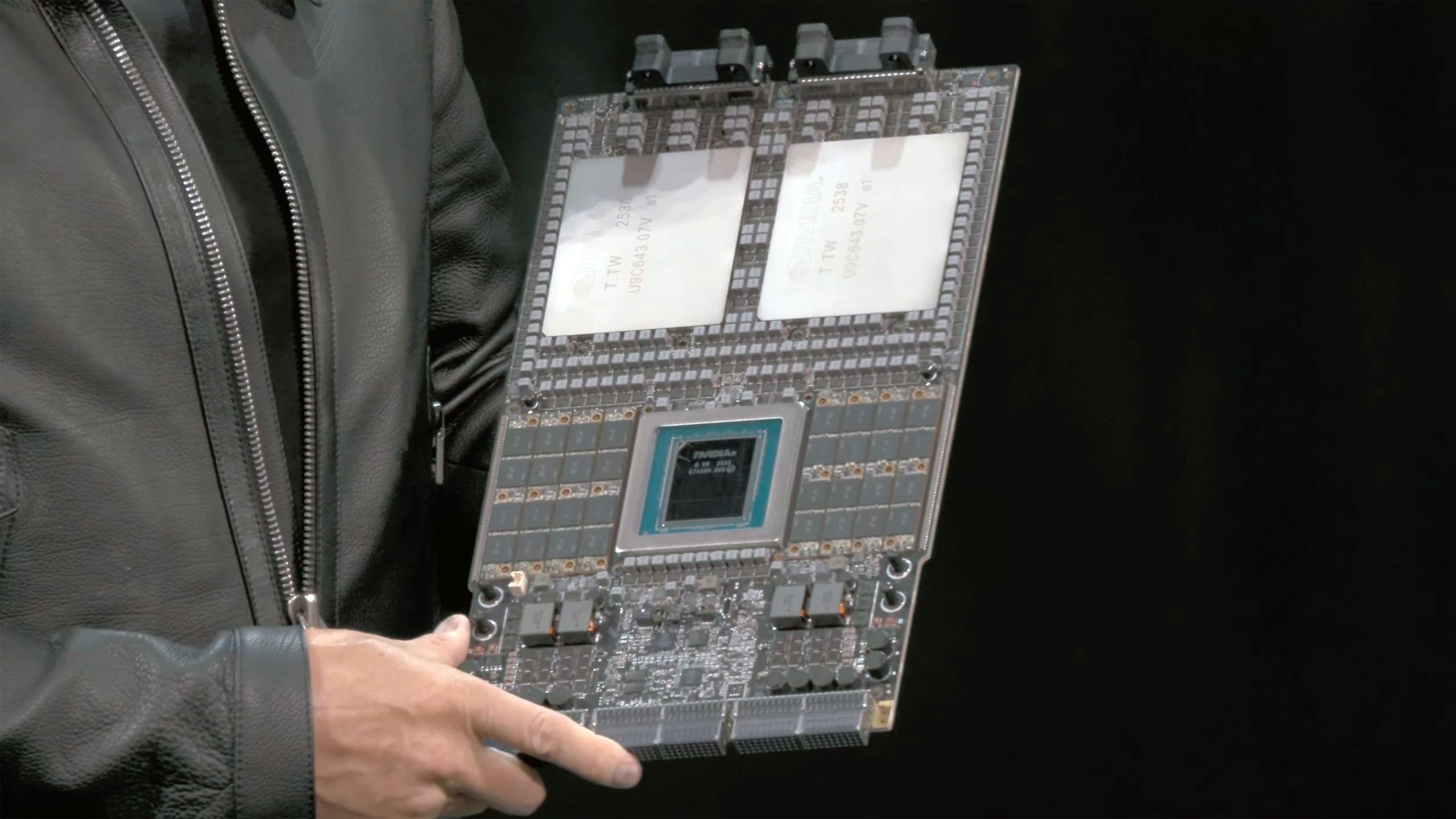

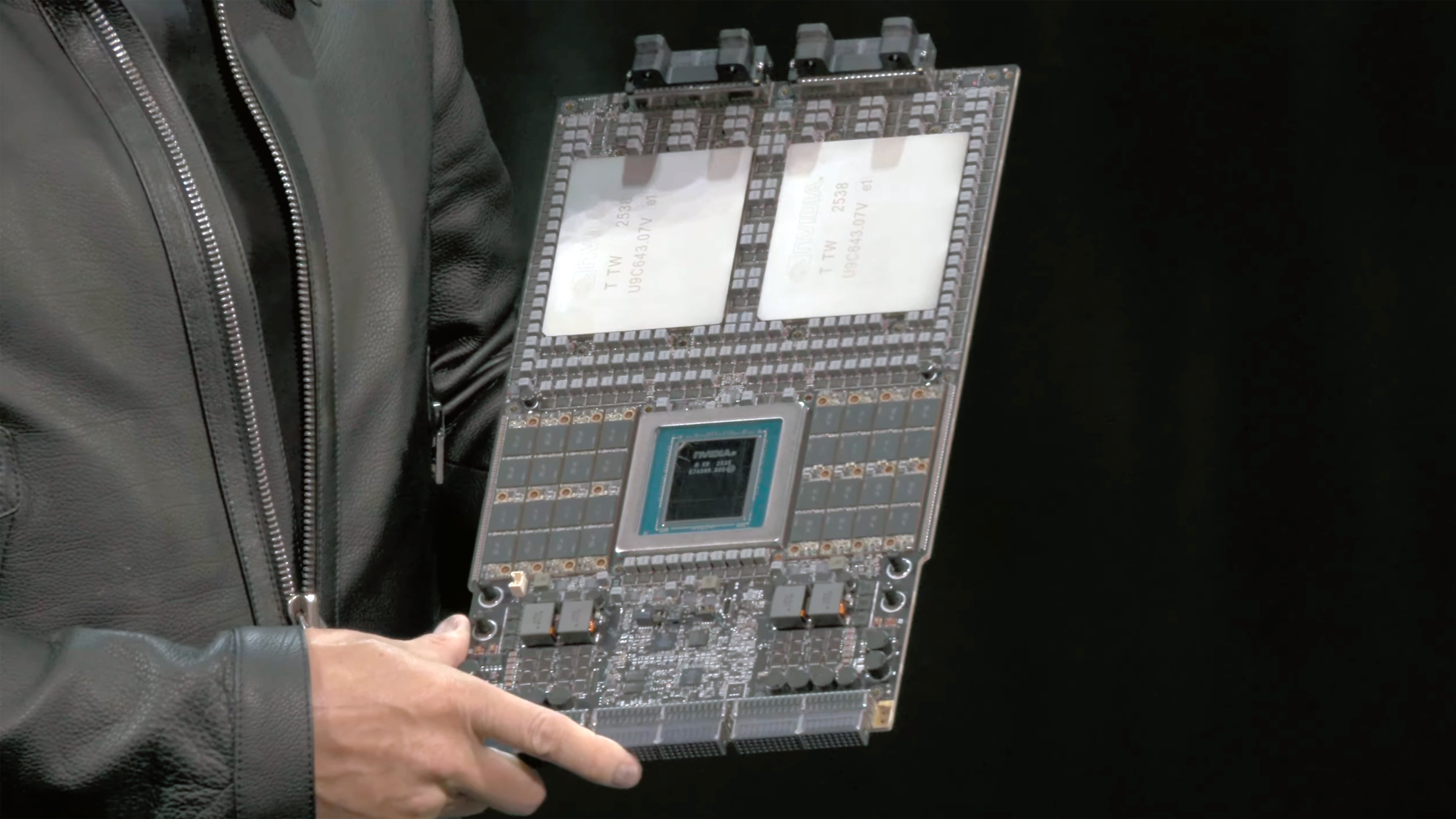

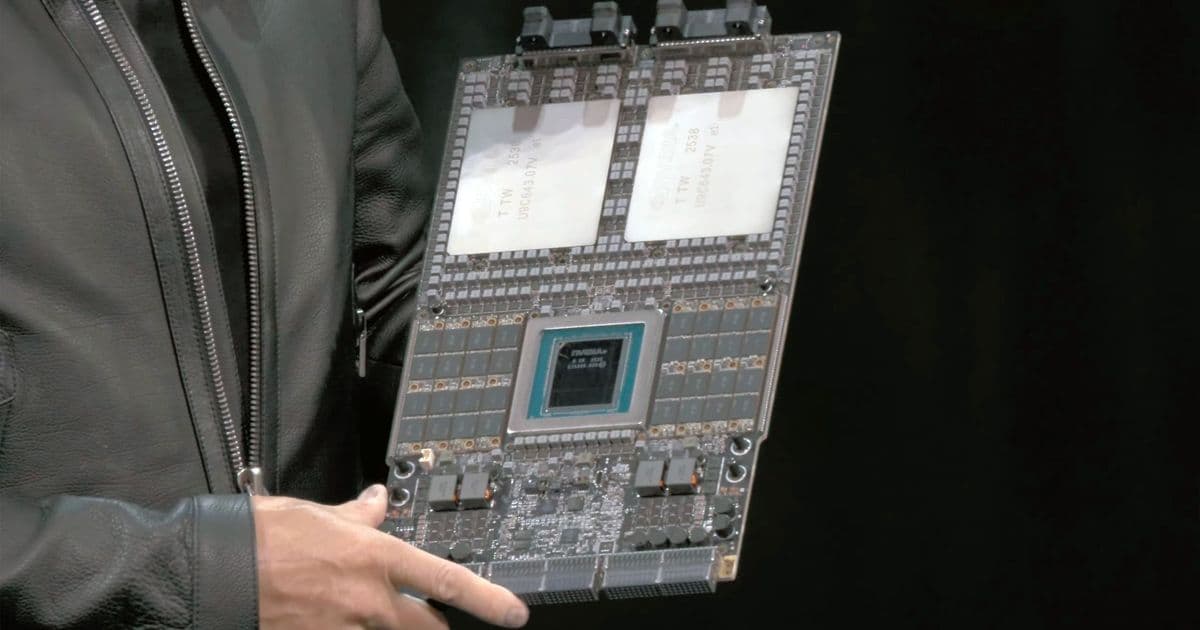

Nvidia has begun shipping Vera Rubin platform samples to select customers, featuring an 88-core Vera CPU paired with Rubin GPUs with 288 GB of HBM4 memory each, targeting 2026-2027 deployment.

Nvidia has begun shipping samples of its next-generation Vera Rubin platform to select customers, marking a significant milestone in the company's AI data center roadmap. The announcement came during the company's earnings call on Wednesday, with CFO Colette Kress confirming that the first samples were delivered earlier this week.

Platform Architecture and Specifications

The Vera Rubin platform is Nvidia's next-generation AI data center system. It is built around the 88-core Vera CPU, which will be paired with Rubin GPUs carrying 288 GB of HBM4 memory each. That capacity matches the 288 GB of HBM3E on Blackwell Ultra. The generational step is the move to HBM4, which is expected to bring higher memory bandwidth for large-scale AI model training and inference.

Beyond the CPU-GPU combination, the platform includes several other critical components:

- Rubin CPX GPU with 128 GB of GDDR7 memory

- NVLink 6.0 switch ASIC for scale-up rack-scale connectivity

- BlueField-4 DPU with integrated SSD for key-value cache storage

- Spectrum-6 Photonics Ethernet and Quantum-CX9 1.6 Tb/s Photonics InfiniBand NICs

- Spectrum-X Photonics Ethernet and Quantum-CX9 Photonics InfiniBand switching silicon for scale-out connectivity

Manufacturing and Deployment Timeline

With samples now in customers' hands, Nvidia appears to have finalized the performance and power specifications for the Vera Rubin platform. The company expects production shipments to begin in the second half of 2026, with deployments likely in late 2026 or early 2027.

Partner Preparation and Integration

Nvidia's partners are already preparing for the platform's arrival by developing software and hardware stacks compatible with Vera Rubin. The company is providing different components to various partners, with some receiving complete NVL72 VR200 racks that integrate all platform components.

Hardware partners including Foxconn, Quanta, Supermicro, and Wistron are receiving actual silicon samples. According to market rumors, Nvidia plans to ship fully assembled Level-10 (L10) VR200 compute trays to its original design manufacturers (ODMs). These trays will include the Vera CPU, Rubin GPUs, cooling systems, and interfaces pre-installed, significantly reducing the design and integration work required by ODMs.

Technical Advantages and Market Impact

Kress highlighted several technical advantages of the Vera Rubin platform during the earnings call. The modular cable-free tray design is expected to deliver improved resiliency and serviceability compared to the current Blackwell architecture. This design philosophy could translate to reduced maintenance costs and improved uptime for data center operators.

"We expect every cloud model builder to deploy Vera Rubin," Kress stated, indicating Nvidia's confidence in the platform's market adoption. This broad deployment expectation suggests that the company sees Vera Rubin as a critical component in meeting the escalating computational demands of AI workloads across the industry.

Industry Context and Competition

The timing of Vera Rubin's sampling phase matters because it helps Nvidia defend its lead in the AI accelerator market. The 288 GB of HBM4 per GPU and the 88-core Vera CPU target the most demanding AI workloads, including training of massive language models and complex scientific simulations.

With HBM4 memory, NVLink 6.0 scale-up interconnect, and photonics-based Ethernet and InfiniBand networking, Vera Rubin is designed for the increasingly large, data-intensive workloads that define modern AI applications.

The sampling phase also gives Nvidia feedback from early adopters, leaving room for optimizations before volume production begins. Sampling to key customers well ahead of volume shipments has long been part of Nvidia's data center product cadence.

As the industry waits for Vera Rubin's commercial availability, the platform's specifications are likely to shape AI data center design for the next several years, setting the bar for performance, scalability, and efficiency.