Microsoft and OpenAI have terminated their exclusive cloud arrangement, allowing OpenAI to deploy ChatGPT on multiple cloud platforms while Microsoft adjusts its Copilot revenue model, reflecting the financial challenges facing both AI giants.

Microsoft and OpenAI have announced the end of their exclusive cloud partnership, a move that reshapes the artificial intelligence infrastructure landscape. Ending Azure's exclusive hold on ChatGPT hosting marks a significant shift in how major AI models will be deployed and monetized.

The agreement, which previously required OpenAI to exclusively use Microsoft Azure servers for ChatGPT, has been renegotiated to allow the AI company to pursue partnerships with other cloud service providers. In return, Microsoft will no longer pay OpenAI a share of revenue generated from its Copilot products, according to statements from both companies.

This restructuring addresses several pressing issues for both organizations. For Microsoft, it potentially resolves tensions arising from OpenAI's recent $50 billion investment deal with Amazon, which had reportedly upset Microsoft's legal department. For OpenAI, the change provides greater flexibility in managing its growing computational demands and infrastructure costs.

Financial Pressures Drive Strategic Shift

The renegotiation comes amid significant financial challenges for both companies in the AI sector. Microsoft's Copilot service, integrated across its product suite including GitHub and Microsoft 365, has seen costs more than double since January 2025. In response, Microsoft is transitioning from per-request billing to token-based pricing, which will increase costs for users who generate more verbose responses or require more complex data analysis.
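To illustrate why a switch from per-request to token-based billing hits verbose users hardest, here is a minimal sketch comparing the two models. Both prices are hypothetical placeholders, not Microsoft's actual Copilot rates; only the shape of the comparison matters.

```python
# Hypothetical prices for illustration only; these are NOT Microsoft's real rates.
PRICE_PER_REQUEST = 0.04      # dollars per request under flat billing (assumed)
PRICE_PER_1K_TOKENS = 0.01    # dollars per 1,000 tokens under token billing (assumed)


def per_request_cost(num_requests: int) -> float:
    """Flat billing: every request costs the same, however long it is."""
    return num_requests * PRICE_PER_REQUEST


def token_cost(token_counts: list[int]) -> float:
    """Token billing: each request is charged for the tokens it consumes."""
    return sum(token_counts) / 1000 * PRICE_PER_1K_TOKENS


# Two hypothetical users, each sending 100 requests.
terse = [500] * 100       # short prompts and answers
verbose = [8_000] * 100   # long answers or large data-analysis jobs

print(per_request_cost(100), token_cost(terse), token_cost(verbose))
```

Under these assumed prices, the terse user pays less after the switch while the verbose user pays twice as much, which is the distributional effect the paragraph describes.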

The company has also announced price increases for Microsoft 365 tiers with Copilot integration, adding several dollars per month to most subscription plans. Internal documents suggest Microsoft will further implement rate limits and model tiering to control costs and increase revenue from individual AI users.
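Rate limits of the kind mentioned here are commonly built on a token bucket, where each user's budget refills at a fixed rate. The sketch below is a generic illustration of that technique with per-tier budgets; the tier names and tokens-per-minute limits are hypothetical and say nothing about how Microsoft actually enforces its limits.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical tiers and tokens-per-minute budgets; illustrative assumptions only.
TIER_LIMITS = {"basic": 10_000, "premium": 100_000}


@dataclass
class TokenBucket:
    capacity: float          # maximum tokens the bucket can hold
    refill_per_sec: float    # tokens restored per second
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.last = time.monotonic()

    def try_consume(self, amount: float, now: Optional[float] = None) -> bool:
        """Refill for the elapsed time, then spend `amount` if enough is left."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= amount:
            self.tokens -= amount
            return True
        return False


def bucket_for_tier(tier: str) -> TokenBucket:
    """Build a bucket whose full refill takes one minute for the given tier."""
    per_minute = TIER_LIMITS[tier]
    return TokenBucket(capacity=per_minute, refill_per_sec=per_minute / 60)
```

A request that exceeds the remaining budget is rejected until enough time passes, so heavier tiers simply get larger buckets and faster refills.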

OpenAI faces even more severe financial constraints. The company was projected to exhaust its funds by the end of 2027 despite receiving tens of billions of dollars in investment. Its annualized revenue run rate stands at approximately $24 billion, far below the hundreds of billions needed to meet its profitability targets.

Recent financial projections indicate OpenAI will miss key revenue targets in 2026, and its own financial officer has expressed concern about the company's ability to fund its promised infrastructure spending. As a result, OpenAI has scaled back its planned compute spending from $1.4 trillion to $600 billion by 2030, while still aiming to reach $280 billion in annual revenue by the same date.
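The gap between these figures is easier to see with a little arithmetic. The sketch below computes the size of the compute cut and the constant annual growth rate needed to go from a roughly $24 billion run rate to $280 billion. The article does not state the base year of the $24 billion figure, so the horizon is an assumption and two values are shown.

```python
def pct_reduction(old: float, new: float) -> float:
    """Percentage cut going from `old` to `new`."""
    return (old - new) / old * 100


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Figures from the article, in billions of US dollars.
print(f"compute plan cut: {pct_reduction(1_400, 600):.1f}%")

# Assumed horizons, since the base year of the run rate is not stated.
for years in (4, 5):
    print(f"{years} years: {implied_cagr(24, 280, years):.1%} growth per year")
```

The cut works out to about 57%, while the revenue target implies growth of roughly 85% a year over four years, or roughly 63% a year over five.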

Technical Implications and Infrastructure Realities

The end of Azure exclusivity has significant technical implications for AI model deployment and infrastructure management. ChatGPT and other OpenAI models will now be distributed across multiple cloud platforms, requiring sophisticated load balancing, data synchronization, and performance optimization across different cloud environments.
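One simple form of cross-cloud load balancing is weighted routing with health-based failover. The sketch below is a generic illustration of that idea, not a description of OpenAI's actual setup; the backend names and traffic weights are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class Backend:
    name: str              # hypothetical provider label
    weight: float          # relative share of traffic this backend should receive
    healthy: bool = True   # set to False when health checks fail


def pick_backend(backends: list[Backend], rng: random.Random) -> Backend:
    """Weighted random choice among healthy backends, skipping failed ones."""
    live = [b for b in backends if b.healthy and b.weight > 0]
    if not live:
        raise RuntimeError("no healthy backends available")
    return rng.choices(live, weights=[b.weight for b in live], k=1)[0]


# Hypothetical split: most traffic on Azure, the rest on a second provider.
pool = [Backend("azure", 0.7), Backend("cloud-b", 0.3)]
rng = random.Random(42)
print(pick_backend(pool, rng).name)
```

When a backend is marked unhealthy, its share flows to the remaining providers automatically; real deployments would add latency tracking, data-residency rules, and state synchronization on top of this.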

From a chip architecture perspective, this diversification could impact GPU allocation and utilization patterns. OpenAI's recent chip manufacturing ambitions, announced in February, suggest the company is seeking greater control over its hardware stack. However, the immediate need for multi-cloud deployment means OpenAI must maintain compatibility with different cloud providers' hardware configurations.

The compute requirements remain substantial. Even with reduced ambitions, OpenAI projects $600 billion in infrastructure spending by 2030. Based on current power consumption and performance metrics, this translates to roughly 1.6 million NVIDIA H100 GPUs running continuously. The actual count will depend on the efficiency of future chip architectures and on how quickly more specialized AI accelerators mature.
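As a rough consistency check on the $600 billion and 1.6 million GPU figures, the arithmetic below divides the spend by the GPU count and estimates the GPUs' own power draw. The 700 W value is an assumption (the maximum TDP of the H100 SXM module; PCIe cards draw less), and the sketch ignores cooling, host CPUs, networking, and any mix of newer accelerators.

```python
# Inputs taken from the article.
SPEND_USD = 600e9      # projected infrastructure spending by 2030
GPU_COUNT = 1.6e6      # H100 count the article associates with that spend

# Assumption: 700 W, the top TDP of the H100 SXM module.
H100_TDP_WATTS = 700

spend_per_gpu = SPEND_USD / GPU_COUNT              # dollars of budget per GPU
gpu_power_gw = GPU_COUNT * H100_TDP_WATTS / 1e9    # GPU-only draw in gigawatts

print(f"budget per GPU: ${spend_per_gpu:,.0f}")
print(f"GPU power at full load: {gpu_power_gw:.2f} GW")
```

The result, about $375,000 of budget per GPU and roughly 1.12 GW for the GPUs alone, suggests the $600 billion figure covers far more than the chips, presumably including facilities, networking, and energy.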

Market Implications and Competitive Landscape

This deal restructuring significantly alters the competitive dynamics in the cloud AI market. OpenAI's ability to partner with multiple cloud providers weakens Microsoft's position in the enterprise AI space while strengthening competitors like Amazon Web Services and Google Cloud Platform.

The move also reflects broader industry trends toward more flexible AI deployment models. As enterprises seek to avoid vendor lock-in and optimize costs for AI workloads, multi-cloud strategies are becoming increasingly attractive. This shift benefits cloud providers that can offer competitive pricing, specialized AI hardware, and robust developer ecosystems.

For the semiconductor industry, this development reinforces the importance of AI-optimized hardware. Companies like NVIDIA, AMD, and Intel, along with specialized AI chip startups, will need to demonstrate clear performance advantages and cost efficiencies to capture market share in this evolving landscape.

OpenAI's pursuit of additional funding, including a potential $60 billion investment from Nvidia and SoftBank, could further accelerate demand for advanced AI chips. At a projected valuation of $730 billion, the company would rank among the most valuable AI enterprises, giving it considerable weight in shaping hardware procurement.

Path Forward

Both companies maintain that the renegotiation strengthens their partnership while allowing for more strategic flexibility. Microsoft continues to be OpenAI's preferred cloud partner for most workloads, while OpenAI benefits from the ability to diversify its infrastructure and reduce dependency on a single provider.

The AGI (artificial general intelligence) clause, over which both companies were keen to retain control, remains intact under the new agreement. This suggests that despite the commercial restructuring, both organizations still view their collaboration as essential for advancing toward more sophisticated AI systems.

For the broader industry, this development signals a maturation of the AI market. Initial hype and massive investments are giving way to more pragmatic business models focused on sustainable revenue generation and cost management. The coming years will likely see continued consolidation in the AI space, with only companies demonstrating clear profitability paths surviving the transition from experimental technology to commercial product.

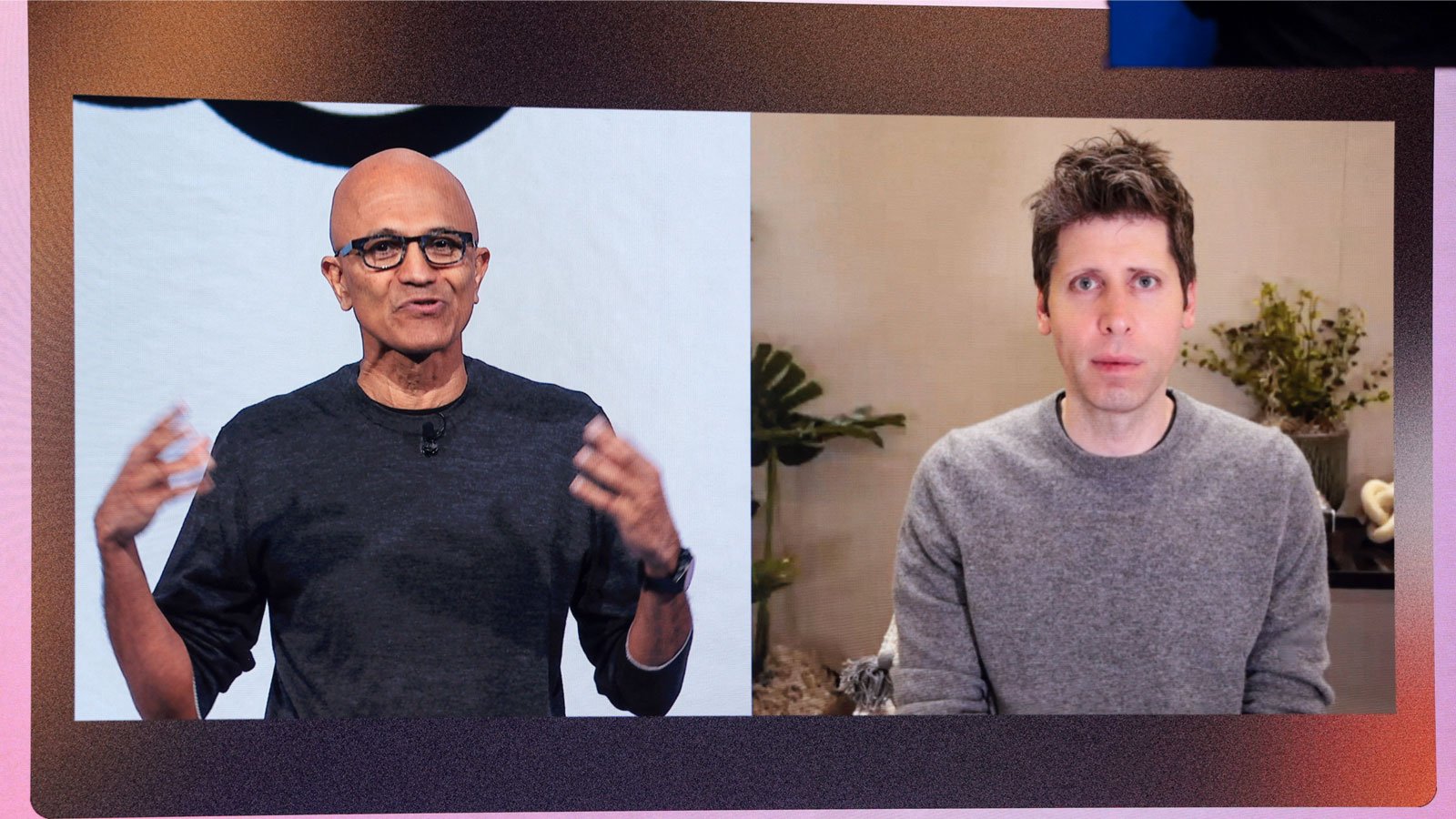

As Microsoft CEO Satya Nadella noted at the World Economic Forum, AI companies must find clear, practical uses for their technology or risk losing 'social permission' to continue their work. The end of the Azure exclusivity deal may be both companies' acknowledgment of this reality, as they pivot toward more sustainable business models in the competitive AI landscape.
